Intel’s Dual-Core Xeon First Look

by Jason Clark & Ross Whitehead on December 16, 2005 12:05 AM EST - Posted in IT Computing

The future is performance per watt.

Given the way the energy markets have gone during the past year, it was fairly obvious that there was going to be a focus on power and performance per watt. Some may say that power is irrelevant and performance is key. While performance is important, performance per watt is more important. Both Intel and AMD are focusing on ways of delivering more performance with less power; it is the future. We're facing rising energy prices every day, and those costs trickle down to everyone, whether you are drying your clothes or running a few racks of servers at a datacenter.

Recently, we spoke to a bandwidth provider in one of the largest datacenters on the US east coast. The datacenter this provider uses for its services is out of power: they can't add any more racks because the facility doesn't have enough power. We're not talking about a small datacenter either; this is a very large facility that serves some of the world's largest websites. They can't get any more power because government regulations won't allow it. So, now what? They have to find ways to reduce power consumption.

Space is also a concern at most datacenters, so blade systems are becoming very popular there. IDC recently forecast that blade systems would reach 8% of the server market this year, up from 2% a year ago. Blade systems may solve the space problem, but they add to the power problem, since you end up with a more power-dense environment.

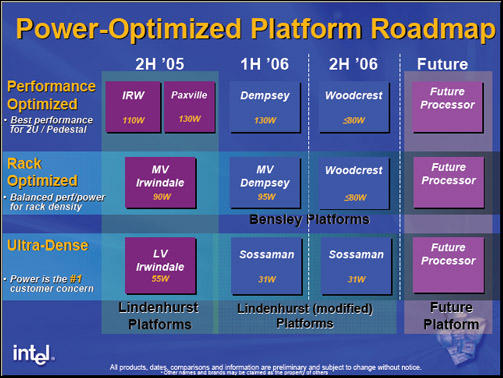

Intel's performance-per-watt play won't come into full effect until next year, with Woodcrest. Rough numbers put Woodcrest somewhere in the 80 W range or less. Over the past few years the focus has been all about performance, and people seem to have overlooked where AMD stands in terms of performance per watt. Since its inception, the Opteron has been competitive with Intel not only on performance but also on delivering that performance in a lower power envelope. The Opteron 280 is already a 95 W part, and it has been very competitive with Intel's Xeon.

Intel Power/Performance Roadmap

67 Comments

Viditor - Friday, December 16, 2005

1. Double the number (probably more, but for the sake of argument make it double) for the difference in air conditioning.

2. The PSU runs at about 75-80% efficiency on average, so increased power demand also increases the loss from PSU inefficiency.

I can't say that the AT number is right, but I can't say it's wrong either...
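The two adjustments above can be turned into a rough cost formula. This is a minimal, hypothetical sketch, not AnandTech's method: it assumes the base figure covers only the power the servers themselves draw, treats cooling as a multiple of that draw (1.0 meaning "cooling matches consumption"), and models PSU losses either as a flat markup or by dividing by an assumed efficiency. The $8,140 input is the cost difference quoted in this thread; the function names and defaults are illustrative.

```python
def cost_with_markup(server_cost, cooling_ratio=1.0, psu_markup=0.20):
    """Add cooling, then a flat markup for PSU losses (e.g. a "20% increase")."""
    return server_cost * (1.0 + cooling_ratio) * (1.0 + psu_markup)


def cost_with_efficiency(server_cost, cooling_ratio=1.0, psu_efficiency=0.80):
    """Add cooling, then scale by 1/efficiency to cover PSU losses."""
    return server_cost * (1.0 + cooling_ratio) / psu_efficiency


if __name__ == "__main__":
    base = 8140  # dollars; cost difference quoted in the thread (assumed server-only)
    print(f"flat 20% markup:   ${cost_with_markup(base):,.0f}")
    print(f"80% efficient PSU: ${cost_with_efficiency(base):,.0f}")
    print(f"75% efficient PSU: ${cost_with_efficiency(base, psu_efficiency=0.75):,.0f}")
```

Under these assumptions the flat markup gives $19,536, while dividing by 0.80 gives $20,350: a PSU that is 80% efficient needs 25% more input power, not 20%, so the flat markup slightly understates the loss.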

Furen - Friday, December 16, 2005

Like I said, cooling normally matches the system's power consumption, so the difference is actually 2x $8,140. To this you apply a 20% increase due to PSU inefficiency and you get $19,536. This is why everyone out there is complaining about power consumption, and this is why performance per watt is the wave of the future for data centers (this is what we all said circa 2001... when Transmeta was big). I think Intel will have a slight advantage in this regard when it releases its Sossaman (or whatever the hell that's called) unless AMD can push its Opteron a bit more (a 40W version would suffice, considering that it has many benefits over the somewhat-crippled Sossaman).

Viditor - Friday, December 16, 2005

Sossaman being only 32-bit will be a fairly big disadvantage, but it might do well in some blade environments...

The HE line of dual-core Opterons has a TDP of 55 W, which means that their actual power draw is substantially less. 40 W? ... I don't know. If they are able to implement the new strained silicon process when they go to 65nm, then probably at least that low...

Furen - Friday, December 16, 2005

Yes, that's what I meant by "...considering it has many benefits over the somewhat-crippled Sossaman"... that and the significantly inferior floating-point performance. I've never worked with an Opteron HE, so I can't say what their power consumption is like. The problem is not just the hardware itself, though, but also the fact that AMD does not PUSH the damn chip. It's hard to find a non-blade system with HEs, so AMD probably needs to drop the price a bit on these to get people to adopt them in regular rack servers.

Viditor - Friday, December 16, 2005

Me neither... and judging it by the TDP isn't really a good idea either (but it does give a ceiling). Another point we should probably look at is the FB-DIMMs... I've noticed that they get hot enough to require active cooling (at least on the systems I've seen). I know that Opteron is supposedly going to FB-DIMMs with the Socket F platforms (though that's unconfirmed so far). This brings up 2 important questions...

1. How well do the Dempseys do using standard DIMMs?

2. How much of the Dempsey test system's power draw is for the FB-DIMMs?

Heinz - Saturday, December 17, 2005

Go to:

http://www.amdcompare.com/techoutlook/

There, FB-DIMM is listed for 2007 (Platform overview).

Thus, either the whole Socket F platform is pushed to 2007, or Socket F simply uses DDR2 or maybe both :)

But I guess it will be DDR2. Historically, AMD has used older, proven RAM technologies at higher speeds, like PC133 SDRAM and DDR400... and now it is DDR2-667/800.

Just wondering: will AMD then introduce yet another "Socket G" as early as 2007?

byebye

Heinz

Furen - Friday, December 16, 2005

Dempseys should perform as badly as current Paxvilles if they use normal DDR2. This is because I seriously doubt anyone (basically, Intel) is going to make a quad-channel DDR2 controller, since it requires lots and lots of traces and there's better technology out there (FB IS better, whatever everyone says; it's just having its introduction quirks). Remember that Netbursts are insanely bandwidth-starved, so having quad-channel DDR2 (which is basically enough to saturate the two 1066MHz front side buses) is extremely useful in a two-way system. Once you get to 4-way, however, the bandwidth starvation comes back.

Intel is being smart by sending out preview systems with the optimal configuration, and I'm somewhat disappointed that reviewers don't point out that this IS the best the chips can get. Normally people don't even mention that the quad-channel DDR2 is what gives these systems their performance benefits, but let it be assumed that it's the 65nm chips that perform better than the 90nm parts (for some miraculous reason) under the same conditions. That's why I'm curious about how these perform on a 667MHz FSB. Having quad-channel DDR2-533 is certainly not useful at all with such a low FSB, I think. Remember how Intel didn't send Paxvilles out to reviewers? I'd guess that the 667FSB Dempseys will perform even worse than those.
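The "saturate" claim above can be checked with peak-rate arithmetic: transfers per second times bytes per transfer. This minimal sketch assumes an 8-byte (64-bit) data path for each FSB and each DDR2 channel and uses nominal transfer rates; the results are theoretical peaks, not measured throughput, and `peak_mb_s` is a hypothetical helper.

```python
BUS_BYTES = 8  # assumption: 64-bit data path per FSB and per DDR2 channel


def peak_mb_s(mega_transfers, links=1):
    """Theoretical peak bandwidth in MB/s for `links` buses at the given MT/s."""
    return mega_transfers * BUS_BYTES * links


quad_ddr2_533 = peak_mb_s(533, links=4)   # four DDR2-533 channels
dual_fsb_1066 = peak_mb_s(1066, links=2)  # two independent 1066 MT/s FSBs

print(f"quad-channel DDR2-533: {quad_ddr2_533:,} MB/s")
print(f"dual FSB1066:          {dual_fsb_1066:,} MB/s")
print("memory can saturate both FSBs:", quad_ddr2_533 >= dual_fsb_1066)
```

With nominal rates both sides come out at 17,056 MB/s (about 17 GB/s). The actual rates are 533.33 and 1066.67 MT/s, still exactly a 1:2 ratio, so four channels remain a match for two FSB1066 links.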

IntelUser2000 - Friday, December 16, 2005

What do you mean?? Bensley uses 4-channel FB-DIMM, if you didn't know. And people who research this stuff and have looked into the technical details say Intel's memory controller design rivals the best, if it isn't better.

Also, Bensley uses DDR2-533, while Lindenhurst uses DDR2-400. We all know that DDR2-533 is faster than DDR2-400.

Yes, but that's certainly better than dual-channel DDR2-400 with an 800 FSB, since Bensley will have two FSBs anyway.

Paxville and Dempsey have the same amount of cache; the only difference is that Dempsey is clocked higher.

Furen - Friday, December 16, 2005

If you read what you quoted again, I said that Dempseys should perform the same as current Paxvilles if they use NORMAL DDR2 (as in not FB-DIMMs). I know there are 4 FB channels on Bensley, but Viditor said that he wondered how Dempseys would perform on regular DDR2. Yes, Intel's memory controllers are among the best (nVidia's DDR2 memory controller is slightly better, I think), but I said that I don't believe they will make a quad-channel DDR2 northbridge, since the number of traces coming out of a single chip would be insane. You're correct about Lindenhurst sucking.

Consider having two FB533 channels: basically, the two CPUs (4 cores) will share the equivalent of an FSB1066 in memory bandwidth. Having an insanely wide FSB doesn't help if you don't have any use for it, and memory bandwidth limitations always hurt Netbursts significantly. The same could be said for having 4 FB channels and running at dual FSB667. I don't even want to think about Intel's quad-core stuff, though the P6 architecture is much less reliant on memory bandwidth than Netburst.
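The argument here is that usable bandwidth is capped by whichever side is narrower, memory or FSB. A rough sketch of that bottleneck, assuming an 8-byte data path per memory channel and per FSB, nominal transfer rates, and treating each FB-DIMM channel as a plain DDR2-533 channel (this ignores FB-DIMM's separate write path and its latency). The platform list mirrors configurations mentioned in this thread; `Platform` and `usable_mb_s` are hypothetical names.

```python
from typing import NamedTuple

LINK_BYTES = 8  # assumption: 64-bit-equivalent data path per channel or FSB


class Platform(NamedTuple):
    name: str
    mem_mts: int       # memory transfer rate per channel (MT/s)
    mem_channels: int  # number of memory channels
    fsb_mts: int       # FSB transfer rate (MT/s)
    fsbs: int          # number of independent front side buses


def usable_mb_s(p):
    """Return (usable, memory, fsb) peak MB/s; usable is the narrower side."""
    memory = p.mem_mts * LINK_BYTES * p.mem_channels
    fsb = p.fsb_mts * LINK_BYTES * p.fsbs
    return min(memory, fsb), memory, fsb


PLATFORMS = [
    Platform("dual-channel DDR2-400, shared FSB800", 400, 2, 800, 1),
    Platform("2x FB-DIMM 533, dual FSB1066", 533, 2, 1066, 2),
    Platform("4x FB-DIMM 533, dual FSB1066", 533, 4, 1066, 2),
    Platform("4x FB-DIMM 533, dual FSB667", 533, 4, 667, 2),
]

for p in PLATFORMS:
    usable, memory, fsb = usable_mb_s(p)
    limit = "memory" if memory < fsb else "FSB" if fsb < memory else "balanced"
    print(f"{p.name:38s} usable {usable:6d} MB/s (limited by {limit})")
```

Under these assumptions, two FB-DIMM channels deliver 8,528 MB/s, the same as a single FSB1066, so the second bus adds nothing for memory traffic; with four channels on dual FSB667 the buses become the cap at 10,672 MB/s.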

Viditor - Friday, December 16, 2005

Some good points, Furen... it seems to me that with Dempsey on Bensley, Intel will finally be competitive in the dual dual market. I agree that the quad duals will still be a problem for them... I think that in the dual dual sector, though, while I doubt Intel will regain any market share, they may at least slow down the bleeding.