ASUS P5N-E SLI: NVIDIA's 650i enters with a Bang

by Gary Key on December 22, 2006 5:00 AM EST

Posted in: Motherboards

NVIDIA introduced the 680i chipset back in early November to eager anticipation from users looking to try something different within the Intel chipset world. A lot of hype, fanfare, and media coverage surrounded the launch of the NVIDIA 600i family of chipsets. We reviewed the 680i chipset in-depth at launch and came away very impressed with its capabilities for the upper-end enthusiast. Since the launch, most of the focus surrounding the 680i chipset has shifted from its impressive performance and flexibility to issues that seemed to plague the reference board designs from launch partners such as EVGA and BFG. These issues involved audio problems when using SLI and data corruption or performance loss when utilizing SATA drives on the reference boards. The audio issues were solved with a quick BIOS update, although we found in our testing that loading the Microsoft DX9 October update before the audio drivers also solved the issue. The data corruption on drives was an entirely different issue that seemed to be centered on users with RAID arrays but also spread to single drive users under varying circumstances.

A revised BIOS was introduced this past week that has apparently cured the majority of data corruption issues with the reference boards, an issue we did not witness in our testing of the ASUS 680i motherboards or with our own EVGA motherboards. While NVIDIA attributes the problems to signal timings on the motherboard, we are still investigating NVIDIA's claims about why the issue occurs on one board and not another. NVIDIA has commented that statistical variations in the electrical paths from board to board can result in one board being affected and another not. We know from the board manufacturers that this chipset is very sensitive to electrical noise, which is one of the main reasons why a specific set of voltages is required to reach the upper overclock limits of the board. This specific set of voltage settings seems to differ from board to board, and our initial opinion about this issue is based upon our having a "tolerant" MCP/SPP combination on our review boards.

We are about finished with our testing of several 680i boards and will have a full review up in the near future, but for now we are glad NVIDIA has come up with a fix. At the same time, these types of problems are not something people want to see in a top-end enthusiast chipset, and a bit more testing and validation before launch would be a better solution than BIOS patches after the fact.

Since our first look at the 680i chipset, we along with our readers have wondered when the lower priced 650i family of chipsets would arrive and, more importantly, how they would perform. ASUS is first to market with the NVIDIA 650i SLI chipset, and we will see other manufacturers utilizing this chipset in January. The base 650i Ultra boards should be arriving in late January or early February. The 650i SLI was designed to offer dual x8 SLI operation, provide performance competitive with the Intel P965, and do this for a price in the $125~$175 range. The $64,000 question is whether NVIDIA succeeded in designing a chipset that is competitive with the Intel P965. We will provide some initial performance results today that should help in determining if ASUS' implementation of this chipset offers some serious competition or if we need to wait on additional motherboards before coming to a conclusion.

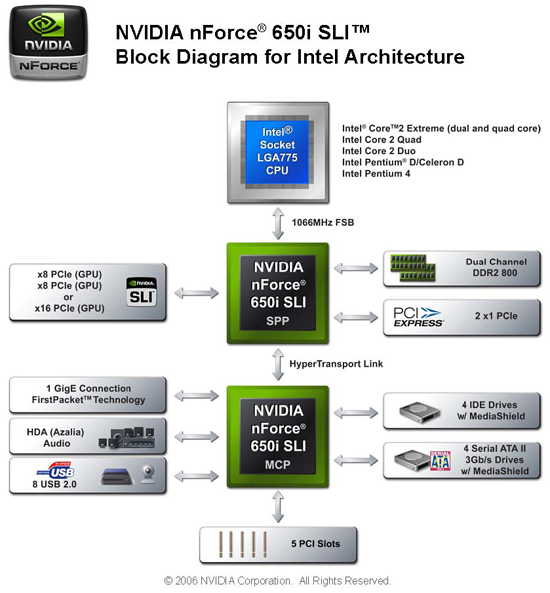

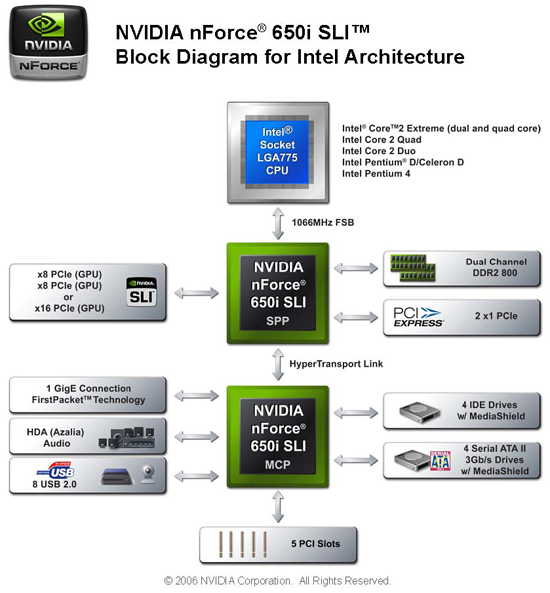

NVIDIA has designed the 650i SLI as their true mainstream performance chipset, given that the 680i is targeted at the upper end performance segment with pricing starting around $230 compared to $130 for the 650i. Details about the differences between the two chipsets can be found here. The major highlights are that the 650i supports only dual x8 SLI operation, single Gigabit Ethernet, four SATA 3Gb/s ports, and eight USB 2.0 ports instead of the dual x16 SLI, physics card slot, dual Gigabit Ethernet with teaming, six SATA 3Gb/s ports, and ten USB 2.0 ports on the 680i. Although NVIDIA has not stated specific support for the upcoming 1333FSB processors in the 650i, ASUS is saying the board will be capable of supporting them with an updated BIOS release. We also noticed that ASUS included EPP memory capability along with LinkBoost technology. Neither item is officially supported by NVIDIA in their product documentation, although each feature did work as advertised.
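To put the dual x8 versus dual x16 difference in rough numbers, here is a minimal sketch of the theoretical per-slot bandwidth. It assumes first-generation PCI Express signalling (2.5 GT/s per lane with 8b/10b encoding), which the article does not state explicitly, and the names `pcie1_bandwidth_mb_s` and `SLI_LANES` are illustrative only. These are peak link figures, not measured results.

```python
# Assumption: PCIe 1.x signalling, 2.5 gigatransfers per second per lane.
PCIE1_TRANSFERS_PER_S = 2_500_000_000
# 8b/10b encoding: 8 payload bits are carried for every 10 bits on the wire.
PAYLOAD_BITS, WIRE_BITS = 8, 10

# Lanes available to each graphics slot in SLI mode, as described in the text.
SLI_LANES = {"650i SLI": 8, "680i SLI": 16}


def pcie1_bandwidth_mb_s(lanes: int) -> float:
    """Theoretical bandwidth in MB/s, in one direction, for a PCIe 1.x link."""
    payload_bits_per_s = PCIE1_TRANSFERS_PER_S * PAYLOAD_BITS // WIRE_BITS
    bytes_per_s_per_lane = payload_bits_per_s // 8
    return lanes * bytes_per_s_per_lane / 1_000_000


def sli_slot_bandwidth() -> dict:
    """Map each chipset to its theoretical per-slot, per-direction bandwidth."""
    return {name: pcie1_bandwidth_mb_s(lanes) for name, lanes in SLI_LANES.items()}


if __name__ == "__main__":
    for name, mb_s in sli_slot_bandwidth().items():
        print(f"{name}: {mb_s:.0f} MB/s per slot, each direction")
```

Under these assumptions each x8 slot has half the theoretical link bandwidth of an x16 slot. Whether that matters in practice depends on how much traffic a given game actually pushes across the bus.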

This leads us into today's performance preview of the ASUS P5N-E SLI. In our article today we will briefly go over the board layout and features, provide a few important performance results, and discuss our issues with the board. We will provide a further review of this product once we receive additional 650i based boards in January. With that said, let's take a look at where this board stands now.


27 Comments


JarredWalton - Monday, December 25, 2006 - link

The big problem with AGP is that it only allowed for one high-speed port. PCIe allows for many more (depending on chipset), plus you get high up and down bandwidth, whereas AGP had fast writes (CPU to card) but slow reads (card to CPU). X8 PCIe is still at least as fast as 8X AGP in terms of bandwidth, and in most instances we aren't stressing that level of bandwidth.

Lord Evermore - Monday, December 25, 2006 - link

x8 PCIe can be as slow as AGP4X depending on the traffic pattern. 4 lanes of PCIe (or 8 half-lanes technically; the number of lanes in each direction in x8) is 1GBps, AGP4X is 1.066GBps. So if most of the data were being streamed in one direction, those two would be equivalent, theoretically. AGP8X would have 2.13GBps in which to stream that uni-directional data. If half the data were going in each direction, then x8 PCIe would be equivalent to AGP8X since they'd both have 1GBps available for each direction, or 2GBps half the time for AGP actually (though performance might be lower with AGP because of the non-independent half-duplex nature).

But since AGP4X is probably still capable of handling the majority of applications, it doesn't really matter much.

Too bad we can't manually control the number of lanes in use to a particular slot. It would be very interesting to compare performance using the same graphics card on the same mainboard using x1, which could depending on the pattern be about equal to a simple PCI card or AGP1X, to x2, x4, x8 and x16 (since x16 can in some cases be comparable to AGP8X). That would help to definitively say whether all the increased bandwidth is actually making a difference, or if other factors are involved.

Lord Evermore - Monday, December 25, 2006 - link

AGP 3.0 supports multiple slots depending on what the chipset is designed to support. According to Wikipedia, HP AlphaServer GS1280 has up to 16 AGP slots. Those basically all connect to a single interface on the chipset. It's likely that since it's a part of the AGP3 spec, every chipset could have supported multiple ports, but normal mainboard makers never used it. There were probably reasons that it wouldn't have worked well for an SLI type feature, possibly the read/write bandwidth issue.

Any chipset designer also could have just put in multiple AGP interfaces I bet, even if they only supported one card at a time. Don't know what effect that would have on bandwidth or contention for access to the CPU. The cards probably also would have not been able to work in any sort of SLI configuration where the data had to go over the chipset bus.

PrinceGaz - Friday, December 22, 2006 - link

Your article starts with questions about this, and they remain unresolved at least up until nForce4 chipsets to my knowledge (because I have one). Of course I'm not stupid enough to risk using nVidia's hardware firewall and associated drivers, but even their IDE drivers can cause a normal installation of Windows XP to have trouble starting which means I cannot safely enable NCQ (I have a dual-core processor) or even benefit from any acceleration the nForce4 chipset might provide, because the nVidia drivers are unstable.

I once used to trust nVidia, especially with drivers back in the early GeForce days, but the latest official GeForce drivers have been bug-ridden what with incorrect monitor refresh-rate detection (even after using the .inf file), and stupidity like doubling the reported memory clock speed of the card when it had always previously been correct.

Their good graphics-card drivers were why I bought an nForce4 based board, and also on this site's recommendation, and I must admit I'm only so-so about it. It works and does everything it says it should on the box, but the computer doesn't feel as responsive as it should and I suspect that is partly because I had to revert to the default Microsoft disk drivers.

All reviews of nVidia chipset motherboards should include a mention about their driver issues (bugs) until they are fixed. Just because you test a mobo for one day and it seems to work and overclock to a given level, does not mean it can be trusted day-in day-out. If you cannot install the IDE drivers, then NCQ and other hard-drive features are negated. If the hardware firewall drivers are so bad no one with any sense goes near them, then that hardware in the chipset is worthless and could best be described as a liability.

I like this site, but it would be nice if you sometimes looked back on products you've been given earlier in the year and report on whether they actually lived up to expectations. Assuming you get to keep any of your stuff. If you don't, then the opinions of the writers becomes almost meaningless because anything looks good for a day or two.

Tanclearas - Saturday, December 23, 2006 - link

Gary Key should be sensitive to this issue more than anyone. Gary tried to facilitate contact between me and Nvidia to try to nail down the cause of the hardware firewall corruption issues. He contacted Nvidia several times for me, and I was contacted by an Nvidia rep twice. I provided the Nvidia rep with detailed steps that I had used to install Windows and the drivers. I conducted tests without any software installed, and continually experienced issues. I provided screen shots of errors to the rep as well. I offered to install Windows and drivers of any version they requested, using whatever steps they wanted.

After providing them with all of the details and making that offer, Nvidia never contacted me again. Gary followed up with me, and contacted Nvidia again on my behalf to try to get them to get in touch with me. Ultimately, they just removed official support for the firewall. I am honestly surprised a class action suit never came of it. Nvidia used the hardware firewall as a selling feature, then made no attempt to solve the issues that were being experienced by many users, and finally just pulled the plug on it.

Anyway, I too have little faith in Nvidia actually taking the issues seriously and finding a solution. I'm not going to say that I'll never buy a board with an Nvidia chipset again, but I can guarantee I won't be buying 680/650 when there are already known issues, and any future board based on an Nvidia chipset will have to go through months of retail availability and positive user feedback before I'd be willing to try again.

LoneWolf15 - Tuesday, December 26, 2006 - link

Insightful post. I'm still using an nForce 4 Ultra chipset board (MSI 7125 K8N Neo4 Platinum), and it's been good for me, but I've never used their firewall software after hearing reports from others.

The current 680i issues have led me to the same conclusion as you: I have no interest in buying an nVidia chipset mainboard next time around (so far, Intel's i975X seems to be the only one I'd be interested in). It seems nVidia has a history of sweeping troubles (i.e., this issue, first-generation PureVideo fiascos with the NV40/45 graphics chipsets that I'm surprised never caused a class-action, the nForce3 250Gb firewall that didn't provide the acceleration they first claimed it did) under the rug if they cannot resolve them through software fixes, and hope nobody raises enough of a ruckus (a method which seems to have worked well for them).

I've just bought a new Geforce graphics card, but experiencing the PureVideo issues alone caused me to skip to ATI for two generations. It's also taught me to read forums with additional user experiences of a product for the first month after release, before I purchase. It seems review sites often miss driver issues/bugs in first-rev. hardware, due to limited time envelopes for review, or not being able to test with as wide a variety of hardware as the community (admittedly, not their fault). I'm not willing to pay the early-adopter/rev 0.9 price any more.

KeypoX - Saturday, December 23, 2006 - link

anyone notice how low quality these articles have become? A couple years ago this site was a decent place to get some info but now ...

Please go back to the old good qual cause now you guys are not good at all ... i feel pretty sad everytime i visit the site

Xcom1Cheetah - Friday, December 22, 2006 - link

Was just wandering isn't the power numbers of idle and full load are a little to high for the stability of the system.. i m not sure but i feel the higher power is going to reduce the stability of the over clock in the longer run...

Performance and feature wise it look pretty ideal to me.. only if its power number has been inline with P965.

Any chance that these power number coming down due to the BIOS fix/update.?

JarredWalton - Friday, December 22, 2006 - link

I doubt the power req's will drop much at all over time. However, higher power draw doesn't necessarily mean less stable. It does mean you usually need more cooling, but a lot of it is simply a factor of the chipset design. I'm pretty sure 650i is a 90nm process technology, but for whatever reason NVIDIA has always made chips that run hot. The Pentium 4 wasn't less stable because it used more power, though, and neither is the nForce series.

Perhaps part of the cause of the high power is that NVIDIA uses HyperTransport as well as the Intel FSB architecture. Then having two chips that run hot.... Added circuitry to go from one to the other? I don't know. Still, the ~40W power difference is pretty amazing (in a bad way).

Avalon - Friday, December 22, 2006 - link

For $130, that's a pretty good looking board. I was expecting the 650SLI chipset based boards to be more around $150-$175. Now this makes me curious as to how 650Ultra will pan out.