The Radeon HD 5970: Completing AMD's Takeover of the High End GPU Market

by Ryan Smith on November 18, 2009 12:00 AM EST - Posted in GPUs

Meet The 5970

To cool the beast, AMD has stepped up the cooler from a solid copper block to a vapor chamber design, which offers slightly better performance for large surface area needs. Vapor chambers (which are effectively flat heatpipes) have largely been popularized by Sapphire, which uses them on its Vapor-X and other high-end series cards. This is the first time we’ve seen a vapor chamber cooler on a stock card. AMD tells us this cooler is designed to keep up with 400W of thermal dissipation.

With the need for such a cooler, AMD has finally parted with their standard 5000 series port configuration in order to afford a full slot to vent hot air. In place of the 2xDVI + HDMI + DisplayPort configuration, we have 2xDVI + Mini DisplayPort, all on one slot. MDP was just approved by VESA last week, and is identical to DisplayPort in features; the only difference is that it’s smaller. This allows AMD to continue offering Eyefinity support, and it also conveniently settles any question of how to plug in 3 monitors, as there are now only as many DVI-type ports as there are available TMDS encoder pairs.

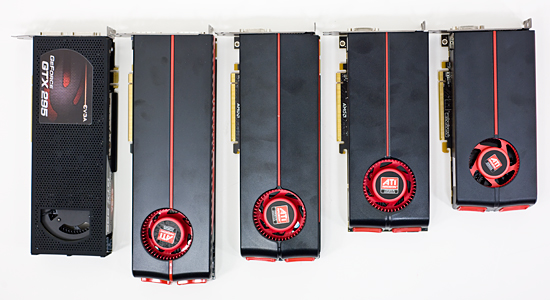

Finally, dual-GPU cards are always bigger than their single-GPU brethren, and the 5970 is no exception to this rule. However, the 5970 really drives this point home, being the largest video card we’ve ever tested. The PCB is 11.5” long, and with the overhang of the cooling shroud, that becomes 12.16” (309mm). This puts it well past our previous record holder, the 5870, and even farther ahead of dual-GPU designs like the 4870X2 and GTX 295, both of which were 10.5”. The only way to describe the 5970 is “ridiculously long”.

With such a long card, there are going to be some definite fitting issues in smaller cases. For our testing we use a Thermaltake Speedo case, which is itself an oversized case. We ended up having to remove the adjustable fan used to cool the PCIe slots in order to make the 5970 fit. In a smaller and more popular case like the Antec P182, we had to remove the upper hard drive cage completely in order to fit the card.

In both cases we were able to fit the card, but it required some modification to get there, and we suspect this is going to be a common story. AMD tells us that the full ATX spec calls for 13.3” of room for PCIe cards, and while we haven’t been able to find written confirmation of this, it seems to be correct. Full size towers should be able to accept the card, and some mid size towers should too, depending on what’s behind the PEG slot. However – and it’s going to be impossible to stress this enough – if you’re in the market for this card, check your case.
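For readers who want to run the numbers on their own case, the check can be sketched in a few lines of Python. The card lengths and the 13.3” figure are the ones quoted above; the 12.0” clearance in the example is a made-up measurement, and the helper names are purely illustrative.

```python
MM_PER_INCH = 25.4

# Card lengths in inches, as quoted in the article above.
CARD_LENGTH_IN = {
    "5970 (PCB only)": 11.5,
    "5970 (with shroud)": 12.16,
    "4870X2": 10.5,
    "GTX 295": 10.5,
}

# Clearance AMD attributes to the full ATX spec (not independently confirmed).
ATX_CLEARANCE_IN = 13.3


def inches_to_mm(inches: float) -> float:
    """Convert a length in inches to millimetres."""
    return inches * MM_PER_INCH


def card_fits(card_in: float, clearance_in: float, margin_in: float = 0.0) -> bool:
    """True if the card plus an optional safety margin fits in the clearance."""
    return card_in + margin_in <= clearance_in


if __name__ == "__main__":
    my_clearance_in = 12.0  # hypothetical measurement; replace with your own
    for name, length in CARD_LENGTH_IN.items():
        verdict = "fits" if card_fits(length, my_clearance_in) else "does NOT fit"
        print(f"{name}: {length} in ({inches_to_mm(length):.0f} mm) {verdict}")
```

Measure from the expansion slot bracket to the first obstruction (drive cage, fan, and so on), and consider passing a small `margin_in` to leave room for power connectors and airflow.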

GTX 295, 5970, 5870, 5850, 5770

On a final note, while the ATX spec may call for 13.3”, we hope that we don’t see cards that big; in fact we’d prefer not to see cards even as big as this one. Such a length is long enough that it precludes running a fan immediately behind the video card on many cases, and quite frankly at a 294W TDP, this card is hot enough that we’d feel a lot better if we had a fan there to better feed air to the card.

114 Comments


Paladin1211 - Saturday, November 21, 2009 - link

To be precise, anything above the monitor refresh rate is not going to be recognizable. Mine maxed out at 60Hz 1920x1200. Correct me if I'm wrong. Thanks :)

noquarter - Saturday, November 21, 2009 - link

If you read AnandTech's 'Triple Buffering: Why We Love It' article, there is a very slight advantage at more than 60fps even though the display is only running at ~60Hz. If the GPU finishes rendering a frame immediately after the display refresh then that frame will be 16ms stale by the time the display shows it as it won't have the next one ready in time. If someone started coming around the corner while that frame is stale it'd be 32ms (stale frame then fresh frame) before the first indicator showed up. This is simplified as with v-sync off you'll just get torn frames but the idea is still there.

To me, it's not a big deal, but if you're looking at a person with quick reaction speed of 180ms, 32ms of waiting for frames to catch up could be significant I guess. If you increase the fps past 60 you're more likely to have a fresh frame rendered right before each display refresh.
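The staleness argument can be illustrated with a toy model in Python. This is only a sketch under simplifying assumptions: frames finish at a perfectly steady rate, the display shows the newest finished frame at each refresh, and tearing is ignored. The function name and the `phase_s` parameter (how long after a refresh the first frame finishes) are made up for illustration.

```python
import math


def frame_age_at_refresh(fps, refresh_hz=60.0, phase_s=0.001, refreshes=600):
    """Return (average, worst) age in ms of the newest finished frame
    at each display refresh.

    Frame k finishes at phase_s + k / fps; refresh n happens at n / refresh_hz.
    """
    frame_time = 1.0 / fps
    ages_ms = []
    for n in range(1, refreshes + 1):
        t = n / refresh_hz
        # Index of the last frame that finished at or before this refresh.
        k = math.floor((t - phase_s) / frame_time)
        if k < 0:
            continue  # no frame has finished yet
        age_s = t - (phase_s + k * frame_time)
        ages_ms.append(age_s * 1000.0)
    return sum(ages_ms) / len(ages_ms), max(ages_ms)


if __name__ == "__main__":
    for fps in (60, 90, 120, 240):
        avg, worst = frame_age_at_refresh(fps)
        print(f"{fps:>3} fps: average age {avg:5.2f} ms, worst {worst:5.2f} ms")
```

With a frame finishing 1 ms after each refresh, the 60 fps case shows frames that are roughly 15.7 ms old at every refresh, while at 120 fps the age drops to roughly 7.3 ms, which is the effect described above.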

T2k - Friday, November 20, 2009 - link

Seriously: is he no more...? :D

XeroG1 - Thursday, November 19, 2009 - link

OK, so seriously, did you really take a $600 video card and benchmark Crysis Warhead without turning it all the way up? The chart says "Gamer Quality + Enthusiast Shaders". I'm wondering if that's really how you guys benchmarked it, or if the chart is just off. But if not, the claim "Crysis hasn’t quite fallen yet, but it’s very close" seems a little odd, given that you still don't have all the settings turned all the way up.

Incidentally, I'm running a GeForce 9800 GTX (not plus) and a Core2Duo E8550, and I play Warhead at all settings enthusiast, no AA, at 1600x900. At those settings, it's playable for me. People constantly complain about performance on that title, but really if you just turn down the resolution, it scales pretty well and still looks better than anything else on the market IMHO.

XeroG1 - Thursday, November 19, 2009 - link

Er, oops - that was supposed to say "E8500", not "E8550", since there is no 8550.

mapesdhs - Thursday, November 19, 2009 - link

Carnildo writes:

> ... I was the administrator for a CAVE system. ...

Ditto! :D

> ... ported a number of 3D shooters to the platform. You haven't lived until you've seen a life-sized opponent come around the corner and start blasting away at you.

Indeed, Quake2 is amazing in a CAVE, especially with both the player and the gun separately motion tracking - crouch behind a wall and be able to stick your arm up to fire over the wall - awesome! But more than anything as you say, it's the 3D effect which makes the experience.

As for surround-vision in general... Eyefinity? Ha! THIS is what you want:

http://www.sgidepot.co.uk/misc/lockheed_cave.jpg

270 degree wraparound, 6-channel CAVE (Lockheed flight sim). I have an SGI VHS demo of it somewhere, must dig it out sometime.

Oh, YouTube has some movies of people playing Quake2 in CAVE systems. The only movie I have of me in the CAVE I ran was a piece taken of my using COVISE visualisation software:

http://www.sgidepot.co.uk/misc/iancovise.avi

Naturally, filming a CAVE in this way merely shows a double-image.

Re people commenting on GPU power now exceeding the demands for a single display...

What I've long wanted to see in games is proper modelling of volumetric effects such as water, snow, ice, fire, mud, rain, etc. Couldn't all this excess GPU power be channeled into ways of better representing such things? It would be so cool to be able to have genuinely new effects in games such as naturally flowing lava, or an avalanche, or a flood, tidal wave, storm, landslide, etc. By this I mean it being done so that how the substance behaves is governed by the environment in a natural way (physics), not hard coded. So far, anything like this is just simulated - objects involved are not physically modelled and don't interact in any real way. Rain is a good example - it never accumulates, flows, etc. Snow has weight, flowing water can make things move, knock you over, etc.

One other thing occurs to me: perhaps we're approaching a point where a single CPU is just not enough to handle what is now possible at the top-end of gaming. To move them beyond just having ever higher resolutions, maybe one CPU with more & more cores isn't going to work that well. Could there ever be a market for high-end PC gaming with 2-socket mbds? I do not mean XEON mbds as used for servers though. Just thoughts...

Ian.

gorgid - Thursday, November 19, 2009 - link

WITH THEIR CARDS ASUS PROVIDES THE SOFTWARE WHERE YOU CAN ADJUST CORE AND MEMORY VOLTAGES. YOU CAN ADJUST CORE VOLTAGE UP TO 1.4V

READ THAT:

http://www.xtremesystems.org/forums/showthread.php...

I ORDERED ONE FROM HERE:

http://www.provantage.com/asus-eah5970g2dis2gd5a~7...

K1rkl4nd - Wednesday, November 18, 2009 - link

Am I the only one waiting for TI to come out with a 3x3 grid of 1080p DLPs? You'd think if they can wedge ~2.2 million mini-mirrors on a chip, they should be able to scale that up to a native 5760x3240. Then they could buddy up with Dell and sell it as an Alienware premium package of display + computer capable of using it.

skrewler2 - Wednesday, November 18, 2009 - link

When can we see benchmarks of 2x 5970 in CF?

Mr Perfect - Wednesday, November 18, 2009 - link

"This means that it’s not just a bit quieter to sound meters, but it really comes across that way to human ears too"

Have you considered using the dBA filter rather than just raw dB? dBA is weighted to measure the tones that the human ear is most sensitive to, so noise-oriented sites like SPCR use dBA instead.
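For anyone curious what the dBA filter actually does, here is a minimal Python sketch of the standard A-weighting curve (the analytic form defined in IEC 61672). The band levels in the example are invented purely for illustration and are not measurements of any card.

```python
import math


def a_weighting_db(freq_hz: float) -> float:
    """A-weighting gain in dB at a given frequency (IEC 61672 analytic form)."""
    f2 = freq_hz ** 2
    ra = (12194.0 ** 2 * f2 ** 2) / (
        (f2 + 20.6 ** 2)
        * math.sqrt((f2 + 107.7 ** 2) * (f2 + 737.9 ** 2))
        * (f2 + 12194.0 ** 2)
    )
    # The +2.00 dB offset normalises the curve to roughly 0 dB at 1 kHz.
    return 20.0 * math.log10(ra) + 2.00


def overall_dba(band_levels_db: dict) -> float:
    """Combine per-band levels (frequency in Hz -> dB SPL) into one dBA figure."""
    total_power = sum(
        10.0 ** ((level + a_weighting_db(freq)) / 10.0)
        for freq, level in band_levels_db.items()
    )
    return 10.0 * math.log10(total_power)


if __name__ == "__main__":
    # Hypothetical fan noise spectrum, dominated by low-frequency hum.
    spectrum = {63: 55.0, 125: 52.0, 250: 48.0, 1000: 42.0, 4000: 38.0}
    print(f"A-weighted level: {overall_dba(spectrum):.1f} dBA")
```

The curve heavily discounts low frequencies (about 19 dB at 100 Hz) and leaves the 1 kHz region essentially untouched, which is why two coolers with the same raw dB reading can differ noticeably in dBA.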