The Radeon HD 5970: Completing AMD's Takeover of the High End GPU Market

by Ryan Smith on November 18, 2009 12:00 AM EST

Posted in: GPUs

Meet The 5970

To cool the beast, AMD has stepped up the cooler from a solid copper block to a vapor chamber design, which offers slightly better performance for large surface area needs. Vapor chambers (which are effectively flat heatpipes) have largely been popularized by Sapphire, who uses them on their Vapor-X and other high-end series cards. This is the first time we’ve seen a vapor chamber cooler on a stock card. AMD tells us this cooler is designed to keep up with 400W of thermal dissipation.

With the need for such a cooler, AMD has finally parted with their standard 5000 series port configuration in order to afford a full slot to vent hot air. In place of the 2xDVI + HDMI + DisplayPort configuration, we have 2xDVI + MiniDisplayPort, all on one slot. MDP was just approved by the VESA last week, and is identical to DisplayPort in features; the only difference is that it’s smaller. This allows AMD to continue offering Eyefinity support, and it also conveniently solves any questions of how to plug 3 monitors in, as there are now only as many DVI-type ports as there are available TMDS encoder pairs.

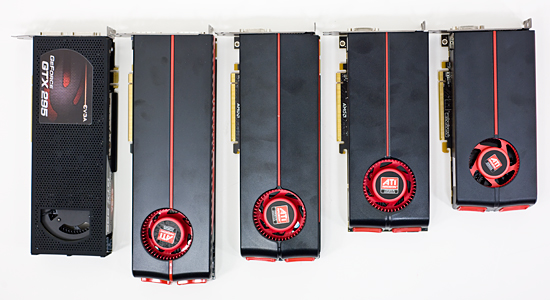

Finally, dual-GPU cards are always bigger than their single-GPU brethren, and the 5970 is no exception to this rule. However, the 5970 really drives this point home, being the largest video card we’ve ever tested. The PCB is 11.5” long, and with the overhang of the cooling shroud, that becomes 12.16” (309mm). This puts it well past our previous record holder, the 5870, and even farther ahead of dual-GPU designs like the 4870X2 and GTX 295, both of which were 10.5”. The only way to describe the 5970 is “ridiculously long”.

With such a long card, there are going to be some definite fitting issues in smaller cases. For our testing we use a Thermaltake Speedo case, which is itself an oversized case. We ended up having to remove the adjustable fan used to cool the PCIe slots in order to make the 5970 fit. On a smaller and more popular case like the Antec P182, we had to remove the upper hard drive cage completely in order to fit the card.

In both cases we were able to fit the card, but it required some modification to get there, and this we suspect is going to be a common story. AMD tells us that the full ATX spec calls for 13.3” of room for PCIe cards, and while we haven’t been able to find written confirmation of this, this seems to be correct. Full size towers should be able to accept the card, and some mid size towers should too depending on what’s behind the PEG slot. However – and it’s going to be impossible to stress this enough – if you’re in the market for this card, check your case.

GTX 295, 5970, 5870, 5850, 5770

On a final note, while the ATX spec may call for 13.3”, we hope that we don’t see cards this big; in fact we’d like to not see cards this big. Such a length is long enough that it precludes running a fan immediately behind the video card on many cases, and quite frankly at a 294W TDP, this card is hot enough that we’d feel a lot better if we had a fan there to better feed air to the card.
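For readers who want to sanity-check their own case before buying, the arithmetic above can be sketched in a few lines of Python. This is only an illustration: the 12.16” card length and the 13.3” ATX figure come from this article (the latter is AMD's number, which we could not confirm in writing), while the function names and the 10.5” example clearance are hypothetical.

```python
MM_PER_INCH = 25.4  # exact by definition


def inches_to_mm(inches: float) -> float:
    """Convert a length in inches to millimetres."""
    return inches * MM_PER_INCH


def card_fits(card_length_in: float, clearance_in: float) -> bool:
    """Return True if the card is no longer than the measured clearance."""
    return card_length_in <= clearance_in


# Figures taken from the article.
CARD_LENGTH_IN = 12.16    # 5970 including the shroud overhang
ATX_CLEARANCE_IN = 13.3   # per AMD; not independently confirmed

# Hypothetical case with only 10.5" of room behind the PEG slot.
EXAMPLE_CLEARANCE_IN = 10.5

print(round(inches_to_mm(CARD_LENGTH_IN)))              # 309
print(card_fits(CARD_LENGTH_IN, ATX_CLEARANCE_IN))      # True
print(card_fits(CARD_LENGTH_IN, EXAMPLE_CLEARANCE_IN))  # False
```

Measure from the rear slot bracket to the first obstruction (drive cage, fan, or cable run) and use that as the clearance; a bare fit still leaves no room for a fan behind the card.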

114 Comments

SJD - Wednesday, November 18, 2009

Thanks Anand,

That kind of explains it, but I'm still confused about the whole thing. If your third monitor supported mini-DP then you wouldn't need an active adapter, right? Why is this when mini-DP and regular DP are the 'same' apart from the actual plug size? I thought the whole timing issue was only relevant when wanting a third 'DVI' (/HDMI) output from the card.

Simon

CrystalBay - Wednesday, November 18, 2009

WTH is really up at TWSC?

Jacerie - Wednesday, November 18, 2009

All the single game tests are great and all, but once I would love to see AT run a series of video card tests where multiple instances of games like EVE Online are running. While single instance tests are great for the FPS crowd, all us crazy high-end MMO players need some love too.

Makaveli - Wednesday, November 18, 2009

Jacerie, the problem with benching MMOs, and why you don't see more of them, is all the other factors that come into play. You now have to deal with server latency, and you also have no control over how many players are in the server at any given time when running benchmarks. There are just too many variables that would make the benchmarks unrepeatable and invalid for comparison purposes!

mesiah - Thursday, November 19, 2009

I think more what he is interested in is how well the card can render multiple instances of the game running at once. This could easily be done with a private server or even a demo written with the game engine. It would not be real world data, but it would give an idea of performance scaling when multiple instances of a game are running. Myself being an occasional "Dual boxer" I wouldn't mind seeing the data myself.

Jacerie - Thursday, November 19, 2009

That's exactly what I was trying to get at. It's not uncommon for me to be running at least two instances of EVE with an entire assortment of other apps in the background. My current 3870X2 does the job just fine, but with 7 out and DX11 around the corner I'd like to know how much money I'm going to need to stash away to keep the same level of usability I have now with the newer cards.

Zool - Wednesday, November 18, 2009

It's only so fast because 95% of the games are DX9 Xbox ports. Crysis has still been the most demanding game out there for quite a while (it needs to be added that it has a very lazy engine). In Age of Conan the difference between DX9 and DX10 is a drop of more than half the fps (with plenty of those effects on screen, even 1/3). Those advanced shader effects that they are showing in demos are actually much more demanding on the GPU than the DX9 shaders. It's just that they don't mention it. It will be the same with DX11. A full DX11 game with all those fancy shaders will be on the level of Crysis.

crazzyeddie - Wednesday, November 18, 2009

... after their first 40nm test chips came back as being less impressive than **there** 55nm and 65nm test chips were.

silverblue - Wednesday, November 18, 2009

Hehe, I saw that one too.

frozentundra123456 - Wednesday, November 18, 2009

Unfortunately, since playing MW2, my question is: are there enough games that are sufficiently superior on the PC to justify the initial expense and power usage of this card? Maybe that's where Eyefinity for AMD and PhysX for nVidia come in: they at least differentiate the PC experience from the console.

I hate to say it, but to me there just do not seem to be enough games optimized for the PC to justify the price and power usage of this card, that is unless one has money to burn.