NVIDIA Optimus - Truly Seamless Switchable Graphics and ASUS UL50Vf

by Jarred Walton on February 9, 2010 9:00 AM EST

A Brief History of Switchable Graphics

So what is Optimus exactly? You could call it NVIDIA Switchable Graphics III, but that makes it sound like only a minor change. After our introduction, in some ways the actual Optimus technology is a bit of a letdown. This is not to say that Optimus is bad—far from it—but the technology only addresses a subset of our wish list. The area Optimus does address—specifically switching times—is dealt with in what can only be described as an ideal fashion. The switch between IGP and discrete graphics is essentially instantaneous and transparent to the end-user, and it's so slick that it becomes virtually a must-have feature for any laptop with a discrete GPU. We'll talk about how Optimus works in a minute, but first let's discuss how we got to our present state of affairs.

It turns out that the Optimus hardware has been ready for a while, but NVIDIA has been working hard on the software side of things. On the hardware front, all of the current G200M and G300M 40nm GPUs have the necessary internal hardware to support Optimus. Earlier laptop designs using those GPUs don't actually support the technology, but the potential was at least there. The software wasn't quite ready, as it appears to be quite complex; NVIDIA says that the GeForce driver base now has more lines of code than Windows NT, for example. NVIDIA was also keen to point out that they have more software engineers (over 1000) than hardware engineers, and some of those software engineers are housed in partner offices (e.g. there are NVIDIA employees working over at Adobe, helping with the Flash 10.1 software). Anyway, the Optimus hardware and software are both ready for public release, and you should be able to find the first Optimus-enabled laptops for sale starting today.

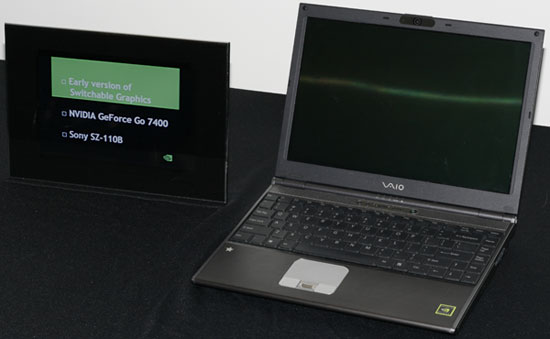

The original switchable graphics designs used a hardware switch to allow users to select either an IGP or discrete GPU. The first such system that we tested was the ASUS N10JC, but the very first implementation came from Sony in the form of the VAIO SZ-110B launched way back in April 2006. (The Alienware m15x was also gen1 hardware.) Generation one required a system reboot in order to switch between graphics adapters, with hardware multiplexers routing power to the appropriate GPU and more multiplexers to route the video signal from either GPU to the various display outputs. On the surface, the idea is pretty straightforward, but the actual implementation is much more involved. A typical laptop will have three separate video devices: the laptop LCD, a VGA port, and a DVI/HDMI port. Adding the necessary hardware requires six (possibly more) multiplexer ICs at a cost of around $1 each, plus more layers on the motherboard to route all of the signals. In short, it was expensive, and what's worse the required system reboot was highly disruptive.

Many users of the original switchable graphics laptops seldom switched, opting instead to use either the IGP or GPU all the time. I still liked the hardware and practically begged manufacturers to include switchable graphics in all future laptop designs. My wish wasn't granted, although given the cost it's not difficult to see why. As for the rebooting, my personal take is that it was pretty easy to simply switch your laptop into IGP mode right before spending a day on the road. If you were going to spend most of the day seated at your desk, you'd switch to discrete mode. The problem is that, as one of the more technical folks on the planet, I'm not a good representation of a typical user. Try explaining to a Best Buy shopper exactly what switchable graphics is and how it works, and you're likely to cause more confusion than anything. Our readers grasp the concept, but the added cost for a feature many wouldn't use meant there was limited uptake.

Generation two involved a lot more work on the software side, as the hardware switch became a software controlled switch. NVIDIA also managed to eliminate the required system reboot (although certain laptop vendors continue to require a reboot or at least a logout, e.g. Apple). Again, that makes it sound relatively simple, but there are many hurdles to overcome. Now the operating system has to be able to manage two different sets of drivers, but Windows Vista in particular doesn't allow multiple display drivers to be active. The solution was to create a "Display Driver Interposer" that had knowledge of both driver sets. Launched in 2008, the first laptop we've reviewed with gen2 hardware and software was actually the ASUS UL80Vt, which took everything we loved about gen1 and made it a lot more useful. Now there was no need to reboot the system; you could switch between IGP and dGPU in about 5 to 10 seconds, theoretically allowing you the best of both worlds. We really liked the UL80Vt and gave it our Silver Editors' Choice award, but there was still room for improvement.

First, the use of a driver interposer meant that generic Verde drivers would not work with switchable graphics gen2. The interposer conforms to the standard graphics APIs, but then there's a custom API to talk to the IGP drivers. The result is that the display driver package contains both NVIDIA and Intel drivers (assuming it's a laptop with an Intel IGP), so driver updates are far more limited. If either NVIDIA or Intel releases a new driver, there's an extra ~10 days of validation and testing that takes place; if all goes well, the new driver is released, but any bugs reset the clock. Of course, that's only in a best-case situation where NVIDIA and Intel driver releases happen at the same time, which they rarely do. In practice, the only time you're likely to get a new driver is if there's a showstopper bug of some form and the laptop OEM asks NVIDIA for a new driver drop. This pretty much takes you back to the old way of doing mobile graphics drivers, which is not something we're fond of.

Another problem with gen2 is that there are still instances where switching from IGP to dGPU or vice versa will "block". Blocking occurs when an application is in memory that currently uses the graphics system. If blocking was limited to 3D games it wouldn't be a critical problem, but the fact is blocking can occur on many applications—including minesweeper and solitaire, web browsers where you're watching (or have watched) Flash videos, etc. If a blocking application is active, you need to close it in order to switch. The switch also results in a black screen as the hardware shifts from one graphics device to the other, which looks like potentially flaky hardware if you're not expecting the behavior. (This appears to be why Apple MacBook Pro systems require a reboot/logout to switch, even though they're technically gen2 hardware.) Finally, it's important to note that gen2 costs just as much as gen1 in terms of muxes and board layers, so it can still increase BOM and R&D costs substantially.
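To make the blocking behavior concrete, here is a minimal sketch in Python. It is a toy model only: the `Gen2Switcher` class and its method names are hypothetical and are not taken from NVIDIA's driver or any real API. It assumes nothing more than what is described above, namely that any application currently using the graphics system prevents the switch until it is closed.

```python
from dataclasses import dataclass, field


@dataclass
class Gen2Switcher:
    """Toy model of gen2 switching (hypothetical, not real driver logic)."""
    active: str = "IGP"  # adapter currently driving the display
    graphics_apps: set = field(default_factory=set)  # apps using the graphics system

    def launch(self, app: str) -> None:
        self.graphics_apps.add(app)

    def close(self, app: str) -> None:
        self.graphics_apps.discard(app)

    def request_switch(self) -> list:
        """Try to toggle adapters; return the blocking apps (empty list on success)."""
        if self.graphics_apps:
            # Any app holding the graphics system blocks the switch.
            return sorted(self.graphics_apps)
        self.active = "dGPU" if self.active == "IGP" else "IGP"
        return []


laptop = Gen2Switcher()
laptop.launch("solitaire")
print(laptop.request_switch())   # switch is blocked, adapter stays on IGP
laptop.close("solitaire")
print(laptop.request_switch(), laptop.active)  # switch succeeds
```

The point of the sketch is that the user, not the system, has to resolve the block: the switch request simply fails until every offending application has been closed.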

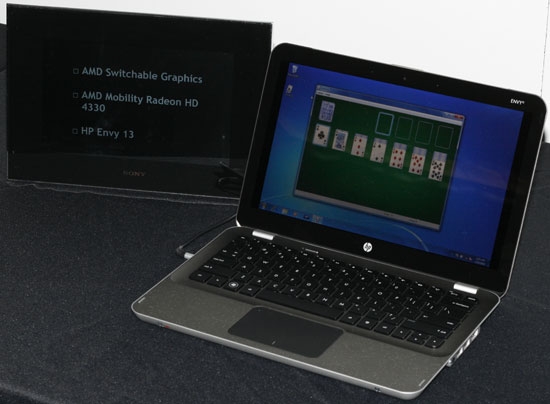

Incidentally, AMD switchable graphics is essentially equivalent to NVIDIA's generation two implementation. The HP Envy 13 is an example of ATI switchable graphics, with no reboot required and about 5-10 seconds required to switch between IGP and discrete graphics (an HD 4330 in this case—and why is it they always seem to use the slowest GPUs; why no HD 4670?).

For technically inclined users, gen2 was a big step forward and the above problems aren't a big deal; for your typical Best Buy shopper, though, it's a different story. NVIDIA showed a quote from Roger Kay, President of Endpoint Technology Associates, that highlights the problem for such users.

"Switchable graphics is a great idea in theory, but in practice people rarely switch. The process is just too cumbersome and confusing. Some buyers wonder why their performance is so poor when they think the discrete GPU is active, but, unknown to them, it isn't."

The research from NVIDIA indicates that only 1% of users ever switched between IGP and dGPU, which frankly seems far too low. Personally, if a laptop is plugged in then there's no real reason to switch off the discrete graphics, and if you're running on battery power there's little reason to enable the discrete graphics most of the time. It could be that only 1% of users actually recognize that there's a switch taking place when they unplug their laptop; it could also be that MacBook Pro users with switchable graphics represented a large percentage of the surveyed users. Personally, I am quite happy with gen2 and my only complaint is that not enough companies use the technology in their laptops.

49 Comments

JarredWalton - Tuesday, February 9, 2010 - link

You can manually set applications to only use the IGP instead of turning on the dGPU, but to my knowledge there's no way to completely turn off the dGPU and keep it off. Of course, when the GPU isn't needed it is totally powered off so you don't lose battery life unless you start running apps that want to run on the GPU.

macroecon - Tuesday, February 9, 2010 - link

Well, I was getting ready to pull the trigger over the weekend to buy a UL30Vt, but I'm glad that I waited. While this is not a revolutionary feature, it does make laptops that lack it less valuable in my opinion. The video that Jarred posted toward the end of the article really demonstrates the value of on-the-fly GPU switching. I think that I'll wait a bit longer for Optimus, and also a DirectX11 nVidia GPU, to hit the market. Thanks for the coverage Jarred!

lopri - Tuesday, February 9, 2010 - link

Not to rain on NV's parade, but I'd much prefer if Optimus is doing its thing in 100% hardware. In an ideal world, a software solution can do the same job as a hardware solution, but I've seen some caveats on software solutions - on desktops, admittedly. Instead of trying to 'detect' the apps, detect 'loads' and take care of it in hardware.

Some might know what I'm talking about.

JarredWalton - Tuesday, February 9, 2010 - link

The only problem with this is that the software is needed to work between Intel and NVIDIA hardware. There's also a concern about if you want something to NOT run on the dGPU (for testing purposes or to save battery life). With IGP reaching the point where it can handle most video tasks, you wouldn't want to power up the dGPU to do H.264 decoding as power requirements would jump several watts.

Of course, if you could have NVIDIA IGP and dGPU it might be possible to do more on the hardware side, but Arrandale, Pineview, Sandy Bridge, etc. make it very unlikely that we would see another NVIDIA IGP any time soon.

acooke - Tuesday, February 9, 2010 - link

OK, so this is awesome (particularly with Lenovo and CUDA mentioned). But how is the encrypted profile update driver yadda yadda stuff going to work with Linux?

I'm a software developer, I work with CUDA (OpenCL actually), I use Linux. NVidia should worry about people like me because we're the motor behind the take-up of Fermi, which is going to be a significant source of cash for them. Currently I can do very basic OpenCL development while on the road with my laptop using the AMD CPU driver (despite having Intel/Lenovo hardware), but being able to use a GPU would be a huge improvement (it's not that much fun running GPU code on a CPU!).

darckhart - Wednesday, February 10, 2010 - link

yes, i'm curious about this also.

room1oh1 - Tuesday, February 9, 2010 - link

I hope they don't fit any brakes into a laptop!

MonkeyPaw - Tuesday, February 9, 2010 - link

Yeah, it's rather unfortunate that they said it should work like a hybrid, and they have the picture of a 2010 Prius in the slide. Just goes to show that car analogies don't work! They could have just drawn the parallel to your laptop battery--when you unplug the laptop, it starts using the battery with no user intervention.

horseeater - Tuesday, February 9, 2010 - link

Switchable graphics are nice, but I want external gfx cards (or enclosures for desktop gfx cards) for laptops. Just plug it in when you're home, kill precious time playing useless junk, and use the igp when on the road.

That being said, UL80-vt is reportedly awesome, and improvements are surely welcome, if they don't up the price.

synaesthetic - Wednesday, February 10, 2010 - link

I want external GPUs also, but I want one that can use the laptop's LCD display rather than forcing me to plug in an external display. After all, external displays aren't portable, but a ViDock isn't terribly large.