OCZ's Agility 2 Reviewed: The First SF-1200 with MP Firmware

by Anand Lal Shimpi on April 21, 2010 7:22 PM EST

While it happens a lot less now than a couple of years ago, I still see the question of why SSDs are worth it every now and then. Rather than give my usual answer, I put together a little graph to illustrate why SSDs are both necessary and incredibly important.

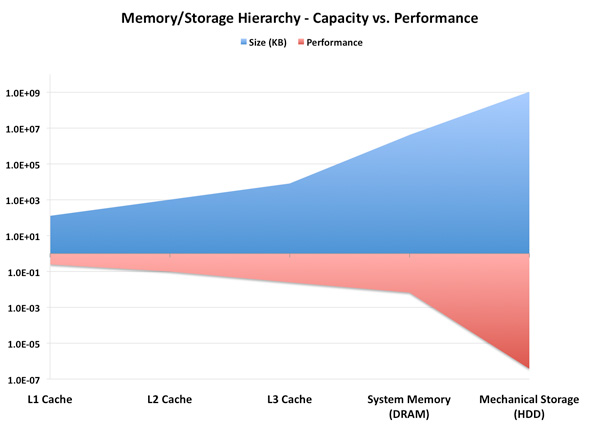

Along the x-axis we have different types of storage in a modern computer. They range from the smallest, fastest storage elements (cache) to main memory and ultimately, at the other end of the spectrum, mechanical storage (your hard drive). The blue portion of the graph indicates the typical capacity of these storage structures (e.g. 1024KB L2, 1TB HDD). The further to the right you go, the larger the structure.

The red portion of the graph shows performance in terms of access latency. The further right you go, the slower the storage medium becomes.

This is a logarithmic scale, so we can actually see what’s going on. While capacity transitions relatively smoothly as you move left to right, look at what happens to performance. The move from main memory to mechanical storage comes with a steep performance falloff.

We could address this issue by increasing the amount of DRAM in a system. However, DRAM prices are still too high to justify sticking 32 - 64GB of memory in a desktop or notebook. And when we can finally afford that, the applications we'll want to run will just be that much bigger.

Another option would be to improve the performance of mechanical drives, but we’re bound by physics there. Spinning platters faster than 10,000 RPM is prohibitive in terms of power, noise and reliability. The majority of hard drives still spin at 7200 RPM or less.
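Spindle speed puts a floor under access latency: on average the head waits half a revolution for the target sector to come around. The short Python sketch below (the function name is ours, purely illustrative) computes that average rotational latency for a few common speeds. It deliberately ignores seek time, which comes on top of this.

```python
def avg_rotational_latency_ms(rpm: float) -> float:
    """Average rotational latency in milliseconds: half of one revolution.
    Seek time is NOT included; it adds to this on a real drive."""
    seconds_per_rev = 60.0 / rpm
    return seconds_per_rev / 2 * 1000.0

for rpm in (5400, 7200, 10_000, 15_000):
    print(f"{rpm:>6} RPM: {avg_rotational_latency_ms(rpm):.2f} ms")
```

Going from 7200 to 10,000 RPM only trims the average wait from about 4.17ms to 3.00ms. That is still milliseconds, while DRAM accesses are measured in nanoseconds.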

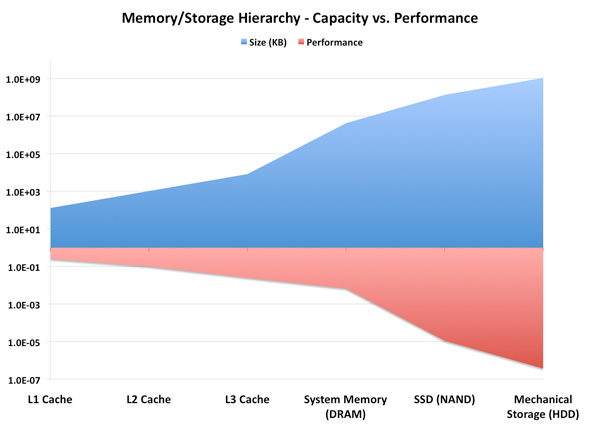

Instead, the obvious solution is to stick another level in the memory hierarchy. Just as AMD/Intel have almost fully embraced the idea of a Level 3 cache in their desktop/notebook processors, the storage industry has been working towards using NAND as an intermediary between DRAM and mechanical storage. Let’s look at the same graph if we stick a Solid State Drive (SSD) in there:

Not only have we smoothed out the capacity curve, but we’ve also addressed that sharp falloff in performance. Those of you who read our most recent VelociRaptor VR200M review will remember that we recommended a fast SSD for your OS/applications and a large HDD for games, media and other large data storage. The role of the SSD in the memory hierarchy today is unfortunately user-managed: you have to manually decide what goes on your NAND vs. mechanical storage, but we’re going to see some solutions later this year that aim to make some of that decision for you.
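To put rough numbers on the "sharp falloff", the sketch below measures each step between adjacent tiers in orders of magnitude (log10 of the latency ratio), with and without an SSD tier. The latencies are our own order-of-magnitude assumptions for illustration, not the data behind the graphs above.

```python
import math

# Rough order-of-magnitude access latencies in nanoseconds.
# ASSUMPTION: illustrative ballpark values, not the article's graph data.
TIERS_NO_SSD = [
    ("L1 cache", 1),
    ("L2 cache", 4),
    ("L3 cache", 15),
    ("DRAM", 100),
    ("HDD", 10_000_000),   # ~10 ms
]

def gaps(tiers):
    """Return (faster tier, slower tier, orders of magnitude) for each adjacent pair."""
    return [
        (a[0], b[0], math.log10(b[1] / a[1]))
        for a, b in zip(tiers, tiers[1:])
    ]

def largest_gap(tiers):
    """The single biggest latency step in the hierarchy."""
    return max(gaps(tiers), key=lambda g: g[2])

# Insert an SSD tier (ASSUMPTION: ~100 us) between DRAM and the hard drive.
TIERS_WITH_SSD = TIERS_NO_SSD[:4] + [("SSD", 100_000)] + TIERS_NO_SSD[4:]

for label, tiers in (("without SSD", TIERS_NO_SSD), ("with SSD", TIERS_WITH_SSD)):
    upper, lower, orders = largest_gap(tiers)
    print(f"{label}: largest step is {upper} -> {lower}, {orders:.1f} orders of magnitude")
```

With these assumed values, the single DRAM-to-HDD cliff of about five orders of magnitude becomes two smaller steps once the SSD tier is added.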

Why does this matter? If left unchecked, sharp dropoffs in performance in the memory/storage hierarchy can result in poor performance scaling. If your CPU doubles in peak performance, but it has to wait for data the majority of the time, you’ll rarely realize that performance increase. In essence, the transistors that gave your CPU its performance boost will have been wasted die area and power.
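The scaling argument above is essentially Amdahl's law: only the share of time the CPU spends computing benefits from a faster CPU. The minimal sketch below treats all remaining time as waiting on storage and leaves it unchanged. That is a simplification, and the busy fractions are hypothetical.

```python
def overall_speedup(compute_fraction: float, cpu_speedup: float) -> float:
    """Amdahl's law: only compute_fraction of the runtime gets faster;
    the rest (here assumed to be time spent waiting on storage) is unchanged."""
    waiting_fraction = 1.0 - compute_fraction
    return 1.0 / (waiting_fraction + compute_fraction / cpu_speedup)

# Hypothetical workloads: share of time the CPU is actually computing.
for busy in (0.9, 0.5, 0.2):
    print(f"CPU busy {busy:.0%}: 2x faster CPU -> {overall_speedup(busy, 2.0):.2f}x overall")
```

A CPU that is busy only 20% of the time turns a 2x faster core into roughly a 1.11x faster system.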

Thankfully we tend to see new levels in the memory/storage hierarchy injected preemptively. We’re not yet at the point where all performance is bound by mass storage, but as applications like virtualization become even more prevalent the I/O bottleneck is only going to get worse.

Motivation for the Addiction

It’s this sharp falloff in performance between main memory and mass storage that makes SSDs so enticing. I’ve gone much deeper into how these things work already, so if you’re curious I’d suggest reading our SSD Relapse.

SSD performance is basically determined by three factors: 1) NAND, 2) firmware and 3) controller. The first point is obvious; SLC is faster (and more expensive) than MLC, but is mostly limited to server use. Firmware is very important to SSD performance, because much of how an SSD behaves is determined by it: the firmware handles all data mapping to flash, decides how to manage the data that’s written to the drive and ensures that the SSD is always operating as fast as possible. The controller is actually less important than you’d think. It’s really the combination of firmware and controller that helps determine whether or not an SSD is good.
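To make "data mapping to flash" concrete, one textbook approach is a page-mapped flash translation layer (FTL). NAND pages can't be overwritten in place, so each write goes to a fresh physical page and the old copy is marked stale. The Python toy below shows only that generic idea. It is not how Indilinx, Intel or SandForce firmware actually works, and the class and method names are hypothetical.

```python
class ToyPageMappedFTL:
    """Greatly simplified page-mapped flash translation layer (illustrative only,
    not any vendor's firmware).

    NAND pages cannot be overwritten in place, so every host write goes to a
    fresh physical page and the old copy is merely marked invalid. Garbage
    collection (not modeled here) would later erase blocks to reclaim them.
    """

    def __init__(self, num_physical_pages: int):
        self.num_physical_pages = num_physical_pages
        self.l2p = {}        # logical page number -> physical page number
        self.invalid = set() # physical pages holding stale data
        self.nand = {}       # physical page number -> stored data
        self.next_free = 0   # simple append-only allocator

    def write(self, lpn: int, data: bytes) -> int:
        if self.next_free >= self.num_physical_pages:
            raise RuntimeError("out of free pages; garbage collection needed")
        old = self.l2p.get(lpn)
        if old is not None:
            self.invalid.add(old)  # previous copy becomes stale, not erased
        ppn = self.next_free
        self.next_free += 1
        self.nand[ppn] = data
        self.l2p[lpn] = ppn
        return ppn

    def read(self, lpn: int) -> bytes:
        return self.nand[self.l2p[lpn]]


ftl = ToyPageMappedFTL(num_physical_pages=8)
ftl.write(0, b"v1")
ftl.write(0, b"v2")  # rewriting the same logical page lands on a new physical page
print(ftl.l2p, ftl.invalid, ftl.read(0))
```

How a real firmware chooses pages, tracks stale data and schedules garbage collection is exactly where drives differ.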

For those of you who haven’t been paying attention, we basically have six major controller manufacturers competing today: Indilinx, Intel, Micron, Samsung, SandForce and Toshiba. Micron uses a Marvell controller, and Toshiba has partnered up with JMicron on some of its latest designs.

Of that list, the highest performing SSDs come from Indilinx, Intel, Micron and SandForce. Micron’s drive is the only one with a 6Gbps controller, while the rest are strictly 3Gbps. Intel is the only manufacturer on our shortlist that we’ve been covering for a while. The rest of the companies are relative newcomers to the high end SSD market. Micron just recently shipped its first competitive SSD, the RealSSD C300, and SandForce has only just done the same.

We first met Indilinx a little over a year ago when OCZ introduced a brand new drive called the Vertex. While it didn’t wow us with its performance, OCZ’s Vertex seemed to have the beginnings of a decent alternative to Intel’s X25-M. Over time the Vertex and other Indilinx drives got better, eventually earning the title of Intel alternative. You wouldn’t get the same random IO performance, but you’d get better sequential performance and better pricing.

Several months later OCZ introduced another Indilinx based drive called Agility. Using the same Indilinx Barefoot controller as the Vertex, the only difference was Agility used 50nm Intel or 40nm Toshiba NAND. In some cases this resulted in lower performance than Vertex, while in others we actually saw it pull ahead.

OCZ released many other derivatives based on Indilinx’s controller. We saw the Vertex EX which used SLC NAND for enterprise customers, as well as the Agility EX. Eventually as more manufacturers started releasing Indilinx based drives, OCZ attempted to differentiate by releasing the Vertex Turbo. The Vertex Turbo used an OCZ exclusive version of the Indilinx firmware that ran the controller and external DRAM at a higher frequency.

Despite a close partnership with Indilinx, earlier this month OCZ announced that its next generation Vertex 2 and Agility 2 drives would not use Indilinx controllers. They’d instead be SandForce based.

60 Comments


berzerk101 - Thursday, April 22, 2010

Hi,

A little question here: I was wondering if these SandForce drives have some garbage collection in place, like the Vertex with its latest firmware.

If you look on YouTube, OWC states that its drive doesn't drop in performance on a Mac, but there's no TRIM support on the Mac last time I checked.

For now, the only drive I've found with hardware auto-trim working on a Mac is the Vertex with the Indilinx controller.

Video link:

http://www.youtube.com/watch?v=52OpellvMLQ

Thanks

Hacp - Thursday, April 22, 2010

Anand, you mention Intel/Indilinx but not the RealSSD in the conclusion of your review? It has the lowest cost per GB AND is one of the best performers. It scored very high on your real-world benchmarks.

GullLars - Thursday, April 22, 2010

No, not at all. I'm saying that if SandForce had made the controller with a SATA 6Gbps interface instead of 3Gbps, it would have maxed out the interface anyway for highly compressible data, both reading and writing.

With RAW speeds of about 200-220MB/s read and 120-130MB/s write, data compressible to 1:2 could be read at 400-440MB/s and written at 240-260MB/s, and data compressible to 1:3 could be read at 600MB/s and written at 360-390MB/s...
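The arithmetic in the comment above can be written as a tiny model: host-visible throughput is the raw NAND throughput multiplied by the compression ratio, capped by what the SATA link can carry. This is a sketch that assumes compression scales throughput linearly with no other controller overhead. The link caps follow from the 8b/10b encoding SATA uses, which spends 10 line bits per data byte.

```python
# Usable link bandwidth after 8b/10b encoding (10 line bits per data byte).
SATA_3GBPS_MB_S = 3000 / 10   # 300 MB/s
SATA_6GBPS_MB_S = 6000 / 10   # 600 MB/s

def effective_mb_s(raw_mb_s: float, compression_ratio: float, link_mb_s: float) -> float:
    """Host-visible throughput when each byte moved to/from NAND carries
    `compression_ratio` bytes of host data, limited by the SATA link.
    ASSUMPTION: throughput scales linearly with compression ratio."""
    return min(raw_mb_s * compression_ratio, link_mb_s)

# Upper ends of the raw figures quoted in the comment: ~220 MB/s read, ~130 MB/s write.
for ratio in (1, 2, 3):
    read = effective_mb_s(220, ratio, SATA_6GBPS_MB_S)
    write = effective_mb_s(130, ratio, SATA_6GBPS_MB_S)
    print(f"1:{ratio} compression on 6Gbps: read {read:.0f} MB/s, write {write:.0f} MB/s")
```

At 1:3 the read figure hits the 600 MB/s link cap while writes do not. On the existing 3Gbps link, even 1:2 reads would be held to 300 MB/s.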

Anand says in his Agility 2 review that SandForce found that installing Windows 7 and MS Office 2007 physically took up less than half of the original size, meaning those kinds of files would be written at above 250MB/s and read at above 450MB/s...
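Data that physically takes up less than half its original size roughly implies a write amplification (WA) below 1. WA is the ratio of bytes written to NAND to bytes the host asked to write. The sketch below computes WA and estimates how far a fixed P/E-cycle budget stretches, assuming perfect wear leveling. The capacity, P/E rating and 10GB-to-4.5GB example are hypothetical placeholders, not Agility 2 specifications or SandForce's measurements.

```python
def write_amplification(nand_gb_written: float, host_gb_written: float) -> float:
    """WA = data physically written to NAND / data written by the host."""
    return nand_gb_written / host_gb_written

def host_writes_before_wearout_tb(capacity_gb: float, pe_cycles: int, wa: float) -> float:
    """Host writes (TB) until the rated P/E cycles are exhausted,
    ASSUMING perfect wear leveling across the whole NAND array."""
    nand_write_budget_gb = capacity_gb * pe_cycles
    return nand_write_budget_gb / wa / 1000

CAPACITY_GB = 100   # hypothetical drive capacity
PE_CYCLES = 5000    # hypothetical MLC P/E-cycle rating

# Hypothetical: 10GB of host data stored in 4.5GB of NAND ("less than half").
wa = write_amplification(4.5, 10.0)
print(f"WA = {wa:.2f}")
for w in (1.0, wa):
    tb = host_writes_before_wearout_tb(CAPACITY_GB, PE_CYCLES, w)
    print(f"WA {w:.2f}: ~{tb:,.0f} TB of host writes")
```

Halving WA doubles the host writes a given NAND budget can absorb, which is the lever behind SandForce's claim that reduced write amplification could enable cheaper NAND.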

The SandForce drives get PCMark scores of around 38-40K; with a SATA 6Gbps interface I think they would get over 50K, maybe even over 60K. The limiting factor would be read IOPS, which tops out around 30-35K IOPS and wouldn't get a boost from a faster interface, since it seems to be bound by the controller's computing power.
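A quick sanity check of the claim that random reads are controller-bound rather than interface-bound is to multiply IOPS by transfer size and compare the result against the link. This sketch uses decimal MB and takes 4KB as 4096 bytes. The IOPS figures are the ones quoted in the comment, and the function names are ours.

```python
BLOCK_BYTES = 4096                      # 4KB transfer size
SATA_3GBPS_BYTES_S = 3e9 * 8 / 10 / 8   # 300 MB/s usable after 8b/10b encoding

def iops_to_mb_s(iops: float, block_bytes: int = BLOCK_BYTES) -> float:
    """Bandwidth in decimal MB/s for a given IOPS rate and transfer size."""
    return iops * block_bytes / 1e6

def link_limited_iops(link_bytes_s: float, block_bytes: int = BLOCK_BYTES) -> float:
    """IOPS at which the link alone would become the bottleneck."""
    return link_bytes_s / block_bytes

for iops in (30_000, 35_000):
    print(f"{iops} IOPS x 4KB = {iops_to_mb_s(iops):.0f} MB/s")
print(f"3Gbps link saturates at about {link_limited_iops(SATA_3GBPS_BYTES_S):,.0f} 4KB IOPS")
```

At 4KB, 30-35K IOPS uses well under half of what even a 3Gbps link can carry, so a 6Gbps interface would not lift that ceiling.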

Since it seems the 50GB versions are capable of the same RAW and compressed speeds as the 100GB and 200GB versions, a ROC + 2x SF-1200 in a 2.5" enclosure with a SATA/SAS 6Gbps interface could be nice. Sort of like the OCZ Apex, G.Skill Titan and OCZ Colossus, only done properly, with full NCQ support and without tons of access-time overhead. SF-1200 because of the price, and because (2x10K=) 20K IOPS of sustained random writes is "enough". Such a drive would give the same numbers as anvil has posted above, at possibly 1.3-1.5x the price, but on a single port, making it easy to scale to insane bandwidth on an LSI 92xx for compressible data (over 4GB/s both read and write).

GullLars - Thursday, April 22, 2010

Like the subject says, that was meant as a reply on the XS forum... Here is the post I meant to post:

Anand

As always, a great review, but I still miss 4KB random tests at higher queue depths that exercise more than just 3 of the flash channels. A graph of 4KB random read with queue depth (2^n steps) on the x-axis and IOPS/bandwidth on the y-axis would also be nice, as it would show scaling by load.

If you were to do this test, you would see that your statement that SandForce's 4KB random read performance on compressed data is worse than Indilinx's is false for queue depths over 4, where Indilinx tops out at ca. 60MB/s (15K IOPS) while SandForce reaches about 120MB/s. Seeing as SandForce then has double the 4KB random read performance of Barefoot on incompressible data, I suggest the statement be retracted, or at least that a note be added stating it's not true for higher queue depths.
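The queue-depth argument follows from Little's law: sustained IOPS equals outstanding requests divided by average latency per request. The sketch below sweeps queue depth in 2^n steps, as the comment suggests, using a deliberately simple model in which each flash channel serves one request at a time, so IOPS stops rising once every channel is busy. The channel count and per-request latency are hypothetical values, not measurements of Indilinx or SandForce drives.

```python
def modeled_iops(queue_depth: int, channels: int, latency_s: float) -> float:
    """Little's law with a concurrency cap: at most `channels` requests are
    in service at once, each taking latency_s seconds (simplified model)."""
    in_flight = min(queue_depth, channels)
    return in_flight / latency_s

CHANNELS = 8          # hypothetical number of independent flash channels
LATENCY_S = 200e-6    # hypothetical 200 us per 4KB random read

for n in range(6):    # queue depths 1, 2, 4, 8, 16, 32
    qd = 2 ** n
    iops = modeled_iops(qd, CHANNELS, LATENCY_S)
    print(f"QD {qd:>2}: {iops:>8,.0f} IOPS = {iops * 4096 / 1e6:6.1f} MB/s")
```

In this model, a benchmark that never goes beyond queue depth 3 can show at most three channels' worth of throughput, which is the commenter's reason for wanting results at higher queue depths.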

GullLars - Thursday, April 22, 2010

A little PS to the above. To back my statement with benchmark data:

xtremesystems.org/forums/showpost.php?p=4352703&postcount=56

AS SSD Benchmark and CrystalDiskMark 3.0 use hardly compressible data, as shown by the sequential write speeds measured.

And for those interested, the benchmark numbers I was referring to that anvil posted "above" are these:

xtremesystems.org/forums/showpost.php?p=4352876&postcount=57

It's 2 Vertex LE 100GB in RAID-0 off ICH10R, IRST 9.6, WBC off.

vol7ron - Thursday, April 22, 2010

I just want to stress what the graphs show: the Agility's power consumption, at idle or full load, is never more than 1W.

That is amazing.

vol7ron

Mr Perfect - Thursday, April 22, 2010

"SandForce also claims that its reduced write amplification could enable the use of cheaper NAND on these drives. It’s an option that some manufacturers may take; however, OCZ has committed to using the same quality of NAND as it has in the past. The Agility 2 uses 34nm IMFT NAND, presumably similar to what’s used in Intel’s X25-M G2."

Sounds good. Hopefully other OEMs follow OCZ's example.

Impulses - Thursday, April 22, 2010

Why are you using the street price of the Corsair Nova in the Pricing Comparison chart at the end? Isn't that drive essentially the same thing as the OCZ Solid 2? You can get that drive for $70 less after MIR! (And the MIR always seems to be running.) That dramatically impacts its cost per GB and its value compared to the other drives, imo... Even the Agility is still $50 cheaper. The price of the 160GB X25-M seems to be off too; it might've spiked up during the days the review was posted, but it's been pretty easy to find at $450... ($40 less)

Realistically, the X25-M G2 drives and older Indilinx drives end up being much cheaper per GB than the chart would seem to indicate, $2.50-2.75... So you pay quite a substantial premium for a C300 or an SF drive, especially if you opt for the 128GB C300 (which costs more per GB than the 256GB version; both should be on the chart for reference, imo).

I realize prices are always in flux, but these have actually been pretty stable over the past couple of months and I don't think they're likely to change (outside of specials and/or the occasional rebate) until the end of the year, when they all start shifting to 20nm NAND flash... It's kind of amusing that the larger C300 breaks the mold and ends up being one of the better values (if you actually do need that much capacity and have the controller to take advantage of its full performance); the X25-M and the older Indilinx drives are probably still the best option for most people, though.
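Cost per GB is simply price divided by capacity, optionally after subtracting a mail-in rebate. The sketch below uses the one concrete price quoted above, a 160GB X25-M at $450. The function name is ours, and because rebate amounts vary, the second call uses a hypothetical price and rebate.

```python
def cost_per_gb(price_usd: float, capacity_gb: float, rebate_usd: float = 0.0) -> float:
    """Dollars per gigabyte after subtracting any mail-in rebate."""
    return (price_usd - rebate_usd) / capacity_gb

print(f"160GB X25-M at $450: ${cost_per_gb(450, 160):.2f}/GB")
# Hypothetical: a $390 128GB drive with a $20 MIR.
print(f"128GB drive, $390 - $20 MIR: ${cost_per_gb(390, 128, rebate_usd=20):.2f}/GB")
```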

glugglug - Thursday, April 22, 2010

This is better than what we are getting from a Fusion I/O. I know Fusion I/O claims 100K IOPS, but I think they need to be 4KB sequential to achieve that.

GullLars - Thursday, April 22, 2010

Fusion-io's ioDrive 160GB SLC does 100K IOPS of 4KB random writes. The ioXtreme, the 80GB MLC prosumer version, on the other hand does around 30K, I think.