Intel Unveils Moorestown and the Atom Z600, The Fastest Smartphone Platform?

by Anand Lal Shimpi on May 4, 2010 11:54 PM EST- Posted in

- Smartphones

- Intel

- Atom

- Mobile

- SoCs

When I wrote my first article on Intel's Atom architecture I called it The Journey Begins. I did so because while Atom has made a nice home in netbooks over the years, it was Intel's smartphone aspirations that would make or break the product. And the version of Atom that was suitable for smartphone use was two years away.

Time sure does fly. Today Intel is finally unveiling its first Atom processors for smartphones and tablets. Welcome to Moorestown.

Craig & Paul’s Excellent Adventure

Six years ago Intel’s management canned a project called Tejas. It was destined to be another multi-GHz screamer, but concerns over power consumption kept it from coming to fruition. Intel instead focused on its new Core architecture that eventually led to the CPUs we know and love today (Nehalem, Lynnfield, Arrandale, Gulftown, etc...).

When a project gets cancelled, it wreaks havoc on the design team. They live and breathe that architecture for years of their lives. To not see it through to fruition is depressing. But Intel’s teams are usually resilient, as is evidenced by another team that worked on a canceled T-project.

The Tejas team in, er, Texas was quickly tasked with coming up with the exact opposite of the chip they had just worked on: an extremely low power core for use in some sort of a mobile device (it actually started as a low power core as a part of a many core x86 CPU, but the many core project got moved elsewhere before the end of 2004). A small group of engineers were first asked to find out whether or not Intel could reuse any existing architectures in the design of this ultra low power mobile CPU. The answer quickly came back as a no and work began on what was known as the Bonnell core.

No one knew what the Bonnell core would be used in, just that it was going to be portable. Remember this was 2004 and back then the smartphone revolution was far from taking over. Intel’s management felt that people were either going to carry around some sort of mobile internet device or an evolution of the smartphone. Given the somewhat conflicting design goals of those two devices, the design team in Austin had to focus on only one for the first implementation of the Bonnell core.

In 2005, Intel directed the team to go after mobile internet devices first. The smartphone version would follow. Many would argue that it was the wrong choice; after all, when was the last time you bought a MID? Hindsight is 20/20 and back then the future wasn’t so clear. Not to mention that shooting for a mobile extension of the PC was a far safer bet for a PC microprocessor company than going after the smartphone space. Add in the fact that Intel already had a smartphone application processor division (XScale) at the time and going the MID route made a lot of sense.

The team had to make an ultra low power chip for use in handheld PCs by 2008. The power target? Roughly 0.5W.

Climbing Bonnell

An existing design wouldn’t suffice, so the Austin team led by Belli Kuttanna (a former Sun and Motorola chip designer) started with the most basic of architectures: a single-issue, in-order core. The team iterated from there, increasing performance and power consumption until their internal targets were met.

In-order architectures, as you may remember, have to execute instructions in the order they’re decoded. This works fine for low latency math operations, but instructions that need data from memory will stall the pipeline and severely reduce performance. It’s like not being able to drive around a stopped car. Out-of-order architectures let you schedule around memory dependent operations so you can mask some of the latency to memory and generally improve performance. Regardless of the order in which instructions execute, they all must complete in the program’s intended order. Dealing with this complexity costs additional die area and power. It’s worth it in the long run, as we’ve seen. All Intel CPUs since the Pentium Pro have been wide (3 - 4 issue), out-of-order cores, but they have also had much higher power budgets.
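To make the stopped-car analogy concrete, here is a minimal sketch of a toy single-issue machine. Everything in it is an assumption made for illustration: the five-instruction program, the 10-cycle load latency, and the function names are hypothetical, and the model ignores in-order retirement, finite scheduling windows and every other detail of a real core. It only counts cycles under the two issue policies.

```python
# Toy model: one instruction may issue per cycle.
# Each instruction is (latency_in_cycles, [indices of earlier instructions it depends on]).
# The program and latencies below are made up for illustration.
PROGRAM = [
    (10, []),      # 0: load from memory (slow)
    (1, [0]),      # 1: add that needs the loaded value
    (1, []),       # 2: independent add
    (1, []),       # 3: independent add
    (1, [2, 3]),   # 4: add that needs results of 2 and 3
]

def in_order_cycles(program):
    """Issue strictly in program order; wait whenever operands are not ready."""
    done = []        # cycle at which each instruction's result is available
    next_issue = 0   # earliest cycle the next instruction may issue
    for latency, deps in program:
        ready = max((done[d] for d in deps), default=0)
        issue = max(next_issue, ready)   # a stalled instruction blocks everything behind it
        done.append(issue + latency)
        next_issue = issue + 1
    return max(done)

def out_of_order_cycles(program):
    """Each cycle, issue the oldest instruction whose operands are ready."""
    done = [None] * len(program)
    pending = list(range(len(program)))
    cycle = 0
    while pending:
        for i in pending:
            latency, deps = program[i]
            if all(done[d] is not None and done[d] <= cycle for d in deps):
                done[i] = cycle + latency
                pending.remove(i)
                break                    # single issue: at most one per cycle
        cycle += 1
    return max(done)

if __name__ == "__main__":
    print("in-order cycles:    ", in_order_cycles(PROGRAM))
    print("out-of-order cycles:", out_of_order_cycles(PROGRAM))
```

In this toy program the in-order machine sits behind the add that is waiting on the load, while the out-of-order machine slips the three independent adds into the load's shadow. The gap between the two counts grows with memory latency, which is the whole argument for paying the area and power cost of out-of-order scheduling.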

As I mentioned in my original Atom article in 2008, Intel was committed to using in-order cores for this family for the next 5 years. It’s safe to assume that at some point, when transistor geometries get small enough, we’ll see Intel revisit this fundamental architectural decision. In fact, ARM has already gone out of order with its Cortex A9 CPU.

The Bonnell design was the first to implement Intel’s 2 for 1 rule. Any feature included in the core had to increase performance by 2% for every 1% increase in power consumption. That design philosophy has since been embraced by the entire company. Nehalem was the first to implement the 2 for 1 rule on the desktop.
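As a rough sketch of how the 2 for 1 rule works as a gate, the check below accepts a feature only if its performance gain is at least twice its power cost. The function name and the example percentages are hypothetical; Intel has not published how it measured either quantity, so treat this as arithmetic on the rule as stated above and nothing more.

```python
def passes_two_for_one(perf_gain_pct, power_cost_pct):
    """Return True if a feature buys at least 2% performance per 1% power.

    Both arguments are percentages relative to the baseline core.
    A feature that costs no extra power passes as long as it does not
    reduce performance (an assumption; the rule as stated only covers
    features that increase power).
    """
    if power_cost_pct <= 0:
        return perf_gain_pct >= 0
    return perf_gain_pct >= 2 * power_cost_pct

if __name__ == "__main__":
    # Hypothetical candidate features: (name, perf gain %, power cost %)
    candidates = [
        ("feature A", 4.0, 2.0),   # exactly 2:1, accepted
        ("feature B", 3.0, 2.0),   # 1.5:1, rejected
        ("feature C", 1.0, 0.0),   # free performance, accepted
    ]
    for name, perf, power in candidates:
        verdict = "keep" if passes_two_for_one(perf, power) else "drop"
        print(f"{name}: +{perf}% perf for +{power}% power -> {verdict}")
```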

What emerged was a dual issue, in-order architecture. The first of its kind from Intel since the original Pentium microprocessor. Intel has learned a great deal since 1993, so reinventing the Pentium came with some obvious enhancements.

The easiest was SMT, or as most know it, Hyper-Threading. Five years ago we were still arguing about the merits of single vs. dual core processors; today virtually all workloads are at least somewhat multithreaded. SMT vastly improves efficiency if you have multithreaded code, so Hyper-Threading was a definite shoo-in.

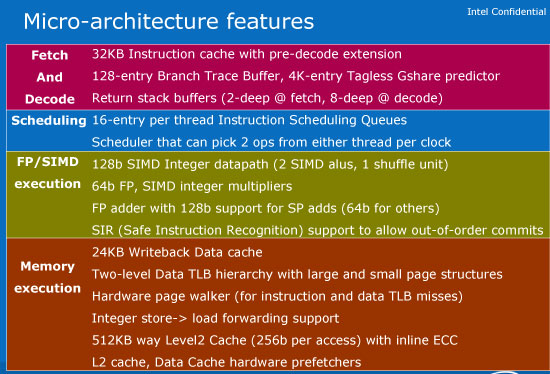

Other enhancements include Safe Instruction Recognition (SIR) and macro-op execution. SIR allows conditional out-of-order execution depending on whether the right group of instructions appears. Macro-op execution, on the other hand, fuses x86 instructions that perform related ops (e.g. load-op-store, load-op-execute) so they go down the pipeline together rather than independently. This increases the effective width of the machine and improves performance (as well as power efficiency).

Features like hardware prefetchers are present in Bonnell but absent from the original Pentium. And the caches are highly power optimized.

Bonnell refers to the core itself, but when paired with an L2 cache and FSB interface it became Silverthorne - the CPU in the original Atom. For more detail on the Atom architecture be sure to look at my original article.

67 Comments

CSMR - Wednesday, May 5, 2010

Agreed. Intel needs a process advantage to beat ARM with x86. (Notwithstanding the software pain of transitioning to x86). But it actually doesn't have it. They are roughly on par in this segment, Intel leading by maybe a few months. http://channel.hexus.net/content/item.php?item=225...

hyvonen - Wednesday, May 5, 2010

Sorry, but Intel is ahead way more than two months. Intel's 32nm process is better from performance/power point of view than 28nm bulk processes from others. Relying on numbers such as 32nm and 28nm to figure out which one is better is like using only CPU clock frequency numbers to determine which CPU is fastest.

Oh, and since Intel's 32nm products started shipping in the beginning of this year, Intel is roughly a year ahead... maybe more.

metafor - Wednesday, May 5, 2010

Yes and no. The 32nm shipping is the high-performance (high leakage) one used in desktop/laptop processors and also the current Atom. For a smartphone, that simply won't do.

The 45nm low-leakage process they used for Moorestown is new territory for Intel and in that respect, they are behind TSMC. While the current bulk silicon 45nm isn't faster than Intel's metal gate 45nm, it's a lot less leaky in terms of power. I would guess it'll take Intel 2 iterations or so before they have leakage down to the point of being competitive. But they have performance going for them.

hyvonen - Wednesday, May 5, 2010

Yeah; first iteration: 45nm low-power process. Second iteration: 32nm low-power process.

TSMC is stuck, and won't be able to come up with a low-power process beyond the current one for a couple of years. 40nm is in trouble, 32nm is toast. Good luck with making anything below 32nm "low power" in any sense of the word.

LuxZg - Wednesday, May 5, 2010

Ok, so this should be x86 CPU. So will the "tablet version" support normal Windows 7 OS or something similar? That's my only question.. I don't expect Win 7 on smartphone, but unless we have 100% software compatibility "x86 everywhere" won't mean much to people (except to Intel).

iwodo - Wednesday, May 5, 2010

It looks great, If Intel could give Apple some VERY good deal i guess Apple might take it.

I cant wait for the 32nm Medfield.

But Apple using it would means no more surprise in terms of Hardware.....

iwodo - Wednesday, May 5, 2010

After reading, I still do not understand the idea why Apple needs to buy other Chip Maker. If Morrestown is this good, and Medfield is much better. ( Apple should know it well before hand ) why spend money.

Intel is making chips at volume much cheaper then Apple designing and making their own. Hardware CPU dont differentiate the product. Outlook and Software does.

WaltFrench - Sunday, May 9, 2010

“…I still do not understand the idea why Apple needs to buy other Chip Maker. If Morrestown is this good, and Medfield is much better.”

I think you answered your own question. Apple, who probably had some inkling of Intel's plans, has been plowing ahead with proprietary silicon. They must think that for the next couple of years, anyway, they're better off with ARM-based designs, tweaked in-house and sent to whatever foundry gives them the capability they need.

Can anybody estimate the number of Atom-class chips Intel sells? The general estimates are that ARM designs go into a billion devices per year, and Apple is probably thinking that they'll move 50 or 100 million per year. Intel would appear to have a serious resource/investment challenge, in addition to the business challenge of talking people into abandoning ARM.

Visual - Wednesday, May 5, 2010

Let me see if I get this straight... this is a x86 system. And it will NOT run standard x86 OSes or binaries?

If so, the developers of this are complete idiots.

It must definitely have the ability to run normal desktop windows apps at launch - either by running a full windows OS, maybe modded to make better use of small screen and no kbd, or at least by some wine-like layer. It must run dosbox with the dynamic core.

Else it being x86 is completely useless.

safcman84 - Wednesday, May 5, 2010

Windows 7 for Phones is hardly an established Smartphone OS. As this chip is targeted for Smartphones, then not having support for Windows is not an issue.

Besides, why would Intel support an OS that is not optimised for their CPU when it is touted as the most powerful smartphone solution? A non-optimised OS will make the chip look bad. If Intel supports Android devices (plus MeeGo and Moblin) suddenly get 2x the performance, with excellent battery life then Intel's decision not to support Windows based phones could force MS to optimise their OS for use on Moorestown, otherwise Windows based devices don't have a chance.

Intel have not said they will NEVER support windows devices, just that they dont at the moment cos the current iteration of Windows 7 for phones is unoptimised.

In addition, as someone who used Smartphone for use with work, I would happily deal with the inconvenience of having a slightly longer phone if I got 2x the performance for the same battery life.

If the theoretical performance proves true in practice then:

Android + Moorestown = Yes please