AnandTech 2010 Server Upgrade: The CPUs

by Anand Lal Shimpi on August 30, 2010 6:54 PM EST - Posted in IT Computing, CPUs, Intel

With our 2010 server upgrade we're doing more than just replacing hardware; we're moving to a fully virtualized server environment. We're constructing two private clouds, one for our heavy database applications and one for everything else. The point of creating two independent clouds is to equip them with different levels of hardware: more memory/IO for the db cloud and something a bit more reasonable for the main cloud. Within each cloud we're looking to completely duplicate all hardware to make our environment much more manageable.

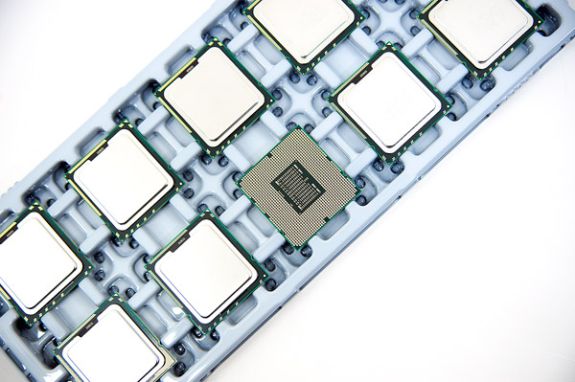

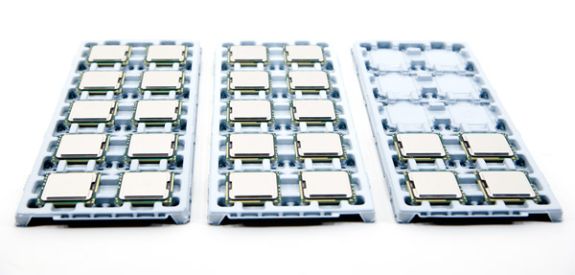

The first hardware we got in for the upgrade was our CPUs. We're moving from a 28-server setup to a 12-server environment. Each server has two CPU sockets and we're populating them with Intel Xeon L5640s.

The L5640 is a 32nm Westmere processor with 6-cores/12MB L3 per chip. The L indicates a lower voltage part. The L5640 carries a 60W TDP thanks to its 2.26GHz clock speed. We're mostly power constrained in our racks so saving on power is a top priority.

Each server will have two of these chips, for a total of 12 cores/24 threads per server. We've reviewed Intel's Xeon X5670 as well as the L5640 in particular. As Johan concluded in his review, the L5640 makes sense for us as we have hard power limits at the rack level and are charged extra for overages.
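As a rough sketch of the arithmetic above, the per-server and fleet-wide totals can be worked out from the figures quoted in this article (12 servers, two sockets each, 6 cores and a 60W TDP per L5640). The constant and function names are illustrative, two threads per core is inferred from the 12-core/24-thread figure, and TDP is a thermal design number rather than measured draw, so the wattage is only a loose CPU-only ceiling and not a rack power estimate.

```python
# Figures quoted in the article; all names here are illustrative.
SERVERS = 12
SOCKETS_PER_SERVER = 2
CORES_PER_CPU = 6
THREADS_PER_CORE = 2      # assumption: inferred from 12 cores / 24 threads
TDP_WATTS_PER_CPU = 60    # rated TDP, not measured power draw


def cluster_totals(servers=SERVERS):
    """Return aggregate CPU, core, thread and CPU-TDP figures."""
    cpus = servers * SOCKETS_PER_SERVER
    cores = cpus * CORES_PER_CPU
    threads = cores * THREADS_PER_CORE
    return {
        "cpus": cpus,
        "cores": cores,
        "threads": threads,
        "cpu_tdp_watts": cpus * TDP_WATTS_PER_CPU,
    }


if __name__ == "__main__":
    print("per server:", cluster_totals(servers=1))
    print("all servers:", cluster_totals())
```

Under these assumptions a single server comes out at 12 cores and 24 threads, matching the figure above, and the full 12-server environment at 144 cores and 288 threads.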

There's not much else to show off at this point but over the coming days/weeks you'll see more documentation from our upgrade process.

Hopefully this will result in better performance for all of you as well as more uptime as we can easily scale hardware within our upcoming cloud infrastructure.

67 Comments

7Enigma - Tuesday, August 31, 2010 - link

I'd also find that very interesting....

Anand Lal Shimpi - Tuesday, August 31, 2010 - link

I'll try and gather that information for our existing infrastructure. It's not too surprising but the number one issue we've had has been HDD failure, followed by PSU failure. We've actually never had a cooling fan go bad surprisingly enough :-)

Take care,

Anand

judasmachine - Tuesday, August 31, 2010 - link

I want one, and by one I mean one set of two 6-cores totaling 24 threads....

Stuka87 - Tuesday, August 31, 2010 - link

What do you guys use so many servers for? I don't see this site getting *THAT* much traffic to require that kind of horsepower. But maybe I am wrong.

Syran - Tuesday, August 31, 2010 - link

They do currently have 3800 active members just on the forums right at this minute; that has to call for some fairly decent database calls just there.

brundlefly77 - Tuesday, August 31, 2010 - link

Just curious as to why you still manage your own physical servers at all, especially for a content website...there are so many options which are more cost and labor effective and which provide services you otherwise need to implement and manage yourself.

It never ceases to amaze me how much more powerful and flexible AWS is than managing bare hardware.

Zero capital expense, no hardware depreciation schedules for tax purposes, instant and virtually unlimited upscaling (and downscaling - when was the last time you saw a colo effectively cost-managed for downscaling?!), CDNs, video streaming, relational and search-optimized databases, point and click image snapshots & backups, unlimited storage, physical region redundancy - all managed better than most dedicated IT teams, and with no sysadmin costs, and all manageable from any web browser - !?

I always look at DropBox - a phenomenal service with extraordinary requirements. If they can run off AWS with that kind of reliability and performance, you have to wonder why you're paying a sysadmin $50+/hr to work a screwdriver in a server room at all.

Syran - Tuesday, August 31, 2010 - link

Personally, it's probably because it is what it is. Anandtech is a hardware web site, and one of the things they have enjoyed playing with is the server side of life. If they can afford it, it allows them to get their hands dirty building it as well. Many places don't have the boss working on setting up the servers for the clouds on their network, even in IT-focused shops.

klipprand - Tuesday, August 31, 2010 - link

Hi Anand,

I'm assuming (perhaps wrongly) that you'll be running ESX and consolidating further. Recent benchmarks showed quad socket Xeon systems outperforming dual socket systems by about 2.5x. Wouldn't it work even better then to run say 6, or maybe only 5 quad CPU servers? Just a thought.

Kelly

Syran - Tuesday, August 31, 2010 - link

My guess would be to allow them to maximize memory, which seems to be the true bane of any virtualized system. Our current bladecentre at work has 4 VM blades, running quad-core Nehalem Xeons in 2 sockets with 48GB of RAM each. Our blade CPU usage is normally in the neighborhood of 5-9% per blade, and typically about 55-59% memory usage.

mino - Tuesday, August 31, 2010 - link

For clustering there is a minimum count of servers necessary regardless of their performance.

So basically the lowest amount making any sense is 2xMGMT(FT) + 6xPROD(2x 3 per site).

Until you NEED more than 6 2S servers PER CLUSTER it makes no sense going for the big boxen.

Unless, of course, RAS is not a requirement.