Facebook's "Open Compute" Server tested

by Johan De Gelas on November 3, 2011 12:00 AM EST

The Facebook Server

In the basement of the Palo Alto, California headquarters, three Facebook engineers built Facebook's custom-designed servers, power supplies, server racks, and battery backup systems. The Facebook server had to be much cheaper than the average server, as well as more power efficient.

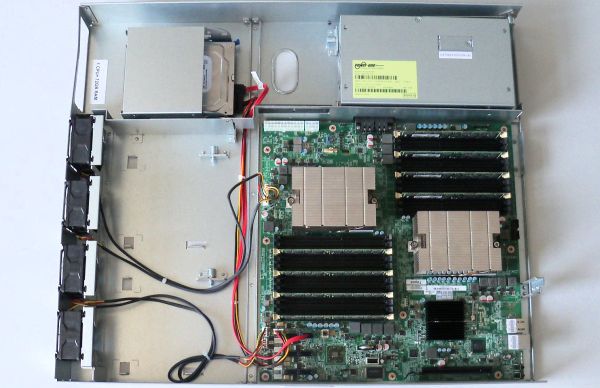

The first change they made was the chassis height, going for a 1.5U high design as a compromise between density and making the server easier to cool. 1.5U allows them to use taller heatsinks and larger (60mm), lower-RPM fans instead of the screaming 40mm energy hogs used in a 1U chassis. The result is that the fans consume only 2% to 4% of the total power, which is pretty amazing as we have seen 1U fans that can consume up to one third of the total system power. It seems that air cooling in the Open Compute 1.5U server is as efficient as in the best 3U servers.

At the same time, Facebook Engineering kept the chassis very simple, without any plastic. It makes the airflow through the server smoother and reduces weight. The bottom plate of one server serves as the top plate for the server beneath it.

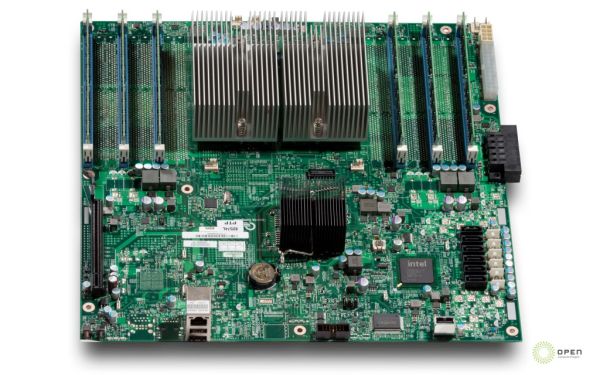

Facebook has designed an AMD and an Intel motherboard, both manufactured by Quanta. Much attention was paid to the efficiency of the voltage regulators (94% efficiency). The other trick was again to remove anything that was not absolutely necessary. These motherboards have no BMC, only two USB ports and two NIC ports, one expansion slot, and are headless (no video chip).

The only thing that an administrator can do remotely is "reboot over LAN". The idea is that if that does not help, the problem is in 99% of cases severe enough that you have to send an administrator to the server anyway.

The AMD servers are mostly used as Memcached servers, as the four memory channels of the AMD Magny-Cours Opteron 6100 support 12 DIMMs per CPU, or 24 DIMMs in total. That works out to 384GB of caching memory.
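The memory figures above can be checked with a few lines of arithmetic. This is only a sketch: the DIMM counts, channel count, and 384GB total come from the text, while the per-DIMM capacity and the DIMMs-per-channel value are inferred from those numbers rather than stated in the article.

```python
# Figures taken from the article text.
CPUS = 2                 # implied by "12 DIMMs per CPU, or 24 DIMMs in total"
DIMMS_PER_CPU = 12
CHANNELS_PER_CPU = 4
TOTAL_GB = 384

total_dimms = CPUS * DIMMS_PER_CPU

# Inferred values (assumptions, not stated in the article).
gb_per_dimm = TOTAL_GB // total_dimms
dimms_per_channel = DIMMS_PER_CPU // CHANNELS_PER_CPU

print(f"{total_dimms} DIMMs x {gb_per_dimm} GB = {total_dimms * gb_per_dimm} GB")
print(f"{dimms_per_channel} DIMMs per memory channel")
```

In other words, the quoted 384GB would be reached only if every slot holds a 16GB module.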

In contrast, the Facebook Open Compute Xeon servers only have six DIMM slots, as they are used for processing-intensive tasks such as the PHP "assembling" data servers.

67 Comments


iwod - Thursday, November 3, 2011 - link

And I am guessing Facebook has at least 10 times more than what is shown in that image.

DanNeely - Thursday, November 3, 2011 - link

Hundreds or thousands of times more is more likely. FB's grown to the point of building its own data centers instead of leasing space in other people's. Large data centers consume multiple megawatts of power. At ~100W/box, that's 5-10k servers per MW (depending on cooling costs); so that's tens of thousands of servers/data center and data centers scattered globally to minimize latency and traffic over longhaul trunks.

pandemonium - Friday, November 4, 2011 - link

I'm so glad there are other people out there - other than myself - that see the big picture of where these 'minuscule savings' go. :)

npp - Thursday, November 3, 2011 - link

What you're talking about is how efficient the power factor correction circuits of those PSUs are, and not how power efficient the units themselves are... The title is a bit misleading.

NCM - Thursday, November 3, 2011 - link

"Only" 10-20% power savings from the custom power distribution????

When you've got thousands of these things in a building, consuming untold MW, you'd kill your own grandmother for half that savings. And water cooling doesn't save any energy at all; it's simply an expensive and more complicated way of moving heat from one place to another.

For those unfamiliar with it, 480 VAC three-phase is a widely used commercial/industrial voltage in USA power systems, yielding 277 VAC line-to-ground from each of its phases. I'd bet that even those light fixtures in the data center photo are also off-the-shelf 277V fluorescents of the kind typically used in manufacturing facilities with 480V power. So this isn't a custom power system in the larger sense (although the server level PSUs are custom) but rather some very creative leverage of existing practice.
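The 277 V figure follows from the standard relation for a balanced three-phase wye system, where each phase voltage is the line-to-line voltage divided by the square root of three. A minimal check, assuming a balanced system with a grounded neutral (the variable names are illustrative only):

```python
import math

LINE_TO_LINE_V = 480.0  # nominal three-phase voltage from the comment above

# In a balanced wye system, each phase-to-neutral voltage is V_LL / sqrt(3).
phase_v = LINE_TO_LINE_V / math.sqrt(3)

print(f"{phase_v:.1f} V per phase")
```

The result rounds to the nominal 277 V rating quoted for the lighting and the server power supplies.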

Remember also that there's a double saving from reduced power losses: first from the electricity you don't have to buy, and then from the power you don't have to use for cooling those losses.
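The "double saving" and the earlier 5-10k servers per MW estimate can be sketched with one assumed parameter: a cooling overhead, expressed as extra facility watts per watt of server load. The overhead values below are assumptions chosen to reproduce the range quoted in the thread; they are not measured figures from the article.

```python
def servers_per_megawatt(watts_per_server: float, cooling_overhead: float) -> float:
    """Servers that fit in 1 MW of facility power.

    cooling_overhead is a hypothetical ratio: watts spent on cooling
    per watt consumed by the servers themselves.
    """
    facility_watts_per_server = watts_per_server * (1.0 + cooling_overhead)
    return 1_000_000 / facility_watts_per_server


def facility_watts_saved(server_watts_saved: float, cooling_overhead: float) -> float:
    """Total saving: the power not drawn plus the cooling no longer needed for it."""
    return server_watts_saved * (1.0 + cooling_overhead)


# ~100 W per box, as estimated in the thread.
best_case = servers_per_megawatt(100, cooling_overhead=0.0)   # no cooling cost
worst_case = servers_per_megawatt(100, cooling_overhead=1.0)  # cooling equals server load
print(f"{worst_case:.0f} to {best_case:.0f} servers per MW")

# Saving 10 W per server with an assumed 50% cooling overhead.
print(f"{facility_watts_saved(10, cooling_overhead=0.5):.1f} W saved per server")
```

Under these assumptions, every watt removed at the server is worth more than a watt at the meter, which is why savings that look small per box matter at data center scale.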

npp - Thursday, November 3, 2011 - link

I don't remember arguing that 10% power savings are minor :) Maybe you should've posted your thoughts as a regular post, and not a reply.

JohanAnandtech - Thursday, November 3, 2011 - link

Good post but probably meant to be a reply to erwinerwinerwin ;-)

NCM - Thursday, November 3, 2011 - link

Johan writes: "Good post but probably meant to be a reply to erwinerwinerwin ;-)"

Exactly.

tiro_uspsss - Thursday, November 3, 2011 - link

Is it just me, or does placing the Xeons *right* next to each other seem like a bad idea in regards to heat dissipation? :-/

I realise the aim is performance/watt but, ah, is there any advantage, power usage-wise, if you were to place the CPUs further apart?

JohanAnandtech - Thursday, November 3, 2011 - link

No. The most important rule is that the warm air of one heatsink should not enter the stream of cold air of the other. So placing them next to each other is the best way to do it, placing them serially the worst.

Placing them further apart will not accomplish much IMHO. Most of the heat is drawn away to the back of the server; the heatsinks do not get very hot. You also lower the airspeed between the heatsinks.