The Xeon E5-2600: Dual Sandy Bridge for Servers

by Johan De Gelas on March 6, 2012 9:27 AM EST

Posted in: IT Computing, Virtualization, Xeon, Opteron, Cloud Computing

Intel's Sandy Bridge architecture was introduced to desktop users more than a year ago. Server parts, however, have been much slower to arrive, as it has taken Intel that long to transpose this new engine into a Xeon processor. Although the core architecture is the same, the system architecture is significantly different from that of the LGA-1155 CPUs, making this CPU quite a challenge, even for Intel. After completing that work late last year, Intel first introduced the resulting design as the six-core high-end Sandy Bridge-E desktop CPU, and has since been preparing SNB-E for use in Xeon processors. This has taken a few more months, but the wait for Xeon users is finally over: today Intel is launching its first SNB-E based Xeons.

Compared to its predecessor, the Xeon X5600, the Xeon E5-2600 offers a number of improvements:

A completely improved core, as described in Anand's article. For example, the µop cache lowers the pressure on the decoding stages and lowers power consumption, killing two birds with one stone. Other core improvements include an improved branch prediction unit and a more efficient Out-of-Order backend with larger buffers.

A vastly improved Turbo 2.0. The CPU can briefly go beyond the TDP limits, and when returning to the TDP limit, the CPU can sustain a higher "steady-state" clock speed. According to Intel, enabling Turbo allows the Xeon E5 to perform 14% better in the SAP S&D 2-tier test. This compares well with the Turbo inside the Xeon 5600, which could only boost performance by 4% in the SAP benchmark.

Support for AVX Instructions combined with doubling the load bandwidth should allow the Xeon to double the peak floating point performance compared to the Xeon "Westmere" 5600.
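As a rough illustration of the doubling claim, theoretical peak floating point throughput can be estimated as cores × clock × FLOPs per cycle. The sketch below assumes the commonly cited figures of 4 double-precision FLOPs per cycle per core for 128-bit SSE (Westmere) and 8 for 256-bit AVX (Sandy Bridge). Those per-cycle figures, the function name, and the example core count and clock are assumptions for illustration, not numbers taken from Intel's launch material.

```python
def peak_gflops(cores: int, clock_ghz: float, flops_per_cycle: int) -> float:
    """Theoretical peak GFLOP/s = cores * clock (GHz) * FLOPs per cycle per core."""
    return cores * clock_ghz * flops_per_cycle


# Assumed double-precision FLOPs per cycle per core
# (one vector add plus one vector multiply issued each cycle).
SSE_DP_FLOPS_PER_CYCLE = 4  # 128-bit vectors hold 2 doubles
AVX_DP_FLOPS_PER_CYCLE = 8  # 256-bit vectors hold 4 doubles

# Hypothetical like-for-like comparison: same core count and clock,
# so only the vector width differs.
westmere = peak_gflops(cores=6, clock_ghz=2.2, flops_per_cycle=SSE_DP_FLOPS_PER_CYCLE)
sandy_bridge = peak_gflops(cores=6, clock_ghz=2.2, flops_per_cycle=AVX_DP_FLOPS_PER_CYCLE)

print(f"Westmere-style core:     {westmere:.1f} GFLOP/s")
print(f"Sandy Bridge-style core: {sandy_bridge:.1f} GFLOP/s")
print(f"Ratio: {sandy_bridge / westmere:.1f}x")
```

This is a peak figure only. Real code reaches it only when it is vectorized for AVX and the loads can keep the wider units fed, which is why the doubled load bandwidth matters.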

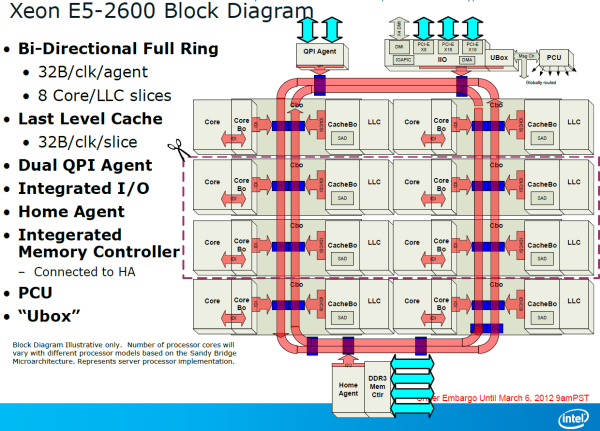

A bi-directional 32 byte ring interconnect that connects the 8 cores, the L3-cache, the QPI agent and the integrated memory controller. The ring replaces the individual wires from each core to the L3-cache. One of the advantages is that the wiring to the L3-cache can be simplified and it is easier to make the bandwidth scale with the number of cores. The disadvantage is that the latency is variable: it depends on how many hops a certain piece of data inside the L3-cache must cross before it ends up at the right core.
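The variable latency can be pictured with a toy model of a bi-directional ring, where a request travels in whichever direction is shorter. The sketch below is only an illustration: the stop count of 8 (one stop per core and L3 slice) is a hypothetical simplification, since the real ring also hosts the QPI agent, memory controller and PCIe agent, and the cycle cost per hop is not modeled.

```python
def ring_hops(src: int, dst: int, stops: int) -> int:
    """Hops between two stops on a bi-directional ring, taking the shorter direction."""
    clockwise = (dst - src) % stops
    return min(clockwise, stops - clockwise)


STOPS = 8  # hypothetical: one ring stop per core/L3 slice pair

# Hop count from the core at stop 0 to each L3 slice on the ring.
hops = [ring_hops(0, slice_id, STOPS) for slice_id in range(STOPS)]

print("hops per slice:", hops)
print("worst case:", max(hops))
print("average:", sum(hops) / STOPS)
```

Because the ring is bi-directional, the worst case is half the ring rather than the full circumference, which keeps the latency spread smaller than a one-way ring would.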

A faster QPI: revision 1.1, which delivers up to 8 GT/s instead of 6.4 GT/s (Westmere).

Lower latency to PCIe devices. Intel integrated a PCIe 3.0 I/O subsystem inside the die, which sits on the same bi-directional 32 byte ring as the cores. PCIe 3.0 runs at 8 GT/s (PCIe 2.0: 5 GT/s), and the encoding has less overhead. As a result, PCIe 3.0 can deliver up to 1 GB/s per lane in each direction, which is roughly twice as much as PCIe 2.0.
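The per-lane figure follows from the transfer rate and the line encoding. A minimal sketch of that arithmetic is shown below, using the standard encodings for each generation (8b/10b for PCIe 2.0, 128b/130b for PCIe 3.0); the function name is made up for illustration, and protocol overhead above the line encoding (packet headers, flow control) is ignored.

```python
def lane_gbytes_per_s(gt_per_s: float, payload_bits: int, encoded_bits: int) -> float:
    """Usable GB/s per lane, per direction: raw rate * encoding efficiency / 8 bits per byte."""
    return gt_per_s * payload_bits / encoded_bits / 8


pcie2 = lane_gbytes_per_s(5.0, 8, 10)     # 8b/10b encoding: 20% overhead
pcie3 = lane_gbytes_per_s(8.0, 128, 130)  # 128b/130b encoding: about 1.5% overhead

print(f"PCIe 2.0: {pcie2:.3f} GB/s per lane, per direction")
print(f"PCIe 3.0: {pcie3:.3f} GB/s per lane, per direction")
print(f"Ratio: {pcie3 / pcie2:.2f}x")
print(f"PCIe 3.0 x16 slot: {16 * pcie3:.2f} GB/s per direction")
```

The raw signaling rate rises by only 60% (5 to 8 GT/s); the rest of the near-doubling comes from the more efficient encoding.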

Removing the separate I/O hub lowered PCIe latency by 25% on average, according to Intel. If you only access the local memory, Intel measured 32% lower read latency.

The access latency to PCIe I/O devices is not only significantly lower, but Intel's Data Direct I/O Technology also allows PCIe NICs to read and write directly to the L3-cache instead of to the main memory. In extremely bandwidth constrained situations (using 4 InfiniBand controllers or similar), this lowers power consumption and reduces latency by another 18%, which is a boon to HPC users with 10G Ethernet or InfiniBand NICs.

The new Xeon also supports faster DDR3-1600: up to 2 DIMMs per channel can run at 1600 MT/s.
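To put the memory speed in perspective, theoretical peak bandwidth per channel is the transfer rate multiplied by the 8-byte (64-bit) width of a DDR3 channel. The sketch below works that out; the comparison against DDR3-1333 and the figure of four channels per socket are assumptions brought in for illustration, as neither is stated above.

```python
def channel_gbytes_per_s(mega_transfers_per_s: float, bus_bytes: int = 8) -> float:
    """Theoretical peak GB/s of one DDR3 channel: MT/s * bytes per transfer / 1000."""
    return mega_transfers_per_s * bus_bytes / 1000


ddr3_1600 = channel_gbytes_per_s(1600)
ddr3_1333 = channel_gbytes_per_s(1333)  # assumed comparison point, not from the text

CHANNELS_PER_SOCKET = 4  # assumption: channel count is not stated in the text

print(f"DDR3-1600: {ddr3_1600:.1f} GB/s per channel")
print(f"DDR3-1333: {ddr3_1333:.1f} GB/s per channel")
print(f"DDR3-1600 across {CHANNELS_PER_SOCKET} channels: "
      f"{ddr3_1600 * CHANNELS_PER_SOCKET:.1f} GB/s per socket")
```

These are peak numbers; sustained bandwidth in a real system is lower and depends on the access pattern and DIMM population.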

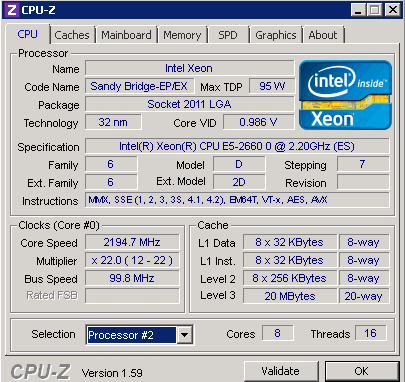

Last but certainly not least: 2 additional cores and up to 67% more L3 cache (20 MB instead of 12 MB). Even with 8 cores and a PCIe agent (40 lanes), the Xeon E5 still runs at 2.2 GHz within a 95W TDP. That is pretty impressive when compared with both the Opteron 6200 and Xeon 5600.

81 Comments

MrSpadge - Tuesday, March 6, 2012

Put some sarcasm tags in there to save some people from getting confused...

cynic783 - Tuesday, March 6, 2012

definitely sarcastic. i was actually surprised not to see any fanbois so I thought I'd pretend

badjohny - Tuesday, March 6, 2012

I have no doubt these chips or something similar will end up in the new mac pros. Who are in a very bad need of a refresh.

Shuxclams - Tuesday, March 6, 2012

Looking at a complete visualization transformation in our server room, looks like the decision was made for us as far as architecture. Wow....

TeXWiller - Tuesday, March 6, 2012

<quote>The new Xeon also supports faster DDR-3 1600. Contrary to the Interlagos Opteron which can only support this memory speed with one DIMM per channel</quote>

Interlagos supports memory up to DDR3-1600 using two single rank memory modules, or one single rank and one double rank module if using registered memory, and two single rank modules if using unbuffered memory. DDR3-1866 is supported on a single load-reduced registered, or on a single unbuffered module per channel. It depends on the board manufacturer and more importantly, it can be all read on the manual, so to speak.

davegraham - Tuesday, March 6, 2012

AMD Interlagos can support more than 1 DDR3-1600 ECC/REG dimm per channel. I run 8 on a single socket 6276 and it works at the rated speed.

TeXWiller - Wednesday, March 7, 2012

Too bad these kinds of errors in the articles are not usually fixed.

JohanAnandtech - Wednesday, March 7, 2012

I will double check.

meloz - Tuesday, March 6, 2012

Just wanted to congratulate Johan on a job well done. Very thorough analysis, Intel have achieved a very dominant position with this new platform and this is reflected in pricing of their processors as well! AMD was already a sub 10% niche (with a market share to mirror) in the data center, now even that niche has evaporated.

New Opterons (based on Piledriver) might decrease the performance gap to Intel under certain benchmarks, but I doubt they will beat Intel. Intel has plenty of SKUs above the quickest AMD Opterons to adjust prices and kill any new challenge from AMD, instantly.

JohanAnandtech - Wednesday, March 7, 2012

Thanks! Although I hope Intel gets a bit more competition though.