Haswell at IDF 2012: 10W is the New 17W

by Anand Lal Shimpi on September 7, 2012 2:52 PM EST. Posted in CPUs, Intel, Haswell, Trade Shows, IDF 2012

Earlier this week Intel let a little bit of information leak about Haswell, which is expected to be one of the main focal points of next week's Intel Developer Forum. Haswell is a very important architecture for Intel, as it aims lower on the TDP spectrum in order to head off any potential threat from ARM moving up the chain. Haswell still remains very separate from the Atom line of processors (it should still be tangibly faster than IVB), but as ARM has aspirations of higher-performance chips, Intel needed to ensure that its position at lower power points wasn't being threatened.

The main piece of news Intel supplied was that the TDP target for Haswell ULV (Ultra Low Voltage) parts is now 10W, down from 17W in Sandy and Ivy Bridge. The standard voltage Haswell parts will drop in TDP as well, but it's not clear by how much. Intel originally announced that Haswell would be focused on the 15 - 25W range, so it's entirely possible that standard voltage parts will fall in that range with desktop Haswell going much higher.

Intel also claims that future Haswell revisions may push even lower down the TDP chain. At or below 10W it should be possible to cram Haswell into something the thickness of a 3rd gen iPad. The move to 14nm in the following year will make that an even more desirable reality.

Although Haswell's design is complete and testing is ahead of schedule, I wouldn't expect to see parts in the market until Q2 2013.

Early next year we'll see limited availability of 10W Ivy Bridge ULV parts. These parts will be deployed in some very specific products, likely in the convertible Ultrabook space, and they won't be widely available. Any customer looking to get a jump start on Haswell might work with Intel to adopt one of these.

The limited availability of 10W ULV Ivy Bridge parts does highlight another major change with Haswell: Intel will be working much more closely with OEMs than it has in the past to bring Haswell designs to market. Gone are the days when Intel could just release CPUs into the wild and expect its partners to do all of the heavy lifting. Similar to Intel's close collaboration with Apple on projects like the first MacBook Air, Intel will have to work very closely with its PC OEMs to bring the most exciting Haswell designs to market. It's necessary not just because of the design changes that Haswell brings, but also to ensure that these OEMs are as competitive as possible in markets that are heavily dominated by Apple (e.g. the tablet market).

Don't expect any earth-shattering increases in CPU performance over Ivy Bridge, although I've heard that gains in the low double digits are possible. The big gains will come from the new GPU and on-package L4 cache. Broadwell (14nm, 2014) will bring another healthy set of GPU performance increases, but on the graphics side the transition to Haswell will likely deliver a bigger improvement than we saw from IVB.

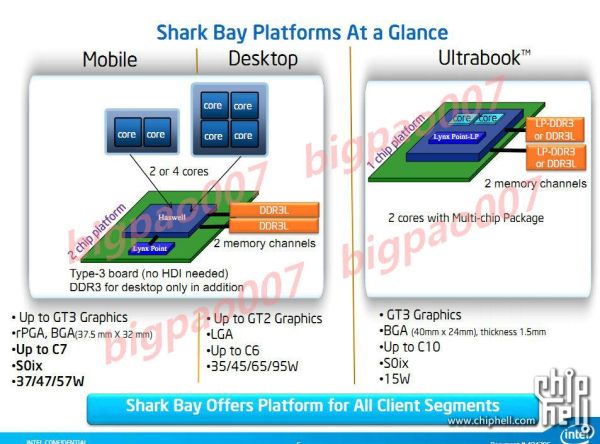

Configurable TDP and connected standby are both supported. We'll also see both single and dual-chip platforms (SoC with integrated IO hub or SoC with off-chip IO hub), which we've known for a while. We'll get more architectural details next week, as well as information about all of the new core and package power states. Stay tuned.

43 Comments


mrdude - Saturday, September 8, 2012

I've been waiting for some Haswell news and this sounds great :) I'm sure many people are disappointed that HW isn't going to bring that 20%+ performance bump but, frankly, people have way more CPU than they need. Recompiling for modern ISAs and software optimization potentially brings far more gains than any IPC and clock speed gains Intel can manage to squeeze out. The fact that this isn't happening is another matter, though.

I'm interested to see what the performance of the new on-die GPU will bring. The current Ultrabooks are just abysmal with only a few standouts, and that is mainly due to the other goodies -- the Zenbook with its great IPS panel and the Samsung Series 9s with their great panel as well. I'm hoping HW is able to inject some more sense into the platform with lower GPU throttling and better GPU performance. Maturation of the 22nm node and some price decreases wouldn't hurt either ;)

UpSpin - Sunday, September 9, 2012

On the one hand it's a bit disappointing that Haswell won't be worth an upgrade for CPU speed addicts.

But let's face the current trend:

People want ultraportable devices with long battery life, which ARM rules at the moment. Smartphones and tablets are a huge market, probably much larger than the PC business. So Intel has to compete in this low power sector to stay alive.

People want high resolution displays, but for that you need a powerful GPU, which Intel doesn't have. So Intel must improve GPU performance in order to drive high resolution displays with the IGP.

More and more programs switch to the GPU for parallel processing tasks to speed them up by orders of magnitude, which is impossible with traditional CPU improvements. AMD understood this with their APUs, Intel must follow.

An increase in clock frequency causes higher power consumption. Multiple cores that can be disabled individually, however, are faster in sum and consume less power at idle. So it's better to integrate many smaller cores than a few large ones if power consumption is important, which it is.

Thus Intel will most probably follow AMD, stop trying to release faster and faster CPUs, and focus on parallel computing and GPU acceleration.

ARM, on the other hand, does everything at the same time. They have the big.LITTLE tech to save power, the A15 to improve per-core performance, they develop 64-bit cores and constantly scale up their GPU performance. ARM also has the advantage that many companies further develop ARM processors.

ARM products are sold in what is currently the most active market, and Intel offers no real competition there. On the other hand, people won't upgrade their old computers because Haswell doesn't offer any visible advantage except reduced power consumption.

If Win 8 on Intel tablets isn't a success, Intel will face a hard time.

azazel1024 - Monday, September 10, 2012

I doubt it. Intel ships tons of processors in new systems that people buy all the time who are upgrading from much older systems. There have been rare times that Intel has released something that is such an upgrade that whole scads of enthusiasts abandoned their last generation processor and adopted the new one.

Gains over most generations have been in the 10-20% range in the last few releases. In large part it has less to do with no competition from AMD, though that helps, and more to do with focusing on things like power consumption and iGPU.

Yeah, I want better CPU performance as well, as do most people, but CPU performance IS good enough for 90% of consumers out there, while iGPU performance is NOT good enough for 90% of consumers. Especially in the mobile sector if you want even higher resolution displays. As mentioned earlier, combine that with some of the things you can do with GPGPU computing alongside the CPU, and the way to go, for now, is to concentrate most of your effort on lowering package power consumption as well as improving the GPU. If you can improve CPU performance along the way, then great, but it isn't critical.

Besides, lower power consumption, increased GPU performance and increased CPU performance sounds good to me in the mobile space BIG time. For a desktop system lower power consumption and increased CPU performance is nice (discrete GPU there just about always). I can always use quieter and cooler running desktop machines. Alas, such is my life, where I must wait 2 or 3 years between CPU generations to get a "worthwhile" upgrade, which could still mean a 25-40% increase in performance over 2-3 generations as well as probably lower power consumption.

My games don't run slow because my 3570 running at 4GHz can't cope. PS is just snappy as can be. My transcodes are pretty fast too. Oh, I'd love them to be faster, but going from 30s to export a dozen RAW files to JPEGs to 15s doesn't change my life significantly. Going from a 2hr BR->1080p transcode to 1hr is nice, but doesn't change my life that dramatically (not until we are getting into taking a few minutes to reencode a video file, then it might change my life).

I went from a C2D E7500 at ~3.1GHz to a 3570 at 4GHz (4.2 turbo). Transcode performance improved 5x on average! Ballpark performance improvements in photo editing and some other stuff too that is heavily multithreaded. The ultrabook with a 3517u in it manages about 1.4-1.8x faster performance than my old C2D desktop does as well! I am pretty happy overall with CPU performance. Sure, I want improvements, but they stopped being game changers for me.

Now once 14nm is reached, let's start talking maybe hexa or octo-core for mainstream desktops and laptops please. That could be a bit of a game changer too in heavily threaded stuff compared to current day improvements.