The NVIDIA GeForce GTX 660 Review: GK106 Fills Out The Kepler Family

by Ryan Smith on September 13, 2012 9:00 AM EST

As our regular readers are well aware, NVIDIA’s 28nm supply constraints have proven to be a constant thorn in the side of the company. Since Q2 the message in financial statements has been clear: NVIDIA could be selling more GPUs if they had access to more 28nm capacity. As a result of this capacity constraint they have had to prioritize the high-profit mainstream mobile and high-end desktop markets above other consumer markets, leaving holes in their product lineups. In the intervening time they have launched products like the GK104-based GeForce GTX 660 Ti to help bridge that gap, but even that still left a hole between $100 and $300.

Now nearly 6 months after the launch of the first Kepler GPUs – and 9 months after the launch of the first 28nm GPUs – NVIDIA’s situation has finally improved to the point where they can finish filling out the first iteration of the Kepler GPU family. With GK104 at the high-end and GK107 at the low-end, the task of filling out the middle falls to NVIDIA’s latest GPU: GK106.

As given away by the model number, GK106 is designed to fit in between GK104 and GK107. GK106 offers a more modest collection of functional blocks in exchange for a smaller die size and lower power consumption, making it a perfect fit for NVIDIA’s mainstream desktop products. Even so, we have to admit that until a month ago we weren’t quite sure whether there would even be a GK106 since NVIDIA has covered so much of their typical product lineup with GK104 and GK107, leaving open the possibility of using those GPUs to also cover the rest. So the arrival of GK106 comes as a pleasant surprise amidst what for the last 6 months has been a very small GPU family.

GK106’s launch vehicle will be the GeForce GTX 660, the central member of NVIDIA’s mainstream video card lineup. GTX 660 is designed to come in between GTX 660 Ti and GTX 650 (also launching today), bringing Kepler and its improved performance down to the same $230 price range that the GTX 460 launched at nearly two years ago. NVIDIA has had a tremendous amount of success with the GTX 560 and GTX 460 families, so they’re looking to maintain this momentum with the GTX 660.

| | GTX 660 Ti | GTX 660 | GTX 650 | GT 640 |
|---|---|---|---|---|
| Stream Processors | 1344 | 960 | 384 | 384 |
| Texture Units | 112 | 80 | 32 | 32 |
| ROPs | 24 | 24 | 16 | 16 |
| Core Clock | 915MHz | 980MHz | 1058MHz | 900MHz |
| Shader Clock | N/A | N/A | N/A | N/A |
| Boost Clock | 980MHz | 1033MHz | N/A | N/A |
| Memory Clock | 6.008GHz GDDR5 | 6.008GHz GDDR5 | 5GHz GDDR5 | 1.782GHz DDR3 |
| Memory Bus Width | 192-bit | 192-bit | 128-bit | 128-bit |
| VRAM | 2GB | 2GB | 1GB/2GB | 2GB |
| FP64 | 1/24 FP32 | 1/24 FP32 | 1/24 FP32 | 1/24 FP32 |
| TDP | 150W | 140W | 64W | 65W |
| GPU | GK104 | GK106 | GK107 | GK107 |
| Transistor Count | 3.5B | 2.54B | 1.3B | 1.3B |
| Manufacturing Process | TSMC 28nm | TSMC 28nm | TSMC 28nm | TSMC 28nm |
| Launch Price | $299 | $229 | $109 | $99 |

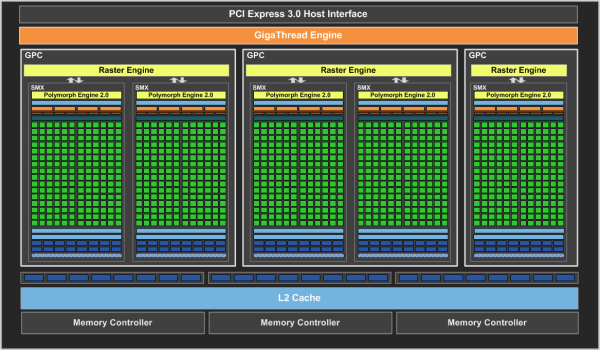

Diving right into the guts of things, the GeForce GTX 660 will be utilizing a fully enabled GK106 GPU. A fully enabled GK106 in turn is composed of 5 SMXes – arranged in an asymmetric 3 GPC configuration – along with 24 ROPs, three 64-bit memory controllers, and 384KB of L2 cache. Design-wise this basically splits the difference between the 8 SMX + 32 ROP GK104 and the 2 SMX + 16 ROP GK107. This also means that GTX 660 ends up looking a great deal like a GTX 660 Ti with fewer SMXes.

Meanwhile the reduction in functional units has had the expected impact on die size and transistor count, with GK106 packing 2.54B transistors into 214mm². This also means that GK106 is only 2mm² larger than AMD’s Pitcairn GPU, which sets up a very obvious product showdown.

In breaking down GK106, it’s interesting to note that this is the first time since 2008’s G9x family of GPUs that NVIDIA’s consumer GPU has had this level of consistency. The 200 series was split between 3 different architectures (G9x, GT200, and GT21x), and the 400/500 series was split between Big Fermi (GF1x0) and Little Fermi (GF1x4/1x6/1x8). The 600 series on the other hand is architecturally consistent from top to bottom in all respects, which is why NVIDIA’s split of the GTX 660 series between GK104 and GK106 makes no practical difference. As a result GK104, GK106, and GK107 all offer the same Kepler family features – such as the NVENC hardware H.264 encoder, VP5 video decoder, FastHDMI support, TXAA anti-aliasing, and PCIe 3.0 connectivity – with only the number of functional units differing.

As GK106’s launch vehicle, GTX 660 will be the highest performing implementation of GK106 that we expect to see. NVIDIA is setting the reference clocks for the GTX 660 at 980MHz for the core and 6GHz for the memory, second only to the GTX 680 in core clockspeed and still the same common 6GHz memory clockspeed we’ve seen across all of NVIDIA’s GDDR5 desktop Kepler parts thus far. Compared to GTX 660 Ti this means that on paper GTX 660 has around 76% of the shading and texturing performance of the GTX 660 Ti, 80% of the rasterization performance, 100% of the memory bandwidth, and a full 107% of the ROP performance.
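For readers who want to check the on-paper comparison, the sketch below recomputes the shading, texturing, ROP, and memory bandwidth ratios from the specification table above. It assumes theoretical throughput scales as unit count times base core clock (boost clocks are ignored), and that memory bandwidth is bus width times effective data rate. The `Card` class and `ratio` helper are illustrative names, not anything from NVIDIA. Rasterization is left out because it depends on GPC counts that the table does not list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Card:
    name: str
    shaders: int
    texture_units: int
    rops: int
    core_mhz: int      # base core clock; boost is ignored (assumption)
    mem_gbps: float    # effective memory data rate per pin, in Gbps
    bus_bits: int      # memory bus width in bits

    def bandwidth_gbs(self) -> float:
        """Theoretical memory bandwidth in GB/s."""
        return self.bus_bits / 8 * self.mem_gbps


def ratio(a: Card, b: Card, units: str) -> float:
    """Throughput of `a` relative to `b`, modeled as unit count x core clock."""
    return (getattr(a, units) * a.core_mhz) / (getattr(b, units) * b.core_mhz)


# Figures taken from the specification table in this article.
gtx660 = Card("GTX 660", 960, 80, 24, 980, 6.008, 192)
gtx660ti = Card("GTX 660 Ti", 1344, 112, 24, 915, 6.008, 192)

ratios = {
    "shading": ratio(gtx660, gtx660ti, "shaders"),
    "texturing": ratio(gtx660, gtx660ti, "texture_units"),
    "rop": ratio(gtx660, gtx660ti, "rops"),
    "bandwidth": gtx660.bandwidth_gbs() / gtx660ti.bandwidth_gbs(),
}

for name, value in ratios.items():
    print(f"{name:>10}: {value:.1%}")
```

Under these assumptions the shading and texturing ratios both come out at roughly 76-77%, the ROP ratio at roughly 107%, and memory bandwidth at parity, which lines up with the figures quoted above.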

These figures mean that the performance of the GTX 660 relative to the GTX 660 Ti is going to be heavily dependent on shading and rasterization. Shader-heavy games will suffer the most while memory bandwidth-bound and ROP-bound games are likely to perform very similarly between the two video cards. Interestingly enough this is effectively opposite the difference between the GTX 670 and GTX 660 Ti, where the differences between the two of those cards were all in memory bandwidth and ROPs. So in scenarios where GTX 660 Ti’s configuration exacerbated GK104’s memory bandwidth limitations GTX 660 should emerge relatively unscathed.

On the power front, GTX 660 has a power target of 115W with a TDP of 140W. Once again drawing a GTX 660 Ti comparison, this puts the TDP of the GTX 660 at only 10W lower than its larger sibling, but the power target is a full 19W lower. In practice power consumption on the GTX 600 series has been much more closely tracking the power target than it has the TDP, so as we’ll see the GTX 660 is often pulling 20W+ less than the GTX 660 Ti. This lower level of power consumption also means that the GTX 660 is the first GTX 600 product to only require 1 supplementary PCIe power connection.

Moving on, for today’s launch NVIDIA is once again going all virtual, with partners being left to their own designs. However given that this is the first GK106 part and that partners have had relatively little time with the GPU, in practice partners are using NVIDIA’s PCB designs with their own coolers – many of which have been lifted from their GTX 660 Ti designs – meaning that all of the cards being launched today are merely semi-custom as opposed to some fully custom designs like we saw with the GTX 660 Ti. This means that though there’s going to be a wide range of designs with respect to cooling, all of today’s launch cards will be extremely consistent with regard to clockspeeds and power delivery.

Like the GTX 660 Ti launch, partners have the option of going with either 2GB or 3GB of RAM, with the former once more taking advantage of NVIDIA’s asymmetrical memory controller functionality. For partners that do offer cards in both memory capacities we’re expecting most partners to charge $30-$40 more for the extra 1GB of RAM.

NVIDIA has set the MSRP on the GTX 660 at $229, which NVIDIA’s partners will be adhering to almost to a fault. Of the 3 cards we’re looking at in our upcoming companion GTX 660 launch roundup article, every last card is going for $229 despite the fact that every last card is also factory overclocked. Because NVIDIA does not provide an exhaustive list of cards and prices it’s not possible to say for sure just what the retail market will look like ahead of time, but at this point it looks like most $229 cards will be shipping with some kind of factory overclock. This is very similar to how the GTX 560 launch played out, though if it parallels the GTX 560 launch closely enough then reference-clocked cards will still be plentiful in time.

At $229 the GTX 660 is going to be coming in just under AMD’s Radeon HD 7870. AMD’s official MSRP on the 7870 is $249, but at this point in time the 7870 is commonly available for $10 cheaper at $239 after rebate. Meanwhile the 2GB 7850 will be boxing in the GTX 660 from the other side, with the 7850 regularly found at $199. Like we saw with the GTX 660 Ti launch, these prices are no mistake by AMD, with AMD once again having preemptively cut prices so that NVIDIA doesn’t undercut them at launch. It’s also worth noting that NVIDIA will not be extending their Borderlands 2 promotion to the GTX 660, so this is $229 without any bundled games, whereas AMD’s Sleeping Dogs promotion is still active for the 7870.

Finally, along with the GTX 660 the GK107-based GTX 650 is also launching today at $109. For the full details of that launch please see our GTX 650 companion article. Supplies of both cards are expected to be plentiful.

Summer 2012 GPU Pricing Comparison

| AMD | Price | NVIDIA |
|---|---|---|
| Radeon HD 7950 | $329 | |
| | $299 | GeForce GTX 660 Ti |
| Radeon HD 7870 | $239 | |
| | $229 | GeForce GTX 660 |
| Radeon HD 7850 | $199 | |
| Radeon HD 7770 | $109 | GeForce GTX 650 |
| Radeon HD 7750 | $99 | GeForce GT 640 |

147 Comments

chizow - Sunday, September 16, 2012

But SB had no impact on 980X pricing, Intel is very deliberate in their pricing and EOL schedules so these parts do not lose much value before they gracefully go EOL. Otherwise, 980X still offered benefit over SB with 6 cores, something that was not replaced until SB-E over 1 year later. Even then, there was plenty of indication before Intel launched their SB-E platform to mitigate any sense of buyer's remorse.

As for the German site you're critical of, you need to read German to be able to understand numbers and bar graphs? Not to mention Computerbase is internationally acclaimed as one of the best resources for PC related topics. I linked their sites because they were one of the first to use such easy to read performance summaries and even break them down by resolution and settings.

If you prefer since you listed it, TechPowerUp has similar listings, they only copied the performance summaries of course after ground-breaking sites like Computerbase were using them for some years.

http://www.techpowerup.com/reviews/NVIDIA/GeForce_...

As you can see, even at your lower 1680x1050 resolution, the GTX 280 still handily outclasses the 4870 512MB by ~21%, so I guess there goes your theory? I've seen more recent benchmarks in games like Skyrim or any title with 4xAA or high-res textures where the gap widens as the 4870 chokes on the size of the requisite framebuffer.

As for your own situation and your wife's graphics card, TigerDirect has the same GTX 670 GC card I bought for $350 after rebate. Not as good as the deal I got but again, there are certainly new ones out there to be had for cheap. I'm personally going to wait another week or two to see if the GTX 660 price and its impact on AMD prices (another round of cuts expected next week, LOL) forces Nvidia to drop their prices as I also need to buy another GPU for the gf.

Galidou - Sunday, September 16, 2012

And then once again you're off the subject, you send me relative performance of the gtx 280 to the 4870 2 years after their launch...... We were speaking of the rebate issued in 2008 for the performance it had back then. All the links I sent you were from 4 years ago and there's a reason to it and they're showing the 280 on average 10% difference in performance, and sometimes losing big time.

Sure the 1gb ram of the gtx 280 in the long run paid off. But we're speaking of 2008 situation that forced them to issue rebates..... which is 1 month after its launch there's a part that performs ''similarly'' that costs less than HALF of its price. computerbase is a good website, not the first time I see it, but it's the only one(and I never use only one website to base on the REAL average) that shows 20% difference in performance for a part that did still cost 115% more than the radeon 4870... 115 FREAKING % within one month!! nothing else to say.

From a % point of view the 7970 and gtx 680 was a REALLY different fight.... and it was 3 month separating them which is something we commonly see in video card industry. while 115% more pricey parts that performs let's say 15% for your pleasure average from all websites than another part.....

''980X still offered benefit over SB with 6 cores''

Never said it didn't, that's why I precised: ''A gamer buying an i7 980x 1 week prior to the sandy bridge launch''. For the gamer there was NO benefit at ALL, it even lost to the sandy bridge in games.

But that's true, the 980x was out for a while unlike the gtx 280 who didn't have almost any time to keep it's amazing lead from last gen parts. The reason why they HAD to issue rebates, and the reason why I switched back to ATI from my 7800gt that died.

The gtx 660 ti is a fine card, I'm just worried about the ROPs for future proofing, she'll keep the card a VERY long time so I regret not buying the 670 in the first place.

chizow - Sunday, September 16, 2012

No I'm not off the subject, you're obviously basing your performance differences based on a specific low-resolution setting that was important to you at launch while I'm showing performance numbers of all resolutions that have only increased over time. The TPU link I provided was from a later review because as I already stated, Computerbase was one of the first sites to use these aggregate performance numbers, only later did other sites like TPU follow suit. The GTX 280 was always the best choice for enthusiasts running higher resolutions and more demanding AA and those differences only increased over time, just as I stated.

Nvidia didn't feel they needed to drop the price any more than the initial cut on the 280 because after the cut they had a "similar" part to compete with the 4870 with their own GTX 260. Once again, AMD charged too little for their effort, but that has no bearing on the fact that the 280's launch price was *JUSTIFIED* based on relative performance to last-gen parts, unlike the situation with AMD's 28nm launch prices.

As for the 680 and 7970? It just started driving home the fact the 7970 was grossly overpriced, as it offered 10-15% *more* performance than the 7970 at *10%* less price which began the tumble on AMD prices we see today. I've also been critical of the GTX 680 though, as it only offers ~35-40% increase in performance over GTX 580 at 100% of the price, which is still the worst increase in the last 10 years for Nvidia, but still obviously better than the joke AMD launched with Tahiti. 115% performance for 110% of the price compared to last-gen after 18 months is an absolute debacle.

As for the 980X and SB, again the whole tangent is irrelevant. What would make it applicable would be if AMD launched a bulldozer variant that offered 90% of 980X performance at $400 price point and forced Intel to drop prices and issue rebates, but that obviously didn't happen. You're comparing factors that Intel has complete control over where in the case of the GTX 280, Nvidia obviously had no control over what AMD decided to do with the 4870.

chizow - Sunday, September 16, 2012

There were numerous other important resolutions that took advantage of the 280's larger frame buffer, 1600x1200, 1920x1080 and 1920x1200. While they were obviously not as prevalent as they are now, they were certainly not uncommon for anyone shopping for a $300+ or $500 video card.

As for the 7970 asking price, are you kidding? I had 10x as many AMD fanboys saying the 7970 price was justified at launch (not just Rarson), and where do you get 70% more perf? Its 50% being generous.

So you got 150% performance for 150% of last-gen AMD price compared to 6970, how is that a good deal? Or similarly, you got 120% more performance for 110% the price compared to GTX 580, both last-gen parts.

What you *SHOULD* expect is 150-200% performance for 100% of last-gen price, which is what the GTX 280 offered relative to 8800GTX, which is why I stated its pricing was justified.

We've already covered the RV770, AMD could've easily priced it higher, even matched the GTX 260 price at $400 and still won, but they admittedly chose to go after market/mindshare instead after being beaten so badly by Nvidia since R600. Ever since then, they have clearly admitted their pricing mistake and have done everything in their power to slowly creep those prices upwards, culminating in the HUGE price increase we saw with Tahiti (see 150% price increase from 6970).

Galidou - Sunday, September 16, 2012

They went for price related to the size of the Die. The radeon 4870 was more than half the size of the gtx 280 thus costing less than half to produce then justifying the value of the chip by the size and not the performance.

We all know why this is happening now, AMD was battling to get back for competition against the top because they left this idea by building smaller die with the HD 3xxx, leaving the higher end to double chip boards, end parts below 400$ prices. So I knew it had to happen one day or another.

So if you're really into making a wikipedia about pricing scheme for video cards and developing about it, go on. But in my opinion, as long as they do not sell us something worse than last gen for a higher price, I'll leave it for people to discern what they need. With all the competition in the market, it's hard to settle for anything that's a real winner, it's mostly based on personal usage and money someone is willing to spend.

If someone want to upgrade his video card for xxx$, only thing he have to do is look at the benchmark for the game(s) he plays. Not looking at the price of the last generation of video cards to see if the price is relevant to the price he pays now. Usually a good 30 minutes looking at 3-4 different web sites looking at the graphs reading a little will give you good indication without sending you in the dust by speaking and arguing about last gen stuff compared to what's out now...

You speak like you're trying to justify what people should buy now because of how things were priced in the past..... Not working. You take your X bucks check out benchies, go out there and buy the card you want end of the freaking line. No video card is interesting you now, wait until something does. Stop living in the past and get to another chapter ffs...

chizow - Sunday, September 16, 2012

Heh Wikipedia page? Obviously its necessary to set the record straight as revisionists like yourself are only going to emphasize the lowlights rather than the highlights.

What you don't seem to understand is that transactions in a free market are not conducted in a vacuum, so the purchases of others do directly impact you if you are in the same market for these goods.

Its important for reviewers to emphasize such important factors like historical prices and changes in performance, otherwise it reinforces and encourages poor pricing practices like we've seen from AMD. It just sets a bad precedent.

Obviously the market has reacted by rejecting AMD's pricing scheme, and as a result we see the huge price drops we've seen over the last few months on their 28nm parts. All that's left is all the ill will from the AMD early adopters. You think all those people who got burned are OK with all the price drops, and that AMD won't have to deal with those repercussions later?

You want to get dismissive and condescending, if all it took was a good 30 minutes looking at 3-4 different websites to get it, why haven't people like you and rarson gotten it yet?

rarson - Tuesday, September 18, 2012

Yeah, it was rhetorical. It was also pointless and off-topic.

"And even after the launch of 28nm, they still held their prices because there was no incentive or need to drop in price based on relative price and performance?"

Your problem is that you just don't pay any goddamn attention. You have the attention span of a fruit fly. Let me refresh your memory. Kepler "launched" way back in March. All throughout April, the approximate availability of Kepler was zero. AMD didn't drop prices immediately because Kepler only existed in a few thousand parts. They dropped prices sometime around early May, when Kepler finally started appearing in decent quantities, because by then, the cards had already been on the market for FOUR MONTHS. See, even simple math escapes you.

"You have no idea what you're talking about, stop typing."

You're projecting and need to take your own advice.

CeriseCogburn - Thursday, November 29, 2012

AMD's 7970/7950 series supply finally became "available" on average a few days before Kepler launched.

LOL

You said something about amnesia ?

You rarson, are a sad joke.

CeriseCogburn - Thursday, November 29, 2012

I love it when the penny pinching amd fanboy whose been whining about 5 bucks in amd "big win!" pricing loses their mind and their cool and starts yapping about new technology is expensive, achieving the highest amd price apologist marks one could hope for.

LOL

It's awesome seeing amd fanboys with zero cred and zero morality.

The GTX570 made the 7850 and 7870 the morons choice from the very first date of release.

You cannot expect the truth from the amd fans. It never happens. If there's any exception to that hard and fast rule, it's a mistake, soon to be corrected, with a vengeance, as the brainwashing and emotional baggage is all powerful.

Galidou - Monday, September 17, 2012

While speaking about all that, pricing of the 4870 and 7970 do you really know everything around that, because it seems not when you are arguing, you just seem to put everything on the shoulder of a company not knowing any of the background.

Do you know the price of the 4870 was already decided and it was in correlation with Nvidia's 9000 series performance. That the 4870 was supposed to compete against 400$ cards and not win and the 4850 supposed to compete against 300$ series card and not win. You heard right, the 9k series, not the GTX 2xx.

The results even just before the coming out of the cards were already ''known''. The real things were quite different with the final product and last drivers enhancements. The performance of the card was actually a surprise, AMD never thought it was supposed to compete against the gtx 280, because they already knew the performance of the latter and that it was ''unattainable'' considering the size of the thing. Life is full of surprise you know.

Do you know that after that, Nvidia sued AMD/ATI for price fixing asking for more communications between launch and less ''surprises''. Yes, they SUED them because they had a nice surprise... AMD couldn't play with prices too much because they were already published by the media and it was not supposed to compete against gtx2xx series. They had hoped that at 300$ it would ''compete'' against the gtx260 and not win against it, thus justifying the price of the things at launch. And here you are saying it's a mistake launching insults at me, telling me I have a low intelligence and showing you're a know it all....

Do you know that this price fixing obligation is the result of the pricing of the 7970, I bet AMD would of loved to price the latter at 400$ and could do it but it would of resulted in another war and more suing from Nvidia that wanted to price it's gtx 680 500$ 3 month after so to not break their consumers joy, they communicate A LOT more than before so everyone is happy, except now it hurts AMD because you compare to last gen and it makes things seems less of a deal. But with things back to normal we will be able to compare last gen after the refreshed radeon 7xxx parts and new gen after that.

Nvidia the ''giant'' suing companies on the limit of ''extinction'', nice image indeed. Imagine the rich bankers starting to sue people in the streets, and they are the one you defend so vigorously. If they are that rich, do you rightly think the gtx 280 was well priced even considering it was double the last generation..

It just means one thing, they could sell their card for less money but instead they sue the other company to take more money from our pockets, nice image.... very nice..... But that doesn't mean I won't buy an Nvidia card, I just won't defend them as vigorously as you do.... For every Goliath, we need a David, and I prefer David over Goliath.... even if I admire the strength of the latter....