Micron P320h PCIe SSD (700GB) Review

by Anand Lal Shimpi on October 15, 2012 3:00 AM EST

Update: Micron tells us that the P320h doesn't support NVMe; we are digging to understand how Micron's controller differs from the NVMe IDT controller with a similar part number.

Well over a year ago Micron announced something unique in a sea of PCIe SSDs that were otherwise nothing more than SATA drives in RAID on a PCIe card. The drive Micron announced was the P320h, featuring a custom ASIC and a native PCIe interface. The vast majority of PCIe SSDs we've looked at thus far feature multiple SATA/SAS SSD controllers, each with its own NAND, behind a SATA/SAS RAID controller on a PCIe card. These PCIe SSDs basically deliver the performance of a multi-drive SSD RAID-0 on a single card instead of requiring multiple 2.5" bays. There's decent interest in these types of PCIe SSDs simply because of the form factor advantage, as many servers these days have moved to slimmer form factors (1U/2U) that don't have all that many 2.5" drive bays. Long term, however, this SATA/SAS RAID-on-a-PCIe-card approach is clunky at best. Ideally you'd want a native PCIe controller that could talk directly to the NAND, rather than going through an unnecessary layer of abstraction. That's exactly what Micron's P320h promised, and today we have a review of that very drive.

Although it was publicly announced a long time ago (in SSD terms), the P320h's specifications are still very competitive:

| Micron P320h | |
|---|---|
| Capacity | 350GB / 700GB |
| Interface | PCIe 2.0 x8 |
| NAND | 34nm ONFI 2.1 SLC |
| Max Sequential Performance (Reads/Writes) | 3.2 / 1.9 GBps |
| Max Random Performance (Reads/Writes) | 785K / 205K IOPS |
| Max Latency (QD=1, Read/Write) | 47 µs / 311 µs (nonposted) |
| Endurance (Max Data Written) | 25PB (350GB) / 50PB (700GB) |
| Encryption | No |
| TDP | 25W |
| Form Factor | Half-Height, Half-Length PCIe, 68.9mm x 167.65mm x 18.71mm |

In fact, the only indication that this product was announced over a year ago is that it launches with 34nm SLC NAND. Most of the enterprise SSDs we review these days have shifted to 2x-nm eMLC or MLC-HET. Micron will make a 25nm SLC version as well as eMLC/MLC-HET versions available in the future, but the launch product uses 34nm SLC NAND. I don't have official pricing from Micron yet, but I would expect it to be pretty high given the amount of expensive SLC NAND on each drive (512GB for the 350GB drive, 1TB for the 700GB drive).

The obvious benefit of using SLC NAND is endurance. While Intel's MLC-HET-based SSD 910 tops out at 14PB of writes over the life of the 800GB model, the 350GB P320h is rated for 25PB. The 700GB drive doubles that to 50PB of writes.
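To compare drives of different capacities, it helps to divide rated endurance by capacity, giving the number of times the whole drive can be rewritten. A quick sketch, assuming decimal units (1PB = 1,000,000GB) and that each rating applies to the drive's user capacity:

```python
# Rated endurance figures from the review, in decimal units (1PB = 1,000,000GB).
RATINGS = {
    "Micron P320h 350GB": (350, 25),
    "Micron P320h 700GB": (700, 50),
    "Intel SSD 910 800GB": (800, 14),
}


def full_drive_writes(capacity_gb: float, endurance_pb: float) -> float:
    """How many times the full user capacity can be rewritten within the rating."""
    return endurance_pb * 1_000_000 / capacity_gb


for name, (capacity_gb, endurance_pb) in RATINGS.items():
    print(f"{name}: {full_drive_writes(capacity_gb, endurance_pb):,.0f} full drive writes")
```

Both P320h capacities work out to roughly 71,000 full drive writes, versus 17,500 for the 800GB 910, about a 4x gap per unit of capacity.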

Micron is also quite proud of its low read/write latencies, enabled by its low overhead PCIe controller and driver stack.

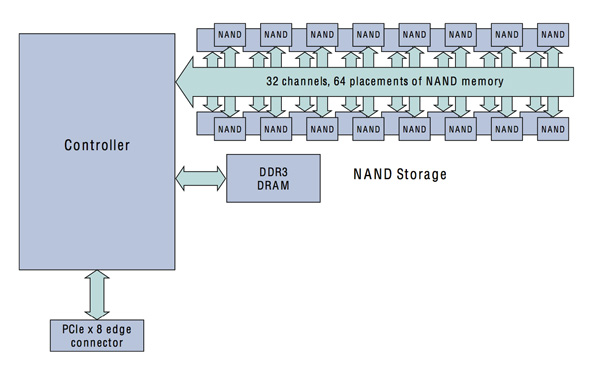

As a native PCIe SSD, the P320h features a single controller on the card: a giant 1517-pin controller made by IDT. The huge pin count is needed to connect the controller to its 32 independent NAND channels, 4x what we see from most SATA SSD controllers.

There are no bridge chips or RAID controllers on-board; that single Micron-developed, IDT-manufactured controller is all that's needed. Talk about clean.

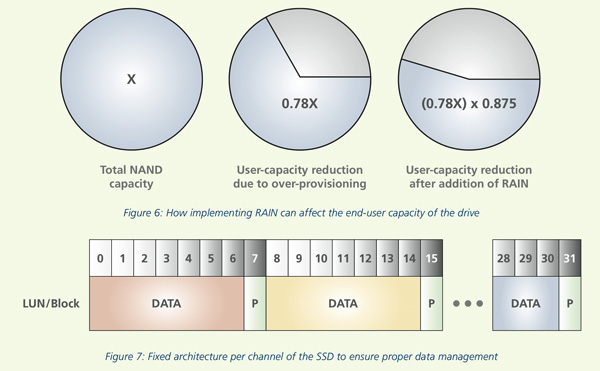

Each of the 32 channels can talk to up to 8 targets, for a maximum capacity of 4TB, although Micron only uses 1TB of NAND on-board. Twenty-two percent of the on-board NAND is set aside as spare area for garbage collection, bad block replacement and wear leveling. An additional 1/8 of the user capacity is reserved for parity data.
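Spare area and parity together roughly account for the gap between 1TB of NAND and 700GB of user capacity. The back-of-the-envelope sketch below assumes the 22% spare area is taken from raw NAND first and that parity then consumes one of every eight units of what remains, matching the 7+1P stripe Micron uses. Both that ordering and the treatment of 1TB as 1024 binary units are assumptions, not details Micron has published:

```python
SPARE_FRACTION = 0.22   # spare area: garbage collection, bad blocks, wear leveling
DATA_PER_STRIPE = 7     # 7+1P: seven data units per parity unit
STRIPE_WIDTH = DATA_PER_STRIPE + 1


def user_capacity(raw_nand: float) -> float:
    """Capacity left after spare area and parity, in the same units as raw_nand.

    Assumption: spare area comes off the raw NAND first, and parity then
    takes one of every eight units of what remains.
    """
    after_spare = raw_nand * (1 - SPARE_FRACTION)
    return after_spare * DATA_PER_STRIPE / STRIPE_WIDTH


# Raw NAND per the review, treated as binary units (1TB -> 1024, 512GB -> 512).
for raw in (1024, 512):
    print(f"{raw} raw -> {user_capacity(raw):.1f} usable")
```

Under these assumptions 1024 units of raw NAND leave about 699 usable and 512 leave about 349, close to the 700GB and 350GB ratings.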

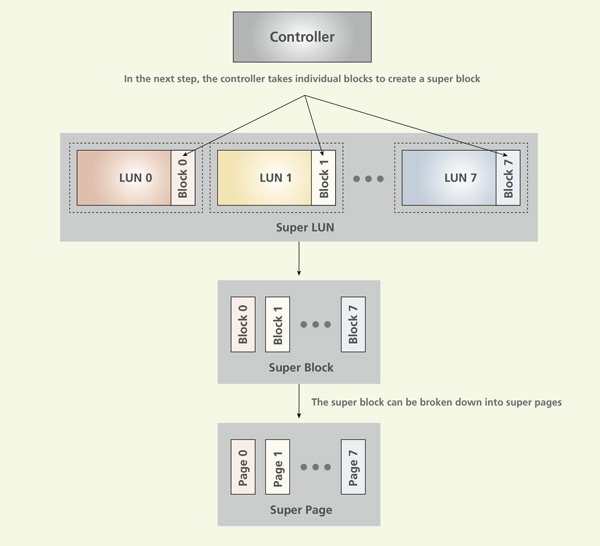

The IDT controller features a configurable hardware RAID-5 that stripes accesses across multiple logical units. The logical units are broken down into blocks and pages, as is standard for NAND-based SSDs. Blocks and pages are striped across logical units, with parity calculated across every 7 blocks/pages.
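RAID-5 style protection rests on XOR: the parity page is the XOR of the seven data pages in a stripe, so any single lost page can be rebuilt from the seven survivors. The toy sketch below illustrates that idea in Python. The page size and function names are illustrative only and say nothing about how IDT's hardware actually lays out or computes parity:

```python
import os
from functools import reduce

PAGE_SIZE = 16   # toy page size in bytes (real NAND pages are kilobytes)
DATA_PAGES = 7   # 7 data pages per stripe, plus 1 parity page


def xor_pages(pages: list[bytes]) -> bytes:
    """Byte-wise XOR of equally sized pages."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*pages))


def build_stripe(data: list[bytes]) -> list[bytes]:
    """Append a parity page so the stripe holds 7 data pages + 1 parity page."""
    if len(data) != DATA_PAGES:
        raise ValueError(f"expected {DATA_PAGES} data pages, got {len(data)}")
    return data + [xor_pages(data)]


def rebuild(stripe: list[bytes], lost: int) -> bytes:
    """Recover the page at index `lost` by XOR-ing the other seven pages."""
    survivors = [page for i, page in enumerate(stripe) if i != lost]
    return xor_pages(survivors)


data = [os.urandom(PAGE_SIZE) for _ in range(DATA_PAGES)]
stripe = build_stripe(data)
print(rebuild(stripe, 3) == data[3])  # prints True: the lost page is recovered
```

The same XOR rebuilds the parity page itself if that is the one lost, which is why a 7+1P stripe survives the failure of any one of its eight members.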

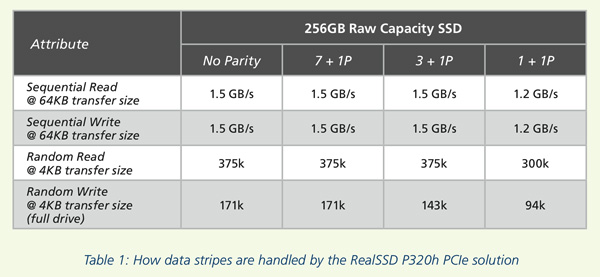

Micron picked 7+1P as its preferred balance of performance, user capacity and failure protection.

Calculating parity over fewer blocks/pages would let the drive withstand more failures, but capacity and performance would suffer. As NAND failures should be far rarer and more predictable than mechanical storage failures, this tradeoff shouldn't be a problem.
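The capacity side of that tradeoff is easy to quantify: in an N+1P layout, parity consumes 1/(N+1) of every stripe. The small sketch below compares Micron's 7+1P choice with a narrower and a wider stripe. The 3+1P and 15+1P points are hypothetical and are included purely for comparison:

```python
def parity_overhead(data_units: int) -> float:
    """Fraction of each N+1P stripe spent on parity."""
    return 1 / (data_units + 1)


# Each stripe survives one lost unit, so narrower stripes can absorb more
# failures across the drive, at a higher capacity cost per stripe.
for n in (3, 7, 15):
    print(f"{n}+1P: {parity_overhead(n):.2%} of each stripe is parity")
```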

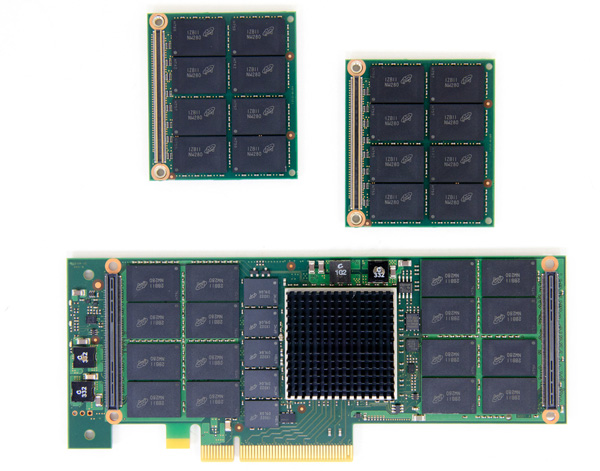

The P320h is available in one form factor: a half-height, half-length PCIe 2.0 x8 card. Both half- and full-height brackets come in the box, allowing the P320h to fit either type of case:

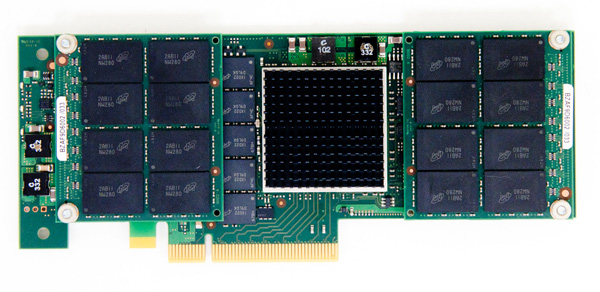

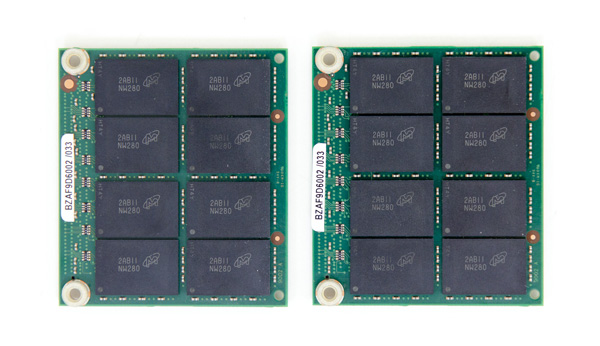

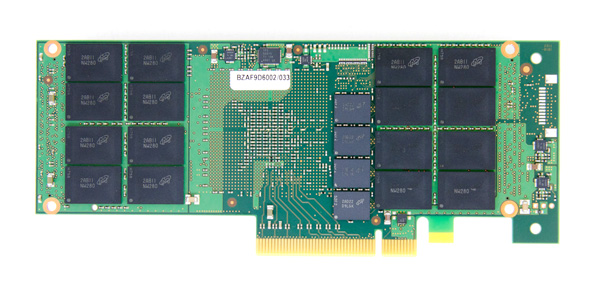

Unlike most 2.5" SATA/SAS SSDs, these PCIe SSDs are pretty interesting to look at. With much more bandwidth to saturate, drive makers have become more creative in cramming as many NAND devices as possible onto a half-height, half-length PCIe card. While sticking to a single-slot profile, Micron attaches two smaller daughterboards to the main P320h card via high-density interface connectors to double the amount of NAND on the drive.

Each daughtercard has sixteen 34nm 128Gb NAND packages for a total of 256GB of NAND. That's 512GB of NAND on the daughtercards, plus another 512GB on the main P320h card itself, for a total of 1TB of NAND on the 700GB drive. The 350GB drive keeps the daughtercards but moves to 64Gb NAND packages instead. Remember that these are 34nm SLC NAND die, so you're looking at only 2GB per die vs. the 8GB per die we get from 25nm MLC NAND (or 4GB per die from 25nm SLC NAND).
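The package math can be tallied directly. This sketch assumes 16Gb (2GB) 34nm SLC die, as stated above, and assumes the main card carries 32 packages. That package count is inferred from its 512GB of NAND rather than stated by Micron:

```python
DIE_GBIT = 16  # 34nm SLC die: 16Gb = 2GB


def nand_totals(package_gbit: int, main_packages: int = 32,
                daughtercards: int = 2, packages_per_daughtercard: int = 16) -> dict:
    """Tally NAND for one P320h build. main_packages=32 is inferred, not stated by Micron."""
    package_gb = package_gbit // 8
    daughter_packages = daughtercards * packages_per_daughtercard
    return {
        "dies_per_package": package_gbit // DIE_GBIT,
        "per_daughtercard_gb": packages_per_daughtercard * package_gb,
        "daughtercards_gb": daughter_packages * package_gb,
        "main_card_gb": main_packages * package_gb,
        "total_gb": (main_packages + daughter_packages) * package_gb,
    }


print(nand_totals(128))  # 700GB drive: 128Gb packages
print(nand_totals(64))   # 350GB drive: 64Gb packages
```

With 128Gb packages this reproduces the 256GB per daughtercard and 1TB total quoted above; with 64Gb packages the total drops to the 512GB quoted for the 350GB drive.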

Of course with a huge increase in the number of NAND devices, there's a correspondingly large increase in the number of DRAM devices to keep track of all of the LBAs and flash mapping tables. The P320h features nine 256MB DDR3-1333 devices (also made by Micron) for a total of 2.25GB of on-board DRAM.
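To get a feel for why mapping tables eat DRAM, the sketch below totals the on-board DRAM and sizes a simple flat logical-to-physical map. The 4KiB mapping granularity and 4-byte entries are hypothetical values chosen for illustration. Micron has not disclosed how the P320h's flash translation layer is organized, and real FTLs also keep other metadata in DRAM:

```python
DRAM_DEVICES = 9
DRAM_DEVICE_MIB = 256


def dram_total_gib() -> float:
    """On-board DRAM: nine 256MB DDR3-1333 devices."""
    return DRAM_DEVICES * DRAM_DEVICE_MIB / 1024


def flat_map_bytes(user_bytes: int, map_unit: int = 4096, entry_bytes: int = 4) -> int:
    """Size of a hypothetical flat logical-to-physical table.

    One entry per map_unit of user capacity; map_unit and entry_bytes are
    illustrative guesses, not Micron's disclosed FTL design.
    """
    entries = -(-user_bytes // map_unit)  # ceiling division
    return entries * entry_bytes


print(f"DRAM on board: {dram_total_gib()} GiB")
print(f"Flat 4KiB map for 700GB: {flat_map_bytes(700 * 10**9) / 2**20:.0f} MiB")
```

Even under these assumptions, a page-level map for the 700GB drive runs to hundreds of megabytes, which is consistent with the card carrying gigabytes of DRAM.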

There's a relatively small heatsink on the custom PCIe controller itself. Micron claims it needs only 1.5m/s of airflow to stay within its operating temperature. Prying the heatsink off reveals IDT's NVMe (Non-Volatile Memory Express) controller. This is a native PCIe controller that supports up to 32 NAND channels, as well as a full implementation of the NVMe spec. Although the controller itself is PCIe Gen 3, Micron only certifies it for PCIe Gen 2 operation. With 8 PCIe lanes there's more than enough host bandwidth on PCIe 2.0, so this isn't an issue.

Update: Micron tells us that the P320h doesn't support NVMe; we are digging to understand how Micron's controller differs from the NVMe IDT controller with a similar part number.
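The claim that PCIe 2.0 x8 leaves enough headroom is easy to check against the drive's rated 3.2 GBps sequential read. PCIe 2.0 signals at 5 GT/s per lane with 8b/10b encoding, and PCIe 3.0 at 8 GT/s with 128b/130b. The sketch below computes per-direction bandwidth after line coding only and ignores packet and protocol overhead:

```python
# Per-lane signaling rate (GT/s) and line-code efficiency by PCIe generation.
PCIE_GENS = {
    "2.0": (5.0, 8 / 10),      # 8b/10b encoding
    "3.0": (8.0, 128 / 130),   # 128b/130b encoding
}

P320H_MAX_READ_GB_S = 3.2  # rated sequential read


def link_gb_per_s(gen: str, lanes: int) -> float:
    """Per-direction link bandwidth in GB/s after line coding, before packet overhead."""
    gt_per_s, efficiency = PCIE_GENS[gen]
    return gt_per_s * efficiency * lanes / 8  # 8 bits per byte


for gen, lanes in (("2.0", 4), ("2.0", 8), ("3.0", 8)):
    bw = link_gb_per_s(gen, lanes)
    verdict = "above" if bw > P320H_MAX_READ_GB_S else "below"
    print(f"PCIe {gen} x{lanes}: {bw:.2f} GB/s ({verdict} the P320h's 3.2 GB/s read rating)")
```

A PCIe 2.0 x4 link (2 GB/s) would cap the drive, while x8 (4 GB/s) clears its rating. Real-world throughput is lower once packet headers and flow control are counted, so the actual headroom is smaller than these raw numbers suggest.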

The NVMe spec promises a lower overhead, more efficient command set for native PCIe SSDs. This is a transition that makes a lot of sense as the current approach of just using SATA/SAS controllers behind a PCIe switch is unnecessarily complex. With NVMe the NAND talks to a native PCIe controller which can in turn deliver tons of bandwidth to the host vs. being bottlenecked by 6Gbps SATA or SAS. The NVMe host spec also scales the number of concurrent IOs supported all the way up to 64,000 (a max of 256 currently supported under Windows vs 32 for SATA based SSDs), well beyond what most current workloads would be able to generate.
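Deep queues matter because of Little's law: the average number of I/Os in flight equals throughput multiplied by the time each I/O takes. The sketch below plugs in the P320h's rated 47 µs QD=1 read latency and 785K read IOPS. It makes two idealized assumptions: that latency stays at its QD=1 value as load rises, and that the rated latency applies to the same reads behind the IOPS figure. In practice latency grows under load, so the real queue depth needed is higher:

```python
def outstanding_ios(iops: float, latency_s: float) -> float:
    """Little's law: average I/Os in flight = completion rate x time per I/O."""
    return iops * latency_s


def iops_at_depth(queue_depth: int, latency_s: float) -> float:
    """Completion rate sustained by a fixed number of in-flight I/Os."""
    return queue_depth / latency_s


READ_LATENCY_S = 47e-6    # rated QD=1 read latency
RATED_READ_IOPS = 785_000
SATA_NCQ_DEPTH = 32

print(f"QD1 ceiling: {iops_at_depth(1, READ_LATENCY_S):,.0f} IOPS")
print(f"In flight for {RATED_READ_IOPS:,} IOPS: {outstanding_ios(RATED_READ_IOPS, READ_LATENCY_S):.1f}")
print(f"SATA NCQ ceiling: {iops_at_depth(SATA_NCQ_DEPTH, READ_LATENCY_S):,.0f} IOPS")
```

Even under this optimistic assumption, reaching the rated figure takes roughly 37 outstanding reads. That is more than SATA's 32-entry queue holds, and it is the kind of concurrency that NVMe's deeper queues are meant to accommodate.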

As the NVMe spec defines the driver interface between the SSD and the host OS, it requires a new set of drivers to function. The goal is for these drivers to eventually be built into the OS, but in the short term you'd hopefully need only one NVMe driver that works on all NVMe SSDs, rather than the current mess of an individual driver for every PCIe SSD. Companies like Intel have gotten around the driver issue by simply using SATA/SAS-to-PCIe controllers whose drivers are already integrated into modern OSes (e.g. LSI's Falcon 2008 controller on the Intel SSD 910).

In the long run NVMe SSDs should enjoy the same plug and play benefits that SATA drives enjoy today. You never have to worry about installing a SATA driver to make your new SSD work (you shouldn't at least), and the same will hopefully be true for NVMe SSDs. The reality today is much more complicated than that.

Micron provided us with drivers for the P320h with the caveat that the driver was only tested/validated on certain server configurations. Even having other PCIe devices installed in the system could cause incompatibilities. In practice I found Micron's warnings accurate. While the P320h had no issues working on our X79 testbed, our H67 testbed wouldn't boot into Windows with the P320h installed. What was really strange was that the mere presence of the card in the H67 system caused graphical corruption at POST. I also noticed incompatibilities with certain PCIe video cards installed in our X79 system. I eventually ended up with a stable configuration that let me run through our suite of tests, but even then the P320h would sometimes drop out of the system entirely, requiring a power cycle to come back.

Micron made no attempt to hide the fact that the P320h is only validated on specific servers, but it's something worth considering if you're looking at this drive. Apparently the state of the Linux drivers is much better than on Windows; unfortunately, most of our tests run under Windows, which forced us to deal with these compatibility issues head on.

57 Comments


JellyRoll - Monday, October 15, 2012

Of course you have absolutely no experience with virtualization, which would mean that for your archaic workloads you wouldn't need something of this nature. Users that purchase this will not be running one database at such low queue depths; that would be an insane waste of money.

This is designed for high load OLTP and virtualized environments, not to run the database of one website.

You may be in IT at some small company, but apparently you haven't seen anything on a datacenter scale.

DataC - Tuesday, October 16, 2012

JellyRoll is correct. I work for Micron, and we developed the P320h's controller and firmware through collaboration with enterprise OEMs, which is why we optimized for higher queue depths. When the P320h is run in these environments (which are common in datacenters), you'll see significantly higher performance than what's shown in the charts above.

jospoortvliet - Tuesday, October 16, 2012

Yup. And it should be tested on a proper enterprise platform; this test is like running a NASCAR vehicle with the handbrake on. Time for an upgrade to a real OS, Anand.

Denithor - Monday, October 15, 2012

Would have liked to see the fastest consumer-grade drive thrown in just to see exactly how much faster enterprise drives go. Also would like to see how this drive would perform in the standard Light and Heavy Bench tests.

FunBunny2 - Monday, October 15, 2012

Actually, against a Fusion-io part, the closest example.

jwilliams4200 - Monday, October 15, 2012

Right, enterprise drives should get all the standard consumer SSD tests run on them in addition to the enterprise tests.

mckirkus - Wednesday, October 17, 2012

And I'd argue a RAMDisk should be included just to get a sense of relative performance.

Kevin G - Monday, October 15, 2012

I'm kinda surprised that there wasn't more discussion about the effects of the native PCI-e controller. Lower latency results do crop up in various benchmarks here. I wonder if the impact is merely 'benchmark only' and not anything that'd be noticeable in more real world tests.

By going with 34 nm SLC, they have limited capacity, but this article seems to indicate that the controller is capable of supporting MLC in the 20 to 30 nm range. That would allow it to hit the 4 TB maximum capacity of the controller. I'm also curious how such a change would perform. The current P320h does need a PCI-e 2.0 8x connection, as some of the benchmarks are (barely) exceeding what a PCI-e 2.0 4x link can provide. With faster NAND, a move to PCI-e 3.0 8x or PCI-e 2.0 16x may be warranted.

I'm also curious if multiple P320h's can be used in a system behind a RAID. Overkill the overkill?

Now for a few general comments about NVMe. I'd love to see NAND chips on DIMMs at the enterprise level. If the controller detects NAND failure or chips reaching their maximum endurance, they could potentially be swapped out. This is akin to current ECC DIMMs. Along those same lines it would be nice to see a SAS or SATA port on the board so that it could fail over to a hard drive in the event of multiple impending NAND failures. The main reasoning I can see to avoid DIMMs would simply be physical space.

This is also a good preview of what to expect with SATA-Express drives next year. They won't reach such bandwidth figures as they'll be limited to two PCI-e lanes but the latency improvements should carry over with a good controller.

PCTC2 - Monday, October 15, 2012

You could probably just do an OS-level software stripe (like in Linux). I think that would be more beneficial in terms of usable capacity than in terms of performance, although the performance increase could be tangible, depending on your workload.

As for the link, I think performance is more constrained by the controller than by the NAND. I don't think we need PCIe 3.0 or PCIe 2.0 x16 links for this iteration of the controller; I don't think it would saturate the link. As you said, some of the tests don't even saturate a PCIe x4 link, if you don't include overhead (there is overhead).

Also, Anand did point out a 25nm eMLC version is coming out in the future.

As for putting chips on DIMMs, for a HH/HL PCIe card, that is a waste of space, as you said yourself. Between the controller, DRAM, and then the NAND, the sockets would just take up space. The daughterboard direction allows a much more compact, proprietary size depending on the board itself. If you wanted a FH/HL card, I'm sure DIMMs would be more possible.

FunBunny2 - Monday, October 15, 2012

Check out the Sun/Oracle flash appliance. Other niche Enterprise flash storage also exist.