Plextor Updates The Firmware on M5 Pro: Promises Increased Performance, We Test It

by Kristian Vättö on December 10, 2012 2:30 PM EST

Performance Consistency

In our Intel SSD DC S3700 review we introduced a new method of characterizing performance: looking at the latency of individual operations over time. The S3700 promised a level of performance consistency that was unmatched in the industry, and demonstrating that required some additional testing. SSDs don't deliver consistent IO latency because every controller inevitably has to do some amount of defragmentation or garbage collection in order to keep operating at high speeds. When and how an SSD decides to run its defrag and cleanup routines directly impacts the user experience. Frequent (borderline aggressive) cleanup generally results in more stable performance, while delaying it can result in higher peak performance at the expense of much lower worst-case performance. The graphs below tell us a lot about the architecture of these SSDs and how they handle internal defragmentation.

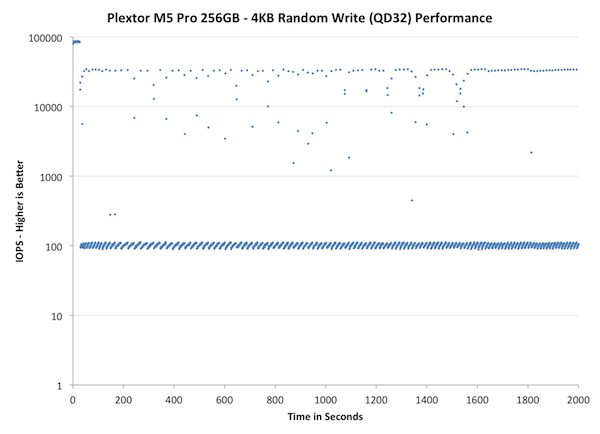

To generate the data below I took a freshly secure-erased SSD and filled it with sequential data. This ensures that all user-accessible LBAs have data associated with them. Next I kicked off a 4KB random write workload at a queue depth of 32 using incompressible data. I ran the test for just over half an hour, nowhere near as long as we run our steady-state tests, but long enough to give me a good look at drive behavior once all spare area had filled up.
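The access pattern described above (a sequential fill of every LBA, then full-span 4KB random writes of incompressible data) can be sketched in Python. This is an illustration under stated assumptions, not the tool used for the test: the function names are hypothetical, the code only generates offsets and payloads rather than issuing IO, and keeping 32 requests outstanding (QD32) would require an asynchronous IO tool that is not shown here.

```python
import os
import random
import zlib

BLOCK_SIZE = 4096  # 4KB transfer size, as in the test


def sequential_fill_offsets(capacity_bytes):
    """Byte offsets for the pre-fill: every 4KB-aligned LBA range, in order."""
    usable = (capacity_bytes // BLOCK_SIZE) * BLOCK_SIZE
    return list(range(0, usable, BLOCK_SIZE))


def random_write_offsets(capacity_bytes, count, seed=0):
    """Uniformly random 4KB-aligned offsets spanning the whole drive (full span)."""
    rng = random.Random(seed)
    num_blocks = capacity_bytes // BLOCK_SIZE
    return [rng.randrange(num_blocks) * BLOCK_SIZE for _ in range(count)]


def incompressible_payload():
    """Random bytes, so a compressing controller cannot shrink the write."""
    return os.urandom(BLOCK_SIZE)


def compresses_smaller(buf):
    """True if zlib can shrink the buffer, i.e. the data is compressible."""
    return len(zlib.compress(buf)) < len(buf)
```

Random bytes are used because a controller that compresses data internally would otherwise write less to NAND than the host sends, which would flatter the results.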

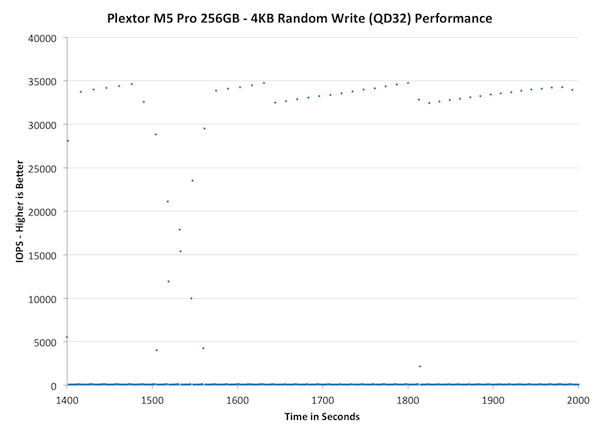

I recorded instantaneous IOPS every second for the duration of the test. I then plotted IOPS vs. time and generated the scatter plots below. Each set of graphs features the same scale. The first two sets use a log scale for easy comparison, while the last set of graphs uses a linear scale that tops out at 40K IOPS for better visualization of differences between drives.
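Recording instantaneous IOPS once per second amounts to counting IO completions in one-second bins. A minimal sketch, assuming the benchmark logs a completion timestamp (in seconds since the start of the test) for every IO; the function name is hypothetical:

```python
from collections import Counter


def iops_per_second(completion_times_s, duration_s):
    """Bucket IO completion timestamps into 1-second bins.

    Element t of the result is the number of IOs that completed in [t, t + 1),
    i.e. the instantaneous IOPS sampled once per second.
    """
    # int() truncates toward zero, which equals floor() for the non-negative
    # timestamps kept by the filter.
    counts = Counter(int(t) for t in completion_times_s if 0 <= t < duration_s)
    return [counts.get(second, 0) for second in range(duration_s)]
```

Each element of the returned list is one point in an IOPS-versus-time scatter plot. Seconds with no completions come out as 0, which a log-scale plot cannot display, so they need separate handling there.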

The first set of graphs shows the performance data over the entire 2000-second test period. In these charts you'll notice an early period of very high performance followed by a sharp dropoff. What you're seeing in that case is the drive allocating new blocks from its spare area, then eventually using up all free blocks and having to perform a read-modify-write for all subsequent writes (write amplification goes up, performance goes down).
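The dropoff can be reproduced qualitatively with a toy model. The sketch below is a simplified page-mapped flash translation layer with greedy garbage collection, written for illustration only. It is not Plextor's (or any vendor's) firmware, and the block geometry and spare fractions are arbitrary assumptions. While free blocks remain, each host write costs exactly one NAND write. Once they run out, a block has to be reclaimed by rewriting its still-valid pages before new data fits, so write amplification rises.

```python
import math
import random
from collections import deque


def simulate_write_amplification(user_blocks, spare_fraction, pages_per_block,
                                 random_writes, window, seed=0):
    """Toy page-mapped FTL with greedy GC (a teaching model, not real firmware).

    1. Fill every user LBA sequentially.
    2. Issue `random_writes` uniformly random single-page overwrites.
    Returns write amplification (NAND page writes / host page writes) for each
    consecutive window of `window` host writes in step 2.
    """
    user_pages = user_blocks * pages_per_block
    num_blocks = math.ceil(user_pages * (1 + spare_fraction) / pages_per_block)
    if num_blocks * pages_per_block <= user_pages:
        raise ValueError("need at least one page of spare area")

    block_of = [None] * user_pages               # LBA -> block holding its data
    valid = [set() for _ in range(num_blocks)]   # valid LBAs in each block
    used = [0] * num_blocks                      # pages programmed in each block
    free = deque(range(num_blocks))
    active = free.popleft()
    nand_writes = 0

    def program(lba):
        nonlocal nand_writes
        valid[active].add(lba)
        block_of[lba] = active
        used[active] += 1
        nand_writes += 1

    def ensure_space():
        nonlocal active
        if used[active] < pages_per_block:
            return
        if free:  # spare area left: simply open a fresh block
            active = free.popleft()
            return
        # No free blocks: erase the block with the fewest valid pages and
        # rewrite its surviving data before accepting the host write.
        victim = min(range(num_blocks), key=lambda b: len(valid[b]))
        survivors = list(valid[victim])
        valid[victim] = set()
        used[victim] = 0
        active = victim
        for lba in survivors:
            program(lba)

    def host_write(lba):
        old = block_of[lba]
        if old is not None:
            valid[old].discard(lba)  # the previous copy becomes stale
        ensure_space()
        program(lba)

    for lba in range(user_pages):  # sequential pre-fill
        host_write(lba)

    rng = random.Random(seed)
    wa_per_window = []
    start = nand_writes
    for i in range(1, random_writes + 1):
        host_write(rng.randrange(user_pages))
        if i % window == 0:
            wa_per_window.append((nand_writes - start) / window)
            start = nand_writes
    return wa_per_window
```

With `simulate_write_amplification(128, 0.07, 64, 20000, 500)`, the first window reports a write amplification of exactly 1.0 because the nine spare blocks absorb the first 576 random writes; later windows sit well above 1. Raising `spare_fraction` lowers the steady-state figure, in line with the general expectation that more spare area helps, though a model this simple says nothing about how a specific controller schedules its cleanup.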

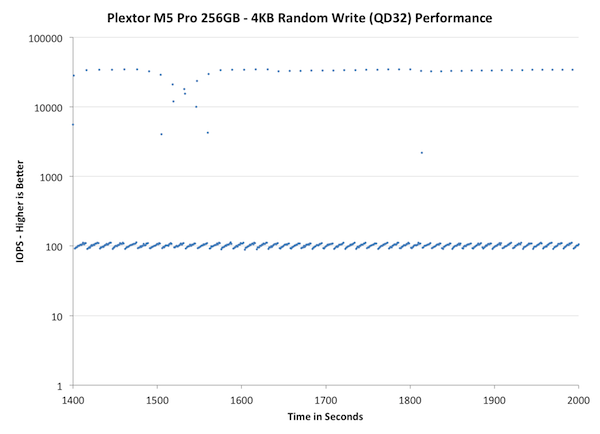

The second set of graphs zooms in on the beginning of steady-state operation for the drive (t=1400s). The third set also looks at the beginning of steady-state operation, but on a linear performance scale.

Wow, that's bad. While we haven't run the IO consistency test on every SSD in our labs, the M5 Pro is definitely the worst one we have tested so far. In less than a minute the M5 Pro's performance drops below 100 IOPS, which at a 4KB transfer size equals 0.4MB/s. What makes it worse is that the drops are not sporadic: most of the time the drive runs at around 100 IOPS. There are occasional peaks at 30-40K IOPS, but the drive consistently performs far worse than that.
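The 0.4MB/s figure comes from multiplying IOPS by the transfer size. A minimal sketch, assuming 4KB means 4,096 bytes and MB means 10^6 bytes (with 4,000-byte "KB" the result is exactly 0.4); the function name is hypothetical:

```python
def iops_to_mb_per_s(iops, transfer_bytes=4096):
    """Throughput in decimal MB/s (10**6 bytes) for a fixed transfer size."""
    return iops * transfer_bytes / 1e6


floor = iops_to_mb_per_s(100)       # about 0.41 MB/s
peak = iops_to_mb_per_s(40_000)     # about 164 MB/s
```

The same arithmetic puts the 30-40K IOPS peaks at roughly 120-165MB/s, which is why the time spent at each level matters so much for overall throughput.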

An even bigger issue is that over-provisioning the drive further doesn't bring any relief. As we discovered in our performance consistency article, giving the controller more space for OP usually made performance much more consistent, but unfortunately this doesn't apply to the M5 Pro. It does help a bit: the drive takes longer to enter steady state and more IOs happen in the ~40K IOPS range, but most IOs are still limited to around 100 IOPS.

The next set of charts looks at the steady-state (for most drives) portion of the curve. Here we'll get some better visibility into how everyone will perform over the long run.

Concentrating on the final part of the test doesn't really reveal anything new: as the first graph already showed, the M5 Pro reaches steady state very quickly and its performance stays about the same throughout the test. The peaks are actually high compared to other SSDs, but having one IO transfer at 3-5x the speed every now and then won't help if over 90% of the transfers are significantly slower.

46 Comments


Beenthere - Monday, December 10, 2012

Unless the 1.02 firmware corrects some bug or other issue, it's hardly worth the effort to update. As for the listed SSDs, few if any users would actually be able to tell the difference in performance between the various drives in actual use. The synthetic benches produce theoretical differences which can't be seen by typical desktop or laptop users.

Jocelyn - Monday, December 10, 2012

Any chance we'll see steady-state testing with the M5 Pro for comparison?

Jocelyn - Monday, December 10, 2012

Had no idea this was a multi-part review, sorry, and thank you :)

jwilliams4200 - Monday, December 10, 2012

"The peaks are actually high compared to other SSDs but having one IO transfer at 3-5x the speed every now and then won't help if over 90% of the transfers are significantly slower."

Just a note that the statement quoted above is misleading. From your graphs, you can see that the Plextor is cycling between 100 IOPS and 32,000+ IOPS (there are a scattering of points in between, but the distribution is predominantly bimodal). So it is hardly "3-5x the speed". The peaks are actually more than 300x the low speed. So the average throughput depends almost entirely on what percentage of the time the SSD spends at the 32,000+ IOPS speed. It is easy to see that adding 20% or 25% OP allows the Plextor to spend a larger percentage of its time at the high speed.

In my testing, the throughput peaks always last about 0.4 seconds, but at 0% OP they only occur with a period of about 7 seconds, while at 20% OP the peaks occur with a period of about 1.4 seconds, so the average throughput increases by about a factor of 5 (= 7 / 1.4) when comparing 20% OP to 0% OP. Incidentally, the SSD spends about 30% of its time in the high-speed mode with 20% OP, but only about 6% of its time in the high-speed mode with 0% OP.
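These figures can be checked with a simple duty-cycle model. The sketch assumes the drive alternates between exactly two speeds, 32,000 and 100 IOPS, the values cited in the comment; real traces include points in between, and the function names are hypothetical.

```python
def duty_cycle(burst_s, period_s):
    """Fraction of time spent in the high-speed burst."""
    return burst_s / period_s


def average_iops(burst_s, period_s, high_iops, low_iops):
    """Time-weighted average IOPS for a drive alternating between two speeds."""
    d = duty_cycle(burst_s, period_s)
    return d * high_iops + (1 - d) * low_iops


no_op = average_iops(0.4, 7.0, 32_000, 100)     # 0% OP: bursts every ~7 s
with_op = average_iops(0.4, 1.4, 32_000, 100)   # 20% OP: bursts every ~1.4 s
```

The duty cycles come out at about 5.7% and 28.6%, matching the "about 6%" and "about 30%" above. The ratio of the two averages is slightly under 5, because the 100 IOPS floor adds a small constant to both.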

chrnochime - Monday, December 10, 2012

jwilliams, got a few models of SSD that you'd recommend for reliability? I have a Plextor M3 Pro 120GB and I'm thinking of buying a second one, probably 128GB, maybe 256GB. The Samsung 830 is hard to find cheap now, so I'm looking at the Samsung 840 Pro, the Plextor M5 Pro, and the new Corsair and Kingston drives released in the past 3 months.

Thanks.

jwilliams4200 - Monday, December 10, 2012

At the moment, I think the Plextor M5P is probably the highest quality consumer SSD that you can buy. It has been out for more than 4 months and I have not seen any real bugs or defects reported. I like that Plextor publicizes the details of the tests they do for qualification as well as their production tests, and they are tough tests, so you know that the shipping SSDs that have passed those tests are high quality.

Now, I am assuming that you are not going to be subjecting the SSD to sustained heavy workloads like an hour of 4KQD32 writes. The M5P can do that of course, but if that were your main type of workload, there are higher performance choices like the Samsung 840 Pro or the Corsair Neutron GTX. But the 840 Pro has not been out long enough for me to call it high quality, and the Neutron and Neutron GTX do have a few reports of failures that may (or may not) be indicative of lower quality than the Plextor.

JellyRoll - Tuesday, December 11, 2012

In steady state the 840 is ridiculously good compared to other SSDs.

chrnochime - Tuesday, December 11, 2012

Thank you for the detailed explanation! Much appreciated.

I guess I can go ahead and search for good deals on the M5P. I put reliability and durability (as in how long the drive can last) above all else. A slower drive that lasts that much longer is much preferable to me.

Kristian Vättö - Tuesday, December 11, 2012

Oh, what I meant was that Plextor's peak throughput is around 3-5x the peak throughput of other SSDs.

JellyRoll - Tuesday, December 11, 2012

The consistency testing is totally irrelevant for consumer workloads. Testing 4K full-span random writes on a consumer SSD is ridiculous. This will NEVER be seen by any user outside of an enterprise scenario. This type of testing has been done for ages with enterprise SSDs by several sites, but never for consumer SSDs, as its irrelevance is obvious.

Then mix in the fact that this is done without a filesystem (which no user could ever do, because they need this little thing called an 'operating system'), and top it off with absolutely no TRIM in play. Without a filesystem there is no TRIM. What exactly are you recreating here that is of relevance?