Plextor Updates The Firmware on M5 Pro: Promises Increased Performance, We Test It

by Kristian Vättö on December 10, 2012 2:30 PM EST

Earlier this week Plextor put out a press release about a new firmware for the M5 Pro SSD. The new 1.02 firmware is branded "Xtreme", and Plextor claims increases in both sequential write and random read performance. We originally reviewed the M5 Pro back in August and it did well in our tests, but the new firmware makes it time to revisit the drive. It's still the only consumer SSD based on Marvell's 88SS9187 controller, and it's one of the few that uses Toshiba's 19nm MLC NAND, as most manufacturers are sticking with Toshiba's 24nm MLC for now.

It's not unheard of for manufacturers to release firmware updates that improve performance after a product has already reached the market. OCZ's Vertex 4 is among the best-known examples: OCZ released not one but two firmware updates that increased performance by a healthy margin. The SSD space is no stranger to aggressive launch schedules that force products out before they're fully baked; fortunately, a lot can be fixed through firmware updates.

The new M5 Pro firmware is already available on Plextor's site. The update should not erase any data, although we still strongly suggest having an up-to-date backup before flashing the drive. I've compiled the differences between the new 1.02 firmware and older versions in the table below:

Plextor M5 Pro with Firmware 1.02 Specifications (old firmware -> 1.02 where a figure changed)

| Capacity | 128GB | 256GB | 512GB |
|---|---|---|---|
| Sequential Read | 540MB/s | 540MB/s | 540MB/s |
| Sequential Write | 340MB/s -> 330MB/s | 450MB/s -> 460MB/s | 450MB/s -> 470MB/s |
| 4KB Random Read | 91K IOPS -> 92K IOPS | 94K IOPS -> 100K IOPS | 94K IOPS -> 100K IOPS |
| 4KB Random Write | 82K IOPS | 86K IOPS | 86K IOPS -> 88K IOPS |

The 1.02 firmware doesn't bring any major performance increases. The biggest gain is a 6% boost in random read speed on the 256GB and 512GB models, and sequential write speed on the 128GB model actually drops slightly. To see whether anything else has changed, I ran the updated M5 Pro through our regular test suite. I'm only including the most relevant tests in this article, but you can find all the results in our Bench. The test system and benchmark explanations can be found in any of our SSD reviews, such as the original M5 Pro review.
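The percentage changes in the specification table can be checked with a few lines of Python. This is a minimal sketch. The values are copied from the table with the old firmware listed first, and the dictionary layout and function names are illustrative only. They are not anything Plextor publishes.

```python
# Spec-sheet values from the table: (old firmware, firmware 1.02).
# Only the entries that changed with 1.02 are listed.
SPECS = {
    ("128GB", "Sequential Write (MB/s)"): (340, 330),
    ("256GB", "Sequential Write (MB/s)"): (450, 460),
    ("512GB", "Sequential Write (MB/s)"): (450, 470),
    ("128GB", "4KB Random Read (K IOPS)"): (91, 92),
    ("256GB", "4KB Random Read (K IOPS)"): (94, 100),
    ("512GB", "4KB Random Read (K IOPS)"): (94, 100),
    ("512GB", "4KB Random Write (K IOPS)"): (86, 88),
}


def pct_change(old: float, new: float) -> float:
    """Relative change from old to new, in percent."""
    return (new - old) / old * 100.0


def report(specs):
    """Return (capacity, metric, percent change) tuples, largest gain first."""
    rows = [(cap, metric, pct_change(old, new))
            for (cap, metric), (old, new) in specs.items()]
    return sorted(rows, key=lambda row: row[2], reverse=True)


if __name__ == "__main__":
    for cap, metric, change in report(SPECS):
        print(f"{cap:>6} {metric:<28} {change:+.1f}%")
```

The top entries are the 94K to 100K IOPS random read gains (about 6.4%). The 128GB model's sequential write is the only regression (about -2.9%).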

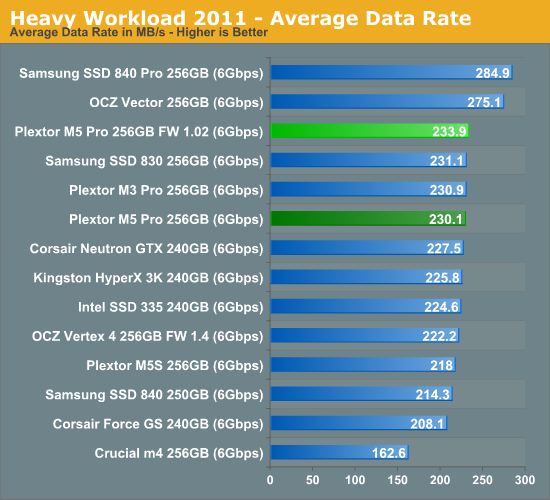

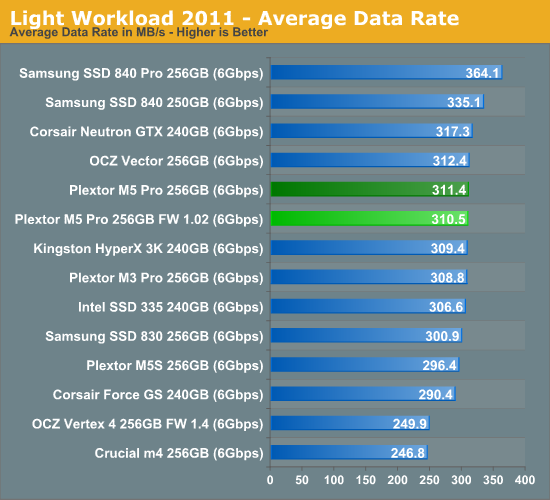

AnandTech Storage Bench

In our storage suites, the 1.02 firmware isn't noticeably faster. In our Heavy suite, the new firmware delivers 3.8MB/s (1.7%) higher throughput, but that falls within normal run-to-run variance. The same applies to the Light suite, where the new firmware is actually slightly slower.
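For reference, the two Heavy suite figures above imply an approximate baseline throughput. The 1.7% figure is rounded, so treat the result as an estimate rather than a measured value:

$$
\text{old} \approx \frac{3.8\ \text{MB/s}}{0.017} \approx 223.5\ \text{MB/s}, \qquad \text{new} \approx 223.5 + 3.8 \approx 227.3\ \text{MB/s}
$$

Allowing for rounding (anywhere from 1.65% to 1.75%), the baseline lies between roughly 217 and 230 MB/s.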


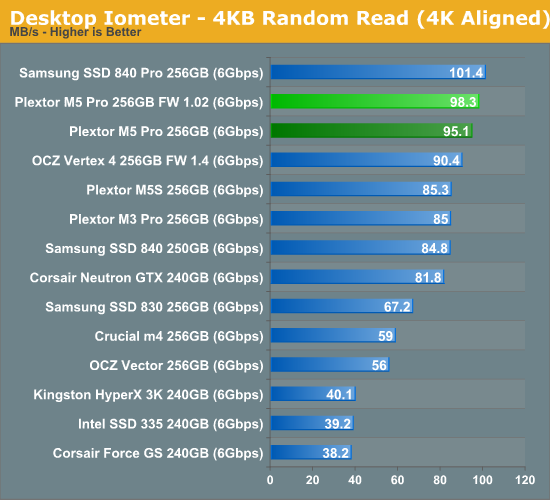

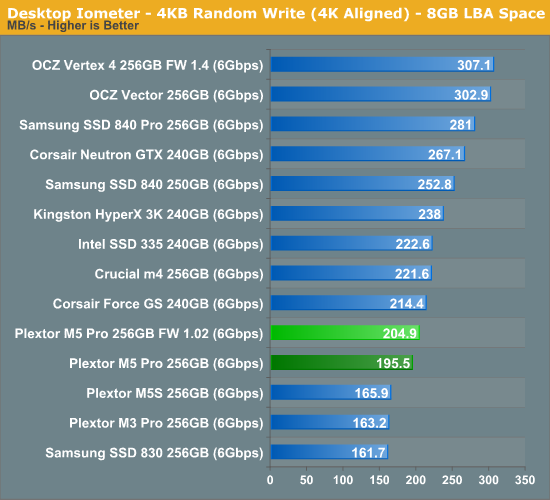

Random & Sequential Read/Write Speed

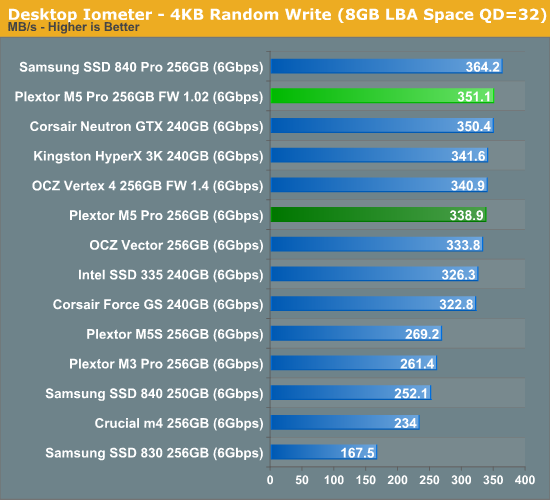

Random speeds are all up by 3-5%, though that's hardly going to affect real-world performance.

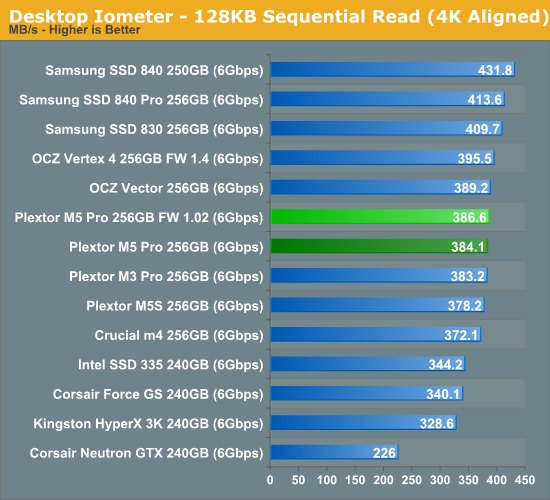

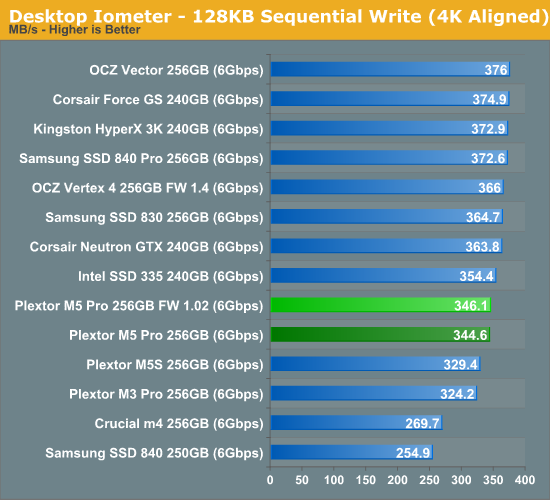

Sequential speeds are essentially unchanged, and the M5 Pro remains a mid-range performer.

46 Comments


lunadesign - Tuesday, December 11, 2012

Understood, if my server's workload were relatively heavy. But do you really think that my server's workload (based on an admittedly rough description above) is going to get into these sorts of problematic situations?

'nar - Tuesday, December 11, 2012

I disagree. RAID 5 stripes, as does RAID 0, so they need to be synchronized(hard drives had to spin in-sync.) But RAID 1 uses the drive that answers first, as they have the same data. RAID 10 is a bit of both, I suppose, but I also don't agree that the lack of TRIM forces the drive into a low-speed state in the first place.

Doesn't TRIM just tell the drive what is safe to delete? Unless the drive is near full, why would that affect its speed? TRIM was essential 2-3 years ago, but after the SandForce (SF) drives, GC got much better. I don't even think TRIM matters on consumer drives now.

For the most part, I don't think these "steady state" tests even matter on consumer drives (or on servers like lunadesign's). Sure, they are nice tests with useful data, but they lack real-world data. The name "steady state" is misleading, to me anyway. It will not be a steady state in my computer, as that is not my usage pattern. Why not test the IOPS during standard benchmark runs? Even with 8-10 VMs, his server will be idle most of the time. Of course, if all of those VMs are compiling software all day, that is different, but that's not what VMs are set up for anyway.

JellyRoll - Tuesday, December 11, 2012

GC still does not handle deleted data as efficiently as TRIM. There is still a huge need for TRIM.

We can see the effects of using this SSD for something other than its intended purpose outside of a TRIM environment. A large share of the writes return sub-par performance in this environment. The array (striped across RAID 1) will suffer low performance, constrained to the speed of the slowest I/O.

There are SSDs designed for this type of use specifically, hence why they have the distinction between enterprise and consumer storage.
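The disagreement over TRIM comes down to how much data garbage collection must copy. Below is a deliberately simplified Python model, not how Plextor's or any other controller actually schedules GC. It assumes the drive reclaims one block at a time and must relocate every page it still believes is valid. Without TRIM, pages that belong to deleted files still count as valid. The function name and page counts are hypothetical.

```python
def gc_cost(pages_per_block: int, live: int, deleted: int, trimmed: bool) -> dict:
    """Toy model of reclaiming one flash block by garbage collection.

    live:    pages holding data the filesystem still uses
    deleted: pages holding data from files the user already deleted
    trimmed: whether TRIM told the drive about the deleted pages
    Any remaining pages are assumed to be already invalid (overwritten).
    """
    if live + deleted > pages_per_block:
        raise ValueError("more pages than the block holds")
    # Without TRIM the controller cannot tell deleted data from live data,
    # so it has to copy both before erasing the block.
    believed_valid = live if trimmed else live + deleted
    freed = pages_per_block - believed_valid
    if freed == 0:
        raise ValueError("block has no reclaimable pages")
    # Each freed page can absorb one host write; copied pages are extra NAND writes.
    write_amp = (freed + believed_valid) / freed
    return {"copied": believed_valid, "freed": freed, "write_amplification": write_amp}


if __name__ == "__main__":
    # Hypothetical block: 256 pages, 64 live, 128 from deleted files, 64 already invalid.
    for trimmed in (True, False):
        print("TRIM" if trimmed else "no TRIM", gc_cost(256, 64, 128, trimmed))
```

With these numbers the trimmed case copies 64 pages (write amplification of about 1.33), and the untrimmed case copies 192 pages (write amplification of 4.0). In this model the penalty depends on how much deleted but untrimmed data a block holds, not only on how full the drive is. Real controllers can soften the effect with spare area and background GC.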

cdillon - Tuesday, December 11, 2012

Re: 'nar "RAID 5 stripes, as does RAID 0, so they need to be synchronized(hard drives had to spin in-sync.)"

Only RAID 3 required spindle-synced drives for performance reasons. No other RAID level requires that. Not only is spindle-sync completely irrelevant for SSDs, hard drives haven't been made with spindle-sync support for a very long time. Any "synchronization" in a modern RAID array has to do with the data being committed to stable storage. A full RAID 4/5/6 stripe should be written and acknowledged by the drives before the next stripe is written to prevent the data and parity blocks from getting out of sync. This is NOT a consideration for RAID 0 because there is no "stripe consistency" to be had due to the lack of a parity block.
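The point about stripe consistency follows from how parity is computed. RAID 4 and RAID 5 parity is the byte-wise XOR of the data blocks in a stripe. RAID 6 adds a second, differently computed syndrome, which this sketch does not cover. The minimal Python sketch below uses made-up two-byte blocks and hypothetical helper names. It shows that a lost block can be rebuilt from a consistent stripe, but not after a torn write updates a data block without its parity.

```python
from functools import reduce


def xor_blocks(*blocks: bytes) -> bytes:
    """Byte-wise XOR of equal-length blocks (RAID 4/5-style parity)."""
    if len({len(b) for b in blocks}) != 1:
        raise ValueError("blocks must be non-empty and of equal length")
    # zip(*blocks) yields one tuple of byte values per column of the stripe.
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def rebuild(surviving: list[bytes], parity: bytes) -> bytes:
    """Recover a single lost data block from the surviving blocks and the parity."""
    return xor_blocks(parity, *surviving)


if __name__ == "__main__":
    d0, d1, d2 = b"\x01\x02", b"\x10\x20", b"\xaa\xbb"
    parity = xor_blocks(d0, d1, d2)
    # Consistent stripe: losing d1 is recoverable.
    assert rebuild([d0, d2], parity) == d1
    # Torn write: d0 was updated on disk but the matching parity update never landed.
    d0_new = b"\xff\xff"
    print(rebuild([d0_new, d2], parity) == d1)  # False: stale parity rebuilds garbage
```

RAID 0 has no parity block, so there is nothing to fall out of step. That is why, as noted above, ordering stripe writes only matters for the parity levels.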

Re: JellyRoll "The RAID is only as fast as the slowest member"

It is not quite so simple in most cases. It is only that simple for a single mirror set (RAID 1) performing writes. When you start talking about other RAID types, the effect of a single slow drive depends greatly on both the RAID setup and the workload. For example, high-QD small-block random read workloads would be the least affected by a slow drive in an array, regardless of the RAID type. In that case you should achieve random I/O performance that approaches the sum of all non-dedicated-parity drives in the array.
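The distinction between mirrored writes and high-queue-depth random reads can be captured in a crude model. This Python sketch makes two assumptions. First, a mirrored write completes only when every member acknowledges it. Second, random reads spread evenly enough to use every drive that holds data. The `Member` class and its latency and IOPS figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Member:
    name: str
    write_latency_ms: float
    read_iops: float
    dedicated_parity: bool = False  # True only for a RAID 4-style parity disk


def mirror_write_latency_ms(members: list[Member]) -> float:
    """RAID 1: a write completes only when the slowest member acknowledges it."""
    return max(m.write_latency_ms for m in members)


def random_read_iops_ceiling(members: list[Member]) -> float:
    """High-QD small random reads: upper bound is the sum of drives that serve reads.

    Drives holding only dedicated parity (RAID 4) are excluded; in RAID 1/5/10
    every member holds data and can serve reads.
    """
    return sum(m.read_iops for m in members if not m.dedicated_parity)


if __name__ == "__main__":
    fast = Member("ssd0", write_latency_ms=0.05, read_iops=90_000)
    slow = Member("ssd1", write_latency_ms=2.0, read_iops=60_000)  # hypothetical struggling drive
    print(mirror_write_latency_ms([fast, slow]))   # writes wait for the slow member
    print(random_read_iops_ceiling([fast, slow]))  # reads can still draw on both members
```

The slow member sets the write latency for the whole mirror, while the read ceiling still benefits from both drives. Real arrays fall short of that ceiling, and a slow member still delays the individual reads routed to it.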

JellyRoll - Tuesday, December 11, 2012

I agree, but I was speaking specifically to writes.

bogdan_kr - Monday, March 4, 2013

The 1.03 firmware has been released for the Plextor M5 Pro series. Is there a chance of a performance consistency check for this new firmware?