The ARM vs x86 Wars Have Begun: In-Depth Power Analysis of Atom, Krait & Cortex A15

by Anand Lal Shimpi on January 4, 2013 7:32 AM EST

Posted in: Tablets, Intel, Samsung, Arm, Cortex A15, Smartphones, Mobile, SoCs

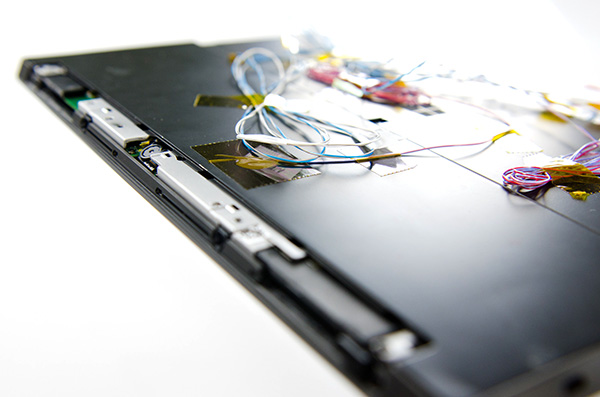

Late last month, Intel dropped by my office with a power engineer for a rare demonstration of its competitive position versus NVIDIA's Tegra 3 when it came to power consumption. Like most companies in the mobile space, Intel doesn't just rely on device level power testing to determine battery life. To ensure that their CPU, GPU, memory controller and even NAND are all as power efficient as possible, most companies measure power consumption directly on a tablet or smartphone motherboard.

The process would be a piece of cake if you had measurement points already prepared on the board, but in most cases Intel (and its competitors) are taking apart a retail device and hunting for a way to measure CPU or GPU power. I described how it's done in the original article:

Measuring power at the battery gives you an idea of total platform power consumption including display, SoC, memory, network stack and everything else on the motherboard. This approach is useful for understanding how long a device will last on a single charge, but if you're a component vendor you typically care a little more about the specific power consumption of your competitors' components.

What follows is a good mixture of art and science. Intel's power engineers will take apart a competing device and probe whatever looks to be a power delivery or filtering circuit while running various workloads on the device itself. By correlating the type of workload to spikes in voltage in these circuits, you can figure out what components on a smartphone or tablet motherboard are likely responsible for delivering power to individual blocks of an SoC. Despite the high level of integration in modern mobile SoCs, the major players on the chip (e.g. CPU and GPU) tend to operate on their own independent voltage planes.
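The correlation step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Intel's actual tooling: the probe names (`filter_A`, `filter_B`), the sample values and the helper `rank_probe_points` are all hypothetical. The idea is to log a 0/1 "workload running" flag alongside the voltage seen at each candidate circuit, then rank the candidates by how closely their trace follows the workload.

```python
from statistics import mean, pstdev


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with at least 2 samples")
    mx, my = mean(xs), mean(ys)
    sx, sy = pstdev(xs), pstdev(ys)
    if sx == 0 or sy == 0:
        return 0.0  # a flat trace tells us nothing about the workload
    cov = mean((x - mx) * (y - my) for x, y in zip(xs, ys))
    return cov / (sx * sy)


def rank_probe_points(workload_active, probe_traces):
    """Sort candidate probe points by how strongly they track the workload."""
    scores = {name: pearson(workload_active, trace)
              for name, trace in probe_traces.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical data: 1 = CPU-heavy workload running, 0 = idle.
cpu_workload = [0, 0, 1, 1, 0, 0, 1, 1]
probes = {
    "filter_A": [0.10, 0.11, 0.52, 0.50, 0.10, 0.12, 0.51, 0.49],
    "filter_B": [0.30, 0.31, 0.29, 0.30, 0.31, 0.30, 0.29, 0.30],
}
ranking = rank_probe_points(cpu_workload, probes)
print(ranking[0][0])  # the candidate that best follows the CPU workload
```

Repeating the same ranking with a GPU-heavy workload would, under the same assumptions, point at a different circuit, which is how separate voltage planes can be told apart.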

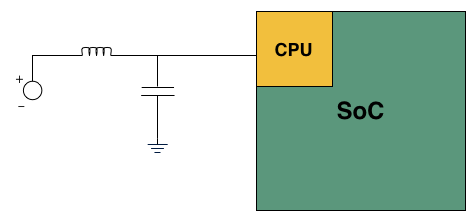

A basic LC filter

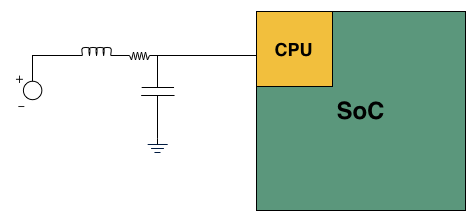

What usually happens is you'll find a standard LC filter (inductor + capacitor) supplying power to a block on the SoC. Once the right LC filter has been identified, all you need to do is lift the inductor, insert a very small resistor (2 - 20 mΩ) and measure the voltage drop across the resistor. With voltage and resistance values known, you can determine current and power. Using good external instruments (NI USB-6289) you can plot power over time and now get a good idea of the power consumption of individual IP blocks within an SoC.

Basic LC filter modified with an inline resistor
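The arithmetic behind the inline resistor measurement is Ohm's law: current is the voltage drop divided by the resistance, and power is that current multiplied by the rail voltage. A minimal Python sketch follows. The 10 mΩ resistor value, the sample readings, the 1 ms sample period and the function names are assumptions chosen for illustration (the article only gives the 2 - 20 mΩ range), and the sketch assumes the rail voltage is logged alongside each voltage drop reading.

```python
def rail_power_watts(v_drop_volts, shunt_ohms, rail_volts):
    """Power through the inline resistor: I = V_drop / R, P = V_rail * I."""
    if shunt_ohms <= 0:
        raise ValueError("shunt resistance must be positive")
    current_amps = v_drop_volts / shunt_ohms
    return rail_volts * current_amps


def summarize_trace(samples, shunt_ohms, sample_period_s):
    """Return (average power in W, energy in J) for a list of
    (v_drop_volts, rail_volts) samples taken at a fixed interval."""
    if not samples:
        raise ValueError("need at least one sample")
    powers = [rail_power_watts(vd, shunt_ohms, vr) for vd, vr in samples]
    avg_power = sum(powers) / len(powers)
    energy_joules = sum(p * sample_period_s for p in powers)
    return avg_power, energy_joules


# Hypothetical readings: 10 mOhm resistor, rail near 1.0 V, sampled every 1 ms.
SHUNT_OHMS = 0.010
trace = [(0.002, 1.00), (0.005, 1.00), (0.008, 0.98), (0.005, 1.00)]
avg_w, energy_j = summarize_trace(trace, SHUNT_OHMS, sample_period_s=0.001)
print(f"average power: {avg_w:.3f} W, energy: {energy_j * 1000:.3f} mJ")
```

Keeping the resistor in the milliohm range matters because the drop across it is subtracted from the voltage the SoC block sees; at 10 mΩ and 0.5 A that loss is only 5 mV.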

The previous article focused on an admittedly not too interesting comparison: Intel's Atom Z2760 (Clover Trail) versus NVIDIA's Tegra 3. After much pleading, Intel returned with two more tablets: a Dell XPS 10 using Qualcomm's APQ8060A SoC (dual-core 28nm Krait) and a Nexus 10 using Samsung's Exynos 5 Dual (dual-core 32nm Cortex A15). What was a walk in the park for Atom all of a sudden became much more challenging. Both of these SoCs are built on very modern, low power manufacturing processes and Intel no longer has a performance advantage compared to Exynos 5.

Just like last time, I ensured all displays were calibrated to our usual 200 nits setting and ensured the software and configurations were as close to equal as possible. Both tablets were purchased at retail by Intel, but I verified their performance against our own samples/data and noticed no meaningful deviation. Since I don't have a Dell XPS 10 of my own, I compared performance to the Samsung ATIV Tab and confirmed that things were at least performing as they should.

We'll start with the Qualcomm based Dell XPS 10...

140 Comments

extide - Friday, January 4, 2013

When will you post an article about Bay Trail / Valley View?? Usually you guys are pretty fast to post stuff about topics like this yet I have seen some info on other sites already...

jpcy - Friday, January 4, 2013

...which I bet CISC users thought had ended about 18 years ago...

It's good to see a resurgence of this highly useful, extremely low-power and very hardy British CPU platform.

I remember back in the day when the ARMs used in the Acorn computers (possibly too long ago for most to remember now; I still have an A7000 and a RISC PC with both a StrongARM and a DX2-66, lol) were at war with Intel's Pentium CPU range and AMD's K6s, boasting an almost 1:1 ratio of MIPS:MHz. Horsepower for your money (something Intel and AMD were severely lacking in, if I remember correctly).

And now, well, who'd've thought it... These ARM CPUs are now in nearly everything we use... Phones, smartphones, tablets, notebooks...

Suppose I was right in the argument with my mate in school after all... RISC, the superior technology (IMHO), may well take over yet!

nofumble62 - Friday, January 4, 2013

No performance advantage, no battery life advantage. Why would anyone bother with incompatible software?

sseemaku - Friday, January 4, 2013

Looks like people have changed religion from AMD to ARM. That's what I see from some comments.

mugiebahar - Saturday, January 5, 2013

Yeah n no. They wanted a no paid opinions to screw with the outcome. But Intel hype won over real life.

Intel better and will get better - yes

Any chance they will compete (performance and PRICE) and legacy. Support to phone apps - Never in the near future which is the only time for them.

tuxRoller - Saturday, January 5, 2013

Also, any chance for an actual performance comparison between the platforms?

Apple's performance and power use look awesome. Better than I had imagined.

I'd love to see how they compare on the same tests, however.

Kogies - Saturday, January 5, 2013

It appears the war has begun, well two wars in fact. The one you have articulately described, and the oft ensuing war-of-words...

Thanks Anand, I appreciate the analysis you have given. It is excellent to get to see the level of granularity you have been able to achieve with your balance of art and science, and knowing where to hook into! I am very interested to see how the L2 cache power draw affects the comparison, just a little jitter in my mind. If nothing else, it looks as if the delicate balance of process tech. and desired performance/power may have a greater bearing on this "war" than mere ISA.

With Krait 300, Haswell, and more A15's this is going to be a tremendous year. Keep up the good work.

Torrijos - Saturday, January 5, 2013

Any chance we could see the same tests run on the latest Apple iPad?

That way we could have a chance to see what Apple tried to improve compared to the A15 generation.

urielshun - Saturday, January 5, 2013

The whole discussion about ARM and x86 is not important when you go for the economics of each platform. ARM is dirt cheap and works well. It's 1/10th of the price of any current Atom with decent performance (talking about RK3066).

Don't underestimate the Chinese; they are having a field day with ARM's pricing model and have shown amazing chips.

In 8 years from now all SoCs will have reached usable performance and the only thing that will matter will be power and cost of integration.

iwod - Saturday, January 5, 2013

Where are you getting 1/10 of the price from? Unless they are produced on a good old 40nm LP node with nothing else, or crap included, there just aren't any Chinese SoCs selling for $4.