The Tegra 4 GPU, NVIDIA Claims Better Performance Than iPad 4

by Anand Lal Shimpi on January 14, 2013 6:13 PM EST

At CES last week, NVIDIA announced its Tegra 4 SoC featuring four ARM Cortex A15s running at up to 1.9GHz and a fifth Cortex A15 running at between 700 and 800MHz for lighter workloads. Although much of CEO Jen-Hsun Huang's presentation focused on the improvements in CPU and camera performance, GPU performance should see a significant boost over Tegra 3.

The big disappointment for many was that NVIDIA maintained the non-unified architecture of Tegra 3, and won't fully support OpenGL ES 3.0 with the T4's GPU. NVIDIA claims the architecture is better suited for the type of content that will be available on devices during the Tegra 4's reign.

Despite the similarities to Tegra 3, components of the Tegra 4 GPU have been improved. While we're still a bit away from a good GPU deep-dive on the architecture, we do have more details than were originally announced at the press event.

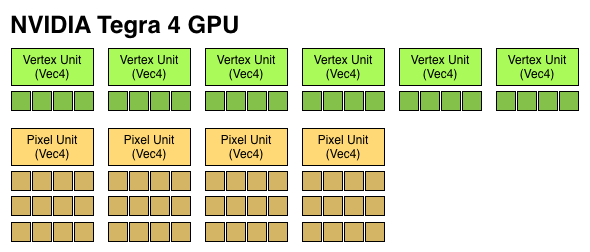

Tegra 4 features 72 GPU "cores", which are really individual components of Vec4 ALUs that can work on both scalar and vector operations. Tegra 2 featured a single Vec4 vertex shader unit (4 cores), and a single Vec4 pixel shader unit (4 cores). Tegra 3 doubled up on the pixel shader units (4 + 8 cores). Tegra 4 features six Vec4 vertex units (FP32, 24 cores) and four 3-deep Vec4 pixel units (FP20, 48 cores). The result is 6x as many ALUs as Tegra 3, all running at a max clock speed higher than the 520MHz at which NVIDIA ran the T3 GPU. NVIDIA did hint that the pixel shader design was somehow more efficient than what was used in Tegra 3.
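
The core counts above are simple lane arithmetic, which can be sketched in a few lines of Python. The function and parameter names here are illustrative rather than NVIDIA terminology, and the sketch assumes each "3-deep" pixel unit contributes three Vec4 ALUs, which is what the 48-core figure implies.

```python
# Lane arithmetic behind NVIDIA's "core" counts, as described in the article.
# Names are hypothetical; "pixel_depth" models the "3-deep" pixel units.
LANES_PER_VEC4 = 4


def core_count(vertex_units, pixel_units, pixel_depth=1):
    """Return (vertex_cores, pixel_cores, total_cores) for a non-unified GPU."""
    vertex = vertex_units * LANES_PER_VEC4
    pixel = pixel_units * pixel_depth * LANES_PER_VEC4
    return vertex, pixel, vertex + pixel


tegra2 = core_count(vertex_units=1, pixel_units=1)
tegra3 = core_count(vertex_units=1, pixel_units=2)
tegra4 = core_count(vertex_units=6, pixel_units=4, pixel_depth=3)

print(tegra2)  # (4, 4, 8)
print(tegra3)  # (4, 8, 12)
print(tegra4)  # (24, 48, 72)
print(tegra4[2] // tegra3[2])  # 6, the ALU ratio of Tegra 4 to Tegra 3
```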

If we assume a 520MHz max frequency (where Tegra 3 topped out), a fully featured Tegra 4 GPU can offer more theoretical compute than the PowerVR SGX 554MP4 in Apple's A6X. The advantage comes from a higher clock speed rather than a larger die area. This won't necessarily translate into better performance, particularly given Tegra 4's non-unified architecture. NVIDIA claims that at final clocks, Tegra 4 will be faster than the A6X both in 3D games and in GLBenchmark. The leaked GLBenchmark results are apparently from a much older silicon revision running nowhere near final GPU clocks.

Mobile SoC GPU Comparison

| | GeForce ULP (2012) | PowerVR SGX 543MP2 | PowerVR SGX 543MP4 | PowerVR SGX 544MP3 | PowerVR SGX 554MP4 | GeForce ULP (2013) |
|---|---|---|---|---|---|---|
| Used In | Tegra 3 | A5 | A5X | Exynos 5 Octa | A6X | Tegra 4 |
| SIMD Name | core | USSE2 | USSE2 | USSE2 | USSE2 | core |
| # of SIMDs | 3 | 8 | 16 | 12 | 32 | 18 |
| MADs per SIMD | 4 | 4 | 4 | 4 | 4 | 4 |
| Total MADs | 12 | 32 | 64 | 48 | 128 | 72 |
| GFLOPS @ Shipping Frequency | 12.4 GFLOPS | 16.0 GFLOPS | 32.0 GFLOPS | 51.1 GFLOPS | 71.6 GFLOPS | 74.8 GFLOPS |
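
The GFLOPS row can be reproduced with a rough sketch, assuming the usual convention that one MAD counts as two floating-point operations (a multiply and an add). The 520MHz clock for the two GeForce ULP parts is the article's assumption. The clocks for the PowerVR parts are not stated in the article, so the sketch back-computes them from the table rather than asserting them. The helper names are illustrative.

```python
# Peak-throughput arithmetic for the comparison table.
# Assumption: 1 MAD = 2 FLOPs (multiply + add), the usual counting convention.
FLOPS_PER_MAD = 2


def peak_gflops(total_mads, clock_mhz):
    """Theoretical peak GFLOPS for a given MAD count and clock in MHz."""
    return total_mads * FLOPS_PER_MAD * clock_mhz / 1000.0


def implied_clock_mhz(total_mads, gflops):
    """Clock in MHz implied by a table entry (back-computed, not a quoted spec)."""
    return gflops * 1000.0 / (total_mads * FLOPS_PER_MAD)


# (total MADs, GFLOPS) pairs copied from the table.
table = {
    "Tegra 3": (12, 12.4),
    "A5": (32, 16.0),
    "A5X": (64, 32.0),
    "Exynos 5 Octa": (48, 51.1),
    "A6X": (128, 71.6),
    "Tegra 4": (72, 74.8),
}

# Tegra 4 at the assumed 520MHz; the table appears to truncate this to 74.8.
print(peak_gflops(72, 520))

for soc, (mads, gflops) in table.items():
    print(f"{soc}: ~{implied_clock_mhz(mads, gflops):.0f} MHz implied")
```

Read this way, the Tegra 4 figure only edges past the A6X because of the assumed 520MHz clock: the A6X has nearly twice as many MADs but appears to run them at roughly half the frequency.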

Tegra 4 does offer some additional enhancements over Tegra 3 in the GPU department. Real multisampling AA is finally supported as well as frame buffer compression (color and z). There's now support for 24-bit z and stencil (up from 16 bits per pixel). Max texture resolution is now 4K x 4K, up from 2K x 2K in Tegra 3. Percentage-closer filtering is supported for shadows. Finally, FP16 filter and blend is supported in hardware. ASTC isn't supported.

If you're missing details on Tegra 4's CPU, be sure to check out our initial coverage.

59 Comments

B3an - Monday, January 14, 2013 - link

GFLOPs are not everything though. The PS Vita is capable of coming reasonably close to PS3 graphics and that's with ARM A9 and SGX543MP4+. The Tegra 4 should pretty much be capable of 360/PS3 level graphics, to the point where most people wouldn't notice a difference anyway. It will also have more RAM to work with, but i'm guessing with less bandwidth.

Jamezrp - Monday, January 14, 2013 - link

Also worth noting that during the event they made very little noise to say it was faster than the iPad. They didn't say it wasn't, but barely made any mention of it. Considering Apple's phone and tablet are already out and Tegra 4 will be on a few dozen devices this year, you'd think that would be a major highlight to point out.

As always with Tegra I assume that when they say it's more powerful, they really mean that if developers target their apps for Tegra then they can have better performance (potentially), not for general programming. Of course, considering my knowledge of programming, I don't know exactly what that means...

Krysto - Monday, January 14, 2013 - link

Rogue can go pretty high - for a cost in power consumption. So just because it can reach a certain level, doesn't mean you'll actually see that in the first GPU models coming out this year. We'll see what Apple uses this spring, but I doubt they'll use anything more than twice as powerful as A6x, after only ~6 months. It might even be only 50% faster.

powerarmour - Monday, January 14, 2013 - link

Well, I'd be more surprised if it wasn't tbh!

hmaarrfk - Monday, January 14, 2013 - link

Yea seriously. If Apple wasn't as secretive as they are, they would have released their demo of A6X last year around this time.....

Mumrik - Tuesday, January 15, 2013 - link

It would be really really weird if nV wasn't able to claim better performance than the iPad 4 at this point.

KoolAidMan1 - Tuesday, January 15, 2013 - link

It would need to be well over a doubling in performance to get up to iPad 4 levels of performance.

We'll see how things are with independent benchmarks. It is bizarre that a company known for its GPU has been playing catchup with PowerVR and Exynos for so long now.

BugblatterIII - Monday, January 14, 2013 - link

The iPad 4 with A6X will have been out for quite some time by the time Tegra 4 devices are available. It'd be nice if Nvidia could come up with something that would more than just barely beat the A6X given a perfectly-balanced workload.

I'm firmly in the Android camp but the (comparatively) lacklustre GPUs we get landed with are a big source of annoyance.

menting - Monday, January 14, 2013 - link

i'm all for faster GPU speeds, but not if the tradeoff is battery life.

People that play stressful 3D games are still in the minority.

quiksilvr - Monday, January 14, 2013 - link

Android chips (generally) have faster CPUs but slower GPUs when compared to the Apple chips.

So in other words, despite iOS looking smoother (and running games more smoothly), load times and execution is generally faster in Android.