A Month with Apple's Fusion Drive

by Anand Lal Shimpi on January 18, 2013 9:30 AM EST

Posted in: Storage, Mac, SSDs, Apple, SSD Caching, Fusion Drive

When decent, somewhat affordable, client-focused solid state drives first came on the scene in 2008, the technology was magical. I called the original X25-M the best upgrade you could do for your system (admittedly with the caveat that I’d like to see more than 100GB of capacity and a better price than $600). Although NAND and SSD pricing have both matured handsomely over time, mechanical storage is still an order of magnitude cheaper per gigabyte.

The solution I’ve always advocated is a manual combination of SSD and HDD technologies. Buy a big enough SSD to house your OS, applications and maybe even a game or two, and put everything else on a RAID-1 array of hard drives. This approach works quite well in a desktop, but you have to be OK with manually managing where your files go.

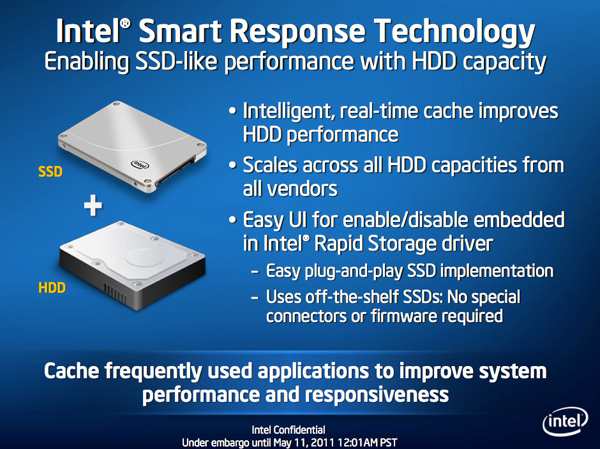

I was always curious about how OEMs would handle this problem, since educating the masses on having to only put large, infrequently used files on one drive with everything else on another didn’t seem like a good idea. With its 6-series chipsets Intel introduced its Smart Response Technology, along with a special 20GB SLC SSD designed to act as a cache for a single hard drive or a bunch in an array.

Since then we’ve seen other SSD caching solutions come forward that didn’t have Intel’s chipset requirements. However, most of these solutions were paired with really cheap, really small and really bad mSATA SSDs. More recently, OEMs have been partnering with SSD caching vendors to barely meet the minimum requirements for Ultrabook certification. In general, the experience is pretty bad.

Hard drive makers are working on the same problem, but are trying to fix it by adding a (very) small amount of NAND onto their mechanical drives. Once again this usually results in a faster hard drive experience, rather than an approximation of the SSD experience.

This is the typical way to hide latency as you move down the memory hierarchy: toss a small amount of faster memory between two levels and call it a day. Unlike adding a level 3 cache to a CPU, however, NAND storage devices already exist in sizes large enough to house all of your data. It’s the equivalent of having to stick with an 8MB L3 cache when for a few hundred dollars more you could have 16GB. Once you’ve tasted the latter, the former seems like a pointless compromise.

Apple was among the first OEMs to realize the futility of the tradeoff. All of its mainstream mobile devices are NAND-only (iPhone, iPad and MacBook Air). More recently, Apple started migrating even its professional notebooks over to an SSD-only setup (MacBook Pro with Retina Display). Apple does have the luxury of not competing at lower price points for its Macs, which definitely makes dropping hard drives an easier thing to accomplish. Even so, out of the 6 distinct Macs that Apple ships today (MBA, rMBP, MBP, Mac mini, iMac and Mac Pro), only two of them ship without any hard drive option by default. The rest come with good old-fashioned mechanical storage.

Moving something like the iMac to a solid state configuration is a bit tougher to pull off. While notebook users (especially anyone using an ultraportable) are already used to not having multiple terabytes of storage at their disposal, someone replacing a desktop isn’t necessarily well suited for the same.

Apple’s solution to the problem is, at a high level, no different from what PC OEMs have tried with hybrid SSD/HDD solutions in the past. The difference is in the size of the SSD component and in the software layer.

127 Comments


BrooksT - Friday, January 18, 2013 - link

Excellent point and insight.

I'm 40+ years old; I still know x86 assembly language and use Ethernet and IP protocol analyzers frequently. I'm fluent in god-knows how many programming languages and build my own desktops. I know perfectly well how to manage storage.

But why would I *want* to? I have a demanding day job in the technology field. I have a couple of hobbies outside of computers and am just generally very, very busy. If I can pay Apple (or anyone) a few hundred bucks to get 90% of the benefit I'd see from spending several hours a year doing this... why in the world would I want to do it myself?

The intersection of people who have the technical knowledge to manage their own SSD/HD setup, people who have the time to do it, and people who have the interest in doing it is *incredibly* tiny. Probably every single one of them is in this thread :)

Death666Angel - Friday, January 18, 2013 - link

I wonder how you organize stuff right now? Even before I had more than one HDD I still had multiple partitions (one for system and one for media at the time), so that I could reinstall Windows without having my media touched. And that media partition was segregated into photos, music, movies, documents etc. That is how I organize my files and know where what is located.

I don't see any change to my behaviour with an SSD functioning as my system partition and the HDDs functioning as media partitions.

Do people just put everything on the desktop? How do you find anything? I just don't understand this at all.

KitsuneKnight - Friday, January 18, 2013 - link

Do you not have any type of file that's large, numerous, and demands high performance?

I regularly work with Virtual Machines, with each of them usually being around 10 GB (some being as small as 2, with the largest closer to 60). I have far too many to fit on my machine's SSD, but they're also /far/ faster when run from it.

So what do I have to do? I have to break my nice, clean hierarchy. I have a folder both on my SSD and on my eSATA RAID for them. The ones I'm actively working with the most I keep on the SSD, and the ones I'm not actively using on the HDD. Which means I also have to regularly move the VMs between the disks. This is /far/ from an ideal situation. It means I never know /exactly/ where any given VM is at any given moment.

On the other hand, it sounds like a Fusion Drive setup could handle the situation far better. If I hadn't worked with a VM in a while, there would be an initial slowdown, but eventually the most used parts would be promoted to the SSD (how fast depends on implementation details), resulting in very fast access. Also, since it isn't on a per-file level, the parts of the VM's drive that are rarely/never accessed won't be wasting space on the SSD... potentially allowing me to store more VMs on the SSD at any given moment, resulting in better performance.

So I have potentially better performance overall (either way, I doubt it's too far from a manual setup), zero maintenance overhead of shuffling files around, and not having to destroy my clean hierarchy (symlinks would mean more work for me and potentially more confusion).

VMs aren't the only thing I've done this way. Some apps I virtually never use I've moved over (breaking that hierarchy). I might have to start doing this with more things in the future.

Let me ask you this: Why do you think you'd do a better job managing the data than a computer? It should have no trouble figuring out what files are rarely accessed, and what are constantly accessed... and can move them around easier than you (do you plan on symlinking individual files? what about chunks of files?).
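As a rough illustration of the block-level promotion described in the comment above, here is a minimal Python sketch. Everything in it is hypothetical: the `ToyTieredVolume` class, its simple access-count policy, and the block ids are assumptions made for illustration, and none of it reflects how Fusion Drive is actually implemented.

```python
from collections import Counter


class ToyTieredVolume:
    """Toy two-tier volume: a small fast tier (SSD) in front of a large slow tier (HDD).

    Hypothetical illustration only; not Apple's algorithm.
    """

    def __init__(self, ssd_capacity_blocks):
        self.ssd_capacity = ssd_capacity_blocks
        self.ssd = set()        # block ids currently held on the fast tier
        self.hits = Counter()   # access count per block id

    def read(self, block):
        """Record an access and return the tier that served it."""
        served_from = "ssd" if block in self.ssd else "hdd"
        self.hits[block] += 1
        self._maybe_promote(block)
        return served_from

    def _maybe_promote(self, block):
        if block in self.ssd:
            return
        if len(self.ssd) < self.ssd_capacity:
            # Free space on the fast tier: promote right away.
            self.ssd.add(block)
            return
        # Fast tier is full: evict the coldest resident block,
        # but only if the candidate has been accessed more often.
        coldest = min(self.ssd, key=lambda b: self.hits[b])
        if self.hits[block] > self.hits[coldest]:
            self.ssd.remove(coldest)
            self.ssd.add(block)


vol = ToyTieredVolume(ssd_capacity_blocks=2)
for block in ["a", "a", "b", "c", "c", "c"]:
    print(block, vol.read(block))
```

Because the bookkeeping is per block rather than per file, a large VM image would only occupy fast-tier space for the blocks that are actually touched often, which is the advantage the comment points to over moving whole files by hand.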

Death666Angel - Friday, January 18, 2013 - link

Since I don't use my computer for any work, I don't have large files I need frequent access to.

How many of those VMs do you have? How big is your current SSD?

Adding the Fusion Drive option costs 250 to 400 USD, which is enough for another 250 to 500GB SSD. Would that be enough for all your data?

If you are doing serious work on the PC, I don't understand why you can't justify buying a bigger SSD. It's a business expense, so it's not as expensive as it is for consumers, and the time you save will mean better productivity.

The negatives of this setup in my opinion:

I don't know which physical location my files have, so I cannot easily upgrade one of the drives. I also don't know what happens if one of the drives fails. Do I need to replace both and lose all the data? It introduces more complexity to the system, which is never good.

Performance may be up for some situations, but it will obviously never rival real SSD speeds. And as Anand showed in this little test, some precious SSD space was wasted on video files. There will be inefficiencies. Though they might get better over time. But then again, so will SSD pricing.

As for your last point: Many OSes still don't use their RAM very well, so I'm not so sure I want to trust them with my SSD space. I do envision a future where there will be 32 to 256GB of high speed NAND on mainboards which will be addressed in a similar fashion to RAM and then people add SSDs/HDDs on top of that.

KitsuneKnight - Friday, January 18, 2013 - link

Currently, 10 VMs, totaling approximately 130 GB. My SSD is only 128 GB. Even if I'd sprung for a 500 GB model (which would have cost closer to $1,000 at the time), I'd have still needed a second HDD to store all my data, most of which would work fine on a traditional rust bucket, as they're not bound by the disk's transfer speed (they're bound by humans... i.e. the playback speed of music/video files).

Also, for any data stored on the SSD by the fusion drive, it wouldn't just "rival" SSD speeds, it would /be/ SSD speeds.

I'm also not sure what your comment about RAM is about... Operating Systems do a very good job managing RAM, trying to keep as much of it occupied with something as possible (which includes caching files). There are extreme cases where it's less than ideal, but if you think it'd be a net-win for memory to be manually managed by the user, you're nuts.

If one of the drives fails, you'd just replace that, and then restore from a backup (which should be pretty trivial for any machine running OS X, thanks to Time Machine's automatic backups)... the same as if a RAID 0 array failed. Same if you want to upgrade one of the drives.

Death666Angel - Friday, January 18, 2013 - link

Oh and btw.: I think this is still a far better product than any Windows SSD caching I've seen. And if you can use it like the 2 people who made the first comments, great. But getting it directly from Apple makes it less appealing with the current options.

EnzoFX - Saturday, January 19, 2013 - link

This. No one should want to do this manually. Everyone will have their own thresholds, but that's beside the point.

robinthakur - Sunday, January 20, 2013 - link

Lol exactly! When I was a student and had loads of free time, I built my own PCs and overclocked them (Celeron 300A FTW!) but over the years, I really don't have the time anymore to tinker constantly and find myself using Macs increasingly now, booting into Windows whenever I need to use Visual Studio. Yes they are more expensive, but they are very nicely designed and powerful (assuming money is no limiter).

mavere - Friday, January 18, 2013 - link

"The proportion of people who can handle manually segregating their files is much, much smaller than most of us realize"

I agreed with your post, but it always astounds me that commenters in articles like these need occasional reminders that the real world exists, and no, people don't care about obsessive, esoteric ways to deal with technological minutiae.

WaltFrench - Friday, January 18, 2013 - link

Anybody else getting a bit of déjà vu? I recently saw a rehash of the compiler-vs-assembly (or perhaps, trick-playing to work around compiler-optimization bugs); the early comment was K&P, 1976.

Yes, anybody who knows what they're doing, and is willing to spend the time, can hand-tune a machine/storage system better than a general-purpose algorithm. *I* have the combo SSD + spinner approach in my laptop, but would have saved myself MANY hours of fussing and frustration had a good Fusion-type solution been available.

It'd be interesting to see how much time Anand thinks a person of his skill and general experience would take to install, configure and tune an SSD+spinner combo, versus the time he'd save per month from the somewhat better results vis-à-vis a Fusion drive. As a very rough SWAG, I'll guess that the payback for an expert, heavy user is probably around 2–3 years, an up-front sunk cost that won't pay back because it'll be necessary to repeat with a NEW machine before the time.