The Great Equalizer Part 2: Surface Pro vs. Android Devices in 3DMark

by Anand Lal Shimpi on April 2, 2013 2:26 PM EST

While we're still waiting for Windows RT and iOS versions of the latest 3DMark, there is one cross-platform comparison we can make: Ivy Bridge/Clover Trail to the Android devices we just tested in 3DMark.

The same caveats exist under 3DMark that we mentioned in our GL/DXBenchmark coverage: not only is this a cross-OS comparison, but we're also looking across APIs (OpenGL ES 2.0 for Android vs. Direct3D FL 9_1 for Windows). There will be differences in driver maturity, not to mention in how well optimized each OS is for the hardware it's running on. There are literally decades of experience in optimizing x86 hardware for Windows, compared to the past few years of evolution on the Android side of the fence.

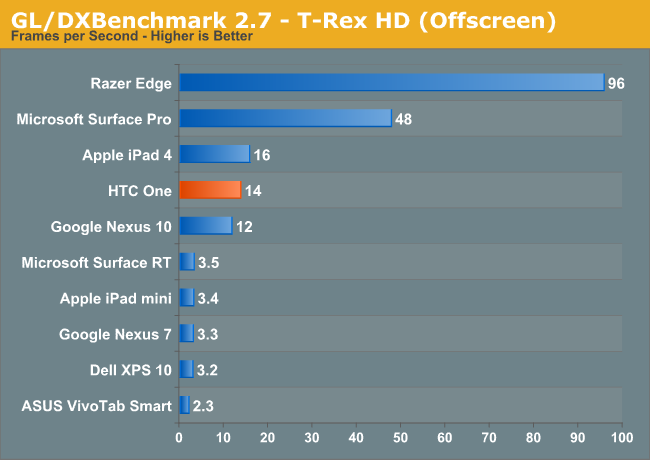

Absent from these charts is anything running on iOS. We're still waiting for a version of 3DMark for iOS unfortunately. If our previous results are any indication however, Qualcomm's Adreno 320 seems to be hot on the heels of the GPU performance of the 4th generation iPad.

In our GL/DXBenchmark comparison we noted Microsoft's Surface Pro (Intel HD 4000 graphics) managed to deliver 3x the performance of the 4th generation iPad. Will 3DMark agree?

All of these tests are run at the default 720p settings. The key comparisons to focus on are Surface Pro (Intel HD 4000), VivoTab Smart (Intel Clover Trail/SGX 545) and the HTC One (Snapdragon 600/Adreno 320). I've borrowed the test descriptions from this morning's article.

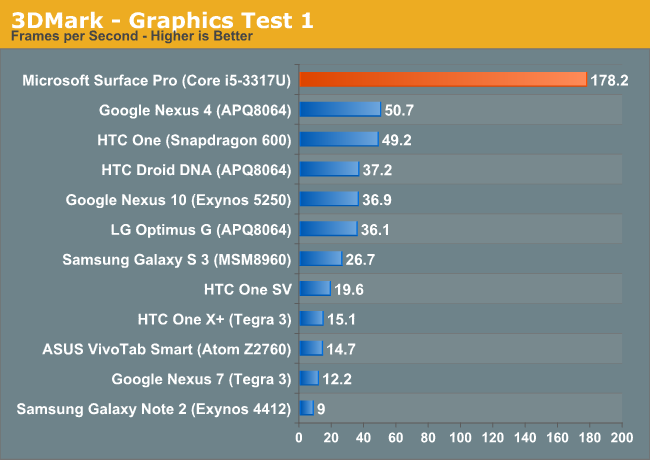

Graphics Test 1

Ice Storm Graphics test 1 stresses the hardware’s ability to process lots of vertices while keeping the pixel load relatively light. Hardware on this level may have dedicated capacity for separate vertex and pixel processing. Stressing both capacities individually reveals the hardware’s limitations in both aspects.

In an average frame, 530,000 vertices are processed leading to 180,000 triangles rasterized either to the shadow map or to the screen. At the same time, 4.7 million pixels are processed per frame.

Pixel load is kept low by excluding expensive post processing steps, and by not rendering particle effects.

In this mostly geometry bound test, Surface Pro does extremely well. As we saw in our GL/DXBenchmark comparison however, the ARM based Android platforms don't seem to deliver the most competitive triangle throughput.

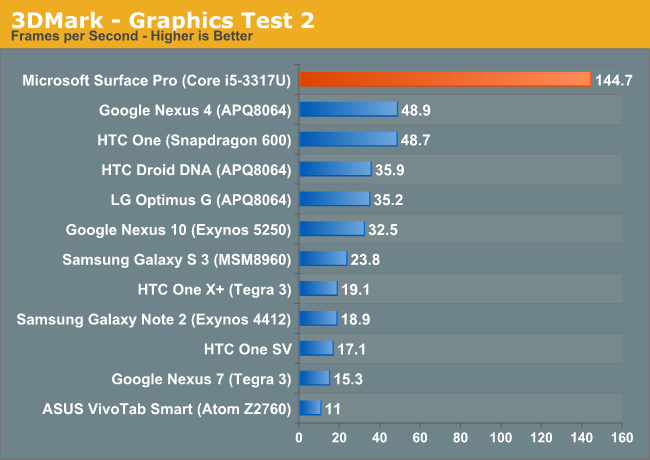

Graphics Test 2

Graphics test 2 stresses the hardware’s ability to process lots of pixels. It tests the ability to read textures, do per pixel computations and write to render targets.

On average, 12.6 million pixels are processed per frame. The additional pixel processing compared to Graphics test 1 comes from including particles and post processing effects such as bloom, streaks and motion blur.

In each frame, an average 75,000 vertices are processed. This number is considerably lower than in Graphics test 1 because shadows are not drawn and the processed geometry has a lower number of polygons.
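To put the two graphics workloads side by side, the per-frame figures quoted above can be normalized against the size of a single frame. The sketch below assumes "720p" means a 1280x720 render target; the function and dictionary names are illustrative only. Note that the quoted pixel counts include work that never lands on screen directly (shadow maps in test 1, post-processing passes in test 2), so the ratio is a rough measure of pixel work per displayed pixel, not a strict overdraw figure.

```python
# Assumption: "720p" is a 1280x720 render target (not stated in the test descriptions).
FRAME_PIXELS = 1280 * 720

# Per-frame averages quoted in the Graphics test 1 and 2 descriptions.
TESTS = {
    "Graphics test 1": {"vertices": 530_000, "pixels": 4_700_000},
    "Graphics test 2": {"vertices": 75_000, "pixels": 12_600_000},
}


def pixels_per_screen_pixel(pixels_processed: int) -> float:
    """Pixels processed per frame divided by the pixels in one 720p frame."""
    return pixels_processed / FRAME_PIXELS


def workload_ratios() -> dict:
    """Compare test 1 and test 2: vertex load and pixel load ratios."""
    t1, t2 = TESTS["Graphics test 1"], TESTS["Graphics test 2"]
    return {
        "vertex_ratio_t1_over_t2": t1["vertices"] / t2["vertices"],
        "pixel_ratio_t2_over_t1": t2["pixels"] / t1["pixels"],
    }


if __name__ == "__main__":
    for name, load in TESTS.items():
        ratio = pixels_per_screen_pixel(load["pixels"])
        print(f"{name}: {ratio:.2f} pixels processed per screen pixel")
    for key, value in workload_ratios().items():
        print(f"{key}: {value:.2f}")
```

Under that assumption, test 1 processes roughly 5 pixels per screen pixel and test 2 nearly 14, while test 1 pushes about 7x the vertices of test 2, which matches the geometry-bound versus pixel-bound framing of the two tests.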

The more pixel shader bound test seems to agree with what we've seen already: Intel's HD 4000 appears to deliver around 3x the performance of the fastest ultra mobile GPUs, obviously at higher power consumption. In the PC space a 3x gap would seem huge, but taking power consumption into account it doesn't seem all that big of a gap here.

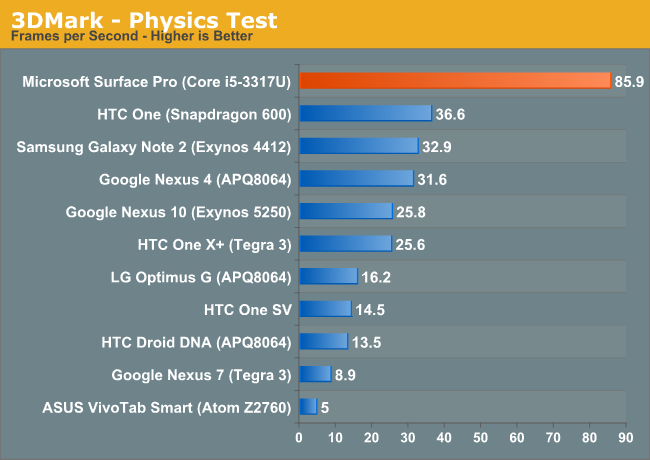

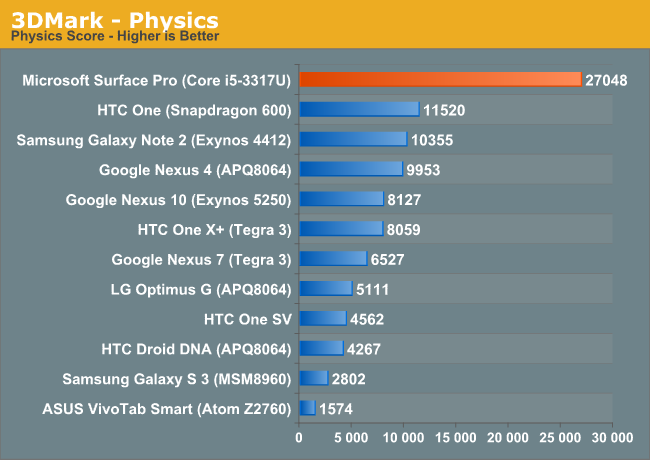

Physics Test

The purpose of the Physics test is to benchmark the hardware’s ability to do gameplay physics simulations on CPU. The GPU load is kept as low as possible to ensure that only the CPU’s capabilities are stressed.

The test has four simulated worlds. Each world has two soft bodies and two rigid bodies colliding with each other. One thread per available logical CPU core is used to run simulations. All physics are computed on the CPU with soft body vertex data updated to the GPU each frame. The background is drawn as a static image for the least possible GPU load.

The Physics test uses the Bullet Open Source Physics Library.
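The threading model described above (independent simulated worlds, one worker thread per logical CPU core) can be sketched as follows. This is not 3DMark's implementation and does not use Bullet: the bouncing-ball integrator, the step count, and the timestep are hypothetical stand-ins chosen to keep the example self-contained. Also note that in CPython the global interpreter lock prevents pure-Python threads from running in parallel, so the sketch only illustrates how the work is partitioned, not the actual scaling.

```python
import os
from concurrent.futures import ThreadPoolExecutor

WORLD_COUNT = 4      # four simulated worlds, per the test description
STEPS = 1_000        # hypothetical number of simulation steps
DT = 1.0 / 60.0      # hypothetical fixed timestep in seconds
GRAVITY = -9.81      # m/s^2
RESTITUTION = 0.8    # hypothetical fraction of speed kept after a bounce


def simulate_world(world_id: int) -> tuple:
    """Toy stand-in for one physics world: a ball bouncing on a floor."""
    height = 10.0 + world_id  # each world starts from a different height
    velocity = 0.0
    for _ in range(STEPS):
        velocity += GRAVITY * DT
        height += velocity * DT
        if height < 0.0:
            # Reflect off the floor and lose some speed.
            height = -height
            velocity = -velocity * RESTITUTION
    return world_id, height


def run_physics_worlds() -> dict:
    """Run every world on a pool sized to the number of logical CPU cores."""
    workers = os.cpu_count() or 1
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return dict(pool.map(simulate_world, range(WORLD_COUNT)))


if __name__ == "__main__":
    for world_id, final_height in run_physics_worlds().items():
        print(f"world {world_id}: final height {final_height:.3f} m")
```

Because the worlds do not interact, each one can be handed to a separate worker without any locking, which is why a test built this way tends to reward higher core counts.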

Surprisingly enough, the CPU bound physics test has Surface Pro delivering 2.3x the performance of the Snapdragon 600 based HTC One. Quad-core Cortex A15 based SoCs will likely eat into this gap considerably. What will be most interesting is to see how the ARM and x86 platforms converge when they are faced with similar power/thermal constraints.

What was particularly surprising to me is just how poorly Intel's Atom Z2760 (Clover Trail) does in this test. Granted the physics benchmark is very thread heavy, but the fact that four Cortex A9s can handily outperform two 32nm Atom cores is pretty impressive. Intel hopes to fix this with its first out-of-order Atom later this year, but that chip will also have to contend with Cortex A15 based competitors.

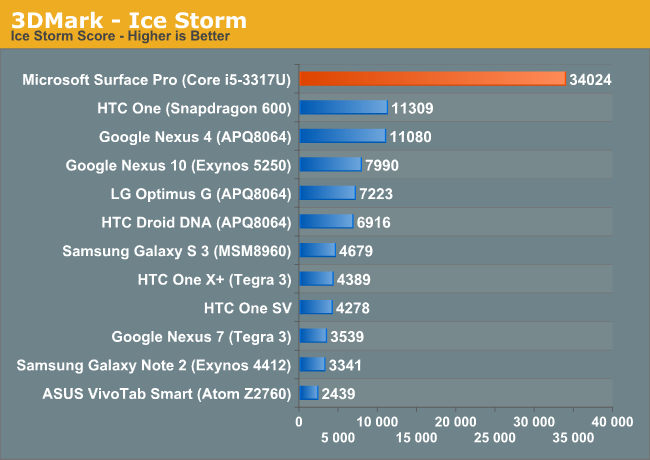

Ice Storm - Overall Score

The overall score seems to agree with what we learned from the GL/DXBenchmark comparison. Intel's HD 4000 delivers around 3x the graphics performance of the leading ultra mobile GPUs, while consuming far more power. Haswell will drive platform power down, but active power should still be appreciably higher than the ARM based competition. I've heard rumblings of sub-10W TDP (not SDP) Haswell parts, so we may see Intel move down the power curve a bit more aggressively than I've been expecting publicly. The move to 14nm, and particularly the shift to Skylake in 2015, will bring about true convergence between the Ultrabook and 10-inch tablet markets. Long term I wonder if the 10-inch tablet market won't go away completely, morphing into a hybrid market (think Surface Pro-style devices) with 7 - 8 inch tablets taking over.

It's almost not worth it to talk about Clover Trail here. The platform is just bad when it comes to graphics performance. You'll notice I used ASUS' VivoTab Smart here and in the GL/DXBenchmark comparison instead of Acer's W510. That's not just because of preference. I couldn't get the W510 to reliably complete GL/DXBenchmark without its graphics driver crashing. It's very obvious to me that Intel didn't spend a lot of time focusing on 3D performance or driver stability with Clover Trail. It's disappointing that even in 2012/2013 there are parts of Intel that still don't take GPU performance seriously.

The next-generation 22nm Intel Atom SoC will integrate Intel's gen graphics core (same architecture as HD 4000, but with fewer EUs). The move to Intel's own graphics core should significantly modernize Atom performance. The real question is how power efficient it will be compared to the best from Imagination Tech and Qualcomm.

56 Comments

dealcorn - Wednesday, April 3, 2013 - link

I thought Intel disclosed what the next gen 22 nm atom's graphics engine is "comparable to". I wonder where that "comparable to" lands on the charts. 22 nm Atom will not have HD 4000 but it may be possible to speculate sorta insightfully whether 22 nm Atom may have the right stuff. There is a place for properly labeled, insightful speculation about the upcoming battle. I liked the articles where you used lots of wires to measure voltage drop and calculated power consumption by functional unit (e.g. graphics engine) within a SOC. The appeal of applying that technique to the "comparable to" SOC is it should provide an upper bound to next gen Atom's GPU power consumption. That technique provides wrong results because next gen Atoms will be built with a different (more power efficient) fabrication technology. However, it may hint how much (or little) Intel needs out of its low power fab tech to be competitive. If the din from the crowd at the coliseum clamoring for more red meat gets too loud, I might throw that into the ring.

Second, I think in the first Chart, it is a boo boo to color the HPC item red.

scaramoosh - Wednesday, April 3, 2013 - link

Why do people keep comparing this to ARM tablets? This is a Laptop replacement, those other devices are basically just large mobiles without the mobile capability.

Two very different markets.

meacupla - Wednesday, April 3, 2013 - link

Because ivy bridge needs to lower power consumption, while ARM needs to improve computing and graphics capacity?

These two technologies are in convergence, so it helps to see where they are at now.

krumme - Wednesday, April 3, 2013 - link

I think it's relevant to compare a Porsche to a Toyota. That gives some insight, and the test here gives some surprising results.

But the presentation of the test and the context is wrong, because it's presented as if IB is a competitor to ARM. And that's just way off, and done in that perspective, it would be the same as if I compared my Samsung 9 series x3c to my daughter's HP 11.6 AMD e450 APU. That's just different markets and needs.

When you read this and other articles you get the impression it's interesting if broadwell or successor can reach AMD power levels. How on earth did that become interesting. It's exactly like comparing a cheap tn low res screen to the excellent pls. Or cheap whiskey to the most expensive. The comparison has its strong sides, and also the obvious limitations. I think it's good journalism to state that explicitly.

Intel has huge capex, and that means it's a dream to compare IB to ARM. Even Atom is a stretch, but that's the same market as huge high-end ARM, so it's fine.

krumme - Wednesday, April 3, 2013 - link

ARM not AMD power levels - reaching BD levels should be easy :)

zeusk - Wednesday, April 3, 2013 - link

uhm, there is a big mistake in the article, QSD600 carries adreno 320 with higher AXI bus speed, not the 330 which is slated for QSD800

Drazick - Wednesday, April 3, 2013 - link

Why don't you publish it on Google+?

Anyhow,

Could you compare the same hardware under Android and WP8 so we could see which OS is more efficient?

Thanks.

kyuu - Wednesday, April 3, 2013 - link

I don't believe they released the benchmark for WP8.

Krysto - Wednesday, April 3, 2013 - link

Who cares about Surface Pro? Why are you comparing $1000-$2000 tablets with $200-$500 tablets? You know that's not a fair comparison.

A much more fair comparison is with Surface RT, and then we can actually see what's the difference in scores for the cross-API/cross-platform benchmark, while using the same hardware (Tegra 3). So do one with Transformer Prime vs Surface RT. You may even include the Nexus 7 (although that may have a slightly lower clock for both CPU and GPU).

meacupla - Wednesday, April 3, 2013 - link

It's more of a comparison of ARM vs. x86.

Seeing as x86 only has 3 or 4 CPU choices, ULV i3/i5 and clovertrail atom, it's quite obvious that these would be included.

And why would I want a $500 tablet, when I'll need a $500 laptop to go with it? I might as well buy myself a $1000 x86 tablet.