Intel's Return to DRAM: Haswell GT3e to Integrate 128MB eDRAM?

by Anand Lal Shimpi on April 23, 2013 11:58 AM EST

We've known for a while now that Intel will integrate some form of DRAM on-package for the absolute highest end GPU configurations of its upcoming Haswell SoC. Memory bandwidth is a very important enabler of GPU (and multi-core CPU) performance, but delivering enough of it has typically required very high speed interfaces (read: high power) and/or very wide interfaces (read: large die areas). Neither of the traditional approaches to scaling memory bandwidth is low power or cost effective, which has kept both out of ultra mobile and integrated processor graphics.

The days of simple performance scaling by throwing more transistors at a design are quickly coming to an end. Moore's Law will continue, but much like the reality check that building low power silicon gave us a while ago, building high performance silicon will need some out of the box thinking going forward.

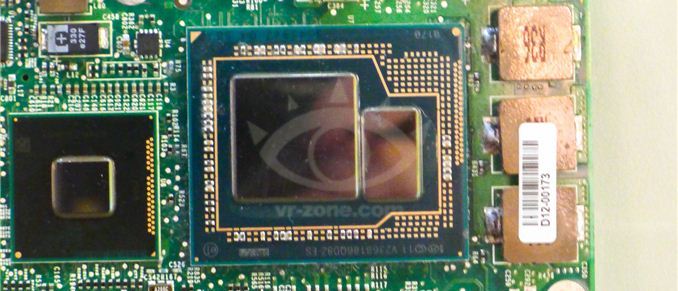

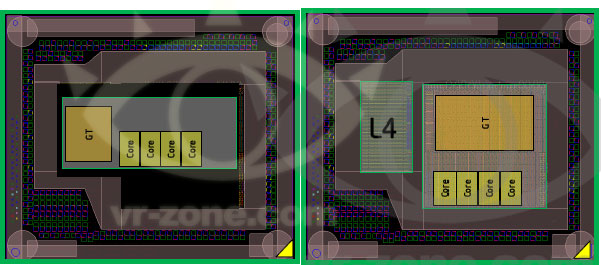

Dating back to Ivy Bridge (3rd gen Core/2012), Intel had plans to integrate some amount of DRAM onto the package in order to drive the performance of its processor graphics. Embedding DRAM onto the package adds cost and heat, and allegedly Paul Otellini wasn't willing to greenlight the production of a part that only Apple would use so it was canned. With Haswell, DRAM is back on the menu and this time it's actually going to come out. We've referred to the Haswell part with embedded DRAM as Haswell GT3e. The GT3 refers to the GPU configuration (40 EUs), while the lowercase e denotes embedded DRAM. Haswell GT3e will only be available in a BGA package (soldered-on, not socketed), and is only expected to appear alongside higher TDP (read: not Ultrabook) parts. The embedded DRAM will increase the thermal load of the SoC, although it shouldn't be as painful as including a discrete GPU + high speed DRAM. Intel's performance target for Haswell GT3e is NVIDIA's GeForce GT 650M.

What we don't know about GT3e is the type, size and speed of memory that Intel will integrate. Our old friend David Kanter at RealWorldTech presented a good thesis on the answers to those questions. Based on some sound logic and digging through the list of papers to be presented at the 2013 VLSI Technology Symposium in Kyoto, Kanter believes that the title of this soon to be presented Intel paper tells us everything we need to know:

"A 22nm High Performance Embedded DRAM SoC Technology Featuring Tri-Gate Transistors and MIMCAP COB"

According to Kanter's deductions (and somewhat validated by our own sources), Haswell GT3e should come equipped with 128MB of eDRAM connected to the main SoC via a 512-bit bus. Using eDRAM vs. commodity DDR3 makes sense as the former is easier to integrate into Intel's current fabs. Power, manufacturability and cost concerns also contributed to Intel creating its own DRAM design. The interface width is a bit suspect as that would require a fair amount of area at the edges of the Haswell die, but the main takeaway is that we're dealing with a parallel interface. Kanter estimates the bandwidth at roughly 64GB/s, not anywhere near high-end dGPU class but in the realm of what you can expect from a performance mainstream mobile GPU. At 22nm, Intel's eDRAM achieves a density of around 17.5Mbit/mm^2, which works out to be ~60mm^2 for the eDRAM itself. Add in any additional interface logic and Kanter estimates the total die area for the eDRAM component to be around 70 - 80mm^2. Intel is rumored to be charging $50 for the eDRAM adder on top of GT3, which would deliver very good margins for Intel. It's a sneaky play that allows Intel to capture more of the total system BoM (Bill of Materials) that would normally go to a discrete GPU company like NVIDIA, all while increasing utilization of its fabs. NVIDIA will still likely offer better performing solutions, not to mention the benefits of much stronger developer relations and a longer history of driver optimization. This is just the beginning, however.
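For anyone who wants to sanity check those numbers, here is a rough back-of-the-envelope sketch in Python. The inputs are the figures quoted above; the function names are purely illustrative, and the unit conventions (1MB = 8Mbit, 1GB/s = 10^9 bytes/s) are assumptions on our part rather than anything Kanter or Intel has stated. The transfer rate it derives is simply what the rumored bus width and bandwidth would imply together, not a confirmed specification.

```python
# Back-of-the-envelope check of the rumored GT3e eDRAM figures.
# All inputs are the numbers quoted in the article; helper names are illustrative.

CAPACITY_MB = 128             # rumored eDRAM capacity
DENSITY_MBIT_PER_MM2 = 17.5   # quoted density of Intel's 22nm eDRAM
BUS_WIDTH_BITS = 512          # rumored interface width
BANDWIDTH_GB_S = 64           # Kanter's bandwidth estimate


def edram_array_area_mm2(capacity_mb, density_mbit_per_mm2):
    """Area of the raw DRAM array only, ignoring interface and control logic."""
    capacity_mbit = capacity_mb * 8  # assumption: 1MB = 8Mbit
    return capacity_mbit / density_mbit_per_mm2


def implied_transfer_rate_gt_s(bandwidth_gb_s, bus_width_bits):
    """Transfer rate (GT/s) a bus of this width would need to reach the bandwidth."""
    bytes_per_transfer = bus_width_bits / 8
    return bandwidth_gb_s / bytes_per_transfer


area = edram_array_area_mm2(CAPACITY_MB, DENSITY_MBIT_PER_MM2)
rate = implied_transfer_rate_gt_s(BANDWIDTH_GB_S, BUS_WIDTH_BITS)

print(f"eDRAM array area: {area:.1f} mm^2")
print(f"Implied transfer rate: {rate:.1f} GT/s")
```

The array area lands just under the ~60mm^2 figure, which leaves roughly 10 - 20mm^2 for interface logic if Kanter's 70 - 80mm^2 total is right. A 512-bit bus would only need to run at about 1GT/s to deliver 64GB/s, which fits the idea of a wide, relatively slow parallel interface.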

Based on leaked documents, the embedded DRAM will act as a 4th level cache and should work to improve both CPU and GPU performance. In server environments, I can see embedded DRAM acting as a real boon to multi-core performance. The obvious fit in the client space is to improve GPU performance in games. At only 128MB I wouldn't expect high-end dGPU levels of performance, but we should see a substantial improvement compared to traditional processor graphics. Long term you can expect Intel to bring eDRAM into other designs. There's an obvious fit with its mobile SoCs, although there we're likely talking about something another 12 - 24 months out.

AMD is expected to integrate a GDDR5 memory controller in its future APUs, similar to what it has done with the PlayStation 4 SoC, as its attempt to solve the memory bandwidth problem for processor based graphics.

Source: RealWorldTech

83 Comments

Talaii - Tuesday, April 23, 2013 - link

If those slides are an exhaustive list, there aren't any dual-core standard voltage parts at all. I think it's likely that when the dual-core chips are released some of them might have GT3e.

tipoo - Tuesday, April 23, 2013 - link

Hmm, I hope so. That would make the 13"ers more appealing.

Hrel - Tuesday, April 23, 2013 - link

I'm confused, it's an integrated GPU. If it's too big for ultrabooks then what's the point? Anything bigger (thicker) and I'm getting a dedicated GPU with proper driver updates. There will either be ultrabooks that use it or it will only be Apple using it. On the other hand (I find this nearly impossible) if they can actually hit a GT650M in terms of across the board performance I might actually care this time.

tipoo - Tuesday, April 23, 2013 - link

If they were going to pair in a low end GPU in a larger laptop, it's still cost, power, and motherboard area savings. That could be used to reduce weight, increase battery size, etc etc.

Ktracho - Tuesday, April 23, 2013 - link

But won't the price be too high for a low end laptop?

tipoo - Wednesday, April 24, 2013 - link

Who said low end?

ShieTar - Wednesday, April 24, 2013 - link

It is not nearly impossible, from a hardware viewpoint alone. The currently existing difference between HD4000 and a 650M is almost completely covered by the increase in Execution Units alone. Add some improvements in IPC efficiency, some catching up by the driver development team, and the performance boost from the integrated memory, and it is actually quite likely that GT3e will achieve 650M levels.

tipoo - Tuesday, April 23, 2013 - link

On the AMD method, GDDR5 is obviously great for GPUs, but the memory timings are much slower than DDR3, even accounting for the clock speed differences. I know differences in latency don't really affect modern CPUs a whole lot, but this difference would be bigger than the slowest to the fastest DDR3 latencies, it's many times higher. I wonder how that will turn out.

I'm also curious, IBM integrated eDRAM into its Power7 processors a long time ago, I wonder what the performance implications of the CPU being able to access the eDRAM in Haswell will be, and if they'll carry that over to the high end server/workstation markets like Power7.

marc1000 - Tuesday, April 23, 2013 - link

a lot of people are asking that. IMHO they will do it, but not at launch. even if they say they won't do it.

Kevin G - Tuesday, April 23, 2013 - link

The comparison for using eDRAM on the POWER7 was similar latencies to having an external SRAM in the same package (like IBM did with the POWER6). Going SRAM for the L3 cache would have been faster in the POWER7 but IBM felt that capacity/density was more important considering the chip's market.