Intel SSD DC S3500 Review (480GB): Part 1

by Anand Lal Shimpi on June 11, 2013 6:10 PM EST

Posted in: Storage, IT Computing, SSDs, Intel, Datacenter, Enterprise

Last year Intel introduced its first truly new SSD controller since 2008. Oh how times have changed since then. Intel's original SSD controller design was dual purpose, designed for both consumer and enterprise workloads. Launching first as the brains behind a mainstream Intel SSD, that original controller did a wonderful job of kicking off the SSD revolution that followed. Growing pains and a couple of false starts kept a true successor to Ephraim (Intel's first controller) from ever really surfacing over the next few years.

Last year, Ephraim got a true successor and it came in the form of a very high-end enterprise drive: the Intel SSD DC S3700. Equipped with tons of 25nm HET-MLC NAND, the S3700 officially broke the enterprise addiction to SLC for high-endurance drives while raising the bar in all aspects of performance. In addition to the usual considerations, however, Intel had a new focus with the S3700: performance consistency.

Due to the nature of NAND flash, there's a lot of background management and cleanup that has to happen in order to ensure endurance as well as high performance. It's these background management tasks that can dramatically impact performance. I love the cleaning-your-room analogy because it accurately describes the tradeoffs SSD controller makers have to deal with. Clean early and often and you'll do well. Put off cleaning until later and you'll enjoy tons of free time early, but you'll quickly run into a problem. It's an oversimplification, but the latter is what most SSD controllers have done historically, and the former is what Intel always believed in. With the S3700, Intel took it to the next level and built the most consistently performing SSD I'd ever tested.

Performance consistency matters for a couple of reasons. The most obvious is the impact on user experience. Predictable latencies are what you want; otherwise your applications can encounter odd hiccups. In client drives, those hiccups appear as unexpected pauses during application usage. In the enterprise, the manifestation is similar except the user encounters the issue somewhere over the internet rather than locally. The other issue with inconsistent performance really crops up in massive RAID arrays. With many drives in a RAID array, overall performance is determined by the slowest performing drive. Inconsistent performance, particularly with large downward swings, can result in a substantial decrease in the performance of large RAID arrays. The motivation to build a consistently performing SSD is high, but so is the level of difficulty in building such a drive.
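To put rough numbers on the RAID point, here is a minimal sketch in Python. It assumes a simplified model that does not come from Intel or from our test data: every request is striped across all drives, each drive stalls independently with some small probability, and the request only completes when the slowest drive does. The function names and the 1% stall rate, 0.1 ms normal latency and 5 ms stall latency are purely illustrative.

```python
def stall_chance(num_drives: int, per_drive_stall_probability: float) -> float:
    """Chance that at least one drive stalls while serving a striped request.

    Assumption: stalls on different drives are independent of each other.
    """
    return 1.0 - (1.0 - per_drive_stall_probability) ** num_drives


def expected_stripe_latency_ms(
    num_drives: int,
    per_drive_stall_probability: float,
    normal_ms: float,
    stall_ms: float,
) -> float:
    """Expected latency of a striped request under a two-state model.

    Assumption: a drive answers in either normal_ms or stall_ms, and the
    request takes as long as its slowest drive.
    """
    chance = stall_chance(num_drives, per_drive_stall_probability)
    return chance * stall_ms + (1.0 - chance) * normal_ms


if __name__ == "__main__":
    # Illustrative values only: 1% stall rate, 0.1 ms normal, 5 ms stalled.
    for drives in (1, 8, 24):
        chance = stall_chance(drives, 0.01)
        latency = expected_stripe_latency_ms(drives, 0.01, 0.1, 5.0)
        print(f"{drives:2d} drives: stall chance {chance:.1%}, "
              f"expected latency {latency:.2f} ms")
```

Under these assumptions, a hiccup that is rare on any single drive becomes common once a request has to wait on dozens of them, which is why large downward swings hurt big arrays disproportionately.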

Intel had the luxury of being able to start over with the S3700's controller architecture. It moved to a flat indirection table (mapping between LBAs and NAND pages), which incurred a DRAM size penalty but ultimately made it possible to deliver much better performance consistency. The S3700 did amazingly well in our enterprise tests, and produced the most consistent IO performance curves I'd ever seen. The only downside? Despite being much better priced than the Intel X25-E and SSD 710, the S3700 is still a very expensive drive. The move to a better architecture helped reduce the amount of spare area needed for good performance, which in turn reduced cost, but the S3700 still uses Intel's most expensive, highest endurance MLC NAND available (25nm HET-MLC). With the largest versions capable of enduring nearly 15 petabytes of writes, the S3700 was really made for extremely write-intensive workloads. The drive performs very well across the board, but if you don't have an extremely write-intensive workload you'd be paying for much more than you need.
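The DRAM penalty of a flat indirection table is easy to estimate with some back-of-the-envelope arithmetic. The sketch below is not Intel's actual design: the 4 KiB mapping unit and 4-byte entry size are assumptions picked for illustration, and `flat_table_bytes` is a hypothetical helper. A flat table simply holds one entry per mapping unit, so its size scales linearly with drive capacity.

```python
def flat_table_bytes(capacity_bytes: int, mapping_unit_bytes: int, entry_bytes: int) -> int:
    """Size of a flat logical-to-physical table with one entry per mapping unit."""
    # Ceiling division so a partial trailing unit still gets an entry.
    entries = -(-capacity_bytes // mapping_unit_bytes)
    return entries * entry_bytes


if __name__ == "__main__":
    capacity = 480 * 10**9   # a 480GB drive, decimal gigabytes
    mapping_unit = 4096      # assumed mapping granularity (hypothetical)
    entry_size = 4           # assumed bytes per table entry (hypothetical)

    table = flat_table_bytes(capacity, mapping_unit, entry_size)
    print(f"Flat table for {capacity / 10**9:.0f}GB: {table / 2**20:.0f} MiB of DRAM")
```

Whatever the real granularity and entry size are, the relationship is the same: double the capacity and the table doubles with it, which is the cost Intel accepted in exchange for predictable lookups.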

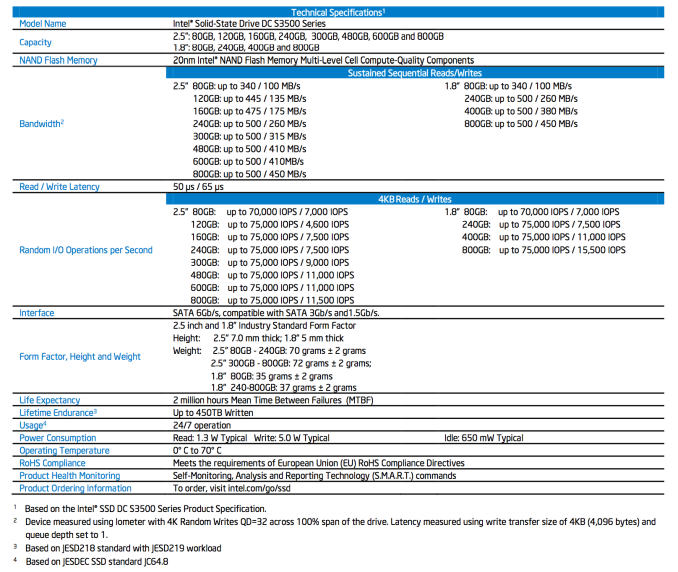

We always knew that Intel would build a standard MLC version of the S3700, and today we have that drive: the Intel SSD DC S3500.

54 Comments


toyotabedzrock - Thursday, June 13, 2013

If my math is correct, excluding the spare area, this MLC can only be written to 700 times?

ShieTar - Friday, June 14, 2013

Your math is unrealistically simplified. You could fill up 75% of the drive with data that you never change, so then you can write the remaining 25% of space 2800 times before you reach the 450TB written.

Also, Intel only wants to guarantee 450TB written. That could still mean that the average drive survives much longer; it just is not meant as a major selling point for this drive.

jhh - Friday, June 14, 2013

I don't understand why the review says latency measurements are done, when the chart shows IOPS. Latency is measured in milliseconds, not IOPS. I want to know how long it takes for the drive to complete an operation after it gets the command. Even more interesting is how that measurement changes as the queue gets bigger or smaller. Any chance of getting measurements like this?

I'm not sure how this works in Windows, but in Linux, when an application wants to be sure data is persistently stored, this operation translates into a filesystem barrier, which does not return until the drive has written the data (or stored it in a place where it's safe from power failure). The faster the barrier completes, the faster the application runs. This is why I would like to know latency in milliseconds. While IOPS has its value, so do milliseconds.

mk8 - Tuesday, January 14, 2014

Anand, I think one thing that you don't mention at all in the article is whether the S3500 needs or benefits from over-provisioning. I guess the performance benefits would be minor, but what about write amplification? I look forward to "Part 2" of the article. Thanks