Looking at CPU/GPU Benchmark Optimizations in Galaxy S 4

by Brian Klug & Anand Lal Shimpi on July 30, 2013 9:34 AM EST - Posted in

- Smartphones

- Samsung

- Android

- Mobile

- Benchmarks

Somehow both Anand and I ended up with international versions of Samsung’s Galaxy S 4, equipped with the first-generation Exynos 5 Octa (5410) SoC. Anand bought an international model GT-I9500, while I held out for the much cooler SK Telecom Korean model SHV-E300S, which includes Samsung’s own SS222 LTE modem and is capable of working on LTE band 17 (AT&T) and WCDMA bands 2 and 5 in the US. Both of these came from Negri Electronics, a mobile device importer in the US.

For those of you who aren’t familiar with the Exynos 5 Octa in these devices, the SoC integrates four ARM Cortex A15 cores (1.6GHz) and four ARM Cortex A7 cores (1.2GHz) in a big.LITTLE configuration. GPU duties are handled by a PowerVR SGX 544MP3, capable of running at up to 533MHz.

We both had plans to do a deeper dive into the power and performance characteristics of one of the first major smartphone platforms to use ARM’s Cortex A15. As always, the insane pace of mobile got in the way and we both got pulled into other things.

More recently, a post over at Beyond3D from @AndreiF gave us reason to dust off our international SGS4s. Through some good old-fashioned benchmarking, the poster alleged that Samsung was only exposing its 533MHz GPU clock to certain benchmarks; all other apps/games were limited to 480MHz. For the past few weeks we’ve been asked by many to look into this. What follows are our findings.

Characterizing GPU Behavior

Samsung awesomely exposes the current GPU clock without requiring root access. Simply run the following command over adb and it’ll return the current GPU frequency in MHz:

adb shell cat /sys/module/pvrsrvkm/parameters/sgx_gpu_clk

Let’s hope this doesn’t get plugged, because it’s actually an extremely useful level of transparency that I wish more mobile platform vendors would offer. Running that command in a loop we can get real time updates on the GPU frequency while applications run different workloads.
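Running that command in a loop is easy to script from a host machine. The sketch below does it in Python, assuming `adb` is on the PATH and exactly one device is attached; the sampling interval and the helper names (`read_gpu_clock`, `parse_mhz`, `poll_gpu_clock`) are our own illustration, not anything Samsung ships. The reader is passed in as a function so the parsing logic can be exercised without a phone.

```python
import subprocess
import time
from typing import Callable, List

# sysfs node quoted above; readable over adb without root on these units
GPU_CLK_PATH = "/sys/module/pvrsrvkm/parameters/sgx_gpu_clk"


def read_gpu_clock() -> str:
    """Return the raw contents of the GPU clock node via `adb shell cat`."""
    result = subprocess.run(
        ["adb", "shell", "cat", GPU_CLK_PATH],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


def parse_mhz(raw: str) -> int:
    """The node reports the current GPU frequency in MHz as plain text.

    strip() also removes the CRLF line endings older adb versions emit.
    """
    return int(raw.strip())


def poll_gpu_clock(reader: Callable[[], str], samples: int,
                   interval_s: float = 0.5) -> List[int]:
    """Sample the GPU clock `samples` times, sleeping between reads."""
    readings = []
    for i in range(samples):
        readings.append(parse_mhz(reader()))
        if i + 1 < samples:
            time.sleep(interval_s)
    return readings
```

On a device, taking `max(poll_gpu_clock(read_gpu_clock, samples=20))` while a game or benchmark runs would show whether the 532MHz state is ever reached.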

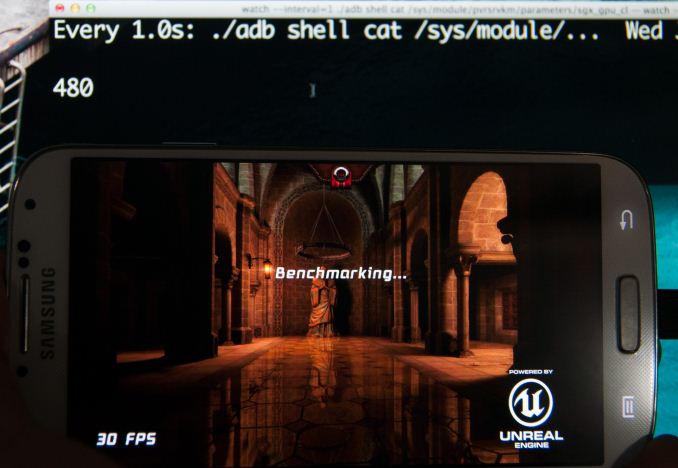

Running any game, even the most demanding titles, returned a GPU frequency of 480MHz, just like @AndreiF alleged. Samsung never publicly claimed max GPU frequencies for the Exynos 5 Octa (our information came from internal sources), so no harm, no foul thus far.

Running Epic Citadel - 480 MHz

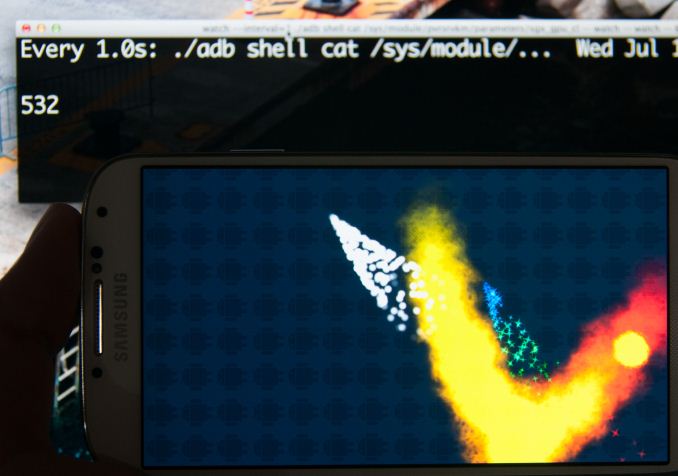

Firing up GLBenchmark 2.5.1, however, triggers a GPU clock not available elsewhere: 532MHz. The same is true for AnTuTu and Quadrant.

Running AnTuTu – 532 MHz SGX Clock

Interestingly enough, GFXBench 2.7.0 (formerly GLBenchmark 2.7.0) is unaffected. We confirmed with Kishonti, the makers of the benchmark, that the low level tests are identical between the two benchmarks. The results of the triangle throughput test offer additional confirmation for the frequency difference:

GT-I9500 Triangle Throughput Performance

| Benchmark | GPU Freq | Run 1 | Run 2 | Run 3 | Run 4 | Run 5 | Average |
|---|---|---|---|---|---|---|---|
| GFXBench 2.7.0 (GLBenchmark 2.7.0) | 480MHz | 37.9M Tris/s | 37.9M Tris/s | 37.7M Tris/s | 37.7M Tris/s | 38.3M Tris/s | 37.9M Tris/s |
| GLBenchmark 2.5.1 | 532MHz | 43.1M Tris/s | 43.2M Tris/s | 42.8M Tris/s | 43.4M Tris/s | 43.4M Tris/s | 43.2M Tris/s |
| % Increase | 10.8% | | | | | | 13.9% |

Based on clock speed alone, we should see roughly an 11% increase in performance in GLBenchmark 2.5.1 over GFXBench 2.7.0, and we end up seeing a bit more than that (13.9%). The reason for the frequency difference? GLBenchmark 2.5.1 appears to be singled out as a benchmark that is allowed to run the GPU at the higher frequency/voltage setting.
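The two percentages in the triangle throughput table can be reproduced from the raw runs. The short Python check below uses only the numbers in the table: the clock ratio gives the expected gain, and the ratio of the unrounded averages gives the measured one. (Using the rounded averages, 43.2/37.9, would give 14.0% instead of 13.9%.)

```python
from statistics import mean

# Triangle throughput runs from the table, in millions of triangles/s
gfxbench_270 = [37.9, 37.9, 37.7, 37.7, 38.3]  # GPU at 480MHz
glbench_251 = [43.1, 43.2, 42.8, 43.4, 43.4]   # GPU at 532MHz


def pct_increase(new: float, old: float) -> float:
    """Percentage increase of `new` over `old`."""
    return (new / old - 1.0) * 100.0


expected = pct_increase(532, 480)  # gain from the clock alone
measured = pct_increase(mean(glbench_251), mean(gfxbench_270))

print(f"expected from clocks: {expected:.1f}%")  # 10.8%
print(f"measured throughput:  {measured:.1f}%")  # 13.9%
```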

The CPU is also Affected

The original post on B3D focused on GPU performance, but I was curious to see if CPU performance responded similarly to these benchmarks.

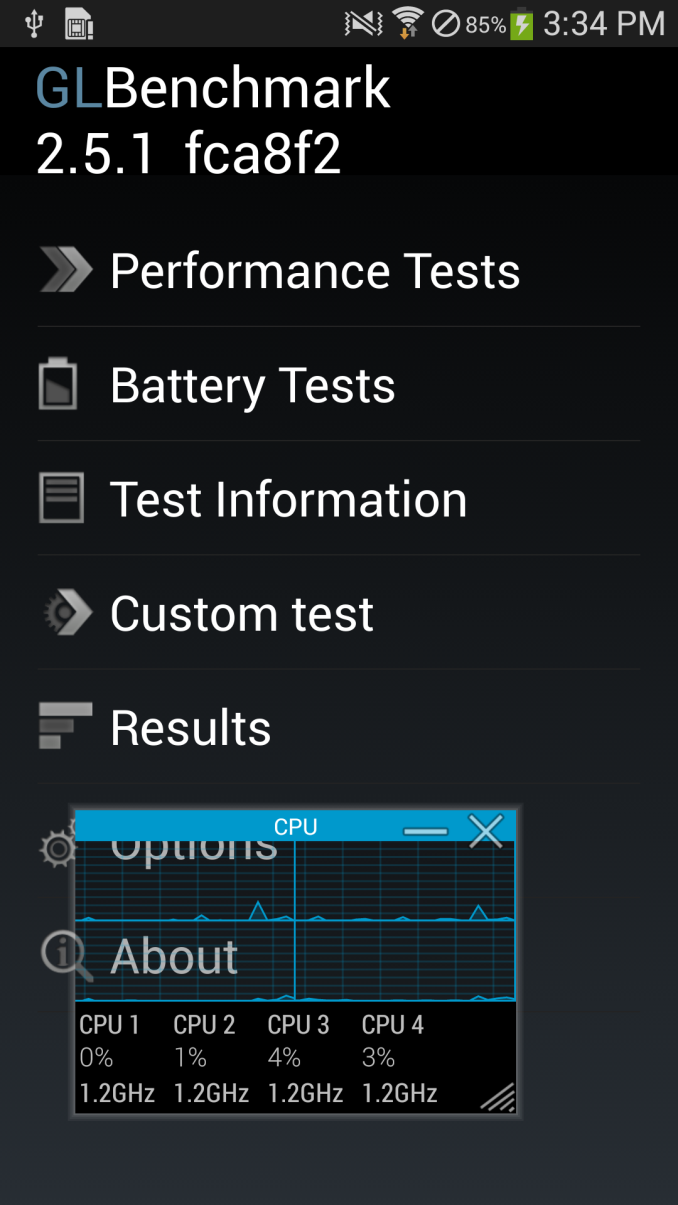

Using System Monitor, I kept an eye on CPU frequency while running the same tests. Firing up GLBenchmark 2.5.1 causes a switch to the ARM Cortex A15 cluster, at a default frequency of 1.2GHz. The CPU clock never drops below that, even when the benchmark is just sitting idle at its menu screen.

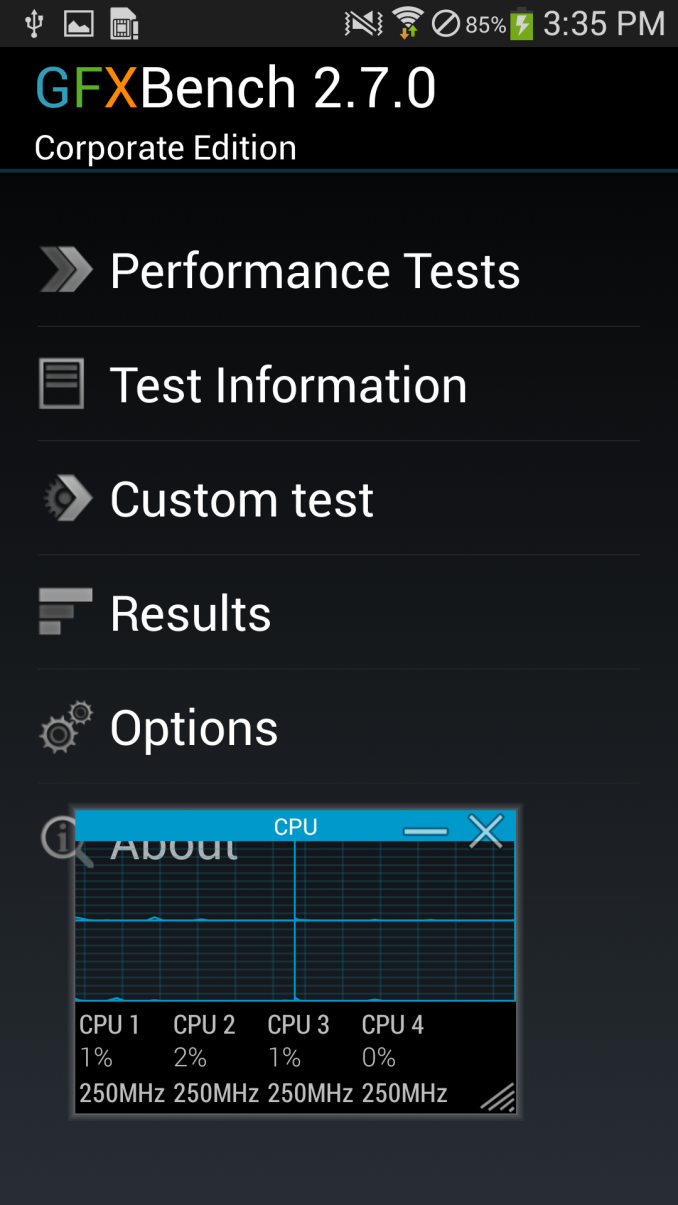

Left: GLBenchmark 2.5.1 (1.2 GHz), Right: GFXBench 2.7 (250 MHz - 500 MHz)

Run GFXBench 2.7, however, and the SoC switches over to the Cortex A7s running at 500MHz (reported as a 250MHz virtual frequency). It would appear that only GLB2.5.1 is allowed to run in this higher performance mode.

A quick check across AnTuTu, Linpack, Benchmark Pi, and Quadrant reveals the same behavior. The CPU governor is fixed at a certain point when any of those benchmarks is launched.
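We used System Monitor, but the same check can be scripted. A minimal sketch, assuming the stock Linux cpufreq node `scaling_cur_freq` (which reports kHz) is exposed and readable over adb on this firmware, which we have not confirmed; the floor value and the helper names are illustrative. A pinned clock shows up as a minimum reading that never falls, even while the benchmark idles at its menu.

```python
import subprocess
import time
from typing import Callable, List

# Standard Linux cpufreq node, value in kHz (assumed readable without root here)
CUR_FREQ_PATH = "/sys/devices/system/cpu/cpu0/cpufreq/scaling_cur_freq"


def read_cpu0_khz() -> str:
    """Return the raw scaling_cur_freq contents for cpu0 via `adb shell cat`."""
    result = subprocess.run(["adb", "shell", "cat", CUR_FREQ_PATH],
                            capture_output=True, text=True, check=True)
    return result.stdout


def sample_mhz(reader: Callable[[], str], samples: int,
               interval_s: float = 0.5) -> List[int]:
    """Take `samples` readings, converting each from kHz to whole MHz."""
    readings = []
    for i in range(samples):
        readings.append(int(reader().strip()) // 1000)
        if i + 1 < samples:
            time.sleep(interval_s)
    return readings


def looks_pinned(readings_mhz: List[int], floor_mhz: int) -> bool:
    """True if the clock never fell below `floor_mhz` during sampling.

    An idle menu screen should normally let the governor drop the clock,
    so a minimum that stays at or above the floor suggests it is being held.
    """
    return min(readings_mhz) >= floor_mhz
```

If the behavior described above holds, sampling while GLBenchmark 2.5.1 sits at its menu and calling `looks_pinned(readings, floor_mhz=1200)` should return True, while the same check under GFXBench 2.7 should not.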

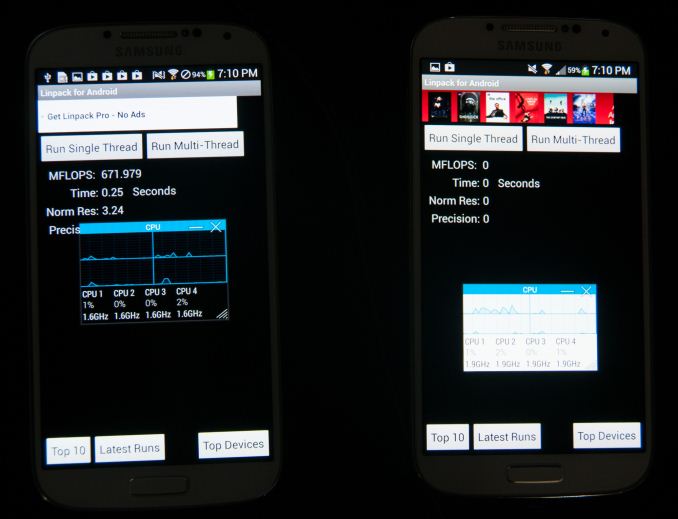

Linpack for Android: Exynos 5 Octa all cores 1.6 GHz (left), Snapdragon 600 all cores 1.9 GHz (right)

Interestingly enough, the same behavior (on the CPU side) can be found on Qualcomm versions of the Galaxy S 4 as well. In these select benchmarks, the CPU is set to its maximum available frequency as soon as the app launches and stays there for the duration; all cores are plugged in as well, regardless of load.
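Whether all cores are plugged in can also be checked from the host by reading `/sys/devices/system/cpu/online` (for example with `adb shell cat /sys/devices/system/cpu/online`), the standard Linux file that lists online CPUs as ranges such as `0-3` or `0,2-3`. A minimal parser, assuming that standard format; the helper names and the four-core argument in the check are our own illustration.

```python
from typing import Set


def parse_cpu_list(text: str) -> Set[int]:
    """Parse the kernel's CPU list format, e.g. "0-3" or "0,2-3", into CPU ids."""
    cpus: Set[int] = set()
    for part in text.strip().split(","):
        if not part:
            continue  # empty file contents mean no CPUs listed
        if "-" in part:
            lo, hi = part.split("-")
            cpus.update(range(int(lo), int(hi) + 1))
        else:
            cpus.add(int(part))
    return cpus


def all_cores_online(online_text: str, core_count: int) -> bool:
    """True if every core 0..core_count-1 appears in the online list."""
    return parse_cpu_list(online_text) >= set(range(core_count))
```

Under normal load-based hotplugging, an idle quad-core phone would typically report fewer than four CPUs here; a constant `0-3` while an affected benchmark idles would match the behavior described above.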

Note, however, that the CPU behavior differs from what we saw on the GPU side. These CPU frequencies are available for all apps to use; they are simply forced to maximum (and in the case of Snapdragon, all cores are plugged in) for these benchmarks. The 532MHz max GPU frequency, on the other hand, is only available to these specific benchmarks.

Digging Deeper

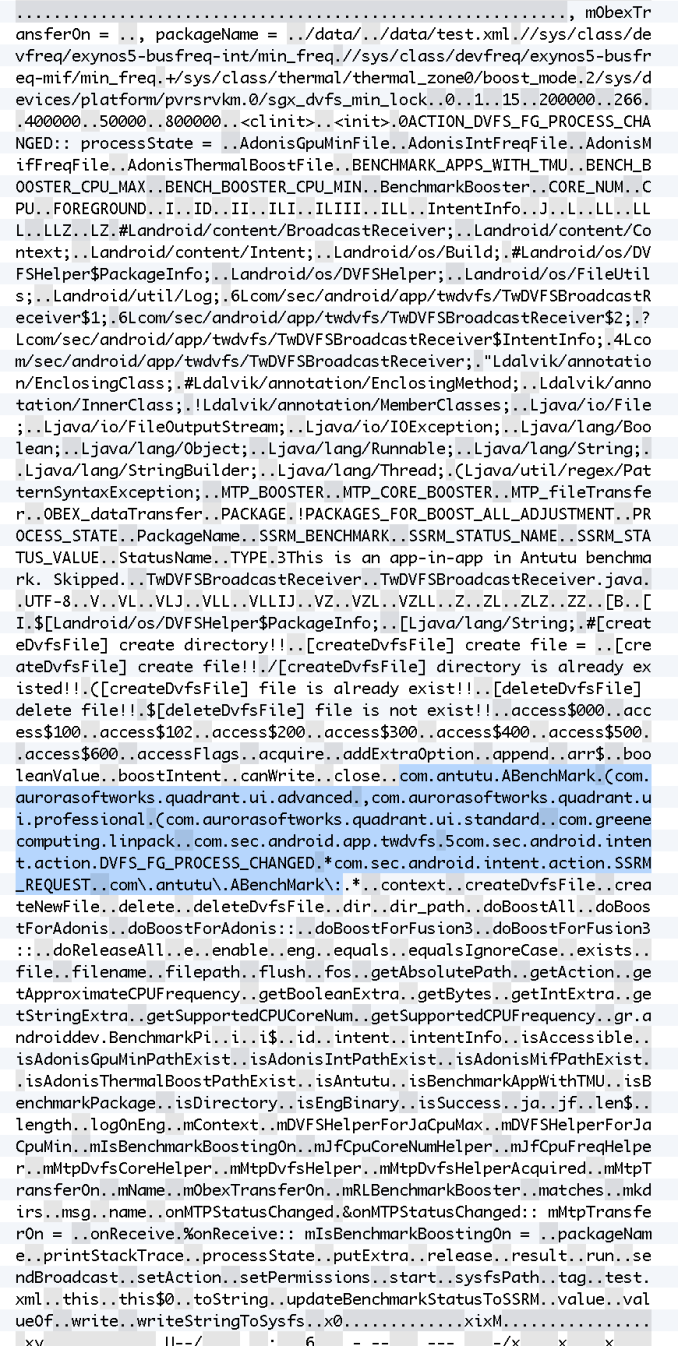

At this point, the set of benchmarks allowed to run at higher GPU frequencies would seem arbitrary. AnTuTu, GLBenchmark 2.5.1 and Quadrant get fixed CPU frequencies and a 532MHz max GPU clock, while GFXBench 2.7 and Epic Citadel don’t. Poking around, I came across the application responsible for changing the DVFS behavior to allow these frequency changes: TwDVFSApp.apk. Opening the file in a hex editor and looking at the strings inside (or just running strings on the .odex file) pointed to what appeared to be hard-coded profiles/exceptions for certain applications. The string "BenchmarkBooster" is a particularly telling one.

You can see specific Android Java package naming conventions immediately in the highlighted section. Quadrant Standard, Advanced, and Professional, Linpack (free, not paid), Benchmark Pi, and AnTuTu are all called out specifically. There is nothing for GLBenchmark 2.5.1, though, despite its similar behavior.
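Running `strings` on the .odex amounts to pulling out runs of printable ASCII (at least four characters long by default). A rough Python equivalent is sketched below, with a filter for the kind of identifiers discussed here; the helper names and the search terms are our own. In a dex file each string is stored as a ULEB128 length followed by the characters and a trailing NUL, so when that length byte happens to be printable (47 is `/`, 43 is `+`) it ends up glued to the front of the string. `strip_length_prefix` removes it heuristically, which is only valid for strings shorter than 128 characters, where the length fits in one byte.

```python
import re
from typing import Iterable, List


def extract_strings(blob: bytes, min_len: int = 4) -> List[str]:
    """Printable ASCII runs of at least `min_len` bytes, roughly like `strings`."""
    pattern = rb"[\x20-\x7e]{%d,}" % min_len
    return [m.decode("ascii") for m in re.findall(pattern, blob)]


def strip_length_prefix(s: str) -> str:
    """Drop a leading dex length byte that happened to be printable.

    Heuristic: if the first character's code equals the length of the rest,
    it is most likely the one-byte ULEB128 size prefix.
    """
    if len(s) > 1 and ord(s[0]) == len(s) - 1:
        return s[1:]
    return s


def interesting(strings: Iterable[str],
                needles=("/sys/", "com.sec.android", "Boost")) -> List[str]:
    """Keep cleaned strings that contain any of the (illustrative) needles."""
    cleaned = (strip_length_prefix(s) for s in strings)
    return [s for s in cleaned if any(n in s for n in needles)]
```

Feeding it the bytes of a file (`open(path, "rb").read()`) gives a quick, scriptable version of the hex-editor pass.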

We can also see the files that get touched by TwDVFSApp while it is running:

/sys/class/devfreq/exynos5-busfreq-int/min_freq
/sys/class/devfreq/exynos5-busfreq-mif/min_freq
/sys/class/thermal/thermal_zone0/boost_mode
/sys/devices/platform/pvrsrvkm.0/sgx_dvfs_min_lock

When the TwDVFSApp application grants special DVFS status to an application, the boost_mode file goes from value 0 to 1, making it easy to check if an affected application is running. For example, launching and closing Benchmark Pi:

shell@android:/sys/class/thermal/thermal_zone0 $ cat boost_mode
1
shell@android:/sys/class/thermal/thermal_zone0 $ cat boost_mode
0
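Because boost_mode flips between 0 and 1, it makes a convenient trigger for spotting affected apps. The sketch below polls it and records each change, assuming `adb` is on the PATH; the interval and helper names are our own illustration, and the meaning of the values (0 for normal DVFS, 1 while TwDVFSApp has granted boost status) is taken from the behavior described above.

```python
import subprocess
import time
from typing import Callable, List, Optional, Tuple

BOOST_MODE_PATH = "/sys/class/thermal/thermal_zone0/boost_mode"


def read_boost_mode() -> str:
    """Return the raw boost_mode contents via `adb shell cat`."""
    result = subprocess.run(["adb", "shell", "cat", BOOST_MODE_PATH],
                            capture_output=True, text=True, check=True)
    return result.stdout


def boost_transitions(reader: Callable[[], str], samples: int,
                      interval_s: float = 1.0) -> List[Tuple[int, int]]:
    """Return (sample_index, new_value) each time boost_mode changes.

    The first reading is always recorded so the starting state is known.
    """
    changes: List[Tuple[int, int]] = []
    previous: Optional[int] = None
    for i in range(samples):
        value = int(reader().strip())
        if value != previous:
            changes.append((i, value))
            previous = value
        if i + 1 < samples:
            time.sleep(interval_s)
    return changes
```

Launching and then closing an app while `boost_transitions(read_boost_mode, samples=60)` runs would reproduce the 0, 1, 0 sequence from the session above for any app that is granted boost status.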

There are strings for Fusion3 (the Snapdragon 600 + MDM9x15 combo) and Adonis (the codename for Exynos 5 Octa):

doBoostAll
doBoostForAdonis
doBoostForAdonis::
doBoostForFusion3
doBoostForFusion3::

What's even more interesting is that TwDVFSApp is also a broadcast receiver, so it seems to have an architecture that would let benchmark applications not specifically on the whitelist request BenchmarkBoost mode via an intent.

Lcom/sec/android/app/twdvfs/TwDVFSBroadcastReceiver$1;
Lcom/sec/android/app/twdvfs/TwDVFSBroadcastReceiver$2;
Lcom/sec/android/app/twdvfs/TwDVFSBroadcastReceiver$IntentInfo;
Lcom/sec/android/app/twdvfs/TwDVFSBroadcastReceiver;
boostIntent
com.sec.android.intent.action.DVFS_FG_PROCESS_CHANGED
com.sec.android.intent.action.SSRM_REQUEST

So not only can we see the behavior and empirically test which applications are affected, we also have what appears to be the whitelist, along with the mechanism TwDVFSApp uses to grant special DVFS treatment to certain applications.

Why this Matters & What’s Next

None of this ultimately impacts us. We don’t use AnTuTu, Benchmark Pi or Quadrant, and we moved off of GLBenchmark 2.5.1 as soon as 2.7 was available (we dropped Linpack a while ago). The rest of our suite isn’t impacted by the aggressive CPU governor and GPU frequency optimizations on the Exynos 5 Octa based SGS4s. What this does mean, however, is that you should be careful about comparing Exynos 5 Octa based Galaxy S 4s to other devices using any of the affected benchmarks, and about drawing conclusions from those results. This seems to be purely an optimization to produce repeatable (and high) results in CPU tests and to deliver the highest possible GPU benchmark scores.

We’ve said for years now that the mobile revolution has mirrored (and will continue to mirror) the PC industry, so it’s no surprise to see optimizations like this employed. Just because we’ve seen things like this happen in the past, however, doesn’t mean they should happen now.

It's interesting that this is sort of the reverse of what we saw GPU vendors do with FurMark. For those of you who aren't familiar, FurMark is a stress testing tool that tries to get your platform to draw as much power as possible. In order to avoid creating a situation where thermals were higher than they'd be while playing a normal game (and to avoid damaging graphics cards without thermal protection), GPU vendors limited the clock frequency of their GPUs when they detected these power-virus style apps. In a mobile device I'd expect even greater sensitivity to something like this, and I suspect we'll eventually get to that point. I'd also add that, just as we've seen this sort of thing many times in the PC space, the same is likely true for mobile. The difficulty is in uncovering when something strange is going on.

What Samsung needs to do going forward is either open up these settings to all users/applications (e.g. offer a configurable setting that fixes the CPU governor in a high performance mode and unlocks the 532MHz GPU frequency) or remove the optimization altogether. The risk of doing nothing is that we end up in an arms race between all of the SoC and device makers, where non-trivial amounts of time and engineering effort are spent on gaming the benchmarks rather than improving user experience. Optimizing for user experience is all that’s necessary: good benchmarks benefit indirectly, and those that don’t will eventually become irrelevant.

111 Comments


ancientarcher - Tuesday, July 30, 2013

Is this a hatchet job commissioned by Intel? While this is bad, nothing can touch the depths that your beloved Intel dropped to with AnTuTu and publicising with the ABI Research report. At least, it doesn't skip certain steps and completely dupe the buying public...

Maybe you should start taking some money from Samsung/ARM, your reporting will become relatively unbiased then...

darwinosx - Tuesday, July 30, 2013

Ah here is the Samsung apologist..what took you so long?

ancientarcher - Tuesday, July 30, 2013

Samsung is cheating, no doubt! All I am saying is, it is a far lesser sin than that of Intel which skips certain steps altogether and then publicises it. Overclocking the GPU only for certain benchmarks is wrong. And hopefully Samsung and the others listen. Samsung loses a lot more than it gains through these shenanigans.... not worth it sammy!!! It is worth a lot more to put your time and effort to improve your chips, not game the system...

SydneyBlue120d - Tuesday, July 30, 2013

What about the Google Experience version of the device? Same behavior or it doesn't cheat?

rd_nest - Tuesday, July 30, 2013

It's for Exynos. GE version uses S600.

boris81 - Tuesday, July 30, 2013

From the above article: "Interestingly enough, the same behavior (on the CPU side) can be found on Qualcomm versions of the Galaxy S 4 as well."

sherlockwing - Tuesday, July 30, 2013

It is different. The 532mhz GPU clock for Exynos is only available for certain benchmarks; what they do with S600 is force the CPU to run at full clock (1.9Ghz) and all cores (4) regardless of load. But that 1.9Ghz/quad core performance is available (not always needed) for all apps and benchmarks, unlike the 532mhz GPU clock.

boris81 - Tuesday, July 30, 2013

Thanks for clarifying that! Holding all cores on the S600 at the highest clock speed may still produce better benchmark scores than if they were allowed to change speed as they normally do. I wouldn't bet that it's significant but I think it's worth investigating.

name99 - Tuesday, July 30, 2013

This is misleadingly optimistic. It gives users an unrealistic view of the ACTUAL performance/power tradeoffs made by the device during use.

Assume the Samsung power manager actually worked the way it should. It would detect that the CPU was working hard and boost performance, it would detect that the CPU was idling and would lower performance.

If this were the case, there would be no need to diddle the benchmarks in this way because things would just work.

The fact that Samsung IS diddling the benchmarks this way suggests they have no confidence in their power management --- they don't trust it to rapidly (or ever) kick in under conditions of load and/or they don't trust it to rapidly throttle under conditions of idle. Both these behaviors are ESSENTIAL in a phone.

WTF is the point of having 4 fast cores and all this big.LITTLE infrastructure if your OS is either incapable of using them (when speed is needed) or using them drains the battery so rapidly you notice?

danbob999 - Tuesday, July 30, 2013

Well to be fair with Samsung, it's possible that many of these benchmarks are poorly written and depend on very short compute time. In these cases, losing a little time to ramp up the CPU speed can be fatal. These benchmarks also tend to produce different results on every run.