The iPhone 5s Review

by Anand Lal Shimpi on September 17, 2013 9:01 PM EST - Posted in Smartphones, Apple, Mobile, iPhone, iPhone 5S

A7 SoC Explained

I’m still surprised by the amount of confusion around Apple’s CPU cores, so that’s where I’ll start. I’ve already outlined how ARM’s business model works, but in short there are two basic types of licenses ARM will bestow upon its partners: processor and architecture. The former involves implementing an ARM-designed CPU core, while the latter permits the creation of a custom CPU core compatible with an ARM ISA (Instruction Set Architecture).

NVIDIA and Samsung, up to this point, have gone the processor license route. They take ARM-designed cores (e.g. Cortex A9, Cortex A15, Cortex A7) and integrate them into custom SoCs. In NVIDIA’s case the CPU cores are paired with NVIDIA’s own GPU, while Samsung licenses GPU designs from ARM and Imagination Technologies. Apple previously relied on its ARM processor license as well: until last year’s A6 SoC, all Apple SoCs used CPU cores designed by and licensed from ARM.

With the A6 SoC, however, Apple joined Qualcomm in leveraging an ARM architecture license. At the heart of the A6 was a pair of Apple-designed CPU cores that implemented the ARMv7-A ISA. I came to know these cores by their leaked codename: Swift.

At its introduction, Swift proved to be one of the best designs on the market. An excellent combination of performance and power consumption, the Swift-based A6 SoC improved power efficiency over the previous Cortex A9-based design. Swift also proved to be competitive with the best from Qualcomm at the time. Since then, however, Qualcomm has released two evolutions of its CPU core (Krait 300 and Krait 400) and has pretty much regained performance leadership over Apple. Given Apple’s yearly release cadence, the A7 is its only shot at taking back the crown for the next 12 months.

Following tradition, Apple replaces its A6 SoC with a new generation: A7.

With only a week to test battery life, performance, wireless and cameras on two phones, in addition to actually using them as intended, there wasn’t a ton of time to go ridiculously deep into the new SoC’s architecture. Here’s what I’ve been able to piece together thus far.

First off, based on conversations with as many people in the know as possible, as well as just making an educated guess, it’s probably pretty safe to say that the A7 SoC is built on Samsung’s 28nm HK+MG process. It’s too early for 20nm at reasonable yields, and Apple isn’t ready to move some (not all) of its operations to TSMC.

The jump from 32nm to 28nm results in peak theoretical area scaling of about 76.6%, since (28/32)² ≈ 0.766 (the same design on 28nm can be no smaller than 76.6% of its die area at 32nm). In reality, nothing ever scales perfectly, so we’re probably talking about 80 - 85% at best. Either way, that’s a good amount of room for new features.
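The scaling arithmetic above fits in a few lines of Python. This is a minimal sketch, assuming "ideal" scaling means both linear dimensions shrink by exactly 28/32; the 100mm² starting design is an arbitrary example, not a real die.

```python
def ideal_area_scale(old_node_nm: float, new_node_nm: float) -> float:
    """Best-case area scaling for a node shrink: each linear dimension shrinks
    by new/old, so area shrinks by the square of that ratio."""
    return (new_node_nm / old_node_nm) ** 2


def shrunk_area(area_mm2: float, scale: float) -> float:
    """Die area of the same design after applying an area-scaling factor."""
    return area_mm2 * scale


ideal = ideal_area_scale(32, 28)
print(f"ideal 32nm -> 28nm area scale: {ideal:.2%}")

# Compare the ideal case with the realistic 80-85% range,
# using an arbitrary 100 mm^2 design as the starting point.
for scale in (ideal, 0.80, 0.85):
    print(f"100 mm^2 at 32nm -> {shrunk_area(100.0, scale):.1f} mm^2 at 28nm")
```

The gap between the first line of output and the other two is the "nothing ever scales perfectly" penalty.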

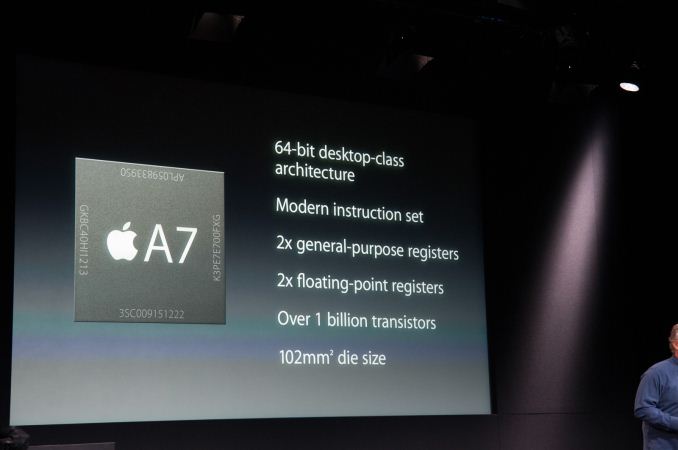

At its launch event Apple officially announced both the die size of the A7 (102mm²) and its transistor count (over 1 billion). Don’t underestimate the magnitude of these disclosures. The technical folks at Cupertino are clearly winning some battle to talk more about their designs, not less. We’re not yet at the point where I’m getting pretty diagrams and a deep dive, but it’s clear that Apple is beginning to open up more (and it’s awesome).

Apple has never previously disclosed transistor count. I also don’t know if this “over 1 billion” figure is based on a schematic or layout transistor count. The only additional detail I have is that Apple is claiming a near doubling of transistors compared to the A6. Looking at die sizes and taking into account scaling from the process node shift, there’s clearly a more fundamental change to the chip’s design. It is possible to optimize a design (and transistors) for area, which seems to be what has happened here.
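To see why the die size points to more than a straight process shrink, here is a rough back-of-the-envelope sketch. Several inputs are assumptions rather than figures from this article. The A6 die area is set to about 97mm² as a placeholder, so substitute a measured value. Apple's "near doubling" is rounded to exactly 2x, and the ideal 28/32 area scaling is used, which is the most generous case.

```python
# Back-of-the-envelope check: could ~2x the transistors fit in 102 mm^2
# through process scaling alone?

A7_AREA_MM2 = 102.0                # Apple's disclosed A7 die size
TRANSISTOR_RATIO = 2.0             # "near doubling" vs. A6, rounded to 2x (assumption)
IDEAL_AREA_SCALE = (28 / 32) ** 2  # best case for 32nm -> 28nm
A6_AREA_MM2 = 97.0                 # ASSUMPTION: A6 die area, not given in this article


def naive_shrink_area(a6_area: float, transistor_ratio: float, area_scale: float) -> float:
    """Area if A6-density logic were scaled up by transistor_ratio and then
    shrunk with area_scale, i.e. no redesign for density."""
    return a6_area * transistor_ratio * area_scale


def density_beyond_process(actual_area: float, predicted_area: float) -> float:
    """A ratio above 1 means the real die is denser than a straight shrink predicts."""
    return predicted_area / actual_area


predicted = naive_shrink_area(A6_AREA_MM2, TRANSISTOR_RATIO, IDEAL_AREA_SCALE)
gain = density_beyond_process(A7_AREA_MM2, predicted)
print(f"straight-shrink estimate: {predicted:.1f} mm^2 vs. actual {A7_AREA_MM2:.0f} mm^2")
print(f"extra density beyond ideal process scaling: {gain:.2f}x")
```

Using the more realistic 80-85% scaling, or a transistor ratio somewhat under 2x, still leaves the straight-shrink estimate well above 102mm². That is consistent with the point above that the design itself was optimized for area.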

The CPU cores are, once again, a custom design by Apple. These aren’t Cortex A57 derivatives (still too early for that), but rather some evolution of Apple’s own Swift architecture. I’ll dive into specifics of what I’ve been able to find in a moment. To answer the first question on everyone’s mind, I believe there are two of these cores on the A7. Before I explain how I arrived at this conclusion, let’s first talk about cores and clock speeds.

The transition from 2 to 4 cores happened more quickly in mobile than I had expected. Thankfully there are some well threaded apps that have been able to take advantage of more than two cores, and power gating keeps the negative impact of the additional cores to a minimum. As we saw in our Moto X review, however, two faster cores are still better for most uses than four cores running at lower frequencies. NVIDIA forced everyone’s hand in moving to 4 cores earlier than they would’ve liked, and now you pretty much can’t get away with shipping anything less than that in an Android handset. Even Motorola felt it necessary to obfuscate core count with its X8 mobile computing system. Markets like China also seem to demand more cores over better ones, which is why we see such a proliferation of quad-core Cortex A5/A7 designs. Apple has traditionally been sensible in this regard, dating back to core count decisions in its Macs. I remember reviewing an old iMac and pitting it against a Dell XPS One at the time. This was in the pre-power gating/turbo days. Dell went the route of more cores, while Apple opted for fewer, faster ones and put the CPU savings into a better GPU. You can guess which system came out ahead.
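One way to see why two faster cores often beat four slower ones is Amdahl's law. This is a swapped-in textbook model, not a measurement. The sketch below compares two hypothetical designs: two cores at full speed, and four cores each running at 70% of that speed. It assumes per-core performance scales linearly with clock, which is an idealization.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Amdahl's law: speedup over a single core of the same per-core speed."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


def relative_perf(per_core_speed: float, cores: int, parallel_fraction: float) -> float:
    """Performance relative to one core of speed 1.0, assuming per-core
    performance scales linearly with per_core_speed (an idealization)."""
    return per_core_speed * amdahl_speedup(parallel_fraction, cores)


# HYPOTHETICAL designs: 2 cores at full speed vs. 4 cores at 70% per-core speed.
for p in (0.3, 0.5, 0.7, 0.9):
    two_fast = relative_perf(1.0, 2, p)
    four_slow = relative_perf(0.7, 4, p)
    winner = "2 fast" if two_fast > four_slow else "4 slow"
    print(f"parallel fraction {p:.1f}: 2 fast = {two_fast:.2f}, "
          f"4 slow = {four_slow:.2f} -> {winner}")
```

With these made-up numbers the two designs tie at a parallel fraction of 75%. Below that, the two faster cores win, and most everyday workloads are far from 75% parallel.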

In such a thermally constrained environment, going quad-core only makes sense if you can properly power gate/turbo up when some cores are idle. I have yet to see any mobile SoC vendor (with the exception of Intel with Bay Trail) do this properly, so until we hit that point the optimal target is likely two cores. You only need to look back at the evolution of the PC to come to the same conclusion. Before the arrival of Nehalem and Lynnfield, you always had to make a tradeoff between fewer faster cores and more of them. Gaming systems (and most users) tended to opt for the former, while those doing heavy multitasking went with the latter. Once we got architectures with good turbo, the 2 vs 4 discussion became one of cost and nothing more. I expect we’ll follow the same path in mobile.
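A toy model makes the power gating/turbo argument concrete. Every constant here is a hypothetical value chosen for illustration, and no real SoC's numbers are used. The model assumes a fixed CPU power budget. It sets active-core power proportional to f³, which assumes voltage rises roughly in step with frequency. It also charges a fixed leakage cost for each idle core that is not power gated.

```python
def per_core_ghz(budget_w: float, active: int, total: int, *, gated: bool,
                 k_w_per_ghz3: float = 0.5, idle_leak_w: float = 0.2,
                 cap_ghz: float = 2.0) -> float:
    """Highest clock each active core can sustain inside a fixed power budget.

    Toy model (all constants hypothetical): active-core power = k * f**3, and
    idle cores that are not power gated still leak idle_leak_w each.
    """
    idle_w = 0.0 if gated else (total - active) * idle_leak_w
    spare_w = max(budget_w - idle_w, 0.0)
    return min((spare_w / (active * k_w_per_ghz3)) ** (1 / 3), cap_ghz)


BUDGET_W = 2.0  # hypothetical thermal budget for a quad-core CPU cluster
for gated in (False, True):
    for active in (1, 2, 4):
        f = per_core_ghz(BUDGET_W, active, 4, gated=gated)
        print(f"gated={gated!s:5} active={active}: {f:.2f} GHz per core")
```

In this model, idle cores that are not gated eat into the budget that a lightly threaded workload could otherwise spend on a higher clock. That is the tradeoff good turbo removes.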

Then there’s the frequency discussion. Brian and I have long been hinting at the ridiculous frequency/voltage combinations mobile SoC vendors have been shipping for nothing more than marketing purposes. I remember ARM telling me the ideal target for a Cortex A15 core in a smartphone was 1.2GHz. Samsung’s Exynos 5410 stuck four Cortex A15s in a phone with a max clock of 1.6GHz, and the 5420 increases that to 1.7GHz. The problem with frequency scaling alone is that it typically comes at the price of higher voltage. Dynamic power scales with the square of voltage (and linearly with frequency), so raising clocks by raising voltage is quite possibly one of the worst ways to get more performance. Brian even tweeted an image showing the frequency/voltage curve for a high-end mobile SoC. Note the huge increase in voltage required to deliver what amounts to another 100MHz in frequency.

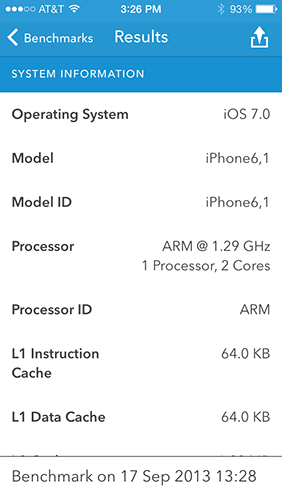
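The cost of chasing clocks with voltage can be sketched with the standard CMOS dynamic power relation, P = C·V²·f. The two operating points below are hypothetical and are not read from the curve above. They simply model a 100MHz bump that needs an extra 0.1V. Leakage, which also grows with voltage, is ignored, so this sketch if anything understates the penalty.

```python
def dynamic_power(c_eff: float, volts: float, freq_hz: float) -> float:
    """Switching power of CMOS logic: P = C_eff * V^2 * f (leakage ignored)."""
    return c_eff * volts ** 2 * freq_hz


def clock_bump_cost(f0_hz: float, v0: float,
                    f1_hz: float, v1: float) -> tuple[float, float]:
    """Return (performance ratio, dynamic power ratio) for moving from operating
    point (f0, v0) to (f1, v1). C_eff cancels out of the ratio, so 1.0 is used."""
    perf_ratio = f1_hz / f0_hz
    power_ratio = dynamic_power(1.0, v1, f1_hz) / dynamic_power(1.0, v0, f0_hz)
    return perf_ratio, power_ratio


# HYPOTHETICAL operating points: +100MHz that requires +0.1V.
perf, power = clock_bump_cost(1.6e9, 1.10, 1.7e9, 1.20)
print(f"+{(perf - 1) * 100:.1f}% clock for +{(power - 1) * 100:.1f}% dynamic power")
```

Because voltage enters squared, the power ratio grows roughly four times as fast as the clock ratio in this example.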

The combination of both of these things gives us a basis for why Apple settled on two Swift cores running at 1.3GHz in the A6, and it’s also why the A7 comes with two cores running at the same max frequency. Interestingly enough, this is the same max non-turbo frequency Intel settled on for Bay Trail. Given a faster process (and turbo), I would expect Apple to push higher frequencies, but without those things, remaining conservative makes sense. I verified frequency through a combination of reporting tools and benchmarks. While it’s possible that I’m wrong, everything I’ve run on the device (both public and not) points to a 1.3GHz max frequency.

Verifying core count is a bit easier. Many benchmarks report core count, and I also have some internal tools that do the same; all agreed on the same 2 cores/2 threads conclusion. Geekbench 3 breaks out both single and multithreaded performance results. I checked with the developer to ensure that the number of threads isn’t hard coded: the benchmark queries the max number of logical CPUs before spawning that number of threads. Looking at the ratio of single to multithreaded performance on the iPhone 5s, it’s safe to say that we’re dealing with a dual-core part:

| Geekbench 3 Single vs. Multithreaded Performance - Apple A7 | Integer | FP |
|---|---|---|
| Single Threaded | 1471 | 1339 |
| Multi Threaded | 2872 | 2659 |
| A7 Advantage | 1.95x | 1.99x |
| Peak Theoretical 2C Advantage | 2.00x | 2.00x |
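The core-count inference from the table can be reproduced directly. This is a minimal sketch. Rounding the multithreaded/single-threaded ratio to the nearest integer is a heuristic that assumes near-linear scaling, so it would undercount a design held back by memory bandwidth. The `worker_count` helper is a hypothetical illustration of the generic "one thread per logical CPU" pattern, not Geekbench's actual code.

```python
import os

# Single- and multithreaded Geekbench 3 scores for the A7, from the table above.
SCORES = {
    "Integer": (1471, 2872),
    "FP": (1339, 2659),
}


def scaling_ratio(single: float, multi: float) -> float:
    """Multithreaded score as a multiple of the single-threaded score."""
    return multi / single


def estimated_cores(ratio: float) -> int:
    """With near-linear scaling the ratio lands just below the core count,
    so rounding to the nearest integer gives a reasonable estimate."""
    return round(ratio)


def worker_count() -> int:
    """Hypothetical helper: spawn one worker per logical CPU reported by the OS
    (os.cpu_count() can return None, so fall back to 1)."""
    return os.cpu_count() or 1


for name, (single, multi) in SCORES.items():
    r = scaling_ratio(single, multi)
    print(f"{name}: {r:.2f}x -> ~{estimated_cores(r)} cores")
print(f"this machine would spawn {worker_count()} threads")
```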

Now the question is, what’s changed in these cores?

464 Comments


akdj - Friday, September 27, 2013

I completely agree---that said, we're really only 5 years 'in'. The original iPhone in '07, a true Android follow up in late '07/early '08---those were crap. Not really necessary to 'bench' them. We all kinda knew the performance we could expect, same for the next generation or two. In the past three years---Moore's law has swung into high gear, these are now---literally---replacement computers (along with tablets) for the majority of the population. They're not using their home desktop anymore for email, Facebook, surfing and recipes. Even gaming---unless they're @ 'work' and in front of their 'work Dell' from 2006, they're on their smartphones...for literally everything! In these past three years---and it seems Anand, Brian and crew are quite 'up front' about the lack of mobile testing applications and software----we're in its infancy. 36 real months in with software, hardware and OS'es worth 'testing, benchmarking, and measuring' their performance. Just my opinion....and I suppose we're saying the same thing. That said---even Google's new Octane test was and is being used lately---GeekBench has revised their software, it's coming is my point. But just looking at those differences in the generations of iPhones makes it blatantly obvious how far we've come in 4/5 short years. In 2008 and 9---these were still phones with easier ways to text and access the internet, some cool apps and ways to take, manipulate and share pics and videos. Today----they do literally everything an actual computer does and I'd bet---in a lot of cases, these phones are as or more powerful, faster and more accessible than those ancient beige boxes from the mid 2000s a lot of folks have in their home office;)

Duck <(' ) - Thursday, October 3, 2013

they are posting false benchmark scores. Same phones score differently in youtube vids. iPhone 5 browsermark 2 score is around 2300

check here https://www.youtube.com/watch?v=iATFnXociC4

sgs4 scores 2745 here https://www.youtube.com/watch?v=PdNE4NoFq8U

ddriver - Wednesday, September 18, 2013

Actually, there are quite a lot of discrepancies in this review. For starters, the "CPU performance" page only contains JS benchmarks and not a single native application. And iOS and Android use entirely different JS engines, so this is literally a case of comparing apples to oranges.

Native benchmarks don't compare the new apple chip to "old 32 bit v7 chips" - it only compares the new apple chip to the old ones, and also compares the new chip in 32bit and 64 bit mode. Oddly enough, the geekbench at engadget shows tegra 4 actually being faster.

Then, there is the inclusion of hardware implementation in charts that are supposed to show the benefits of 64bit execution mode, but in reality the encryption workloads are handled in a fundamentally different way in the two modes, in software in 32bit mode and implemented in hardware in 64bit mode. This turns the integer performance chart from a mixed bag into one falsely advertising performance gains attributed to 64bit execution and not to the hardware implementations as it should. The FP chart also shows no miracles, wider SIMD units result in almost 2x the score in few tests, nothing much in the rest.

All in all, I'd say this is a very cleverly compiled review, cunningly deceitful to show the new apple chip in a much better light than it is in reality. No surprises, considering this is AT, it would be more unexpected to see an unbiased review.

I guess we will have to wait a bit more until mass availability for unbiased reviews, considering all those "featured" reviews usually come with careful guidelines by the manufacturer that need to be followed to create an unrealistically good presentation of the product. That is the price you have to pay to get the new goodies first - play by the rules of a greedy and exploitative industry. Corporate "honesty" :)

I don't say the new chip is bad, I just say it is deliberately presented unrealistically good. Krait has expanded the SIMD units to 128 bit as well, so we should see similar performance even without the move to a 64bit ecosystem. Last but not least, 64bit code bloats the memory footprint of applications because of pointers being twice as big, and while those limited memory footprint synthetic benches play well with the single gigabyte of ram on this device, I expect an actual performance demanding real world application will be bottlenecked by the ram capacity. All in all, the decision to go for 64 bit architecture is mostly a PR stunt, surely, 64bit is the future, but in the case of this product, and considering its limited ram capacity, it doesn't really make all that much sense, but is something that will no doubt keep up the spirit of apple fanboys, and make up for their declining sales while they bring out the iphone 6, which will close all those deliberately left gaping holes in the 5s.

Slaanesh - Wednesday, September 18, 2013

Interesting comment. I'd like to know what Anand has to say about this.

ddriver - Wednesday, September 18, 2013

I am betting my comment will most likely vanish mysteriously. I'd be happy to see my concerns addressed though, but I admit I am putting Anand in a very inconvenient position.

Mondozai - Wednesday, September 18, 2013

It's all a conspiracy. In fact your comment has already disappeared, but in its place is now a hologram effect that makes it impossible to tell it from the blank space. So why put in the hologram and not just delete it? Because Anand is playing mind games with us. And who said I typed this comment? It could have been someone else, someone doing Anand's bidding.

I admit I am putting his scheme of deception in a very difficult position right now.

/s

ddriver - Wednesday, September 18, 2013

A few days ago I posted a comment criticizing AT moderators for being idle and tolerant of the "I make $$$ sitting in front of my mac" spam; a few minutes later my comment was removed while the spam remained, which led me to expect a similar fate for this comment. Good thing I was wrong ;)

WardenOfBats - Wednesday, September 18, 2013

I'd honestly like to see your misleading comment removed as well. You seem to think that anyone cares what the 32bit performance is of the 5S when everyone knows damn well that app developers are going to be clamouring to switch to the 64bit tech (that Android doesn't even have or support) to get these power increases. Other than that, the rest of your comment is just nonsense. The whole point is that the iPhone 5S is faster and the fact that they use different JS engines is a part of that. Apple just knows how to make software optimizations and hardware that runs them faster and you can see how they just blow the competition away.

CyberAngel - Thursday, September 19, 2013

Misleading? Yes! In favor of Apple! You need to double the memory lines, too and caches and...oh boy!

The next Apple CPU will be "corrected" and THEN we'll see...hopefully RAM is 8GB...

Ryan Smith - Thursday, September 19, 2013

As we often have to remind people, we don't delete comments unless they're spam. However when we do so, any child comments become orphaned and lose their place in the hierarchy, becoming posts at the end of the thread. Your comment isn't going anywhere, nor have any of your previous comments.