Server Guide Part 1: Introduction to the Server World

by Johan De Gelas on August 17, 2006 1:45 PM EST - Posted in IT Computing

What makes a server different?

This is far from an academic or philosophical question: the answer lets you tell apart a souped-up desktop that carries the label "server" and is sold at a higher profit margin, and a real server configuration that will offer reliable services for years.

A few years ago, the question above would have been very easy to answer for a typical hardware person. Servers used to distinguish themselves at first sight from a normal desktop PC: they had SCSI disks, RAID controllers, multiple CPUs with large amounts of cache and Gigabit Ethernet. In a nutshell, servers had more and faster CPUs, better storage and faster access to the LAN.

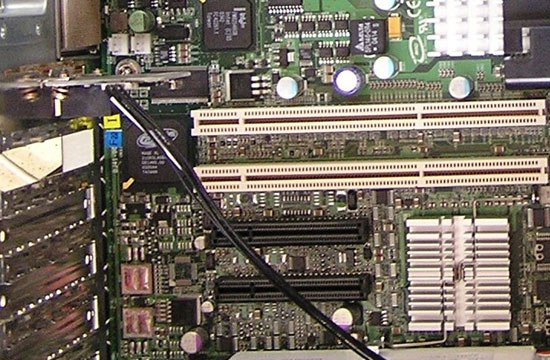

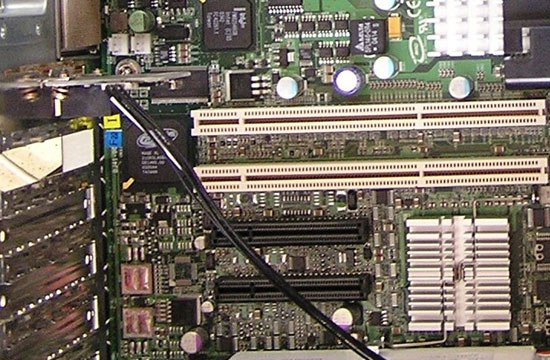

PCI-e (black) still has a long way to go before it will replace PCI-X (white) in the server world

It is clear that this is a rather simplistic and wrong way to understand what servers are all about. Since the introduction of new SATA features (SATA revision 2.5) such as staggered spindle spin-up, Native Command Queuing and Port Multipliers, servers are equipped with SATA drives just like desktop PCs. A high-end desktop PC has two CPU cores, 10,000 rpm SATA drives, Gigabit Ethernet and RAID-5. Next year that same desktop might even have 4 cores.

So it is pretty clear that the hardware gap between servers and desktops is shrinking, and hardware specifications alone are not a good way to tell the two apart. What makes a server a server? A server's main purpose is to make certain IT services (database, web, mail, DHCP...) available to many users at the same time, so concurrent access to these services is an important design criterion. Secondly, a server is a business tool, and it will therefore be evaluated on how much it costs to deliver those services each year or semester. The focus on Total Cost of Ownership (TCO) and concurrent access performance is what really sets a server apart from a typical desktop at home.

Basically, a server is different on the following points:

- Hardware optimized for concurrent access

- Professional upgrade slots such as PCI-X

- RAS features

- Chassis format

- Remote management

The last three points are all part of lowering TCO. So what is TCO anyway?
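As a rough illustration of what "cost to deliver services per year" can mean, here is a minimal Python sketch. It is not the article's definition of TCO: the cost categories, the `ServerCosts` and `yearly_tco` names, and every number are assumptions made up for the example.

```python
from dataclasses import dataclass


@dataclass
class ServerCosts:
    """Hypothetical cost inputs for one server; all figures are illustrative."""
    purchase_price: float        # one-time hardware cost
    yearly_power_cooling: float  # electricity and cooling per year
    yearly_admin: float          # administration and maintenance per year
    yearly_downtime: float       # estimated business cost of outages per year


def yearly_tco(costs: ServerCosts, service_years: int) -> float:
    """Spread the purchase price over the service life and add recurring costs."""
    if service_years <= 0:
        raise ValueError("service_years must be positive")
    return (costs.purchase_price / service_years
            + costs.yearly_power_cooling
            + costs.yearly_admin
            + costs.yearly_downtime)


# Made-up numbers: a relabeled desktop that is cheap to buy but costly to run,
# versus a pricier machine with better reliability and remote management.
relabeled_desktop = ServerCosts(1500.0, 300.0, 2000.0, 3000.0)
real_server = ServerCosts(4500.0, 400.0, 1000.0, 500.0)

print(yearly_tco(relabeled_desktop, 3))  # 5800.0
print(yearly_tco(real_server, 3))        # 3400.0
```

With these invented inputs, the machine that costs three times as much to buy ends up cheaper per year, which is the kind of comparison a TCO focus is meant to expose.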

32 Comments

AtaStrumf - Sunday, October 22, 2006 - link

Interesting stuff! Keep up the good work!

LoneWolf15 - Thursday, October 19, 2006 - link

I'm guessing this is possible, but I've never tried it... Wouldn't it be possible to use a blade server, and just have the OS on each blade, but have a large, high-bandwidth (read: gig Ethernet) NAS box? That way, each blade would have, say (for example), two small hard disks in RAID-1 with the boot OS for ensuring uptime, but any file storage would be redirected to RAID-5 volumes created on the NAS box(es). Sounds like the best of both worlds to me.

dropadrop - Friday, December 22, 2006 - link

This is what we've had in all of the places I've been working at during the last 5-6 years. The term used is SAN, not NAS, and servers have traditionally been connected to it via fiber optics. It's not exactly cheap storage, actually it's really damn expensive. To give you a picture, we just got a 22TB SAN at my new employer, and it cost way over $100,000. If you start counting price per gigabyte, it's not cheap at all. Of course this does not take into consideration the price of the fiber connections (cards in the server, fiber switches, cables, etc.). Now a growing trend is to use iSCSI instead of fiber. iSCSI is SCSI over Ethernet and ends up being a lot cheaper (though not quite as fast).

Apart from having central storage with higher redundancy, one advantage is performance. A SAN can stripe the data over all the disks in it, for example we have a RAID stripe consisting of over 70 disks...

LoneWolf15 - Thursday, October 19, 2006 - link

(Since I can't edit) I forgot to add that it even looks like Dell has some boxes like these that can be attached directly to their servers with cables (I don't remember, but it might be an SAS setup). Support for a large number of drives, and multiple RAID volumes if necessary.

Pandamonium - Thursday, October 19, 2006 - link

I decided to give myself the project of creating a server for use in my apartment, and this article (along with its subsequent editions) should help me greatly in this endeavor. Thanks AT!

Chaotic42 - Sunday, August 20, 2006 - link

This is a really interesting article. I just started working in a fairly large data center a couple of months ago, and this stuff really interests me. Power is indeed expensive for these places, but given the cost of the equipment and maintenance, it's not too bad. Cooling is a big issue though, as we have pockets of hot and cold air throughout the DC. I still can't get over just how expensive 9GB WORM media is and how insanely expensive good tape drives are. It's a whole different world of computing, and even our 8 CPU Sun system is too damned slow. ;)

at80eighty - Sunday, August 20, 2006 - link

Target Reader here - SMB owner contemplating my options in the server route

again - thank you

you guys fucking \m/

peternelson - Friday, August 18, 2006 - link

Blades are expensive but not so bad on eBay (regular server stuff is also affordable second-hand).

Blades can mix architectures, e.g. IBM blades with the Cell processor could mix with Pentium or maybe Opteron blades.

How important U size is depends if it's YOUR rack or a datacentre rack. Cost/sq ft is more in a datacentre.

Power is not just $cents per kwh paid to the utility supplier.

It is cost of cabling and PDU.

Cost (and efficiency overhead) of UPS

Cost of remote boot (APC Masterswitch)

Cost of transfer switch to let you swap out ups batteries

Cost of having generator power waiting just in case.

Some of these scale with capacity so cost more if you use more.

Yes virtualisation is important.

IBM have been advertising server consolidation (ie not invasion of beige boxes).

But also see STORAGE consolidation. eg EMC array on a SAN. You have virtual storage across all platforms, adding disks as needed or moving the free space virtually onto a different volume as needed. Unused data can migrate to slower drives or tape.

Tujan - Friday, August 18, 2006 - link

"[(o)]/..\[(o)]"

Zaitsev - Thursday, August 17, 2006 - link

Fourth paragraph of intro.

Haven't finished the article yet, but I'm looking forward to the series.