Intel SSD 530 (240GB) Review

by Kristian Vättö on November 15, 2013 1:45 PM EST

AnandTech Storage Bench 2013

When Anand built the AnandTech Heavy and Light Storage Bench suites in 2011, he did so because we didn't have any good tools at the time that would begin to stress a drive's garbage collection routines. Once all blocks have a sufficient number of used pages, all further writes will inevitably trigger some sort of garbage collection/block recycling algorithm. Our Heavy 2011 test in particular was designed to do just this. By hitting the test SSD with a large enough and write-intensive enough workload, we could ensure that some amount of GC would happen.
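To make the mechanism concrete, here is a deliberately simplified sketch, assuming a hypothetical page-mapped flash translation layer with a greedy victim-selection policy. Real controllers use proprietary and far more elaborate schemes, and `ToyFTL` and all of its parameters are invented for illustration. Once the pool of erased blocks runs low, every further host write forces the drive to pick a victim block, copy its still-valid pages elsewhere and erase it; those extra copies show up as write amplification.

```python
import random


class ToyFTL:
    """Hypothetical page-mapped FTL with greedy garbage collection (illustration only)."""

    def __init__(self, num_blocks=64, pages_per_block=32, spare_fraction=0.125):
        self.ppb = pages_per_block
        # Part of the flash is hidden from the host as spare area (over-provisioning).
        self.logical_pages = int(num_blocks * pages_per_block * (1 - spare_fraction))
        self.valid = [set() for _ in range(num_blocks)]  # live logical pages in each block
        self.used = [0] * num_blocks                      # pages programmed since last erase
        self.where = {}                                   # logical page -> physical block
        self.free = list(range(1, num_blocks))            # erased blocks ready for use
        self.active = 0                                   # block currently being filled
        self.in_gc = False
        self.host_writes = 0
        self.flash_writes = 0

    def _open_block(self):
        # Keep at least one erased block in reserve so GC always has room to copy into.
        while len(self.free) <= 1 and not self.in_gc:
            self._collect()
            if self.used[self.active] < self.ppb:
                return  # GC already opened a block that still has free pages
        self.active = self.free.pop()

    def _program(self, lpn):
        if self.used[self.active] == self.ppb:
            self._open_block()
        old = self.where.get(lpn)
        if old is not None:
            self.valid[old].discard(lpn)  # the previous copy becomes stale
        self.valid[self.active].add(lpn)
        self.used[self.active] += 1
        self.where[lpn] = self.active
        self.flash_writes += 1

    def _collect(self):
        # Greedy policy: reclaim the closed block holding the fewest live pages.
        closed = [b for b in range(len(self.used))
                  if b != self.active and b not in self.free]
        victim = min(closed, key=lambda b: len(self.valid[b]))
        self.in_gc = True
        for lpn in list(self.valid[victim]):
            self._program(lpn)  # relocating live data costs extra flash writes
        self.in_gc = False
        self.used[victim] = 0   # erase the victim and return it to the free pool
        self.free.append(victim)

    def write(self, lpn):
        self.host_writes += 1
        self._program(lpn)

    def write_amplification(self):
        return self.flash_writes / self.host_writes


rng = random.Random(0)
ftl = ToyFTL()
for lpn in range(ftl.logical_pages):   # sequential fill: no GC is triggered yet
    ftl.write(lpn)
fill_wa = ftl.write_amplification()
for _ in range(20000):                 # random overwrites eventually force GC
    ftl.write(rng.randrange(ftl.logical_pages))
print(fill_wa, ftl.write_amplification())
```

In this toy model the sequential fill never runs out of erased blocks, so its write amplification stays at 1.0; only the random overwrites that follow push it above 1.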

There were a couple of issues with our 2011 tests that we've been wanting to rectify, however. First, all of our 2011 tests were built using Windows 7 x64 pre-SP1, which meant there were potentially some 4K alignment issues that wouldn't exist had we built the trace on a system with SP1. This didn't really impact most SSDs, but it proved to be a problem with some hard drives. Second, and more recently, we've shifted focus from simply triggering GC routines to really looking at worst-case scenario performance after prolonged random IO.
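The cost of misalignment comes down to simple arithmetic. The sketch below assumes a 4 KiB physical page size, and the `pages_touched` and `is_aligned` helpers are hypothetical names used only for illustration. A 4 KiB request that starts on a 4 KiB boundary maps onto exactly one physical page, while the same request starting at the old 63-sector partition offset straddles two; a 1 MiB (2048-sector) offset is included for comparison.

```python
SECTOR = 512
PAGE = 4096  # assumed physical page size


def pages_touched(offset_bytes, length_bytes, page=PAGE):
    """Number of physical pages spanned by an IO starting at offset_bytes."""
    first = offset_bytes // page
    last = (offset_bytes + length_bytes - 1) // page
    return last - first + 1


def is_aligned(offset_bytes, page=PAGE):
    return offset_bytes % page == 0


legacy_start = 63 * SECTOR     # 32,256 bytes: old 63-sector partition offset
modern_start = 2048 * SECTOR   # 1 MiB partition offset

for start in (legacy_start, modern_start):
    # A 4 KiB write at the start of the partition: 2 pages if misaligned, 1 if aligned.
    print(start, is_aligned(start), pages_touched(start, 4096))
```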

For years we'd felt the negative impacts of inconsistent IO performance with all SSDs, but until the S3700 showed up we didn't think to actually measure and visualize IO consistency. The problem with our IO consistency tests is that they are very focused on 4KB random writes at high queue depths and full LBA spans, which is not exactly a real-world client usage model. The aspects of SSD architecture that those tests stress, however, are very important, and none of our existing tests were doing a good job of quantifying them.
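For reference, the access pattern those consistency tests use is easy to express in code. The sketch below uses a hypothetical `random_write_offsets` helper and assumes a 240 GB (decimal) span to match the drive under review; it draws 4 KiB-aligned write offsets uniformly across the entire LBA range. Queue depth, meaning how many such writes are outstanding at once, is not modeled here.

```python
import random

BLOCK = 4096                # 4 KiB transfer size
CAPACITY = 240 * 10**9      # assumed user capacity: 240 GB (decimal)


def random_write_offsets(n, capacity=CAPACITY, block=BLOCK, seed=None):
    """Yield n block-aligned byte offsets drawn uniformly across the full LBA span."""
    rng = random.Random(seed)
    slots = capacity // block  # number of aligned 4 KiB slots on the drive
    for _ in range(n):
        yield rng.randrange(slots) * block


offsets = list(random_write_offsets(1000, seed=1))
print(min(offsets), max(offsets))
```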

We needed an updated heavy test, one that dealt with an even larger set of data and one that somehow incorporated IO consistency into its metrics. We think we have that test. The new benchmark doesn't even have a name; we've just been calling it The Destroyer (although AnandTech Storage Bench 2013 is likely a better fit for PR reasons).

Everything about this new test is bigger and better. The test platform moves to Windows 8 Pro x64. The workload is far more realistic. Just as before, this is an application-trace-based test: we record all IO requests made to a test system, then play them back on the drive we're measuring and run statistical analysis on the drive's responses.
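In outline, trace playback looks something like the following. This is a minimal sketch and not AnandTech's actual tooling: the `TraceRecord` format and the `submit` callback that stands in for the drive are hypothetical. Each recorded request is replayed in order, and its service time is kept for later statistical analysis. For simplicity the sketch issues one request at a time (queue depth 1), whereas a real playback tool would preserve the concurrency captured in the trace.

```python
import time
from dataclasses import dataclass


@dataclass
class TraceRecord:
    op: str      # "R" for read, "W" for write (hypothetical trace format)
    offset: int  # byte offset on the device
    length: int  # transfer size in bytes


def replay(trace, submit):
    """Replay recorded requests in order and return per-IO service times in microseconds.

    `submit` is a hypothetical callback that issues one request to the drive under
    test and returns when it completes. Requests are issued one at a time (QD1).
    """
    service_times_us = []
    for rec in trace:
        start = time.perf_counter()
        submit(rec)
        service_times_us.append((time.perf_counter() - start) * 1e6)
    return service_times_us


trace = [TraceRecord("W", 0, 4096), TraceRecord("R", 4096, 131072)]
issued = []
times = replay(trace, issued.append)  # stand-in "drive" that only records the requests
print(len(times), "requests replayed")
```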

Imitating most modern benchmarks, Anand crafted the Destroyer out of a series of scenarios. For this benchmark we focused heavily on Photo Editing, Gaming, Virtualization, General Productivity, Video Playback and Application Development. Rough descriptions of the various scenarios are in the table below:

AnandTech Storage Bench 2013 Preview - The Destroyer

| Workload | Description | Applications Used |
|---|---|---|
| Photo Sync/Editing | Import images, edit, export | Adobe Photoshop CS6, Adobe Lightroom 4, Dropbox |
| Gaming | Download/install games, play games | Steam, Deus Ex, Skyrim, Starcraft 2, BioShock Infinite |
| Virtualization | Run/manage VM, use general apps inside VM | VirtualBox |
| General Productivity | Browse the web, manage local email, copy files, encrypt/decrypt files, backup system, download content, virus/malware scan | Chrome, IE10, Outlook, Windows 8, AxCrypt, uTorrent, AdAware |
| Video Playback | Copy and watch movies | Windows 8 |
| Application Development | Compile projects, check out code, download code samples | Visual Studio 2012 |

While some tasks remained independent, many were stitched together (e.g. system backups would run while other scenarios were in progress). The overall stats give some justification to what we've been calling this test internally:

AnandTech Storage Bench 2013 Preview - The Destroyer, Specs

| Metric | The Destroyer (2013) | Heavy 2011 |
|---|---|---|
| Reads | 38.83 million | 2.17 million |
| Writes | 10.98 million | 1.78 million |
| Total IO Operations | 49.8 million | 3.99 million |
| Total GB Read | 1583.02 GB | 48.63 GB |
| Total GB Written | 875.62 GB | 106.32 GB |
| Average Queue Depth | ~5.5 | ~4.6 |
| Focus | Worst-case multitasking, IO consistency | Peak IO, basic GC routines |

SSDs have grown in their performance abilities over the years, so we wanted a new test that could really push high queue depths at times. The average queue depth is still realistic for a client workload, but the Destroyer has some very demanding peaks. When we first introduced the Heavy 2011 test, some drives would take multiple hours to complete it; today most high-performance SSDs can finish the test in under 90 minutes. The Destroyer? So far the fastest we've seen it go is 10 hours. Most high-performance SSDs we've tested seem to need around 12–13 hours per run, with mainstream drives taking closer to 24 hours. The read/write balance is also a lot more realistic than in the Heavy 2011 test. Back in 2011 we just needed something that had a ton of writes so we could start separating the good from the bad. Now that the drives have matured, we felt a test that was a bit more balanced would be a better idea.
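The shift in read/write balance can be checked against the specs table with a few lines of arithmetic. This sketch assumes GB means decimal gigabytes and divides by the sum of reads and writes (for Heavy 2011 that sum is 3.95 million rather than the 3.99 million listed as the total). By bytes moved, Heavy 2011 works out to roughly two-thirds writes, whereas the Destroyer is roughly two-thirds reads.

```python
# Figures copied from the specs table; GB assumed to be decimal (10**9 bytes).
specs = {
    "The Destroyer (2013)": {"reads": 38.83e6, "writes": 10.98e6,
                             "gb_read": 1583.02, "gb_written": 875.62},
    "Heavy 2011": {"reads": 2.17e6, "writes": 1.78e6,
                   "gb_read": 48.63, "gb_written": 106.32},
}


def summarize(s):
    """Return (read share of IOs, read share of bytes, avg read kB, avg write kB)."""
    read_ops_share = s["reads"] / (s["reads"] + s["writes"])
    read_bytes_share = s["gb_read"] / (s["gb_read"] + s["gb_written"])
    avg_read_kb = s["gb_read"] * 1e6 / s["reads"]       # GB -> kB, divided by IO count
    avg_write_kb = s["gb_written"] * 1e6 / s["writes"]
    return read_ops_share, read_bytes_share, avg_read_kb, avg_write_kb


for name, s in specs.items():
    ops, byts, rkb, wkb = summarize(s)
    print(f"{name}: {ops:.0%} of IOs are reads, {byts:.0%} of bytes are reads, "
          f"avg read {rkb:.1f} kB, avg write {wkb:.1f} kB")
```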

Despite the balance recalibration, there's just a ton of data moving around in this test. Ultimately the sheer volume of data here and the fact that there's a good amount of random IO courtesy of all of the multitasking (e.g. background VM work, background photo exports/syncs, etc.) make the Destroyer do a far better job of giving credit for performance consistency than the old Heavy 2011 test. Both tests are valid; they just stress/showcase different things. As the days of begging for better random IO performance and basic GC intelligence are over, we wanted a test that would give us a bit more of what we're interested in these days. As Anand mentioned in the S3700 review, having good worst-case IO performance and consistency matters just as much to client users as it does to enterprise users.

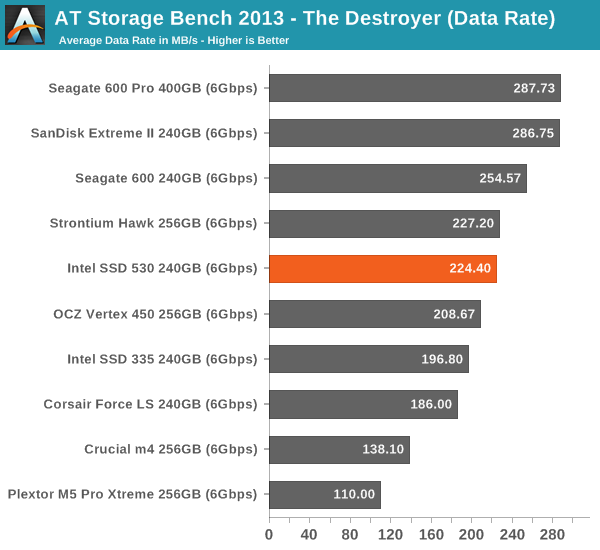

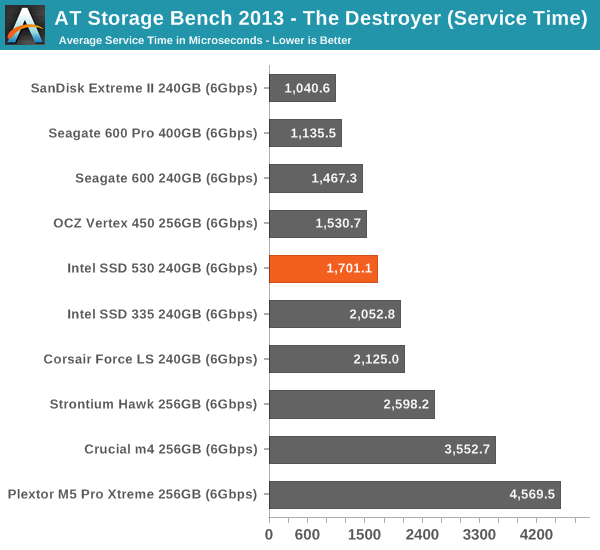

We're reporting two primary metrics with the Destroyer: average data rate in MB/s and average service time in microseconds. The former gives you an idea of the throughput of the drive during the time that it was running the Destroyer workload. This can be a very good indication of overall performance. What average data rate doesn't do a good job of is taking into account the response time of very bursty (read: high queue depth) IO. By reporting average service time, we heavily weight latency for queued IOs. You'll note that this is a metric we've been reporting in our enterprise benchmarks for a while now. With the client tests maturing, the time was right for a little convergence.
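The difference between the two metrics can be sketched on a toy set of completed IOs. The definitions here are assumptions for illustration, not a description of AnandTech's exact calculation: average data rate is taken as total bytes over the span from first issue to last completion, and service time as completion minus issue, so time spent waiting in the queue counts. The `CompletedIO` type and helper names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CompletedIO:
    issue_us: float     # time the request was issued, in microseconds
    complete_us: float  # time the drive reported completion, in microseconds
    nbytes: int         # transfer size in bytes


def average_data_rate_mb_s(ios):
    """Total bytes divided by elapsed time (first issue to last completion), in MB/s."""
    start = min(io.issue_us for io in ios)
    end = max(io.complete_us for io in ios)
    return sum(io.nbytes for io in ios) / (end - start)  # bytes per microsecond equals MB/s


def average_service_time_us(ios):
    """Mean per-request latency in microseconds; time spent queued is included."""
    return sum(io.complete_us - io.issue_us for io in ios) / len(ios)


# Four 4 KiB requests, each taking the drive 100 us to serve.
serial = [CompletedIO(t, t + 100, 4096) for t in (0, 100, 200, 300)]  # issued one after another
burst = [CompletedIO(0, t, 4096) for t in (100, 200, 300, 400)]       # all issued at t = 0 (QD4)

for name, ios in (("serial", serial), ("burst", burst)):
    print(name, average_data_rate_mb_s(ios), average_service_time_us(ios))
```

Both patterns move 16 KiB in 400 us, so their data rate is identical (40.96 MB/s), but the burst's average service time is 250 us versus 100 us for the serial case, because each queued request waits behind the ones ahead of it.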

The SSD 530 does okay in our new Storage Bench 2013. The improvement over the SSD 335 is again quite significant, mostly thanks to the improved performance consistency. However, the SF-2281 simply can't challenge the more modern designs, and for ultimate performance the SanDisk Extreme II is still the best pick.

60 Comments


spacecadet34 - Friday, November 15, 2013

Given that this very drive is today's Newegg Canada's ShellShocker deal, I'd say this review is quite timely!

ExodusC - Friday, November 15, 2013

I picked up a 180GB Intel 530 recently after doing a lot of searching for a cheap SSD for my OS and some programs. It replaced my old first generation 60GB OCZ Vertex. I was hesitant about using a SandForce controller drive, since many people apparently still have issues with certain drives, but I decided to jump on the 530.

I'm pleased with the performance and price, and I was blown away by Intel's software, allowing you to flash the drive to the latest firmware while it's running with your OS on it. That's a huge leap above the pains of trying to get my old OCZ drive to flash to the latest firmware (which is sometimes a destructive flash).

Samus - Friday, November 15, 2013

Amazingly, I haven't ever had an issue with an Intel SandForce drive. I had some quirkiness (not detecting upon reboot/resume from hibernation) with a 330 at launch, but they fixed it almost immediately with a firmware update.

I can't say the same for OCZ. I've owned 3 of their drives and 2 failed, including the RMAs, in under 6 months. One failed in 3 days. It just wouldn't detect in BIOS, even on different machines or with a USB SATA cable. Ironically, the Vertex 2 240GB I have has been solid for over 2 years in my media center running 24/7, so there is no rhyme or reason to it.

If only Intel's networking division was as on-the-ball with software updates as their storage division. My Intel 7260 AC wifi card occasionally doesn't detect any networks and it is a very common problem. At least they sorted the Bluetooth issues.

ExodusC - Friday, November 15, 2013

The 60GB OCZ Vertex I replaced actually was not my first. I RMA'd my original drive after I think I screwed up a firmware flash (it seemed to be my fault and not the drive's). Another reason I'm happy with my Intel drive, the firmware updating is so incredibly painless and low risk.

If you're familiar with Anand's SSD anthology and the history behind the Vertex, you might remember that the first generation OCZ Vertex with production firmware was the first consumer SSD that didn't suffer from awful stuttering issues (due in large part to Anand's communication with OCZ on the issue). Other companies followed suit and prioritized consistent performance over maximum throughput. At the time, the Vertex was a no-brainer (this was in the pre-Intel X25-M days).

You're right that OCZ seems to have some QC issues nowadays. On the plus side, I can definitely say that OCZ's customer support is top notch. They were extremely fast in qualifying me to RMA my drive after the failed firmware flash.

'nar - Monday, November 18, 2013

You are complaining with no details to back it up. You said your Vertex 2 works fine, but you failed to mention the model OCZ drives that failed.

I have used Vertex (Limited, 2, 3) and now Vector drives and have not had a bad experience yet. But I looked into the hardware and never considered the Solid or Agility series in the first place. I have replaced another guy's SSD three times. I finally told him to give up on RMA's and buy a quality drive. Solid and Agility are not quality, they are cheap. That's why OCZ finally dropped them.

I've installed dozens of SSDs, mostly Intel/SandForce models, and have never had an unexplained failure. I did have one failure, but that system killed a hard drive a month even before I installed the SSD, so it is just a quirk of that system.

I have OCZ in all of my own systems (9) because they eke out a bit more performance, but the Intel Toolbox is a winner for me to use for others where I cannot be there for support.

jonjonjonj - Thursday, November 21, 2013

looks like you made an ocz fanboy mad. ocz deserves the terrible reputation they have and after all the bad drives they sold i wouldn't touch one.

Bullwinkle J Moose - Saturday, November 23, 2013

You did not screw up the firmware flash!!!

The number one failure mechanism for OCZ is a firmware update, as could easily have been verified by the complaints at OCZ's forum and Newegg customer reviews.

I have torture tested OCZ SSDs (Vertex 1 and 2) by killing power, not aligning partitions, defragging and several other methods not recommended by OCZ.

Nothing would damage the drives until the firmware was updated as per OCZ instructions, as can be seen by the thousands of customer complaints.

Anyone commenting otherwise is a LIAR and did not research this topic thoroughly or honestly!

Cellar Door - Friday, November 15, 2013

My Intel failed after just a year and a half - so don't think they are immune to it.

Sivar - Saturday, November 16, 2013

This is true. Nothing is immune to manufacturing defects.

I had an opportunity for a few years to see actual return rates for many hard drive and SSD manufacturers. Intel SSDs consistently had the lowest failure rates in the industry, at least through the 520. I don't have the most current data, but I would be surprised if the numbers suddenly changed since then.

Sivar - Saturday, November 16, 2013

Note that the OCZ Vertex 3 and later have been pretty solid. The previous generations were so alarmingly bad that I am a little surprised they are still in business.