Inside the First Android Wear Devices: LG G Watch & Samsung Gear Live Teardown

by Anand Lal Shimpi on June 30, 2014 4:50 PM EST

Last week at IO, Google gave attendees a choice of one of two Android Wear devices to take home and get experience with the platform. Although I personally opted for LG's G Watch (the display looked better), my friend James Bruce at ARM managed to get both and spent the next day taking them apart. James sent over high res pics of the result and gave us permission to post the images here (Update: ARM posted its own blog on the teardown here). I'm working on a review of Android Wear and the G Watch now (and yes, I have battery life data), but I hadn't yet taken it apart.

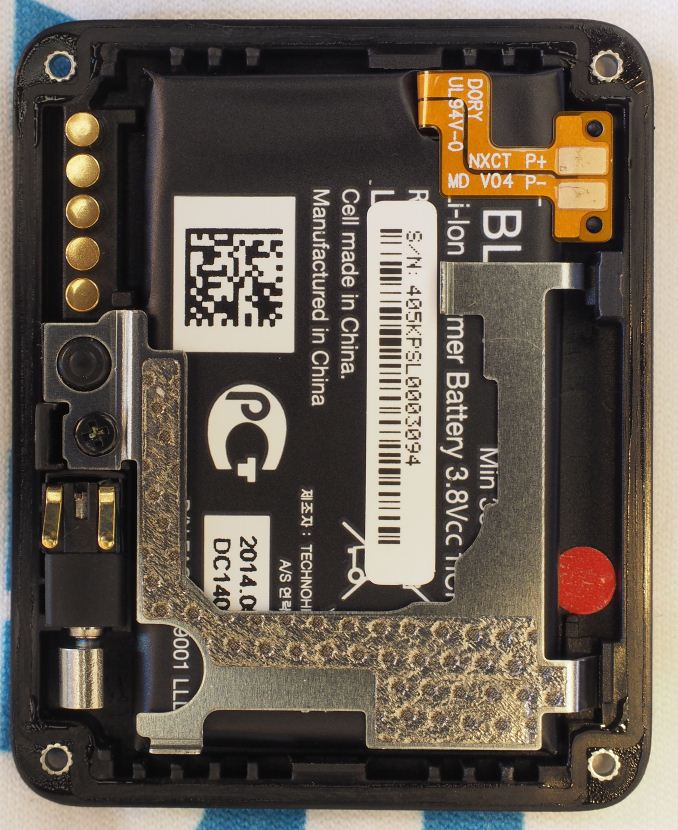

The G Watch turns out to be pretty easy to take apart. There are four Torx T5 screws on the back; removing them gives you access to the internals. The 400mAh battery is integrated into the back cover. ARM's teardown confirms 3.8V chemistry, which works out to 1.52Wh of total capacity.
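As a quick sanity check on that capacity figure, here is a minimal sketch of the conversion from rated capacity and nominal voltage to energy. The function name is made up for illustration; the 400mAh and 3.8V inputs are the G Watch values quoted above.

```python
def battery_energy_wh(capacity_mah: float, nominal_voltage_v: float) -> float:
    """Return energy in watt-hours from rated capacity (mAh) and nominal voltage (V)."""
    # Convert mAh to Ah, then multiply by voltage: Ah * V = Wh
    return capacity_mah / 1000.0 * nominal_voltage_v


# G Watch figures from the teardown: 400mAh at 3.8V
g_watch_wh = battery_energy_wh(400, 3.8)
print(f"{g_watch_wh:.2f} Wh")  # prints 1.52 Wh
```

The same helper applies to any cell once its rated capacity and nominal voltage are known.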

In the photo above (left) you can see the thin gasket that runs around the perimeter of the G Watch, helping to give it its IP67 rating (fully sealed against dust, submersible in up to 1 meter of water for up to 30 minutes).

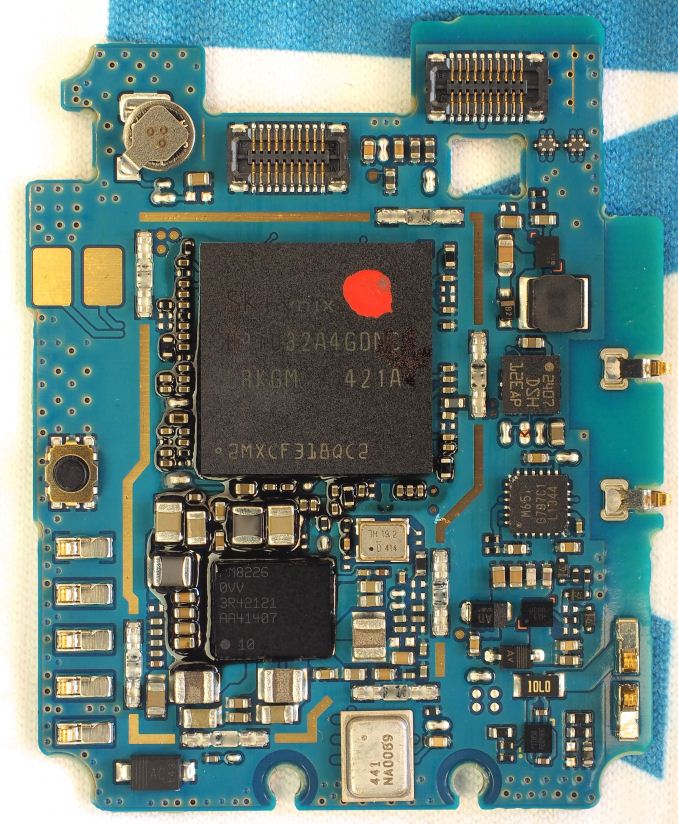

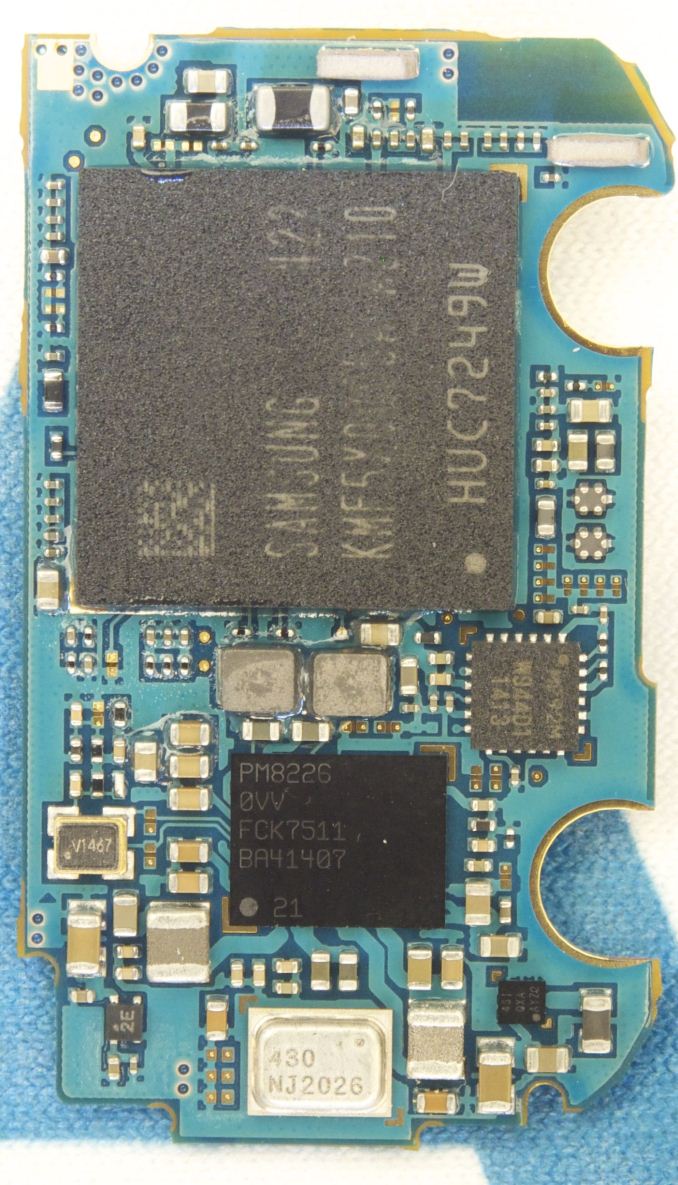

Two more screws hold the incredibly small PCB in place. The Qualcomm APQ8026 SoC (4 x Cortex A7 + Adreno 305 graphics) is underneath the Hynix DRAM device. There's a discrete Qualcomm PM8226 PMIC just south of the SoC.

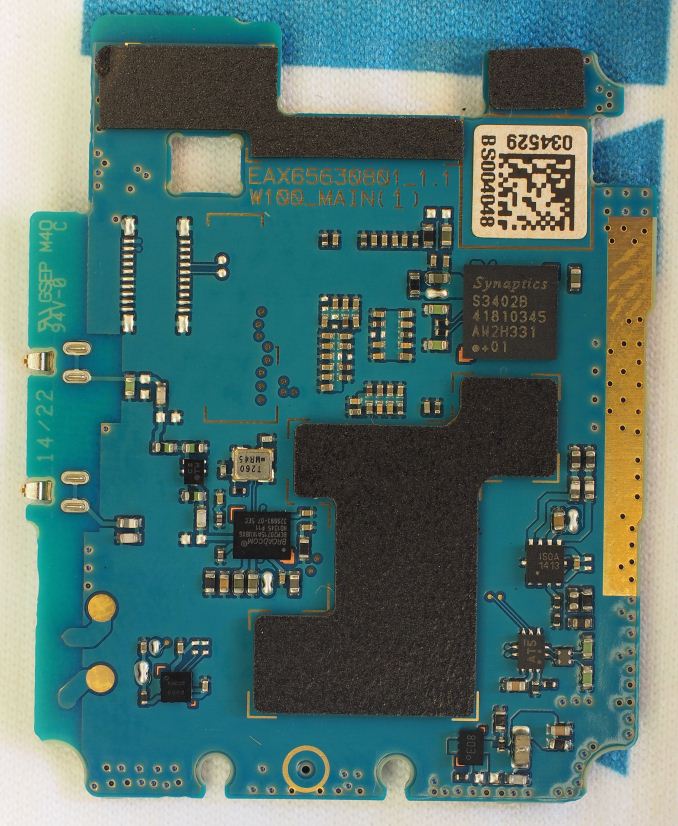

On the flip side of the PCB we see Synaptics' touch controller (the same model used in the Moto X).

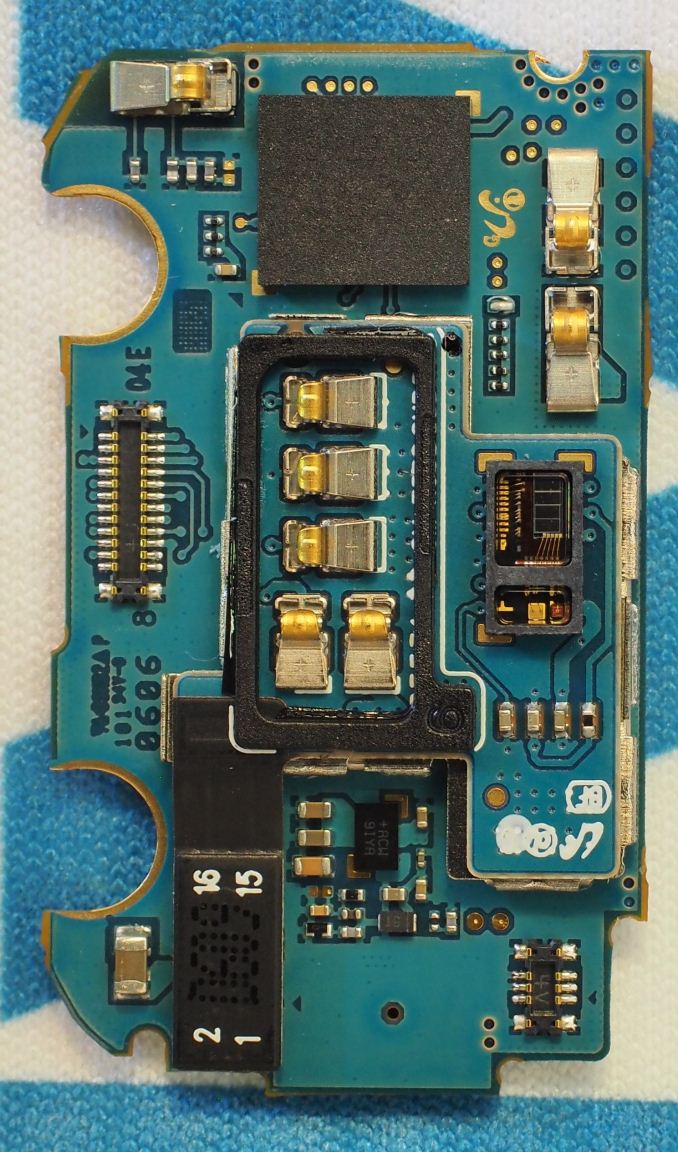

The Gear Live is also held together by four screws, although dismantling the watch takes a little more effort. The battery and heart rate monitor are both enclosed in the back of the watch. Samsung is also using 3.8V chemistry for its watch battery.

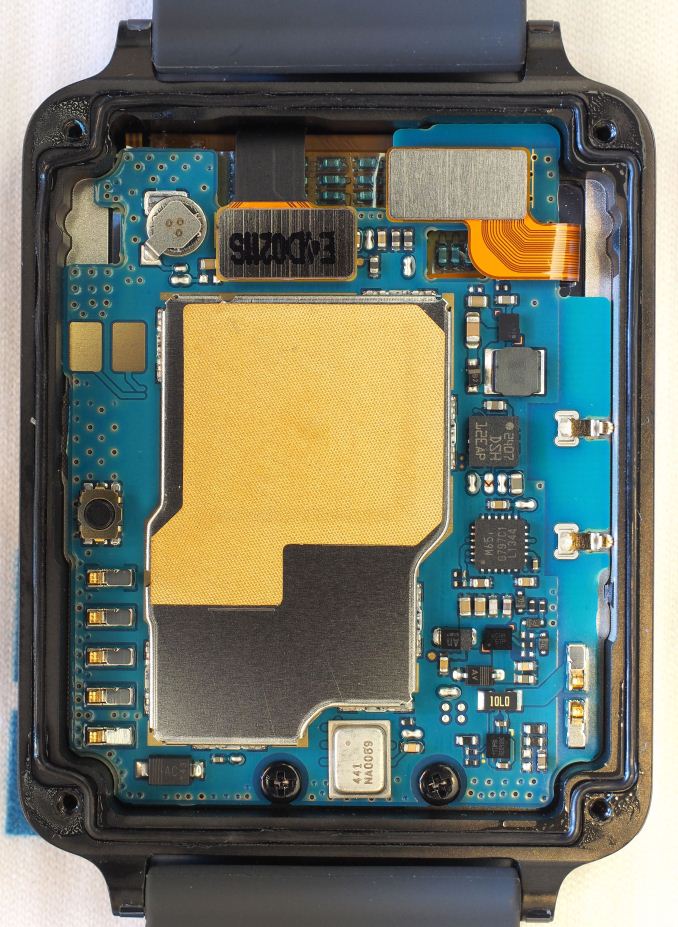

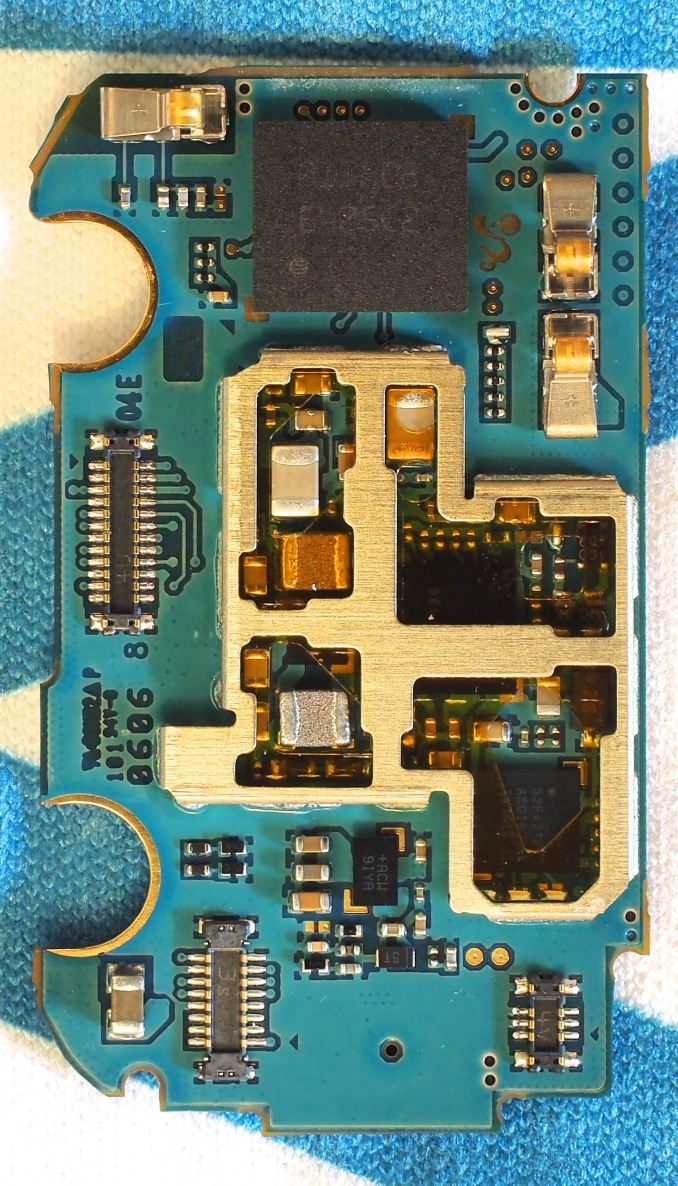

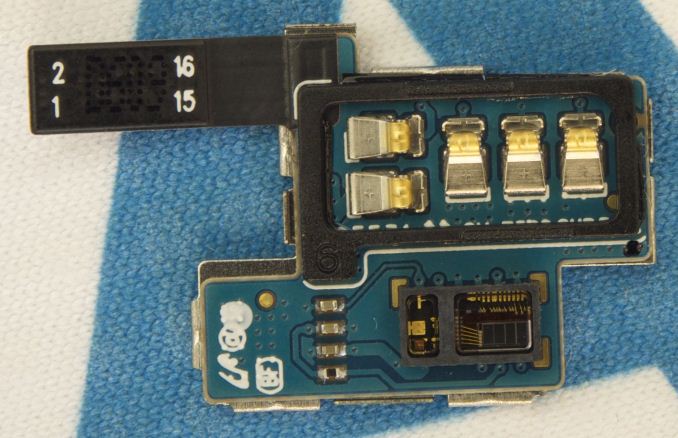

The logic board in the Gear Live is a bit more complex. There's a second layer stacked on the main logic board that also acts as an RF can.

We see the same APQ8026 SoC from Qualcomm below a Samsung DRAM device in a PoP stack.

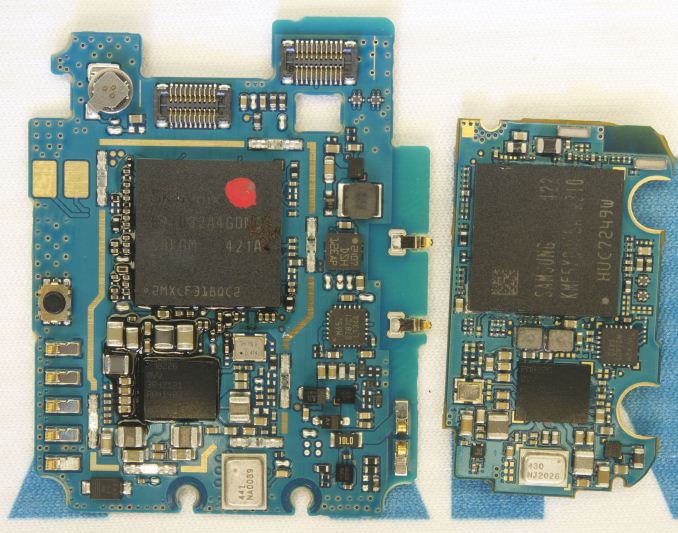

Overall Samsung definitely has the more compact (and complex) internal PCB (the picture at the top of the article compares the two side by side), which makes sense given the company's relative head start in this space.

There's been a ton of innovation in the internal layout of smartphones over the years. As these are the very first Android Wear devices, it'll be interesting to see how the internal structure evolves over the coming years. One thing is for sure: those batteries are going to have to get thinner, or find a new place to live. Perhaps split up and distributed into a watch band?

26 Comments

bengildenstein - Monday, June 30, 2014 - link

It's humbling to think that these smartwatches feature SoCs that should be comparable in CPU/GPU performance to my 2012 Nexus 7. These are extremely capable computers, certainly capable of running the majority of content on the Play Store, though not in a practical way given the ultra-small display.

JeffFlanagan - Monday, June 30, 2014 - link

The SOC may be comparable to your phone, but if the CPU is cranked as fast as the one in your phone to run phone apps, the battery life would have to be terrible. These batteries are tiny!

bengildenstein - Monday, June 30, 2014 - link

You are correct! I remember reading that the clock rate of the CPU in these smartwatches was around 1.2GHz, but I think that was literature on the typical clocks of the SoC itself (targeted for low-end smartphones). In practice, a little googling has revealed that they are clocked at around 800MHz, which is ~60% of the speed (and the A7 is slower than the A9). I would expect that the GPU would be underclocked as well (though even at very low-clocks, the 305 may be comparable to the Tegra 3).

Given another generation iteration, these devices should feature Cortex A53, or equivalent CPUs which would be clock-for-clock as capable as the A9 found in Nexus 7, though it remains to be seen if the final clock speed will reach the 1.3GHz found in the tablet. Given a node-shrink (which is looking more like 14nm FinFET every day) they most certainly should perform as well, if not better.

As it currently stands, these smartwatches are still very capable computers!

DanNeely - Monday, June 30, 2014 - link

I wouldn't be surprised to see them go the other direction. Like macs, I don't see any need for a quad core A7 CPU in the first place. I suspect it had more to do with picking something that would require minimal porting effort and/or being able to use an off the shelf SoC with support for features that haven't trickled down to lower end chips (eg the newest bluetooth), and wouldn't be surprised if a future generation launched with a dual core A5 or even a single core M3 to extend battery life or allow for a smaller package.

Morawka - Tuesday, July 1, 2014 - link

and these SOC's are designed for phones. imagine how much battery life they'll get when they design a soc around a watch.

Thrawn - Monday, June 30, 2014 - link

The highest clock speed may not be the best way to save power but doing Intel's race to sleep method for something like this is still valid and requires a powerful CPU to actually be the lowest energy usage that a responsive device is going to get. The super low wattage CPUs are used in devices that don't have to directly interact with us and so we don't notice slower speed.

macs - Monday, June 30, 2014 - link

I still don't get why they use quad core SOC for a watch. 2 faster cores should be a better solution for mobile devices in general and even more on a watch...

knightspawn1138 - Monday, June 30, 2014 - link

I would guess that the quad-core at a lower speed helps conserve battery by allowing the CPU to complete more tasks simultaneously, reducing the time the chip would spend out of low-power mode.

DanNeely - Monday, June 30, 2014 - link

Preliminary benchmarking results suggest that only 1 core is enabled. (Single and multi-core versions of geekbench returned the same results.) This lends support to my speculation that the chip was picked primarily because it made implementation easier, not because they needed this much cpu power.

http://arstechnica.com/gadgets/2014/06/reviewing-a...

Guspaz - Monday, June 30, 2014 - link

These things look surprisingly space inefficient on the inside... Looks like there's significant room for improvement for manufacturers who really go nuts in miniaturization, like mounting components to thinner flexible substrates.