ATI Radeon HD 3870 X2: 2 GPUs 1 Card, A Return to the High End

by Anand Lal Shimpi on January 28, 2008 12:00 AM EST

Posted in GPUs

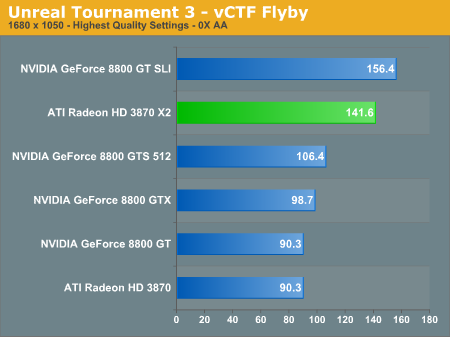

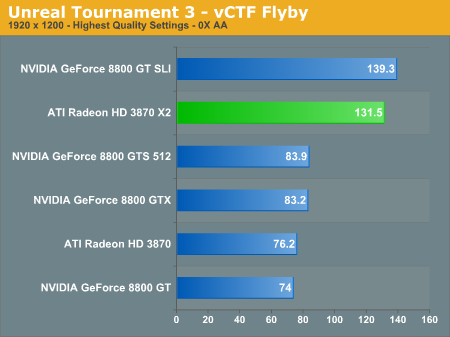

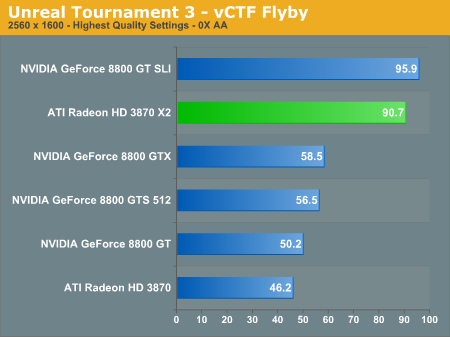

Unreal Tournament 3

We used the built-in vCTF flyby in Unreal Tournament 3, using the -compatscale=5 switch to ensure the highest in-game quality settings were used. We ran each flyby for 90 seconds and are reporting the average frame rates.
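As a rough illustration of what an average frame rate over a timed run means, the sketch below computes it from per-frame render times. This is not the harness used for this review; the function names and the synthetic frame times are assumptions made for the example.

```python
def average_fps(frame_times_s):
    """Average frame rate over a run: total frames / total elapsed seconds."""
    total_time = sum(frame_times_s)
    if total_time <= 0:
        raise ValueError("run must have positive duration")
    return len(frame_times_s) / total_time


def minimum_fps(frame_times_s):
    """Instantaneous frame rate implied by the single slowest frame."""
    return 1.0 / max(frame_times_s)


# Hypothetical 90-second run (made-up data, not measured results):
# 8900 frames at 10 ms each (89 s) plus 10 slow frames at 100 ms each (1 s).
frame_times = [0.010] * 8900 + [0.100] * 10

if __name__ == "__main__":
    print(f"average: {average_fps(frame_times):.1f} fps")
    print(f"minimum: {minimum_fps(frame_times):.1f} fps")
```

With this made-up data the average works out to about 99 fps even though the slowest frames correspond to 10 fps, which is why an average alone can hide brief dips.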

There are obvious strengths to having two GPUs and they're very visible here in our UT3 scores. Compared to the single-card NVIDIA solutions, we're looking at a 50%+ performance advantage. Not bad at all.

74 Comments

Cyspeth - Thursday, February 21, 2008

At last a return to multi-processor graphics acceleration. More power for the simple expedient of more GPUs. I look forward to getting two of these babies and setting them up for crossfire mode. Quad GPU FTW.

This card seems to check out pretty nicely, and I haven't heard of many problems as yet. I've heard of only one really bad X2 horror story, which was a DOA, and ATI happily replaced the defective card. Anyone who would rather have an nvidia 8800 is out of their mind. Not only is it less powerful and less value for money, but nvidia is really stingy about replacement of defects, not to mention they don't have one reliable third party manufacturer, in the face of ATI's 2 really good ones (Sapphire and HIS).

I've never had a problem with any ATI card I've owned, but every single nvidia one has had some horrible design flaw leading to its horrible, crippling failure, usually through overheating.

It's good to see an honest company making some headway in the market. I would hope that ATI can keep up their lead over nvidia with this (or another) series of cards, but that doesn't seem likely with their comparative lack of market share.

MadBoris - Saturday, February 2, 2008

While it's good to see AMD finally do something decent that performs well, I'm not so sure it's enough at this stage. AMD needs all the good press they can get, but $450 seems a bit much compared to the 8800GT @ $250 without a large towering lead. Furthermore, the 8800 GT SLI gives it a pretty good stomping @ $500. Hopefully the multi-gpu solution is more painless and driver bugs get worked out soon.

Also, it would be a big disappointment and misstep for Nvidia not to produce a single GPU real soon that ties or supersedes 8800 ultra SLI performance. It's been 15 months since 8800 ultra came out, that's normal time for a generation leap (6800, 7800, 8800). So unless Nvidia has been resting on its laurels it should produce a killer GPU, and then if they make it a multi-GPU solution it should by all means smoke this card. Here's hoping Nvidia hasn't been sleeping; if they just make another minor 8800 revision and call it next gen, then by all means ATI will be a competitor.

MadBoris - Saturday, February 2, 2008

Also, from a price/performance standpoint it would be nice to see how it performs against an 8800GT SLI 256MB in current games. 8800 GT 256MB SLI can be had for $430 and I would guess it would perform better at low-med resolutions than this x2.

Giacomo - Saturday, February 2, 2008

I wouldn't spend four hundred bucks for gaming at 1280x*; one single 512MB GT or GTS is more than enough for that.

And as you scale up to 1680*1050, I personally believe that buying 2x256MB is silly. Therefore, I believe that the 512MB one is the only reasonable 8800GT.

Your plan sounds like low-res overdriving and doesn't seem wise to me.

Giacomo

MadBoris - Saturday, February 2, 2008

It's not my plan, I just said it would be good for a price/performance comparison for something like a future review.

Although I no longer game at 1280, that doesn't mean others don't, and two 8800 GTs with more performance at a lower price may be beneficial for them. Personally I think SLI is a waste anyway, but if you are considering an X2, 8800 SLI is an affordable comparison whether at 256 or 512.

Aver - Friday, February 1, 2008

I have searched through anand's archive but I couldn't find the command line parameters used for testing Unreal Tournament 3 in these reviews. Can anybody help?

tshen83 - Thursday, January 31, 2008

That PLX bridge chip is PCI-Express 1.1, not 2.0. Using a PCI-Express 1.1 splitter to split 16x 1.1 bandwidth into 8x 1.1 bandwidth to feed two PCI-Express 2.0 capable Radeon HD3870 chips shows AMD's lack of engineering efforts. They basically glued two HD3870 chips together. Speaking of the HD3870, it is basically an HD2900XT on a 55nm process with a beat up 256bit memory bus. Not exactly good engineering if you ask me. (Props to TSMC, definitely not to ATI.)

Scaling is ok, but not great. The review didn't test dual HD3870 X2s in Crossfire, which would show four Radeon HD3870s in Crossfire mode. I have seen reports saying that 4 way scaling is even worse than 2 way. It scales like lg(n): adding 2 more Radeon 3870s is only 40% faster than two way HD3870.

Giacomo - Thursday, January 31, 2008

It's always curious to see users talking about "lack of engineering efforts" and so on, as if they could teach Intel/AMD/nVIDIA how they should implement their technologies. PCI-Express 2.0 is now out, and so we start seeing people who look at the previous version as if it definitely should be dismissed. Folks, maybe we should at least consider that the 3870 design simply doesn't generate enough bandwidth to justify a PCI-Express 2.0 bridge, shouldn't we?

Sorry, but I kinda laugh when I read "engineering" suggestions from users, supposedly like me, regardless of how good their knowledge is. We're not talking about a Do-It-Yourself piece of silicon, we are talking about the latest AMD/ATi card. And the same, of course, would apply to an nVIDIA one, to Intel stuff, and so on. The most correct approach, I think, would be something like this: "Oh, see, they put a PCI-Express 1.1 bridge in there, and look at the performance: when it's not cut by bad driver support, the scaling is amazing... So it's true that PCI-Express 2.0 is still overkill for today's cards".

That one sounds rational to me.

About Crossfire, CrossfireX is needed for two 3870X2 to work together, and I've read in more than one place on the web that drivers for that aren't ready yet. Apart from this, before spitting on scaling and so on, don't forget that all these reviews are done with very early beta drivers...

Giacomo

Axbattler - Tuesday, January 29, 2008

I seem to remember at least one review pointing out some fairly dire minimum frame rates. Basically, the high and impressive maximum frame rate allowed the card to have a very good average frame rate despite a few instances of very dire minimum frame rates (if I remember correctly, it sometimes dips below that of a single core card).

Did Anand notice anything like this during the test?

Giacomo - Wednesday, January 30, 2008

That's not exactly right. I mean, the average is not pulled up by any incredible maximum; it works in a different way. The maximums are not incredibly higher than the normal average. It's simply that those minimums are rare, caused by particular circumstances in which the application (the game) loads stuff onto the card while it's rendering, which it shouldn't do. This is the gist of ATi's answer posted in the review by DriverHeaven. They told ATi about these instantaneous drops and that was the answer.

Of course, given that other cards didn't show similar problems, I believe that the problem is more driver-related, and ATi should really work fast to fix issues like this one.

However, DriverHeaven reported that this issue showed up mostly during scenario loading, and therefore wasn't too annoying.

From what I've understood.

My two cents,

Giacomo