The Intel SSD 660p SSD Review: QLC NAND Arrives For Consumer SSDs

by Billy Tallis on August 7, 2018 11:00 AM EST

When NAND flash memory was first used for general purpose storage in the earliest ancestors of modern SSDs, the memory cells were treated as simply binary, storing a single bit of data per cell by switching cells between one of two voltage states. Since then, demand for higher capacity has pushed the industry to store more bits in each flash memory cell.

In the past year, the deployment of 64-layer 3D NAND flash has allowed almost all of the SSD industry to adopt three-bit-per-cell TLC flash, which only a few short years ago was the cutting edge. Now four-bit-per-cell, or Quad-Level Cell (QLC), NAND flash is the current frontier.

Each transition to storing more bits per memory cell comes with significant downsides that offset the appeal of higher storage density. Storing four bits per cell requires QLC to distinguish between 16 voltage levels in each flash memory cell. Reading and writing with adequate precision is unavoidably slower than accessing NAND flash that stores fewer bits per cell. Error rates are higher, so QLC-capable SSD controllers need very robust error correction. Data retention and write endurance are also reduced.
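The 16-level figure follows directly from the bit count: a cell storing n bits must hold one of 2^n distinguishable states. The short sketch below tabulates this for SLC through QLC. The "margin" column is purely illustrative and assumes a fixed, normalized voltage window split into equally spaced levels; real NAND threshold-voltage distributions are not this simple.

```python
# Illustrative only: assumes a fixed, normalized voltage window of 1.0
# divided into equally spaced levels. Real NAND threshold-voltage
# distributions are neither equally spaced nor this simple.
NAMES = {1: "SLC", 2: "MLC", 3: "TLC", 4: "QLC"}


def voltage_levels(bits_per_cell: int) -> int:
    """Number of distinct states a cell must hold to store n bits."""
    return 2 ** bits_per_cell


def relative_margin(bits_per_cell: int, window: float = 1.0) -> float:
    """Spacing between adjacent levels in the hypothetical fixed window."""
    return window / (voltage_levels(bits_per_cell) - 1)


if __name__ == "__main__":
    for bits, name in NAMES.items():
        print(f"{name}: {voltage_levels(bits):2d} levels, "
              f"margin {relative_margin(bits):.3f} of window")
```

Under this toy model, each added bit roughly halves the spacing between neighboring levels, which is one way to see why QLC reads and writes need more precision and stronger error correction than TLC.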

But QLC NAND is entering a market where TLC NAND can provide more performance and endurance than most consumers really need. QLC NAND doesn't introduce any fundamentally new problems; it just suffers more severely from the challenges that TLC NAND has already overcome. The same strategies widely used to mitigate the downsides of TLC NAND also apply to QLC NAND, but QLC will always be the cheaper, lower-quality alternative to TLC NAND.

On the commercial product front, Micron introduced an enterprise SATA SSD with QLC NAND this spring, and everyone else is working on QLC NAND as well. But for consumers, where the pricing advantages of QLC will be most noticeable, Intel is the first to market with a consumer SSD that uses QLC NAND flash memory. Today the company is taking the wraps off its new Intel SSD 660p, an entry-level M.2 NVMe SSD with up to 2TB of QLC NAND.

Intel has reportedly cut off further development of consumer SATA drives, so naturally their first consumer QLC SSD is a member of their 6-series, the lowest tier of NVMe SSDs. The 660p replaces the Intel SSD 600p, Intel's first M.2 NVMe SSD and one of the first consumer NVMe drives aimed at being cheaper and slower than premium high-end NVMe SSDs, which it achieved by using TLC NAND at a time when NVMe SSDs were still primarily using MLC NAND. The purpose of the Intel 660p is to push prices down even further while still providing better performance than SATA SSDs or the 600p.

| Intel SSD 660p Specifications | |||||

| Capacity | 512 GB | 1 TB | 2 TB | ||

| Controller | Silicon Motion SM2263 | ||||

| NAND Flash | Intel 64L 1024Gb 3D QLC | ||||

| Form-Factor, Interface | single-sided M.2-2280, PCIe 3.0 x4, NVMe 1.3 | ||||

| DRAM | 256 MB DDR3 | ||||

| Sequential Read | up to 1800 MB/s | ||||

| Sequential Write (SLC cache) | up to 1800 MB/s | ||||

| Random Read (4kB) | up to 220k IOPS | ||||

| Random Write (4kB, SLC cache) | up to 220k IOPS | ||||

| Warranty | 5 years | ||||

| Write Endurance | 100 TB 0.1 DWPD |

200 TB 0.1 DWPD |

400 TB 0.1 DWPD |

||

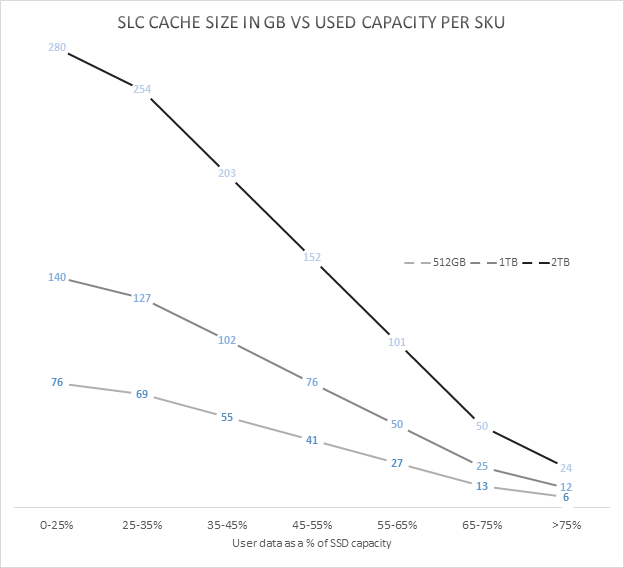

| SLC Write Cache | Minimum | 6 GB | 12 GB | 24 GB | |

| Maximum | 76 GB | 140 GB | 280 GB | ||

| MSRP | $99.99 (20¢/GB) | $199.99 (20¢/GB) | TBD | ||

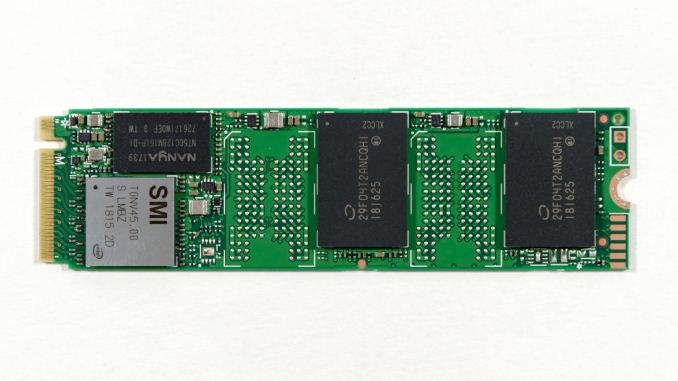

Looking under the hood, Intel's partnership with Silicon Motion for client and consumer SSDs continues with the use of the SM2263 NVMe SSD controller for the 660p. This is the smaller 4-channel sibling of the SM2262 and SM2262EN controllers, which are doing very well in the higher-end segments of the SSD market. A 4-channel controller makes sense for a QLC drive, because the large 1Tb (128GB) per-die capacity of Intel's 64-layer 3D QLC NAND means it only takes a few dies to reach mainstream drive capacities.
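To make the "only a few dies" point concrete, here is a quick back-of-the-envelope sketch. It assumes every die is a 1Tb (128GB) QLC die, uses raw binary capacities, and assumes dies are spread evenly across the SM2263's four channels; the helper names are hypothetical.

```python
# Hypothetical helper: assumes every die is Intel's 1 Tb (128 GB) 64-layer
# QLC die, raw binary capacities, and dies spread evenly over 4 channels.
DIE_CAPACITY_GB = 128  # 1 Tb = 1024 Gb = 128 GB
CHANNELS = 4


def dies_needed(capacity_gb: int) -> int:
    # Ceiling division: a partial die still requires a whole die.
    return -(-capacity_gb // DIE_CAPACITY_GB)


def dies_per_channel(capacity_gb: int) -> float:
    return dies_needed(capacity_gb) / CHANNELS


if __name__ == "__main__":
    for cap in (512, 1024, 2048):
        print(f"{cap} GB: {dies_needed(cap)} dies, "
              f"{dies_per_channel(cap):g} per channel")
```

The 512GB result agrees with the four-die configuration of the smallest 660p, and even the 2TB model needs only 16 dies, or four per channel under the even-distribution assumption.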

The 660p lineup starts at 512GB (four QLC dies) and scales up to 2TB. All three capacities are single-sided M.2 2280 cards with a constant 256MB of DRAM. Mainstream SSDs typically use 1GB of DRAM per 1TB of flash, so the 660p is rather short on DRAM even at the 512GB capacity. For a cost-oriented SSD it might make sense to use the DRAMless SM2263XT controller and the NVMe Host Memory Buffer feature, but that significantly complicates firmware development and error handling. The small size of the SM2263 controller allows Intel to fit all four NAND packages used by the 2TB model on one side of the PCB.
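The 1GB-per-1TB rule of thumb is commonly explained by a flat, page-level logical-to-physical mapping table with one 4-byte entry per 4KiB of flash. The sketch below works through that arithmetic for the 660p's 256MB of DRAM. The table layout is an assumption for illustration, not a description of the SM2263 firmware, which may organize or cache its map differently.

```python
# Assumption: flat page-level FTL map, one 4-byte entry per 4 KiB mapping
# unit. This is the usual rationale for the ~1 GB DRAM per 1 TB flash rule
# of thumb, not a description of Intel's or Silicon Motion's firmware.
KIB = 1024
MIB = 1024 * KIB
GIB = 1024 * MIB

ENTRY_BYTES = 4
MAPPING_UNIT = 4 * KIB


def full_map_bytes(flash_bytes: int) -> int:
    """DRAM needed to hold the whole mapping table at once."""
    return flash_bytes // MAPPING_UNIT * ENTRY_BYTES


def fraction_cached(flash_bytes: int, dram_bytes: int = 256 * MIB) -> float:
    """Share of the map that fits in DRAM (capped at 100%)."""
    return min(1.0, dram_bytes / full_map_bytes(flash_bytes))


if __name__ == "__main__":
    for cap_gib in (512, 1024, 2048):
        flash = cap_gib * GIB
        print(f"{cap_gib} GiB flash: map needs "
              f"{full_map_bytes(flash) // MIB} MiB, "
              f"256 MiB DRAM holds {fraction_cached(flash):.1%}")
```

On this simplified model, 256MB covers only half of the 512GB model's map and an eighth of the 2TB model's, which is consistent with the observation that the 660p is short on DRAM at every capacity.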

Intel doesn't break down the 660p's performance specs by drive capacity, and the read and write ratings are the same (1800 MB/s sequential, 220k IOPS random) because the write figures reflect SLC write caching. Intel doesn't provide any official spec for write performance after the SLC cache is filled, but we've measured about 100 MB/s on our 1TB sample. This steady-state sequential write speed will vary with drive capacity. Intel offers a 5-year warranty on the drive, and write endurance is about 0.1 drive writes per day: lower than the 0.3 DWPD typical of mainstream consumer SSDs, but something that should still be adequate for most users.
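The 0.1 DWPD figure can be checked against the rated endurance in the spec table. This is a minimal sketch of the standard conversion, assuming decimal units (1 TB = 1000 GB) for both the endurance rating and the drive capacity, and a 5 × 365-day warranty period.

```python
# Sketch: convert rated endurance (TBW) into drive writes per day over the
# warranty period. Assumes decimal units for both rating and capacity.
WARRANTY_YEARS = 5


def dwpd(tbw: float, capacity_tb: float, years: int = WARRANTY_YEARS) -> float:
    """Full-drive writes per day that would exhaust the rating on schedule."""
    days = years * 365
    return tbw / (capacity_tb * days)


if __name__ == "__main__":
    for cap_tb, tbw in ((0.512, 100), (1.0, 200), (2.0, 400)):
        print(f"{cap_tb:g} TB, {tbw} TBW -> {dwpd(tbw, cap_tb):.2f} DWPD")
```

All three capacities come out at roughly 0.11 DWPD, matching Intel's rounded 0.1 DWPD rating.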

Probably the most important aspect of a consumer QLC drive design is the behavior of the SLC cache. Consumer TLC drives universally treat a portion of their NAND flash memory as pseudo-SLC, using that higher-performing memory segment as a write cache. QLC SSDs are even more reliant on SLC caching because the performance of raw QLC NAND is even lower than that of TLC.

No SLC caching strategy can perfectly accelerate every workload and use case, and there are significant tradeoffs between different strategies. The Intel SSD 660p employs a variable-size SLC cache, and all data written goes first to the SLC cache before being compacted and folded into QLC blocks. This means that the steady-state 100MB/s sequential write speed we've measured is significantly below what the drive could deliver if the writes went directly to the QLC without the extra SLC to QLC copying step getting in the way. When the drive is mostly empty, up to about half of the available flash memory cells will be treated as SLC NAND. As the drive fills up, blocks will be converted to QLC usage, shrinking the size of the cache and making it more likely that a real-world use case could write enough to fill that cache.
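Running a QLC cell in SLC mode stores one bit instead of four, so each gigabyte of SLC cache consumes four gigabytes' worth of QLC capacity. The rough sketch below models the "up to about half of the cells" case. It deliberately ignores the static minimum cache, spare area and Intel's actual firmware policy, all of which are assumptions left out of the model.

```python
# Rough model (assumption): a fraction of the raw QLC cells is run in
# pseudo-SLC mode, storing 1 bit per cell instead of 4. Ignores the static
# minimum cache, spare area, and Intel's actual firmware policy.
QLC_BITS = 4
SLC_BITS = 1


def slc_cache_gb(raw_qlc_gb: float, slc_fraction: float) -> float:
    """SLC capacity obtained by running slc_fraction of the cells as SLC."""
    if not 0.0 <= slc_fraction <= 1.0:
        raise ValueError("slc_fraction must be between 0 and 1")
    return raw_qlc_gb * slc_fraction * SLC_BITS / QLC_BITS


def qlc_capacity_consumed_gb(cache_gb: float) -> float:
    """QLC capacity given up to provide cache_gb of SLC."""
    return cache_gb * QLC_BITS / SLC_BITS


if __name__ == "__main__":
    print(slc_cache_gb(1024, 0.5))        # half the cells of a 1TB drive
    print(qlc_capacity_consumed_gb(140))  # QLC space behind a 140 GB cache
```

Half the cells of a 1TB drive yields about 128GB of SLC, in the same neighborhood as the 140GB maximum Intel lists for that model; the remaining gap presumably reflects the details this model leaves out. It also shows why the cache must shrink as the drive fills: every gigabyte of user data stored as QLC removes four gigabytes' worth of cells that could otherwise serve as cache... or, equivalently, one gigabyte of potential SLC cache per four gigabytes of QLC data.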

Our current test suite cannot fully capture the dynamics of a variable-size SLC cache, and we haven't had the 660p in hand long enough to thoroughly test it at various fill levels. When the Intel SSD 660p is mostly empty and the SLC cache is huge, many of our standard benchmarks end up primarily testing the SLC cache, for reads as well as writes, because under these conditions the 660p isn't very aggressive about moving data from SLC blocks to QLC. As a result, we ran our synthetic benchmarks both with our standard methodology and with a completely full drive, so that the latter tests measure the performance of the QLC memory (with an SLC cache too small to entirely contain any of our tests). The two sets of scores thus represent the two extremes of performance that the Intel SSD 660p can deliver. The full-drive results in this review represent a worst-case scenario that real-world usage will almost never encounter: our tests give the drive limited idle time to flush the SLC cache, whereas real-world consumer workloads almost always give SSDs far more idle time than they need.
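A simple two-phase model illustrates how far apart those two extremes can be for a single large transfer. This is a sketch under stated assumptions: writes land in the SLC cache at Intel's "up to" 1800 MB/s rating until the cache is full, then continue at the roughly 100 MB/s we measured on the 1TB sample. It ignores background folding and any cache recovered during idle time, and the function name is hypothetical.

```python
# Simplified two-phase model (assumption): a single large sequential write
# lands in the SLC cache at cache_mb_s until the cache is full, then
# continues at the post-cache rate. Real drives also fold data from SLC to
# QLC in the background and may recover cache during idle time.
SPEC_CACHE_MB_S = 1800          # Intel's "up to" sequential write rating
MEASURED_POST_CACHE_MB_S = 100  # measured on the 1TB sample


def write_seconds(write_gb: float, cache_gb: float,
                  cache_mb_s: float = SPEC_CACHE_MB_S,
                  post_cache_mb_s: float = MEASURED_POST_CACHE_MB_S) -> float:
    """Time to write write_gb (decimal GB) given cache_gb of free SLC cache."""
    in_cache = min(write_gb, cache_gb)
    overflow = write_gb - in_cache
    return in_cache * 1000 / cache_mb_s + overflow * 1000 / post_cache_mb_s


if __name__ == "__main__":
    # Same 100 GB copy on a mostly empty vs. a full 1TB drive, using the
    # 140 GB maximum and 12 GB minimum cache sizes from the spec table.
    for label, cache in (("empty (140 GB cache)", 140),
                         ("full (12 GB cache)", 12)):
        secs = write_seconds(100, cache)
        print(f"{label}: {secs:.0f} s, average {100_000 / secs:.0f} MB/s")
```

Under these assumptions, the same 100GB transfer finishes in under a minute on an empty drive but takes roughly 15 minutes on a full one, which is why we report both sets of results.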

Intel's initial pricing for the 660p works out to just under 20¢/GB, putting it very close to the street prices of the cheapest current-generation TLC-based SSDs. The Intel SSD 660p goes on sale today, and is being showcased by Intel at Flash Memory Summit this week. In traveling to FMS, I left behind a testbed full of drives running extra benchmarks for this review. When I can catch a break from all the news and activities at FMS, I will be adding to this review.
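The "just under 20¢/GB" figure is easy to verify from the announced MSRPs. This sketch assumes decimal gigabytes (1TB = 1000GB), as drive capacities are marketed; the 2TB price had not been announced.

```python
# Price per GB for the announced MSRPs, using decimal gigabytes
# (1 TB = 1000 GB) as capacities are marketed. 2TB pricing was TBD.
MSRP_USD = {512: 99.99, 1000: 199.99}


def cents_per_gb(price_usd: float, capacity_gb: int) -> float:
    return price_usd * 100 / capacity_gb


if __name__ == "__main__":
    for cap, price in MSRP_USD.items():
        print(f"{cap} GB at ${price}: {cents_per_gb(price, cap):.3f} cents/GB")
```

Both models land slightly below 20¢/GB.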

The Competition

The Intel SSD 660p is positioned as a very cheap entry-level NVMe SSD, so our primary focus is on comparing it against other low-end NVMe drives and against SATA drives. As usual, the Crucial MX500 serves as our representative of mainstream SATA SSDs thanks to its consistently good pricing and solid all-around performance. The other low-end NVMe SSDs in this review are the 660p's predecessor, the Intel SSD 600p; the Phison E8-based Kingston A1000; and the Toshiba RC100, a DRAMless NVMe SSD that uses the Host Memory Buffer feature.

| AnandTech 2018 Consumer SSD Testbed | |
| --- | --- |
| CPU | Intel Xeon E3 1240 v5 |
| Motherboard | ASRock Fatal1ty E3V5 Performance Gaming/OC |
| Chipset | Intel C232 |
| Memory | 4x 8GB G.SKILL Ripjaws DDR4-2400 CL15 |
| Graphics | AMD Radeon HD 5450, 1920x1200@60Hz |
| Software | Windows 10 x64, version 1709 |
| | Linux kernel version 4.14, fio version 3.6 |
| | Spectre/Meltdown microcode and OS patches current as of May 2018 |

- Thanks to Intel for the Xeon E3 1240 v5 CPU

- Thanks to ASRock for the E3V5 Performance Gaming/OC

- Thanks to G.SKILL for the Ripjaws DDR4-2400 RAM

- Thanks to Corsair for the RM750 power supply, Carbide 200R case, and Hydro H60 CPU cooler

- Thanks to Quarch for the XLC Programmable Power Module and accessories

- Thanks to StarTech for providing an RK2236BKF 22U rack cabinet

86 Comments


limitedaccess - Tuesday, August 7, 2018

SSD reviewers need to look into testing data retention and related performance loss. Write endurance is misleading.

Ryan Smith - Tuesday, August 7, 2018

It's definitely a trust-but-verify situation, and is something we're going to be looking into for the 660p and other early QLC drives.

Besides the fact that we only had limited hands-on time with this drive ahead of the embargo and FMS, it's going to take a long time to test the drive's longevity. Even with 24/7 writing, with a sustained 100MB/sec write rate you're looking at only around 8TB written/day. Which means you're looking at weeks or months to exhaust the smallest drive.

eastcoast_pete - Tuesday, August 7, 2018

Hi Ryan and Billy, I second the questions by limitedaccess and npz, also on data retention in cold storage. Now, about Ryan's answer: I don't expect you guys to be able to torture every drive for months on end until it dies, but is there any way to first test the drive, then run continuous writes/rewrites for seven days non-stop, and then re-do some core tests to see if there are any signs or even hints of deterioration? The issue I have with most tests is that they are all done on virgin drives with zero hours on them, which is a best-case scenario. Any decent drive should be good as new after only 7 days (168 hours) of intensive read/write stress. If it's still as good as when you first tested it, I believe that would bode well for possible longevity. Conversely, if any drive shows even mild deterioration after only a week of intense use, I'd really like to know, so I can stay away.

Any chance for that or something similar?

JoeyJoJo123 - Tuesday, August 7, 2018

> and then re-do some core tests to see if there are any signs or even hints of deterioration?

That's not how solid state devices work. They're either working or they're not. And even if they're dead, that doesn't necessarily mean it was the NAND flash that deteriorated beyond repair; it could've been the controller or even the port the SSD was connected to that got hosed.

Literally testing a single drive says absolutely nothing at all about the expected lifespan of your single drive. This is why mass aggregate reliability ratings from people like Backblaze are important. They buy enough drives in bulk that they can actually average out the failure rates and get reasonable real-world reliability numbers for the drives used in hot, vibration-prone server rack environments.

Anandtech could test one drive and say "Well it worked when we first plugged it in, and when we rebooted, the review sample we got no longer worked. I guess it was a bad sample" or "Well, we stress tested it for 4 weeks under a constant mixed read/write load, and the SMART readings show that everything is absolutely perfect, so we can extrapolate that no drive of this particular series will _ever_ fail for any reason whatsoever until the heat death of the universe". Either way, both are completely anecdotal, neither is conclusive given a sample size of ONE drive, and the testing does nothing but possibly kill the storage drive off prematurely for the sake of idiots salivating over elusive real world endurance rating numbers when in reality IT REALLY DOESN'T MATTER TO YOU.

Are you a standard home consumer? Yes.

And you're considering purchasing this drive that's designed and marketed towards home consumers (ie: this is not a data center priced or marketed product)?: Yes.

Are you using it under normal home consumer workloads (ie: you're not reading/writing hundreds of MB/s 24/7 for years on end)? Yes.

Then you have nothing to worry about. If the drive dies, you call or email the manufacturer and get a warranty replacement. And chances are your drives will become useless because of ever faster and more spacious storage options long before they fail. I have a basically worthless 80GB SATA 2 (near first gen) SSD that's neither fast enough to really use as a boot drive nor spacious enough to be used anywhere else. If anything, the NAND on that early model should be dead, but it's not, and chances are the endurance ratings are highly pessimistic about when drives actually die, as seen in the Ars Technica report where Lee Hutchinson stressed SSDs 24/7 for ~18 months before they died.

eastcoast_pete - Tuesday, August 7, 2018

Firstly, thanks for calling me one of the "idiots salivating over elusive real world endurance rating numbers". I guess it takes one to know one, or think you found one. Second, I am quite aware of the need to have a sufficient sample size to make any inference to the real world. And third, I asked the question because this is new NAND tech (QLC), and I believe it doesn't hurt to put the test sample that the manufacturer sends through its paces for a while, because if that shows any sign of performance deterioration after a week or so of intense use, it doesn't bode well for the maturity of the tech and/or the in-house QC.

And, your last comment about your 80 GB near first gen drive shows your own ignorance. Most/maybe all of those early SSDs were SLC NAND, and came with large overprovisioning, and yes, they are very hard to kill. This new QLC technology is, well, new, so yes I would like to see some stress testing done, just to see if the assumption that it's all just fine holds, at least for the drive the manufacturer provided.

Oxford Guy - Tuesday, August 7, 2018

If a product ships with a defect that is shared by all of its kind then only one unit is needed to expose it.

mapesdhs - Wednesday, August 8, 2018

Proof by negation, good point. :)

Spunjji - Wednesday, August 8, 2018

That's a big if, though. If say 80% of them do and Anandtech gets the one that doesn't, then... 2nd gen OCZ Sandforce drives were well reviewed when they first came out.

Oxford Guy - Friday, August 10, 2018

> 2nd gen OCZ Sandforce drives were well reviewed when they first came out.

That's because OCZ pulled a bait and switch, switching from 32Gb NAND dies, which the controller was designed for, to 64Gb NAND dies. The 240 GB model with 64Gb NAND, in particular, had terrible bricking problems.

Beyond that, there should have been pressure on Sandforce's decision to brick SSDs "to protect their firmware IP" rather than putting users' data first. Even prior to the severe reliability problems being exposed, that should have been looked at. But, there is generally so much passivity and deference in the tech press.

Oxford Guy - Friday, August 10, 2018

This example shows why it's important for the tech press to not merely evaluate the stuff they're given but go out and get products later, after the initial review cycle. It's very interesting to see the stealth downgrades that happen.

The Lenovo S-10 netbook was praised by reviewers for having a matte screen. The matte screen, though, was later replaced by a cheaper-to-make glossy one. Did Lenovo call the machine with a glossy screen the S-11? Nope!

Sapphire, I just discovered, got lots of reviewer hype for its vapor chamber Vega cooler, only to replace those models with versions that lack it. The difference? The ones with the vapor chamber are, so conveniently, "limited edition". Yet people have found that the messaging about the difference has been far from clear, not just on Sapphire's website but also on some review sites. It's very convenient to pull this kind of bait and switch: send reviewers a better product, then sell customers something that seems exactly the same but is clearly inferior.