Power Within Reach: NVIDIA's GeForce 8800 GTS 320MB

by Derek Wilson on February 12, 2007 9:00 AM EST

Posted in GPUs

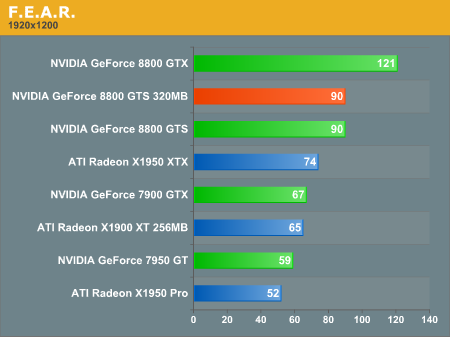

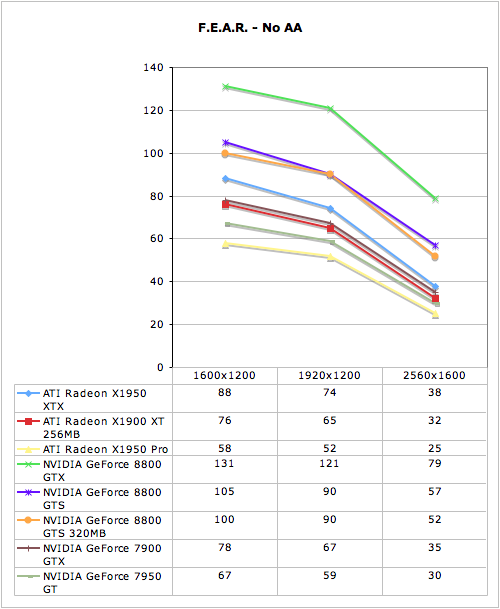

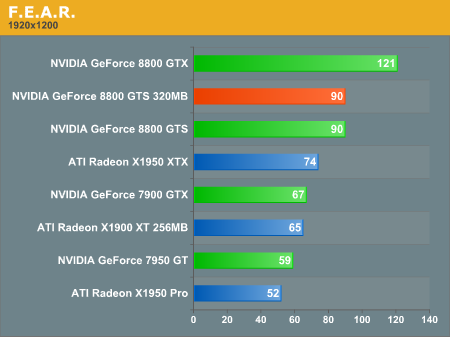

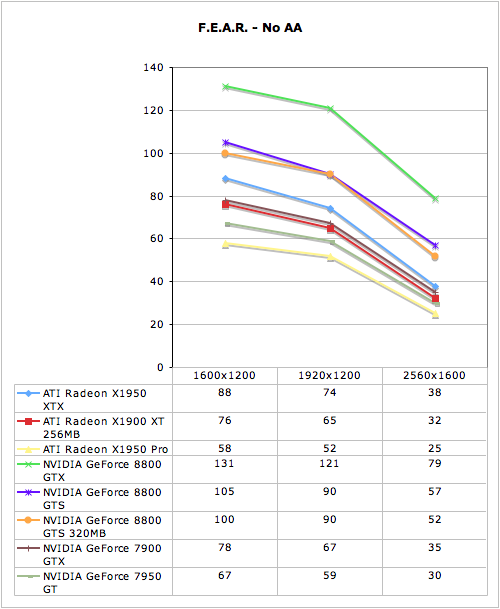

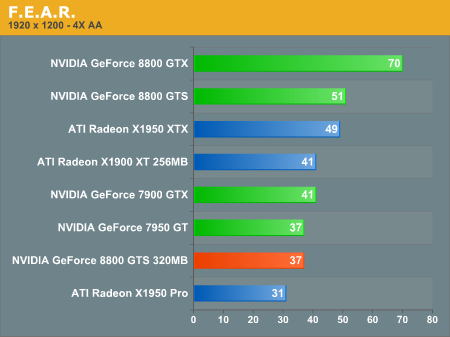

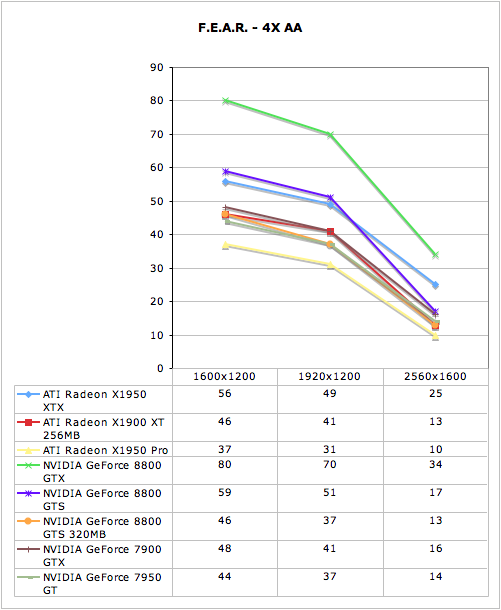

F.E.A.R. Performance

F.E.A.R. includes a built-in performance test that reports some useful statistics, including average framerate. We run this test with every option turned all the way up except Soft Shadows, which we disable because it incurs a very high performance penalty without delivering a good quality effect. Here we are using the 1.08 patch.

The 320MB 8800 GTS easily keeps up with the 640MB version under F.E.A.R. when 4xAA is not enabled. The two cards perform identically at 1920x1200, which shows that memory size doesn't make a difference here. Of course, as we've seen with other games, enabling AA increases memory usage and hurts performance in a big way on the smaller memory part.

The two GTS parts scale similarly here, but the 320MB part performs much worse even at 1600x1200. Only our 8800 GTX is really playable at 2560x1600, but at least the 8800 GTS 320MB makes the grade at 1920x1200. A playable score at a fairly widely used resolution is good news for the majority of gamers who don't own 30" monitors.
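To get a rough feel for why AA weighs more heavily on the smaller memory part, the sketch below estimates render-target memory at the tested resolutions. The per-sample sizes (4 bytes of color, 4 bytes of depth/stencil), the absence of compression, and the two single-sample display buffers are all assumptions made for illustration; they are not measurements of what the G80 driver actually allocates.

```python
# Rough estimate of render-target memory with and without multisample AA.
# Assumptions (not from the article): 4 bytes of color and 4 bytes of
# depth/stencil per sample, no compression, plus a front buffer and a
# resolved back buffer at 4 bytes per pixel each.

BYTES_COLOR = 4
BYTES_DEPTH = 4
MIB = 1024 * 1024


def render_target_mib(width, height, samples=1):
    """Return an approximate render-target footprint in MiB."""
    pixels = width * height
    # Multisampled color and depth surfaces store one value per sample.
    multisampled = pixels * samples * (BYTES_COLOR + BYTES_DEPTH)
    # Front buffer plus resolved back buffer, one sample per pixel.
    display = pixels * BYTES_COLOR * 2
    return (multisampled + display) / MIB


RESOLUTIONS = [(1600, 1200), (1920, 1200), (2560, 1600)]

if __name__ == "__main__":
    for w, h in RESOLUTIONS:
        no_aa = render_target_mib(w, h, samples=1)
        aa4 = render_target_mib(w, h, samples=4)
        print(f"{w}x{h}: {no_aa:6.1f} MiB without AA, {aa4:6.1f} MiB with 4xAA")
```

Under these assumptions, 4xAA at 2560x1600 needs on the order of 156 MiB for render targets alone, before any textures or geometry are loaded. Real drivers use compression and other tricks, so treat the numbers as an upper-bound illustration rather than a measurement.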

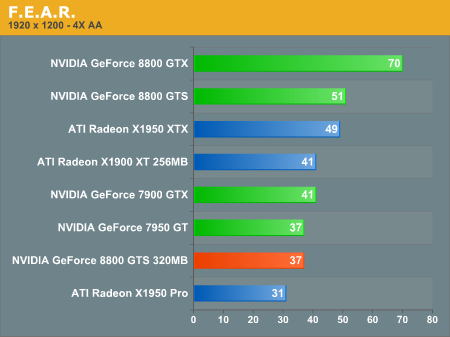

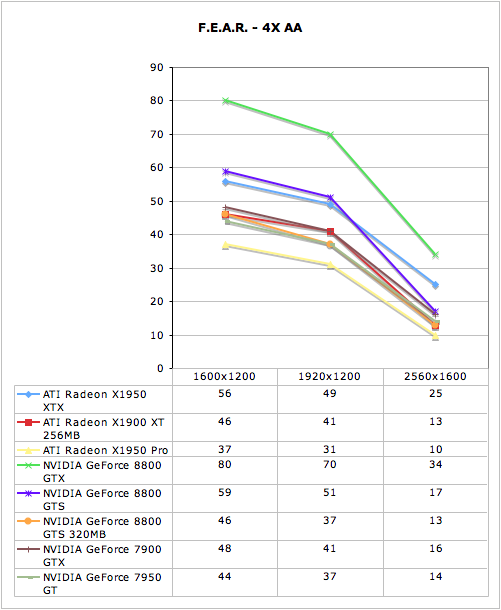

55 Comments

anandtech02148 - Sunday, February 18, 2007

what is the power consumption idle/load for this card? less memory matters right? 30buxs cooler, 200-300 buxs cpu, and an overheat graphic card more money for cooling.

Maroth - Wednesday, February 14, 2007

Article is very fine, but can additional benchmark make ? Between 8800GTS 640MB and 320MB (in Q4 or BF2):

1) in different PCI-Express mode (x16, x4, x1, PCI-Ex Disabled)

2) in different "PCI-Ex Texture Memory" setting (256MB, 128, 0MB)

(default used in test was 256MB ?)

3) in different "Texture Quality" in-game setting

What about "Frames Render Ahead" driver setting ? Default value (3) used in test was ?

Is these option performance-impact (on 8800GTS 320MB) ?

Webgod - Tuesday, February 13, 2007

Isn't it pointlessly taxing the video card with pixels so small?

It's been a while since I've tried anything at 1600x1200 on my 19" CRT, now I use a 26" LCD TV at 1360x768. Obviously AA is my friend at that rez, but higher on up, why not just crank the anisotropic filtering to 16x or 32x and run with it??

Isn't SLI becoming seriously less relevant once you've got a great framerate at 1600x1200 and above?

Who's going to run anything higher than 1900x1200, much less with forcing 4xAA??

MadAd - Thursday, February 15, 2007

I took some comparison screenshots in battlefield 2 the other week and 4x AA is definately worthwhile in 1600x1200 and 1920x1200 (full ansio, everything on high), 8x is better but not at the expense of the big performance hit- hopefully the next card I go to will get me 8x.

The change from none to 4 has more impact than 4 to 8 but its definately not pointless and the more the better IMO, who needs 999 fps when you can spend some of it ratcheting up the AA to look good.

Sunrise089 - Tuesday, February 13, 2007

As one of those in the ideal target audience of 22" widescreen displays (and I would have loved to see some 1680x1050 tests, since you guys say that's the ideal resolution) and on a reasonable budget, this card does seem interesting. (For now, I'll buy Jarred's explanation of driver issues with AA). One thing I would love to see are numbers comparing overclocked performance between max OC'd 8800GTXs and both GTS parts. Not only am I interested in general with seeing how close the GTSs can get to the GTX, but I'd also love to see whether or not the difference in memory affects the memory overclock on this new model.

VooDooAddict - Tuesday, February 13, 2007

While I agree that NEW systems will probably be pairing the 8800GTS 320MB with a 22" 1680x1050, there is a still a large % of people very happy with their 19" 1280x1024 displays. There are also people that might save a little on the display and get a 19" WS 1440x900 in favor of more RAM or better video.

The large screen segment is also interested in 1280x1024. There are plenty of people running Large 27"-32" LCD TVs as gaming monitors. these have usable 1280x720 or 1280x768 wide screen modes for most games. Also the 30" LCD crowd likes to see 1280x1024 to get an idea of how a nicely scaled 1280x800 will run. On a 30" LCD, I want World of Warcraft to run smooth at 2560x1600 with some AF and light AA, but am happy to get smooth Oblivion at 1280x768.

The bottom line is there are too many people looking for 1280x1024 to ignore it. ESPECIALLY with any video card products focused on price. Ignoring 1280x1024 in an 8800GTX SLI review ... I don't think anyone would fault you much there.

I really think you need to go back and run 1280x1024.

Also what is up with Quake4 "ULTRA", from what I remember with Doom3 and Quake4 ULTRA mode was specifically for cards with 512MB or more of video RAM due to the uncompressed textures. Is there any difference on Non-Ultra?

Any video card review dealing with memory size needs a mention of MMOs. I can't tell you how many times I've heard people think they need to spend more $$ on a card with more video RAM for an MMO. Until I ran some benches of my own I had also convinced myself that 512MB video RAM would be better for MMOs.

chizow - Monday, February 12, 2007

Derek, great review as usual. Noticed you said you were going to take a closer look at the 320MB's poor performance at high resolutions, especially considering how the 256MB X1900 parts performed better with AA in some instances.

Earlier today a Polish review was linked on AT here (http://www.in4.pl/recenzje.htm?rec_id=388&rect...). What's interesting to note is they used some utility (someone said RivaTuner) to track memory usage at each resolution. If you go through the benchmarks, they all tell the same story. System memory/page file is getting slammed on the 320MB GTS at higher resolutions/AA settings.

I'm no video card/driver expert but I'm thinking a simple driver optimization could improve the 320 GTS performance dramatically. It looks like the 8-series driver isn't correctly handling the 320 GTS' lower local memory limitations and handling its memory like a 640 or 768 GTS/GTX, so the additional requirements are being dumped into system memory, drastically decreasing performance. Considering the 256MB parts are handling high resolution/AA settings better, maybe an optimization limiting memory to local and faster caching/clearing of the local frame buffer would be the fix?

Just a thought and maybe something to pass along to nVidia.

kilkennycat - Monday, February 12, 2007

Again, NVidia accomplishes a genuine hard launch..........Seems as if AMD/Ati will have a lot to live up to with their Dx10 graphics-card releases.

See:-

http://www.newegg.com/Product/ProductList.asp?DEPA...

And comparison-sampling from the above list:-

The EVGA Superclocked 576/1700 8800GTS/640 is $379.99 ( with Dark Messiah and after a $30 rebate thru 3/31)

and for comparison the

EVGA (superclocked) 576/1700 8800GTS/320 is $319.99 with same bundle but no $30 rebate.

LoneWolf15 - Monday, February 12, 2007

At the same time, the inconsistency in G80 driver quality is something to be noted when buying a G80 (note: I own an 8800GTS, and can speak to this).

This inconsistency is something that seems to have been largely glossed over by hardware review sites, so a number of purchasers have had some disappointments from games with texture corruption or that weren't/aren't well supported. nVidia's slowness in making their cards as "Vista Ready" as they advertised them to be is something to consider too.

Don't get me wrong, I like my card. I just think that when looking at ATI vs. nVidia, one needs to look objectively. I've owned 7 ATI-GPU cards and 7 nVidia-GPU cards (not including all the other ones) since 1992, and both have had their ups and their downs.

kilkennycat - Monday, February 12, 2007

Remember that the 8800 is a brand-new architecture. Like to exchange your 8800 for my 7800GTX ? You are suffering the pain of being an early-adopter. ATi/AMD still has to go through the same pain with the R600, including having to handle the new driver interfaces in Vista, plus juggle the actual drivers themselves for both DX10 and DX9.