DX10 for the Masses: NVIDIA 8600 and 8500 Series Launch

by Derek Wilson on April 17, 2007 9:00 AM EST - Posted in GPUs

The Elder Scrolls IV: Oblivion Performance

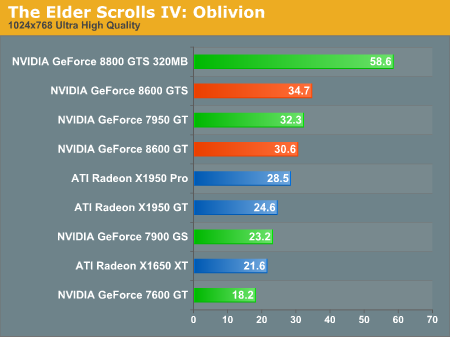

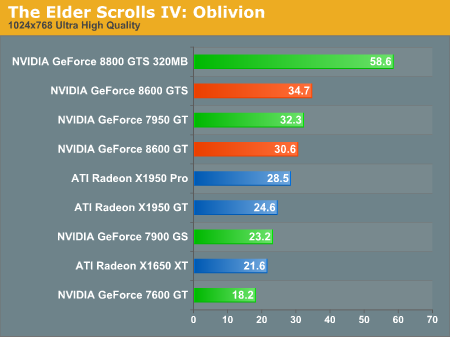

One of the more important tests we have in our arsenal is Oblivion. This game really pushes the limits of hardware with beautiful scenery and HDR effects. While the 8800 GTS 320MB owns this benchmark, the 8600 GTS comes in second, well above both the nearest AMD competitor and the current $200 NVIDIA part it is replacing, the 7950 GT. Even the 8600 GT comes in ahead of most of the pack.

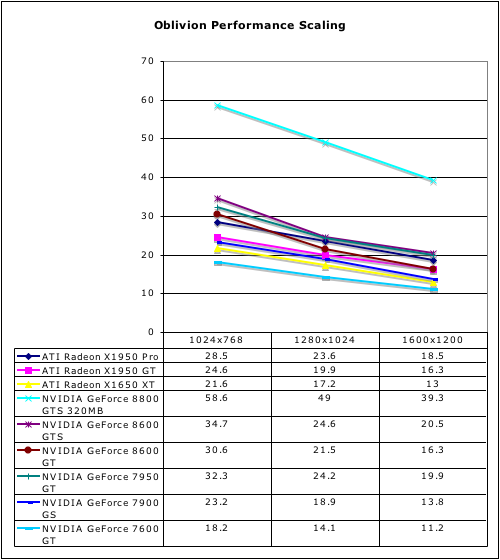

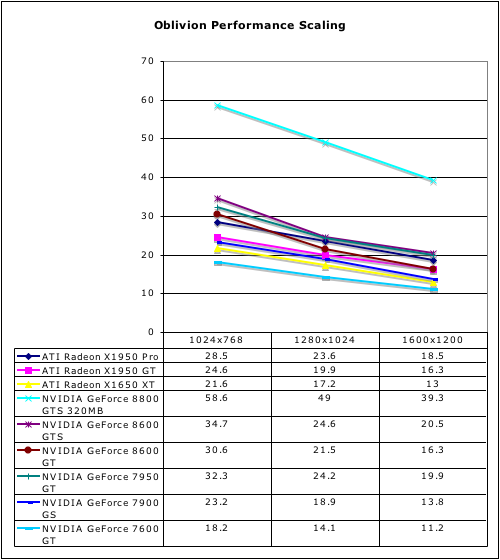

There is certainly an affinity between Oblivion and the GeForce 8 series, and if this is any indication of the type of games on the horizon, NVIDIA is in a good place. Unfortunately, we can't know whether this will be typical of future games; most likely, it will be true of some and not others. It's all really up to the game developers at this point.

60 Comments

deathwalker - Wednesday, April 18, 2007 - link

So, what's the word on the Ultra version of the 8600? Has that fallen to the wayside?

crystal clear - Wednesday, April 18, 2007 - link

Interview: NVIDIA's Keita Iida

The future of Direct X, Crysis and PS3 under the spotlight.

Keita Iida, Director of Content Management at NVIDIA sat down with IGN AU to discuss all things Direct X 10 and the evolution of their Geforce graphics cards. Iida goes into detail on the differences between developing for the PS3's RSX graphics processor, and the latest development tools to hit the scene.

quote:

Selected portions of the interview-

IGN AU: What are your thoughts on Microsoft effectively forcing gamers to upgrade to Vista in order to run Direct X 10 - when there's no real reason why it can't run on Windows XP?

Keita Iida: It's a business and marketing decision.

IGN AU: Can you comment on what happened with NVIDIA's Vista drivers? You guys have had access to Vista for years to build drivers and at the launch of Vista there were no drivers. The ones that are out now are still basically crippled. Why did this happen?

Keita Iida: On a high level, we had to prioritise. In our case, we have DX9, DX10, multiple APIs, Vista and XP - the driver models are completely different, and the DX9 and 10 drivers are completely different. Then you have single- and multi-card SLI - there are many variables to consider. Given that we were so far ahead with DX10 hardware, we've had to make sure that the drivers, although not necessarily available to a wide degree, or not stable, were good enough from a development standpoint.

If you compare our situation to our competitor's, we have double the variables to consider when we write the drivers; they have much more time to optimise and make sure their drivers work well on their DX10 hardware when it comes out. We've had to balance our priorities between making sure we have proper DX10 feature-supported drivers to facilitate development of DX10 content, but also make sure that the end user will have a good experience on Vista. To some degree, I think that we may have underestimated how many resources were necessary to have a stable Vista driver off the bat. I can assure you and your readers that our first priority right now is not performance, not anything else; it's stability and all the features supported on Vista.

IGN AU: So what kind of timeline are we looking at until the end user can be comfortable with Vista drivers? With DX9 drivers that work as stably and quickly as they do with XP?

Keita Iida: We're ramping up the frequency of our Vista driver releases. Users will probably understand that we release a number of beta drivers on our site, so we're making incremental progress. We believe that, in a very short time we will have addressed the vast majority, if not all of the issues. We've had teams who were working on other projects who have mobilised to make sure that as quickly as possible we have the drivers fixed. I'm not going to give you an exact timeframe, but it's going to be very soon. We're disappointed that we couldn't do it right off the bat, but we hear what everyone is saying and we're willing to fix it.

http://pc.ign.com/articles/780/780314p1.html

xpose - Tuesday, April 17, 2007 - link

This next gen purevideo stuff sounds amazing. I thought I was gonna have to get a new motherboard and dual core cpu to play some HD-DVD content smoothly. Please, do try and rush testing the purevideo stuff ASAP. Blu-ray and hd-dvd is growing. . .

shabby - Tuesday, April 17, 2007 - link

128bit/256meg for $200 bucks? Gimme a break.

Sunrise089 - Tuesday, April 17, 2007 - link

Unless these cards are magically fast under DX10 (and we all know they won't be, they will play Crysis, but not quickly) they offer less performance than even midrange parts from the last gen.

Anyone remember how a 6600GT offered 9800pro-beating performance, and how nVidia sold millions of them? I don't see that happening here. What I do see is a wait-and-see attitude. Does anyone else think it's VERY suspicious that there are no 64 shader cards? Here is what may happen: nVidia waits for the midrange AMD cards to emerge. If they offer better performance, nVidia slashes prices of these and releases a 8800GS with 64 shaders for $200. I won't be surprised at all if that's what we have in 3 months.

JarredWalton - Tuesday, April 17, 2007 - link

We've got 128 SP on the GTX, 96 on the GTS... and then 32 on the G84. I'd say there's definitely room for 64 SP from NVIDIA, and possibly 48 SP as well. Will they go that route, though? Unless they've already been working on it, doing a new chip will cost quite a bit of time and effort. I was expecting 8600 to be 64 SP and 8300 to be 32 SP before we had any details, but then the 8600 probably would have been too close to the 8800.

kilkennycat - Tuesday, April 17, 2007 - link

Er, wait (not too long) for nVidia's re-roll of the 8xxx-series on 65nm... You might just get your wish. I believe that nV is copying Intel's 'tick-tock' process strategy - architecture and go to production on a mature process (80nm half-node), then transfer and refine the implementation on the new process. Note the interesting and important tweaks in the implementation of the 8600 vs 8800... which gives a glimpse of the future 65nm 9xxx(??)-family architecture but with higher numbers of stream-processors and high-precision math processing for the expected GPGPU applications.

nVidia has already hinted that the successor to the 8800 will be available before the end of 2007, and no doubt will be on 65nm for the obvious cost and yield reasons. If the R600 turns out to be a true contender for the 8800 "crown" in the same price-range, then I fully expect nV to accelerate the appearance of the 8800 successor. No doubt the design was started long before the 8800 itself was production-available.

Toebot - Tuesday, April 17, 2007 - link

No, nothing to sneeze at, just something to blow my nose on! Utter wretch. This card is NVidia's attempt to milk the Vista market, nothing more.

DerekWilson - Tuesday, April 17, 2007 - link

We should at least wait and see what DX10 performance looks like first.

AdamK47 - Tuesday, April 17, 2007 - link

With what software?