SanDisk X300s (512GB) Review

by Kristian Vättö on August 21, 2014 2:15 PM EST

AnandTech Storage Bench 2013

Our Storage Bench 2013 focuses on worst-case multitasking and IO consistency. Similar to our earlier Storage Benches, the test is still application trace based – we record all IO requests made to a test system and play them back on the drive we are testing and run statistical analysis on the drive's responses. There are 49.8 million IO operations in total with 1583.0GB of reads and 875.6GB of writes. I'm not including the full description of the test for better readability, so make sure to read our Storage Bench 2013 introduction for the full details.

AnandTech Storage Bench 2013 - The Destroyer

| Workload | Description | Applications Used |
| --- | --- | --- |
| Photo Sync/Editing | Import images, edit, export | Adobe Photoshop CS6, Adobe Lightroom 4, Dropbox |
| Gaming | Download/install games, play games | Steam, Deus Ex, Skyrim, Starcraft 2, BioShock Infinite |
| Virtualization | Run/manage VM, use general apps inside VM | VirtualBox |
| General Productivity | Browse the web, manage local email, copy files, encrypt/decrypt files, backup system, download content, virus/malware scan | Chrome, IE10, Outlook, Windows 8, AxCrypt, uTorrent, AdAware |
| Video Playback | Copy and watch movies | Windows 8 |
| Application Development | Compile projects, check out code, download code samples | Visual Studio 2012 |

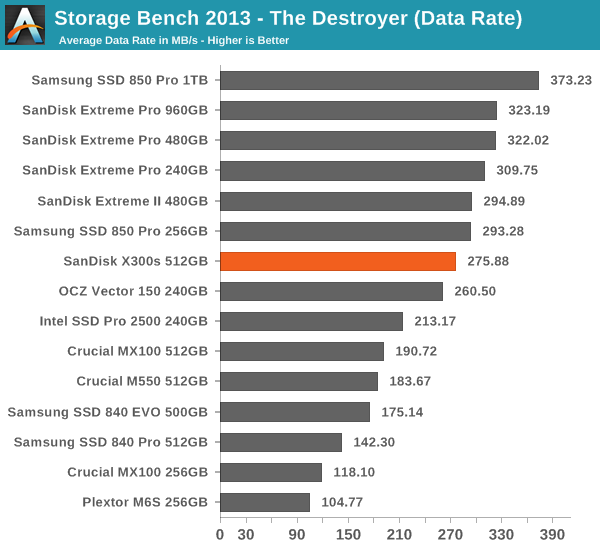

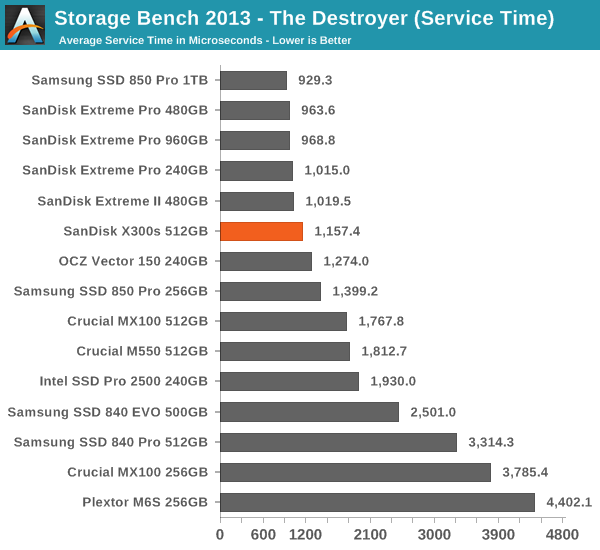

We are reporting two primary metrics with the Destroyer: average data rate in MB/s and average service time in microseconds. The former gives you an idea of the throughput of the drive during the time that it was running the test workload, which can be a very good indication of overall performance. What average data rate doesn't do well is account for the response time of very bursty (read: high queue depth) IO. By reporting average service time we heavily weight latency for queued IOs. You'll note that this is a metric we have been reporting in our enterprise benchmarks for a while now. With the client tests maturing, the time was right for a little convergence.
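To make the two metrics concrete, here is a minimal Python sketch of how they could be computed from a played-back trace. The `IORecord` type, its field names, and the exact formulas are assumptions for illustration only; the article does not spell out how the Storage Bench tooling calculates either number. The sketch takes data rate as total bytes divided by the wall-clock span of the playback, and service time as the per-IO gap between issue and completion.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class IORecord:
    """One completed IO from a trace playback (hypothetical layout)."""
    issue_us: float     # when the request was issued, in microseconds
    complete_us: float  # when the drive completed it, in microseconds
    size_bytes: int     # bytes transferred by this request


def average_data_rate_mbps(records: Sequence[IORecord]) -> float:
    """Total bytes moved divided by the wall-clock span, in MB/s (1 MB = 10^6 bytes)."""
    start_us = min(r.issue_us for r in records)
    end_us = max(r.complete_us for r in records)
    total_bytes = sum(r.size_bytes for r in records)
    elapsed_s = (end_us - start_us) / 1e6
    return total_bytes / 1e6 / elapsed_s


def average_service_time_us(records: Sequence[IORecord]) -> float:
    """Mean time between issue and completion across all IOs, in microseconds."""
    return sum(r.complete_us - r.issue_us for r in records) / len(records)
```

In this model an IO that sits in a deep queue has a long issue-to-completion gap, so bursts of queued requests pull the average service time up even when the overall data rate barely changes.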

Due to the lower over-provisioning, the X300s ends up being slightly slower than the Extreme Pro and Extreme II. Still, the X300s is a solid performer and faster than e.g. Intel's SSD Pro 2500, which is Intel's offering to the business market. Ultimately the 850 Pro is the fastest SED on the market but the X300s provides a more complete set of features with the inclusion of Wave's EMBASSY Security Control.

34 Comments


vaayu64 - Thursday, August 21, 2014 - link

Thanks for the review, as always =). If you have the opportunity to meet with SanDisk, can you please ask if there will be an mSATA version of their Extreme or Ultra SSD?

vaayu64 - Thursday, August 21, 2014 - link

Another question, does this X300 provide power loss protection?

Regards

hojnikb - Thursday, August 21, 2014 - link

Looking at the PCB it appears not.

Samus - Thursday, August 21, 2014 - link

That's too bad since it clearly has a piece of (undisclosed capacity) memory on the PCB. Looks to be a 128MB DDR2 chip. I wonder if any user data is stored in there or if it truly caches only the indirection table?

Kristian Vättö - Thursday, August 21, 2014 - link

The X300s does not have capacitors to provide power-loss protection as that is generally an enterprise-only feature. SanDisk does have a good white paper about their power-loss protection techniques, though.

http://www.sandisk.com/assets/docs/unexpected_powe...

Samus - Friday, August 22, 2014 - link

Enterprise-only feature? Many mainstream drives have capacitors dating back to the Intel SSD 320 (X25-M v3).

Some of the cheapest SSDs on the market have capacitors (Crucial MX100) so it's inconceivable to leave them out in 2014.

Kristian Vättö - Friday, August 22, 2014 - link

"Many mainstream drives have capacitors dating back to the Intel SSD 320 (X25-M v3)"

There are only a handful of client-grade drives that provide power loss protection in the form of capacitors (Crucial M500, M550 & MX100, Intel SSD 730 & SSD 320 are the only ones I can remember).

The SSD 320 was never strictly a client drive as Intel also targeted it towards the entry-level enterprise market, hence the power loss protection. The SSD 730, on the other hand, is derived from the DC S3500/S3700, so it is basically a client tuned enterprise drive.

The power loss protection in the MX100 and Crucial's other client drives is not as perfect as their marketing makes you think. Crucial only guarantees that the capacitors provide enough power to save the NAND mapping table, which means user data is vulnerable to data loss. That is why the M500DC uses different capacitors: the ones in the client drives do not provide enough power to save all writes in progress.

SanDisk's approach is to use nCache (i.e. an SLC portion) to flush the NAND mapping table from the DRAM more often. The lower write latency that SLC has ensures that in case of a power loss, the data loss is minimal but it is true that some data may be lost. Crucial/Micron operates all NAND as MLC, which is why they need the capacitors to make sure that the NAND mapping table is safe.

hojnikb - Friday, August 22, 2014 - link

On the subject of mapping tables: how do controllers like SandForce (and some Marvell implementations) work without DRAM? Do they dedicate a portion of flash for that, and how do they keep track of that portion's activity (e.g. block wear)?

Also, since some of the manufacturers use pseudo-SLC (i.e. MLC/TLC acting as SLC), how is the endurance of those cells affected? Can the SLC portion last longer than normal MLC/TLC?

Kristian Vättö - Friday, August 22, 2014 - link

The controller designs that don't utilize DRAM use the internal SRAM cache in the controller to cache the NAND mapping table. It just requires a different mapping table design since SRAM caches are much smaller than DRAM. Ultimately the mapping table is still stored in NAND, though.

Pseudo-SLC can definitely last longer than MLC/TLC. With only one bit per cell, there is much more voltage headroom as there are only two voltage states.

hojnikb - Friday, August 22, 2014 - link

So really, MLC/TLC and SLC dies do not differ much internally. I'm guessing that real SLC just uses less on-die error correction than MLC, but the cells shouldn't be that different at all. The same, I suppose, goes for TLC as well.

If this is the case, it raises an interesting question: if one were to buy an MLC drive and wanted SLC-grade endurance, one could (if access to the firmware was available) tweak the firmware so that the whole drive would act as pSLC, obviously at a cost of capacity. Something like nCache 2.0, but expanded to the whole capacity.

I believe some cheap flash drive controllers offered something like that using their MPtools. I remember messing around with a cheap TLC-based flash drive; once done, I ended up with 1/3 of the capacity, but write speeds increased dramatically.
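The arithmetic behind the points above about voltage states and the 1/3 capacity figure can be sketched in a few lines of Python. This is an illustrative model only: the function names are made up for this example, and it ignores over-provisioning, spare blocks, and anything else a real firmware would reserve.

```python
def states_per_cell(bits_per_cell: int) -> int:
    """Number of distinct voltage states a cell must hold to store n bits."""
    if bits_per_cell < 1:
        raise ValueError("bits_per_cell must be at least 1")
    return 2 ** bits_per_cell


def pslc_capacity_gb(native_capacity_gb: float, bits_per_cell: int) -> float:
    """Capacity left when every cell stores one bit instead of bits_per_cell bits."""
    if bits_per_cell < 1:
        raise ValueError("bits_per_cell must be at least 1")
    return native_capacity_gb / bits_per_cell


# SLC, MLC, and TLC store 1, 2, and 3 bits per cell respectively.
for name, bits in (("SLC", 1), ("MLC", 2), ("TLC", 3)):
    print(name, states_per_cell(bits), "voltage states")

# A hypothetical 24 GB TLC flash drive run entirely as pseudo-SLC.
print(pslc_capacity_gb(24, 3), "GB usable as pSLC")
```

Running TLC cells in one-bit mode drops the required states from eight to two, which is where the extra voltage headroom comes from, and leaves one third of the native capacity, matching the flash drive experiment described above.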