Why NVIDIA Is Focused On Geometry

Up until now we haven’t talked a great deal about the performance of GF100, and to some extent we still can’t. We don’t know the final clock speeds of the shipping cards, so we don’t know exactly what the card will be like. But what we can talk about is why NVIDIA made the decisions they did: why they went for the parallel PolyMorph and Raster Engines.

The DX11 specification doesn’t leave NVIDIA with a ton of room to add new features. Without capability bits (capsbits), NVIDIA can’t put new features on their hardware and easily expose them, nor would they want to, at the risk of having those features (and hence die space) go unused. DX11 rigidly says what features a compliant video card should offer, and leaves very little room to deviate.

So NVIDIA has taken a bit of a gamble. There’s no single wonder-feature in the hardware that immediately makes it stand out from AMD’s hardware – NVIDIA has post-rendering features such as 3D Vision or compute features such as PhysX, but when it comes to rendering they can only do what AMD does.

But the DX11 specification doesn’t say how quickly you have to do it.

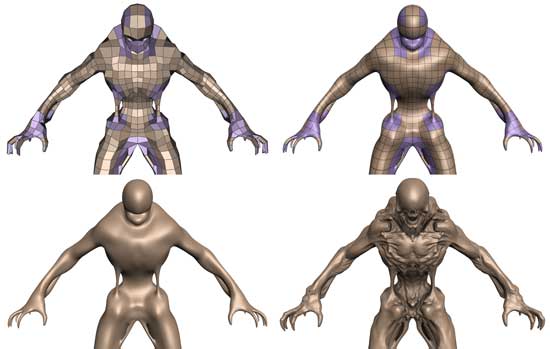

Tessellation in action

To differentiate themselves from AMD, NVIDIA is taking the tessellator and driving it for all it’s worth. While AMD merely has a tessellator, NVIDIA is counting on the tessellator in their PolyMorph Engine to give them a noticeable graphical advantage over AMD.

To put things in perspective, between NV30 (GeForce FX 5800) and GT200 (GeForce GTX 280), the geometry performance of NVIDIA’s hardware increased only roughly 3x, while the shader performance of their cards increased by over 150x. GF100 in turn has 8x the geometry performance of GT200, and NVIDIA tells us this is something they have measured in their labs.
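To make the scale of that imbalance concrete, here is a minimal sketch of the arithmetic using only the figures quoted above. The variable names are ours, and multiplying the two geometry figures together assumes they were measured on comparable workloads, which NVIDIA has not confirmed.

```python
# Figures quoted in the article (NVIDIA's claims, not our measurements).
geometry_gain_nv30_to_gt200 = 3    # "roughly 3x"
shader_gain_nv30_to_gt200 = 150    # "over 150x", treated here as a lower bound
geometry_gain_gt200_to_gf100 = 8   # "8x the geometry performance of GT200"

# How much faster shader throughput grew than geometry throughput
# between NV30 and GT200.
shader_to_geometry_gap = shader_gain_nv30_to_gt200 / geometry_gain_nv30_to_gt200

# Cumulative geometry gain from NV30 to GF100, ASSUMING the two
# figures can simply be multiplied together.
cumulative_geometry_gain = (
    geometry_gain_nv30_to_gt200 * geometry_gain_gt200_to_gf100
)

print(f"Shader growth outpaced geometry growth by {shader_to_geometry_gap:.0f}x")
print(f"NV30 to GF100 geometry gain: {cumulative_geometry_gain}x")
```

Even taking the 8x claim at face value, geometry throughput would still have grown far less than shader throughput did over the same span of generations.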

So why does NVIDIA want so much geometry performance? Because tessellation allows them to take the same assets from the same games as AMD and generate something that will look better. With more geometry power, NVIDIA can use tessellation and displacement mapping to generate more complex characters, objects, and scenery than AMD can at the same level of performance. And this is why NVIDIA has 16 PolyMorph Engines and 4 Raster Engines: they need a lot of hardware to generate and process that much geometry.

NVIDIA believes their strategy will work, and if geometry performance is as good as they say it is, then we can see why they feel this way. Game art is usually created at far higher levels of detail than what eventually ends up being shipped, and with tessellation there’s no reason why a tessellated and displacement mapped representation of that high quality art can’t come with the game. Developers can use tessellation to scale down to whatever the hardware can do, and in NVIDIA’s world they won’t have to scale it down very far to meet up with the GF100.
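The idea of scaling tessellation to the hardware can be sketched in a few lines. This is a simplified 1D illustration rather than how a DX11 hull/domain shader pipeline is actually written: the function names, the vertex budget, and the `bumpy` displacement function are all hypothetical, and the cap of 64 mirrors the maximum tessellation factor in Direct3D 11.

```python
import math

def tessellate(control_xs, factor):
    """Split each segment between neighbouring control points into `factor` equal pieces."""
    xs = []
    for a, b in zip(control_xs, control_xs[1:]):
        for i in range(factor):
            xs.append(a + (b - a) * i / factor)
    xs.append(control_xs[-1])  # close the final segment
    return xs

def displace(xs, height_map):
    """Offset each generated vertex by a height sampled from the displacement map."""
    return [(x, height_map(x)) for x in xs]

def pick_factor(vertex_budget, segment_count, max_factor=64):
    """Largest factor whose vertex count (segments * factor + 1) fits the budget."""
    factor = (vertex_budget - 1) // segment_count
    return max(1, min(max_factor, factor))

def bumpy(x):
    # Hypothetical stand-in for a displacement map shipped with the art.
    return 0.1 * math.sin(8.0 * x)

# The same coarse asset, refined differently depending on what the
# hardware can afford (the budgets are made-up numbers).
control_xs = [0.0, 1.0, 2.0]
for budget in (3, 9, 129):
    factor = pick_factor(budget, len(control_xs) - 1)
    surface = displace(tessellate(control_xs, factor), bumpy)
    print(f"budget={budget} factor={factor} vertices={len(surface)}")
```

The shipped asset (the control points plus the displacement map) stays the same in every case; only the tessellation factor changes, which is why faster geometry hardware can show more of the original detail without any extra art.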

At this point tessellation is a message that’s much more for developers than it is for consumers. As DX11 is required to take advantage of tessellation, only a few games using it exist so far, though plenty more are on the way. NVIDIA needs to convince developers to ship their art with detailed enough displacement maps to match GF100’s capabilities, and while that isn’t too hard, it’s also not a walk in the park. To that end they’re also extolling the other virtues of tessellation, such as the ability to do higher quality animations by only needing to animate the control points of a model, and letting tessellation take care of the rest. A lot of the success of the GF100 architecture is going to ride on how developers respond to this, so it’s going to be something worth keeping an eye on.

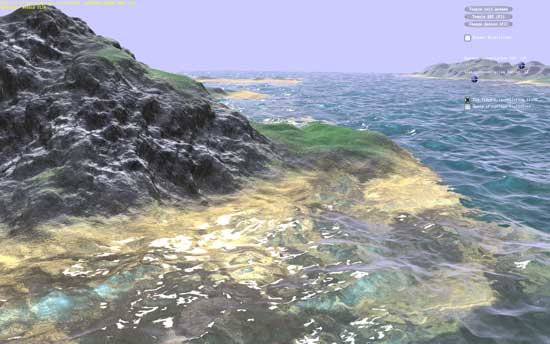

NVIDIA's water tessellation demo

NVIDIA's hair tessellation demo

115 Comments


dentatus - Monday, January 18, 2010 - link

Absolutely. Really, the GT200/RV700 generation of DX10 cards was inarguably 'won' (i.e. most profitable) for AMD/ATI by cards like the HD4850. But the overall performance crown (i.e. highest in-generation performance) was won off the back of the GTX295 for nvidia.

But I agree with chizow that nvidia has ultimately been "winning" (the performance crown) each generation since the G80.

chizow - Monday, January 18, 2010 - link

Not sure how you can claim AMD "inarguably" won DX10 with 4850 using profits as a metric. How many times did AMD turn a profit since RV770 launched? Zero. They've posted 12 straight quarters of losses last time I checked. Nvidia otoh has turned a profit in many of those quarters and most recently Q3 09 despite not having the fastest GPU on the market.

Also, the fundamental problem people don't seem to understand with regard to AMD and Nvidia die size and product distribution is that they overlap completely different market segments. Again, this simply serves as a referendum in the differences in their business models. You may also notice these differences are pretty similar to what AMD sees from Intel on the CPU side of things....

Nvidia GT200 die go into all high-end and mainstream parts like GTX 295, 285, 275, 260 that sell for much higher prices. AMD RV770 die went into 4870, 4850, and 4830. The latter two parts were competing with Nvidia's much cheaper and smaller G92 and G96 parts. You can clearly see that the comparison between die/wafer sizes isn't a valid one.

AMD has learned from this btw, and this time around it looks like they're using different die for their top tier parts (Cypress) and their lower tier parts (Redwood, Cedar) so that they don't have to sell their high-end die at mainstream prices.

Stas - Tuesday, January 19, 2010 - link

[quote]Not sure how you can claim AMD "inarguably" won DX10 with 4850 using profits as a metric. How many times did AMD turn a profit since RV770 launched? Zero. They've posted 12 straight quarters of losses last time I checked. Nvidia otoh has turned a profit in many of those quarters and most recently Q3 09 despite not having the fastest GPU on the market. [/quote]

AMD also makes CPUs... they also lost market due to Intel's high end domination... they lost money on ATI... If it wasn't for success of the HD4000 series, AMD would've been in deep shit. Just think before you post.

Calin - Tuesday, January 19, 2010 - link

Hard to make a profit paying the rates of a 5 billion credit - but if you want to take it this way (total profits), why wouldn't we take total income?

AMD/ATI:

| PERIOD ENDING | 26-Sep-09 | 27-Jun-09 | 28-Mar-09 | 27-Dec-08 |
| --- | --- | --- | --- | --- |
| Total Revenue | 1,396,000 | 1,184,000 | 1,177,000 | 1,227,000 |
| Cost of Revenue | 811,000 | 743,000 | 666,000 | 1,112,000 |
| Gross Profit | 585,000 | 441,000 | 511,000 | 115,000 |

NVidia:

| PERIOD ENDING | 25-Oct-09 | 26-Jul-09 | 26-Apr-09 | 25-Jan-09 |
| --- | --- | --- | --- | --- |
| Total Revenue | 903,206 | 776,520 | 664,231 | 481,140 |
| Cost of Revenue | 511,423 | 619,797 | 474,535 | 339,474 |
| Gross Profit | 391,783 | 156,723 | 189,696 | 141,666 |

Not looking so good for the "winner of the generation", though. As for the die size and product distribution, all I'm looking at is the retail video card offer, and every price bracket I choose has both NVidia and AMD in it.

knutjb - Wednesday, January 20, 2010 - link

You missed my point. I wasn't talking about AMD as a whole, I was talking about ATI as a division within AMD. If a company bleeds that much and still survives some part of the company must be making some money and that is the ATI division. ATI is making money. Your macro numbers mean zip.

The model ATI is using is putting out competitive cards from a company, AMD, that is bleeding badly. What generation card is easier to sell, the new and improved one with more features, useful or not, or the last generation chip?

beck2448 - Tuesday, January 19, 2010 - link

Those numbers are ludicrous. AMD hasn't made a profit in years. ATI's revenue is about 30% of Nvidia's.

knutjb - Monday, January 18, 2010 - link

ATI is what has been floating AMD with its profits. ATI has decided to make smaller incremental developmental steps that lower end production costs.

Nvidia takes a long time to create a monolithic monster that required massive amounts of capital to develop. They will not recoup this investment off gamers alone because most don't have that much cash to put one of those cards in their machines. It is needed for marketing so they can push lower level cards implying superiority, real or not, they are a heavy marketing company. This chip is directed at their GPU server market and that is where they hope to make their money hoping it can do both really well.

ATI on the other hand, by making smaller steps but at a higher cycle of product development, has focused on the performance/mainstream market. With lower development costs they can turn out new cards that pay back development costs quicker, allowing them to put that capital back into new products. Look at the 4890 and 4870. They both share similar architecture but the 4890 is a more refined chip. It was a product that allowed ATI to keep Nvidia reacting to ATI's products.

Nvidia's marketing requires them to have the fastest card on the market. ATI isn't trying to keep the absolute performance crown but hold onto the price/performance crown. Every time they put out a slightly faster card it forces Nvidia to respond. Nvidia receives lower profits from having to drop card prices. I don't think this chip will be able to function on the 8800 model because AMD/ATI is now on stronger financial footing than they have been in the past couple years and Nvidia being late to market is helping ATI line their pockets with cash. The 5000 series is just marginally better, but is better than Nvidia's current offerings.

Will Nvidia release just a single high end card or several tiers of cards to compete across the board? I don't think one card will really help the bottom line over the longer term.

StormyParis - Monday, January 18, 2010 - link

I'm not sure what "winning" means, nor, really what a generation is.

you can win on highest performance, highest marketshare, highest profit, best engineering...

a generation may also be a DirectX iteration, a chip release cycle (in which case, each manufacturer has its own), a fiscal year...

Anyhoo, I don't really care, as long as i'm regularly getting better, cheaper cards. I'll happily switch back to nVidia

chizow - Monday, January 18, 2010 - link

I clearly defined what I considered a generation, historically the rest of the metrics measured over time (market share, mind share, profits, value-add features, game support) tend to follow suit.

For someone like you that doesn't care about who's winning a generation it should be simple enough, buy whatever is best that suits your price:performance requirements when you're ready to buy.

For those who want to make an informed decision once every 12-16 months per generation to avoid those niggling uncertainties and any potential buyer's remorse, they would certainly want to consider both IHV's offerings before making that decision.

Ahmed0 - Monday, January 18, 2010 - link

How can you "win" if your product isnt intended for a meaningful number of customers. Im sure ATi could pull out the biggest, most expensive, hottest and fastest card in the world as well but theres a reason why they dont.

Really, the performance crown isnt anything special. The title goes from hand to hand all the time.