AMD Radeon HD 7970 GHz Edition Review: Battling For The Performance Crown

by Ryan Smith on June 22, 2012 12:01 AM EST

Posted in: GPUs, AMD, GCN, Radeon HD 7000

Introducing PowerTune Technology With Boost

Since the 7970GE’s hardware is identical to the 7970, let’s jump straight into AMD’s software stack.

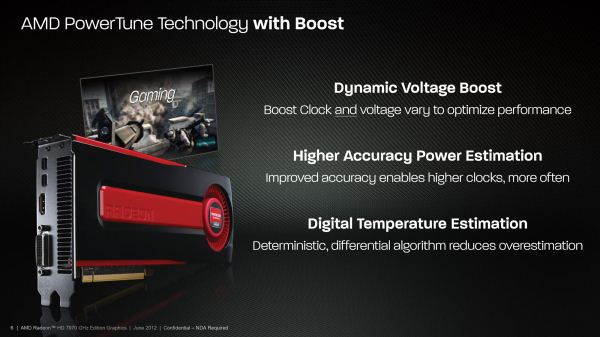

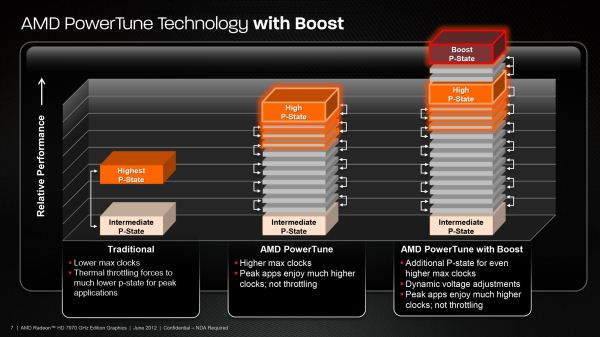

With the 7970GE AMD is introducing their own form of GPU Boost, which they are calling PowerTune Technology With Boost (PT Boost). PT Boost is a combination of BIOS and Catalyst driver changes that allows AMD to overdrive the GPU when conditions permit, and it requires no hardware changes.

In practice PT Boost is very similar to NVIDIA’s GPU Boost. Both technologies are based around the concept of a base clock (or engine clock in AMD’s terminology) with a set voltage, and then one or more boost bins with an associated voltage that the GPU can move to as power/thermal conditions permit. In essence PT Boost allows the 7970GE to overvolt and overclock itself to a limited degree.
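To make the base clock plus boost bins idea concrete, here is a minimal Python sketch of how a card might pick the fastest clock/voltage state that fits under a power limit. Only the 1000MHz base and 1050MHz boost clocks come from AMD; the intermediate state, the voltages, the power formula, and the names `ClockState`, `estimated_power` and `pick_state` are all assumptions made up for illustration, not AMD's actual logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ClockState:
    mhz: int
    volts: float


# Hypothetical states. Only the 1000MHz and 1050MHz clocks are real;
# the 1025MHz state and every voltage here are invented for illustration.
STATES = [
    ClockState(1000, 1.175),
    ClockState(1025, 1.200),
    ClockState(1050, 1.225),
]


def estimated_power(state, activity, c_eff=0.13, static_w=40.0):
    """Toy estimate: dynamic power scales with activity * V^2 * f, plus a fixed static term."""
    return activity * c_eff * state.volts ** 2 * state.mhz + static_w


def pick_state(activity, power_limit_w):
    """Return the fastest state whose estimated power fits under the limit.

    Falls back to the base state if nothing fits (a simplification:
    real PowerTune would throttle below the base clock instead).
    """
    for state in sorted(STATES, key=lambda s: s.mhz, reverse=True):
        if estimated_power(state, activity) <= power_limit_w:
            return state
    return STATES[0]


if __name__ == "__main__":
    for activity in (1.0, 1.05, 1.1):
        print(activity, pick_state(activity, 250.0))
```

As the load (here a single `activity` factor) rises, the sketch steps down from the boost state toward the base state, which is the behavior both PT Boost and GPU Boost are built around.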

With that said there are some differences in implementation. First and foremost, AMD isn’t pushing the 7970GE nearly as far with PT Boost as NVIDIA has the GTX 680 with GPU Boost. The 7970GE’s boost clock is 1050MHz, a mere 50MHz better than the base clock, while the GTX 680 can boost upwards of 100MHz over its base clock. So long as both companies go down this path I expect we’ll see the boost clocks move higher and become more important with successive generations, just like what we’ve seen with Intel and their CPU turbo boost, but for the time being GPU turboing is going to be far shallower than what we’ve seen on the CPU.

At the same time however, while AMD isn’t pushing the 7970GE as hard as the GTX 680 they are being much more straightforward in what they guarantee – or as AMD likes to put it they’re being fully deterministic. Every 7970GE can hit 1050MHz and every 7970GE tops out at 1050MHz. This is as opposed to NVIDIA’s GPU Boost, where every card can hit at least the boost clock but there will be some variation in the top clock. No 7970GE will perform significantly better or worse than another on account of clockspeed, although chip-to-chip quality variation means that we should expect to see some trivial performance variation because of power consumption.

On that note it was interesting to see that because of their previous work with PowerTune AMD has far more granularity than NVIDIA when it comes to clockspeeds. GK104’s bins are 13MHz apart; we don’t have an accurate measure for AMD cards because there are so many bins between 1000MHz and 1050MHz that we can’t accurately count them. Nor for that matter does the 7970GE stick with any one bin for very long, as again thanks to PowerTune AMD can switch their clocks and voltages in a few milliseconds as opposed to the roughly 100ms it takes NVIDIA to do the same thing. To be frank in a desktop environment it’s not clear whether this is going to make a practical difference (we’re talking about moving less than 2% in the blink of an eye), but if this technology ever makes it to mobile a fast switching time would be essential to minimizing power consumption.

Such fast switching of course is a consequence of what AMD has done with their internal counters for PowerTune. As a reminder, for PowerTune AMD estimates their power consumption via internal counters that monitor GPU usage and calculate power consumption based on those factors, whereas NVIDIA simply monitors the power going into the GPU. The former is technically an estimation (albeit a precise one), while the latter is accurate but fairly slow, which is why AMD can switch clocks so much faster than NVIDIA can.
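As a rough illustration of counter-based estimation, the Python sketch below turns activity counts over a short interval into a power figure. AMD has not published its counters or weights, so the counter names, the per-event energy values in `WEIGHTS`, and the idle power are all hypothetical.

```python
# Hypothetical per-event energy costs in joules. AMD's real counters and
# weights are not public; these exist only to show the shape of the idea.
WEIGHTS = {
    "alu_ops": 2.0e-9,
    "tex_fetches": 5.0e-9,
    "mem_bytes": 1.0e-9,
}


def estimate_power(counters, interval_s, idle_w=30.0):
    """Estimate average power over an interval from activity counters.

    Energy is a weighted sum of event counts; dividing by the interval
    length gives watts, on top of an assumed idle floor.
    """
    energy_j = sum(WEIGHTS[name] * count for name, count in counters.items())
    return idle_w + energy_j / interval_s


if __name__ == "__main__":
    sample = {"alu_ops": 50_000_000, "tex_fetches": 10_000_000, "mem_bytes": 20_000_000}
    print(estimate_power(sample, interval_s=0.001))
```

Because everything is computed from counters the GPU already maintains, an estimate like this is available every interval with no sensor delay, which is the property that lets a design of this kind react in milliseconds.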

For the 7970GE AMD is further refining their PowerTune algorithms in order to account for PT Boost’s voltage changes and to further improve the accuracy of the algorithm. The big change here is that on top of their load based algorithm AMD is adding temperatures into the equation, via what they’re calling Digital Temperature Estimation (DTE). Like the existing PowerTune implementation, DTE is based on internal counters rather than an external sensor (i.e. a thermal diode). AMD combines those counters with their knowledge of the cooling system to quickly estimate the GPU’s temperature, much as they estimate power consumption: by tracking power in and heat out, they can calculate the temperature.

The end result of this is that by estimating the temperature AMD can now estimate the leakage of the chip (remember, leakage is a function of temperature), which allows them to more accurately estimate total power consumption. For previous products AMD has simply assumed the worst case scenario for leakage, which kept real power below AMD’s PowerTune limits but effectively overestimated power consumption. With DTE and the ability to calculate leakage AMD now has a better power estimate and can push their clocks just a bit higher as they can now tap into the headroom that their earlier overestimation left. This alone allows AMD to increase their PT Boost performance by 3-4%, relative to what it would be without DTE.
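The headroom argument can be shown with a little arithmetic. The Python sketch below assumes a toy leakage model in which static power doubles every 30C; that model, the 250W limit, and the temperatures are assumptions chosen to keep the numbers round, not AMD's figures, so the size of the gain here should not be read as a prediction of the 3-4% AMD quotes.

```python
def leakage_power(temp_c, leak_at_ref_w=50.0, ref_temp_c=95.0, doubling_c=30.0):
    """Toy leakage model (assumed): static power doubles every `doubling_c` degrees."""
    return leak_at_ref_w * 2.0 ** ((temp_c - ref_temp_c) / doubling_c)


def dynamic_budget(power_limit_w, assumed_temp_c):
    """Power left for dynamic (clock-dependent) use after subtracting leakage."""
    return power_limit_w - leakage_power(assumed_temp_c)


if __name__ == "__main__":
    limit_w = 250.0  # hypothetical power limit
    worst_case = dynamic_budget(limit_w, 95.0)  # always assume the hottest junction
    estimated = dynamic_budget(limit_w, 65.0)   # use an estimated, cooler junction
    print(worst_case, estimated, estimated - worst_case)
```

With these made-up numbers, assuming the worst case leaves 200W for dynamic power while the estimated temperature leaves 225W. The extra watts are the headroom that a temperature estimate lets the card spend on higher clocks.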

AMD actually has a longer explanation on how DTE works, and rather than describing it in detail we’ll simply reprint it.

DTE works as a deterministic model of temperature in a worst case environment, as to give us a better estimate of how much current the ASIC is leaking at any point in time. As a first order approximation, ASIC power is roughly a function of: dynamic_power(voltage, frequency) + static_power(temperature, voltage, leakage).

Traditional PowerTune implementations assume that the ASIC is running at a worst case junction temperature, and as such always overestimates the power contribution of leaked current. In reality, even at a worst case ambient temp (45C inlet to the fansink), the GPU will not be working at a worst case junction temperature. By using an estimation engine to better calculate the junction temp, we can reduce this overestimation in a deterministic manner, and hence allow the PowerTune architecture to deliver more of the budget towards dynamic power (i.e. frequency) which results in higher performance. As an end result, DTE is responsible for about 3-4% performance uplift vs the HD7970 GHz Edition with DTE disabled.

The DTE mechanism itself is an iterative differential model which works in the following manner. Starting from a set of initial conditions, the DTE algorithm calculates dTemp_ti/dt based on the inferred power consumption over a previous timeslice (is a function of voltage, workload/capacitance, freq, temp, leakage, etc), and the thermal capacitance of the fansink (function of fansink and T_delta). Simply put, we estimate the heat into the chip and the heat out of the chip at any given moment. Based on this differential relation, it’s easy to work back from your initial conditions and estimate Temp_ti, which is the temperature at any timeslice. A lot of work goes into tuning the parameters around thermal capacitance and power flux, but in the end, you have an algorithmic way to always provide benefit over the previous worst-case assumption, but also to guarantee that it will be representative of the entire population of parts in the market.

We could have easily done this through diode measurements, and used real temperature instead of digital temperature estimates…. But that would not be deterministic. Our current method with DTE guarantees that two parts off the shelf will perform the same way, and we enable end users to take advantage of their extra OC headroom on their parts through tools like Overdrive.
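AMD's description of an iterative differential model can be sketched as a simple lumped thermal model stepped forward in time. The Python below is our own approximation under stated assumptions, not AMD's algorithm: the thermal resistance `r_th`, the thermal capacitance `c_th`, and the power trace are invented, and only the 45C inlet temperature comes from AMD's text.

```python
def step_temperature(temp_c, power_w, dt_s, inlet_c=45.0, r_th=0.2, c_th=50.0):
    """Advance the estimated temperature by one timeslice (forward Euler).

    heat in  = inferred power over the timeslice
    heat out = (temp - inlet) / r_th, with r_th in C per watt (assumed value)
    c_th is an assumed thermal capacitance in joules per C.
    """
    heat_out_w = (temp_c - inlet_c) / r_th
    dtemp_dt = (power_w - heat_out_w) / c_th
    return temp_c + dtemp_dt * dt_s


def estimate_temperature(power_trace_w, dt_s, start_c=45.0):
    """Work forward from an initial condition to the temperature at each timeslice."""
    temp = start_c
    history = []
    for power_w in power_trace_w:
        temp = step_temperature(temp, power_w, dt_s)
        history.append(temp)
    return history


if __name__ == "__main__":
    # Hypothetical trace: a constant 200W load for 100 seconds in 10ms slices.
    trace = [200.0] * 10_000
    history = estimate_temperature(trace, dt_s=0.01)
    print(round(history[0], 2), round(history[-1], 2))
```

Under a constant load the estimate climbs toward inlet + power × r_th, which is 85C for these numbers. Since the inputs are counters and fixed model parameters rather than a diode reading, two cards running the same workload would produce the same estimate, which is the determinism AMD is after. A fuller model would also feed the estimated temperature back into the leakage term of the power estimate.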

By tapping into this headroom however AMD has also increased their real power consumption at lower temperatures and leakages, which is why despite the identical PowerTune limits the 7970GE will consume more power than the 7970. We’ll get into the numbers in our benchmarking section, but it’s something to keep in mind for the time being.

Finally, on the end-user monitoring front we have some good news and some bad news. The bad news is that for the time being it’s not going to be possible to accurately monitor the real clockspeed of the 7970GE, either through AMD’s control panel or through 3rd party utilities such as GPU-Z. As it stands AMD is only exposing the base P-states but not the intermediate P-states, which goes back to the launch of the 7970 and is why we have never been able to tell if PowerTune throttling is active (unlike the 6900 series). So for the time being we have no idea what the actual (or even average) clockspeed of the 7970GE is. All we can see is whether it’s at its boost P-state – displayed as a 1050MHz core clock – or whether it’s been reduced to its P-state for its base clock, at which point the 7970GE will report 1000MHz.

The good news is that internally of course AMD can take much finer readings (something they made sure to show us at AFDS) and that they’ll finally be exposing these finer readings to 3rd party applications. Unfortunately they haven’t given us an expected date, but soon enough their API will be able to report the real clockspeed of the GPU, allowing users to see the full effects of both PT Boost and PT Throttle. It’s a long overdue change and we’re glad to see AMD is going to finally expose this data.

110 Comments


silverblue - Tuesday, June 26, 2012 - link

I think that's the way people do every review. However, ordinarily I'd recommend looking back at the 680 review, but as we've seen with the new Catalyst drivers, performance can vary over a relatively short period of time. So, a future article such as "AMD's Radeon 7970 and NVIDIA's GTX 680: How Much Difference Can A Few Months Make?" might be very nice *hint hint*. ;)

Temelj - Thursday, July 12, 2012 - link

For simplicity, the OC data should be put up on this graph for reference purposes and ease of use. Who on earth wants to trawl a few reviews and collect this data manually? At the very least include a reference link to the previous article that compares the NVidia 680 and provides the OC scores.

Also, instead of a conclusion write up why not have a result summary showing all performed tests, the cards that were used as reference and provide a tabular view clearly showing the top runner of each test (or top 3).

b3nzint - Wednesday, June 27, 2012 - link

So what about ; DX11 DirectCompute, SmallLuxGPU, Fluid simulation, WinZip 16.5 tests. amd is winning streak. Dont buy nvidia, its an empty thing!

CeriseCogburn - Saturday, June 30, 2012 - link

If you're going to use winzip to game, and support evil proprietary corruption in software by amd while using open source, great, hypocrisy and lying to stone cold stupid amd fans for years works well !

Fluid sim - not a game

DX11 DC - not a game

SmallLux - not a game

Oops ! "Empty" suddenly applies to amd when it wins any "benchmarks that are not real world for end users, ever."

I guess empty crap no one uses, declared fraudulently, as a "win", sways the dark hollow spaces in the hearts and minds of the little amd fans. It's sad.

yay123 - Saturday, June 30, 2012 - link

hi there I'm buying this card but my psu is cm gx550w does it fit well if I oc it?

Temelj - Thursday, July 12, 2012 - link

If you can afford a card like this, why not just upgrade your power supply?

Review System Requirements here: http://www.amd.com/us/products/desktop/graphics/70...

Jamahl - Thursday, July 5, 2012 - link

Comments totally ruined by CeriseCogburn's bullshit on every page.

Is this maddoctor in disguise, or one of the other Nvidia zealots? Whatever, just IP ban this weirdo and be done with it.

Mauhi123 - Monday, October 15, 2012 - link

Dear All.

Hello,

I am having 3960x and DX79SI and graphics card asus hd7970-dc2t-3gd5

I am not able to boot the computer. When I boot it, the two-digit LED on the motherboard shows "00" (double zero), the LED screen shows "0_", and it stops. However, I can reboot the computer using Ctrl+Alt+Del, and I am able to operate the BIOS, which means the computer is not hanging.

Please Help me ASAP......

seansplayin - Tuesday, November 20, 2012 - link

I have the XFX 7970 GHz Edition and I really am not sure what is the big deal with the noise. My card is not that loud. Honestly, Power control settings @ +20%, GPU core 1175 and memory @ 1600, completely stable. The games I play are at 1080P max everything and my GPU rarely gets above 70C, which is only around 40% fan speed. @ 40% fan speed I literally cannot hear the GPU fan unless I have the speakers completely turned off, and even still I have to listen carefully to actually discern that the noise I hear is coming from the video card. In my experience, in gaming the GPU fan noise is absolutely NOT an issue. When I'm running synthetic GPU benchmarking apps like geekbench's Furmark then the card will ramp up to around 70% fan speed and you can hear it, but even then it is really not an issue. I am using the latest Catalyst beta driver 12.11, which has added a 15% increase in BF3 FPS and a 10% increase in Dirt 3, basically taking Nvidia's crown in virtually every game.

I do lots of video transcoding and the OpenCL domination this card produces is amazing.

Yesterday I transcoded a 1080P 5.3GB .mkv file to .mp4 with Nero 11. When using AMD's app acceleration codec the transcode took 20 minutes, as compared to 60 minutes when I used Nero's .mp4 codec at the same output settings. During the transcoding the GPU stays at I believe 300MHz with the GPU at 20% load average. When doing transcoding the GPU hovers around 111F with the fan at like 5%.

I love this card.

My computer has three states: idle 60% of the time, gaming and transcoding 40% of the time. At idle with AMD's ZeroCore this video card is using 10 watts less than Nvidia's 680, in gaming it's beating the 680 in almost every game now, and when it comes to encoding, OpenCL and OpenGL it's basically a blowout, averaging 75% more than the 680. If you're an Nvidia fan (I formerly was) and OpenCL is important to you, go with the Fermi cards because on most GPGPU processing they outperform the Kepler cards.

IF you question anything I've said do some google homework. Catalyst 12.11 actually does what they say, I can attest to it at least when it comes to Encoding, playing BF3 and Dirt 3
