OCZ Vector (256GB) Review

by Anand Lal Shimpi on November 27, 2012 9:10 PM EST

Times are changing at OCZ. There's a new CEO at the helm, and the company is now focused on releasing fewer products that have gone through more validation and testing than in years past. The hallmark aggressive nature that gave OCZ tremendous marketshare in the channel overstayed its welcome. The new OCZ is supposed to sincerely prioritize compatibility, reliability and general validation testing. Only time will tell if things have changed, but right off the bat there's a different aura surrounding my first encounter with OCZ's Vector SSD.

Gone are the handwritten notes that accompanied OCZ SSD samples in years past, replaced by a much more official looking letter:

The drive itself sees a brand new 7mm chassis. The aluminum colored enclosure features a new label. Only the bottom of the SSD looks familiar as the name, part number and other details are laid out in traditional OCZ fashion.

Under the hood the drive is all new. Vector uses the first home-grown SSD controller by OCZ. Although the Octane and Vertex 4 SSDs both used OCZ Indilinx branded silicon, they were both based on Marvell IP - the controller architecture was licensed, not designed in house. Vector on the other hand uses OCZ's brand new Barefoot 3 controller, designed entirely in-house.

Barefoot 3 is the result of three different teams all working together. OCZ's UK design team, staffed with engineers from the PLX acquisition, the Korea design team inherited after the Indilinx acquisition, and folks at OCZ proper in California all came together to bring Barefoot 3 and Vector to life.

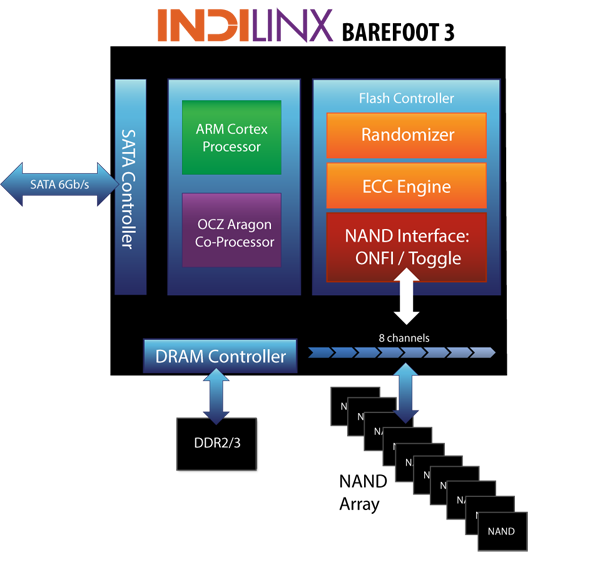

The Barefoot 3 controller integrates an unnamed ARM Cortex core as well as an OCZ Aragon co-processor. OCZ isn't going into a lot of detail as to how these two cores interact or what they handle, but multi-core SoCs aren't anything new in the SSD space. A branded co-processor is a bit unusual, and I suspect that whatever is responsible for Vector's distinct performance has to do with this part of the SoC.

Architecturally, Barefoot 3 can talk to NAND across 8 parallel channels. The controller is paired with two DDR3L-1600 DRAMs, although there's a pad for a third DRAM for use in the case where parity is needed for ECC.

Hardware encryption is not presently supported, although OCZ tells us Barefoot 3 is more than fast enough to handle it should a customer demand the feature. Hardware encryption remains mostly unused and poorly executed on client drives, so its absence isn't too big of a deal in my opinion.

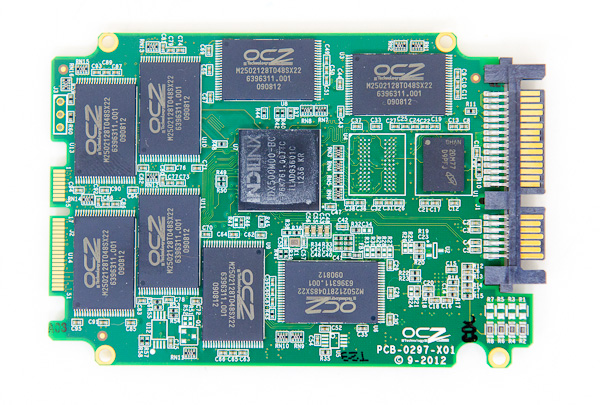

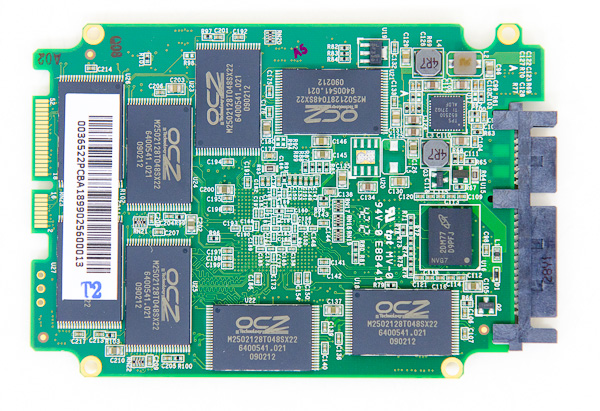

OCZ does its own NAND packaging, and as a result Vector is home to a sea of OCZ branded NAND devices. In reality you're looking at 25nm IMFT synchronous 2-bit-per-cell MLC NAND, just with an OCZ silkscreen on it. There's no NAND redundancy built in to the drive as OCZ is fairly comfortable with the error and failure rates at 25nm. The only spare area set aside is the same 6.8% we see on most client drives (e.g. a 256GB Vector offers 238GB usable space in Windows).
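The spare area figure is essentially the gap between binary and decimal gigabytes. A minimal sketch of that arithmetic, assuming the 256GB drive carries 256GiB of raw NAND and exposes 256 decimal GB to the host (both are assumptions, and the variable names are purely illustrative):

```python
GIB = 2**30  # bytes in a binary gigabyte, the unit NAND capacity comes in
GB = 10**9   # bytes in a decimal gigabyte, the unit drives are marketed in

raw_nand_bytes = 256 * GIB  # assumed raw NAND on the 256GB Vector
user_bytes = 256 * GB       # assumed capacity exposed to the host

# Fraction of raw NAND the host can never address
spare_fraction = (raw_nand_bytes - user_bytes) / raw_nand_bytes

# Windows counts in binary units but labels them "GB"
windows_reported = user_bytes / GIB

print(f"spare area: {spare_fraction:.2%}")
print(f"usable space shown in Windows: {windows_reported:.1f} GB")
```

Under these assumptions the spare fraction lands a little under 7% and the Windows figure at roughly 238GB, in line with the numbers quoted above.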

| OCZ Vector | 128GB | 256GB | 512GB |
|---|---|---|---|
| Sequential Read | 550 MB/s | 550 MB/s | 550 MB/s |
| Sequential Write | 400 MB/s | 530 MB/s | 530 MB/s |
| Random Read | 90K IOPS | 100K IOPS | 100K IOPS |
| Random Write | 95K IOPS | 95K IOPS | 95K IOPS |
| Active Power Use | 2.25W | 2.25W | 2.25W |
| Idle Power Use | 0.9W | 0.9W | 0.9W |

Regardless of capacity, OCZ is guaranteeing the Vector for up to 20GB of host writes per day for 5 years. The warranty on the Vector expires after 5 years or 36.5TB of writes, whichever comes first. As with most similar claims, the 20GB value is pretty conservative and based on a 4KB random write workload. With more realistic client workloads you can expect even more life out of the NAND.
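The 36.5TB limit follows directly from the daily write allowance. A small sketch of the arithmetic, which also shows how many full-drive writes the same limit represents at each capacity (leap days ignored, decimal units assumed):

```python
GB_PER_DAY = 20
WARRANTY_YEARS = 5
DAYS_PER_YEAR = 365  # leap days ignored

total_host_writes_gb = GB_PER_DAY * WARRANTY_YEARS * DAYS_PER_YEAR
total_host_writes_tb = total_host_writes_gb / 1000  # decimal TB

for capacity_gb in (128, 256, 512):
    # The limit is the same for every capacity, so larger drives
    # get proportionally fewer full-drive writes under warranty.
    drive_fills = total_host_writes_gb / capacity_gb
    print(f"{capacity_gb}GB: {total_host_writes_tb}TB "
          f"= about {drive_fills:.0f} full-drive writes")
```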

Despite being built on a brand new SoC, there's a lot of firmware carryover from Vertex 4. Indeed if you look at the behavior of Vector, it is a lot like a much faster Vertex 4. OCZ does continue to use its performance mode that enables faster performance if less than 50% of the drive's capacity is used; in practice, however, OCZ seems to rely on it less than it did with the Vertex 4.

The design cycle for Vector is the longest OCZ has ever endured. It took OCZ 18 months to bring the Vector SSD to market, compared to less than 12 months for previous designs. The additional time was used not only to coordinate teams across the globe, but also to put Vector through more testing and validation than any previous OCZ SSD. It's impossible to guarantee a flawless drive, but doing considerably more testing can't hurt.

The Vector is available starting today in 128GB, 256GB and 512GB capacities. Pricing is directly comparable to Samsung's 840 Pro:

| OCZ Vector Pricing (MSRP) | 64GB | 128GB | 256GB | 512GB |
|---|---|---|---|---|
| OCZ Vector | - | $149.99 | $269.99 | $559.99 |
| Samsung SSD 840 Pro | $99.99 | $149.99 | $269.99 | $599.99 |

OCZ is a bit more aggressive on its 512GB MSRP; otherwise it's very clear what OCZ views as Vector's immediate competition.

151 Comments


Brahmzy - Wednesday, November 28, 2012

I should note that this works best after a secure erase, and during the Windows install, don't let Windows take up the entire drive with one partition. Create a smaller partition from the get-go. Don't shrink it later in Windows, once the OS has been installed. I believe the SSD controller knows that it can't do its work in the same way if there is/was an empty partition taking up those cells. I could be wrong - this was the case with the older SSDs - maybe the newer controllers treat any free space as fair game to do their garbage collection/wear leveling.

jwilliams4200 - Wednesday, November 28, 2012

If the SSD has a good TRIM implementation, you should be able to reap the same OP benefits (as a secure erase followed by creating a smaller-than-SSD partition) by shrinking a full-SSD partition and then TRIMming the freed LBAs. I don't know for a fact that Windows 7 Disk Management does a TRIM on the freed LBAs after a shrink, but I expect that it does.

I tend to use Linux more than Windows with SSDs, and when I am doing tests I often use Linux hdparm to TRIM whichever sectors I want to TRIM, so I do not have to wonder whether Windows TRIM did what I wanted or not. But I agree that the safest way to OP in Windows is to secure erase and then create a partition smaller than the SSD -- then you can be absolutely sure that your SSD has erased LBAs, that are never written to, for the SSD to use as spare area.

seapeople - Sunday, December 2, 2012

Wouldn't it be better if you just paid half price and bought the 60GB drive (or 80GB if you actually *need* 60GB) for the amount of space you needed at the present, and then in a year or two when SSDs are half as expensive, more reliable, and twice as fast you upgrade to the amount of space your needs have grown to?

Your new drive without overprovisioning would destroy your old overprovisioned drive in performance, have more space (because we're double the size and not 30% OP'ed), you'd have spent the same amount of money, AND you now have an 80GB drive for free.

Of course, you should never go over 80-90% usage on an SSD anyway, so if that's what you're talking about then never mind...

kozietulski - Wednesday, November 28, 2012

Nice results and great pictures. Really shows the importance of free space/OP for random write performance. Even more amazing is that the results you got seem to fit quite well with a simplified model of SSD internal workings:

Let's assume we have an SSD with only the usual ~7% of OP which was nearly 100% filled (one can say trashed) by purely random 4KB writes (should we now write KiB just to make a few strange guys happy?), and assume also that the drive operates on 4KB pages and 1MB blocks (today's drives seem to be using 8KB/2MB instead, but 4KB makes things simpler to think about), so it has 256 pages per block. If the trashing was good enough to achieve perfect randomisation, we can expect that each block contains about 18-19 free pages (out of 256). Under heavy load (QD32, using NCQ etc.) decent firmware should be able to make use of all the free pages in a given block before it (the firmware) decides to write the block back to NAND. Thus under heavy load and with the above assumptions (7% OP) we can expect in the worst case (SSD totally trashed by random writes and thus free space fully randomized) Write Amplification of about 256:18 ~= 14:1.

Now when we allow for 20% of free space (in addition to implicit ~7%OP) we should see on average about 71-72 out of 256 pages free in each and every block. This translates to WA ~= 3.6:1 (again assuming that firmware is able to consume all free space in the block before writing it back to nand. That is maybe not so obvious as there are limits in max number of i/o bundled in single ncq request but should not be impossible for the firmware to delay the block write few msecs till next request comes to see if there are more writes to be merged into the block).

Differences in WA translate directly to differences in performance (as long as there is no other bottleneck of course), so with 14:3.6 ~= 3.9 we may expect random 4KB write performance nearly 4 times higher for a drive with 20% free space compared to a drive working with only the bare 7% of implicit OP.
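The back-of-the-envelope model in this comment can be written down in a few lines. This is only a sketch of the commenter's simplified worst case (every block rewrite yields just that block's free pages for new host data); the function name is illustrative and the free-page counts are the ones quoted in the comment, not measured values:

```python
PAGES_PER_BLOCK = 256  # 1MB block / 4KB page, as assumed in the comment

def write_amplification(free_pages_per_block, pages_per_block=PAGES_PER_BLOCK):
    """Worst case: a full block is rewritten to absorb only its free pages."""
    return pages_per_block / free_pages_per_block

wa_stock = write_amplification(18)    # ~7% OP only
wa_extra = write_amplification(71.5)  # ~7% OP plus 20% free space
speedup = wa_stock / wa_extra         # expected random write gain

print(f"WA with 7% OP:           {wa_stock:.1f}:1")
print(f"WA with 20% extra free:  {wa_extra:.1f}:1")
print(f"expected speedup:        {speedup:.1f}x")
```

The ratio comes out just under 4, matching the estimate above. Real firmware will deviate from this, since garbage collection normally prevents free space from becoming perfectly randomized.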

May be just an accident, but that seems to fit pretty closely with the results you achieved. :)

jwilliams4200 - Wednesday, November 28, 2012

Interesting analysis. Without knowing exactly how the 840 Pro firmware works I cannot be certain, but it does sound like you have a reasonable explanation for the data I observed.

kozietulski - Wednesday, November 28, 2012

Yeah, there are a lot of assumptions and simplifications in the above... well, I surely wouldn't call it analysis; perhaps hypothesis would also be a bit too much. Modern SSDs have lots of bells and whistles - as well as quirks - and most of them - particularly the quirks - aren't documented well. All that means that the conformity of the estimation with your results may very well be nothing more than just a coincidence.

The one thing I'm reasonably sure of however - and it is the reason I thought it was worth writing the post above - is that for random 4K writes on a heavily (ab)used SSD the factor which limits performance the most is Write Amplification (at least as long as we talk about decent SSDs with decent controllers - Phisons do not qualify here I guess :)

In addition to the obvious simplifications there was one other shortcut I took above: I based my reasoning on the "perfectly trashed" state of the SSD - that is, one where the SSD's free space is in pages equally spread over all NAND blocks. In theory the purpose of GC algos is to prevent drives from reaching such a state. Still, I think there are workloads which may bring SSDs close enough to that worst possible state, so it is still meaningful as a worst case scenario.

In your case however the starting point was different. AFAIU you used first sequential I/O to fill 100 / 80 % of drive capacity so we can safely assume that before random write session started drive contained about 7% (or ~27% in second case) of clean blocks (with all pages free) and the rest of blocks were completely filled with data with no free pages (thanks to nand-friendly nature of sequential I/O).

Now when random 4K writes start to fly... looking from the LBA space perspective these are by definition overwrites of random chunks of LBA space, but from the SSD's perspective at first we have writes filling the pool of clean blocks, coupled with deletions of randomly selected pages within blocks which were until now fully filled with data. Surely such a deletion is actually reduced to just marking such pages as free in the firmware FTL tables (any GC at that moment seems highly unlikely imho).

At last comes the moment when the clean blocks pool is exhausted (or when the size of the clean pool falls below the threshold), which wakes up the GC algorithms to do their Sisyphean work. At that moment the situation looks like this (assuming that there was no active GC until now and that the firmware was capable enough to fill clean blocks fully before writing to NAND): 7% (or 27% in the second case) of blocks are fully filled with (random) data whereas 93/73 % of blocks are now pretty much "trashed" - they contain (virtually - just marked in the FTL) randomly distributed holes of free pages. The net effect is that - compared to the starting point - free space condensed at first in the pool of clean blocks is now evenly spread (with page granularity) over most of the drive's NAND blocks. I think that state does not look that much different than the state of complete, random trashing I assumed in the post above...

From that point onward till the end of random session there is ongoing epic struggle against entropy: on one side stream of incoming 4K writes is punching more free page holes in SSD blocks thus effectively trying to randomize distribution of drive available free space and GC algorithms on the other side are doing their best to reduce the chaos and consolidate free space as much as possible.
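The tug of war described here can be illustrated with a toy simulation. The sketch below is a deliberately tiny page-mapped FTL with a greedy garbage collector (always reclaim the full block with the fewest live pages). Everything in it is an assumption made for illustration: the geometry, the greedy policy and the function name have nothing to do with any vendor's actual firmware. It fills the drive sequentially, then applies random single-page overwrites and reports NAND writes per host write.

```python
import random

def simulate_wa(spare_fraction, num_blocks=64, pages_per_block=32,
                host_writes=20000, seed=1):
    """Toy page-mapped FTL with greedy GC; returns write amplification."""
    rng = random.Random(seed)
    logical_pages = int(num_blocks * pages_per_block * (1 - spare_fraction))
    location = [None] * logical_pages           # logical page -> block holding it
    valid = [set() for _ in range(num_blocks)]  # block -> logical pages still live
    used = [0] * num_blocks                     # pages programmed since last erase
    free_blocks = list(range(1, num_blocks))    # pool of clean blocks
    open_block = 0                              # block currently being filled
    nand_writes = 0

    def program(lpn):
        nonlocal open_block, nand_writes
        if used[open_block] == pages_per_block:  # open block full: take a clean one
            open_block = free_blocks.pop()
        old = location[lpn]
        if old is not None:
            valid[old].discard(lpn)              # old copy becomes a free-page hole
        valid[open_block].add(lpn)
        location[lpn] = open_block
        used[open_block] += 1
        nand_writes += 1

    def collect():
        # Greedy policy: reclaim the full block with the fewest live pages.
        full = [b for b in range(num_blocks)
                if b != open_block and used[b] == pages_per_block]
        victim = min(full, key=lambda b: len(valid[b]))
        for lpn in list(valid[victim]):
            program(lpn)                         # relocate live data
        used[victim] = 0                         # erase the victim
        free_blocks.append(victim)

    for lpn in range(logical_pages):             # sequential fill first
        program(lpn)
    nand_writes = 0                              # count only the random phase
    for _ in range(host_writes):
        while len(free_blocks) < 2:              # clean pool exhausted: wake up GC
            collect()
        program(rng.randrange(logical_pages))
    return nand_writes / host_writes

wa_7 = simulate_wa(0.07)    # only the implicit ~7% spare area
wa_27 = simulate_wa(0.27)   # ~7% spare plus 20% left unused
print(f"WA at 7% spare: {wa_7:.2f}, WA at 27% spare: {wa_27:.2f}")
```

The absolute numbers from such a small model should not be read as predictions for any real drive; the point is only that the run with more spare area ends up with noticeably lower write amplification. The model also needs a few blocks' worth of spare pages to make progress, so very small `spare_fraction` values are outside what it can handle.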

As a side note I think it is really a pity that there is so little transparency amongst vendors in terms of communicating to customers the internal workings of their drives. I understand commercial issues and all, but depriving users of information they need to efficiently use their SSDs leads to lots of confused and sometimes simply disappointed consumers and that is not good - in the long run - for the vendors either. Anyway, maybe it is time to think about open source SSD firmware for the free community?! ;-)))

ps. Thanks for the reference to fio. Looks like a very flexible tool, and may also be easier to use than Iometer. Surely worth a try at least.

cbutters - Wednesday, November 28, 2012

So based on 36TB to end the warranty, basically you can only fill up your 512GB drive 72 times before the warranty expires? That doesn't seem like a whole lot of durability. Reinstalling a few large games several times could wear this out pretty quickly... or am I understanding something incorrectly?

According to my calculations, assuming a gigabit network connection, running at 125MB per second storing data, that is .12GB per second, 7.3242GB per minute, 439GB per hour, or 10.299TB per day... Assuming this heavy write usage,... that 36 TB could potentially be worn out in as little as 3.5 days using a conservative gigabit network speed as the baseline.
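The same estimate can be redone in purely decimal units. This is just a sketch of the arithmetic, assuming the 36.5TB warranty figure from the review and a link that is saturated around the clock; the comment's own numbers divide by 1024 at each step, which is why they come out slightly lower per day.

```python
WARRANTY_TB = 36.5       # warranty limit from the review (the comment rounds to 36TB)
LINK_MB_PER_S = 125      # gigabit Ethernet at line rate, decimal megabytes
SECONDS_PER_DAY = 86_400

# MB/s -> TB/day in decimal units (1TB = 1,000,000MB)
tb_per_day = LINK_MB_PER_S * SECONDS_PER_DAY / 1_000_000
days_to_limit = WARRANTY_TB / tb_per_day

print(f"{tb_per_day:.1f}TB written per day")
print(f"warranty write limit reached after {days_to_limit:.1f} days")
```

Either way the limit is reached in roughly three and a half days of continuous writing at that rate.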

Makaveli - Wednesday, November 28, 2012

I've had an SSD 3 years and it currently has 3TB of host writes!

jimhsu - Wednesday, November 28, 2012

I assume warranties such as this assume an unrealistically high write amplification (e.g. 10x) (to save the SSD maker some skin, probably). Your sequential write example (google "rogue data recorder") most likely has a write amplification very close to 1. Hence, you can probably push much more data (still though the warranty remains conservative).

jwilliams4200 - Wednesday, November 28, 2012

Even WA=10 is not enough to account for the 512GB warranty of 36TB. That only comes to about 720 erase cycles if WA=10.

I think the problem is that the warrantied write amount should really scale with the capacity of the SSD.

Assuming WA=10, then 128GB @ 3000 erase cycles should allow about 3000 *128GB/10 = 38.4TB. The 256GB should allow 76.8TB. And the 512GB should get 153.6TB.
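The scaling argument above is easy to check. A short sketch using the comment's own assumptions (3000 erase cycles and WA=10, neither of which is a figure from OCZ); the function names are illustrative:

```python
def endurance_tb(capacity_gb, erase_cycles=3000, write_amplification=10):
    """Host writes (decimal TB) the NAND could absorb under the stated assumptions."""
    return erase_cycles * capacity_gb / write_amplification / 1000

def implied_cycles(capacity_gb, warranty_tb=36.5, write_amplification=10):
    """Erase cycles actually consumed if the drive stops at the warranty limit."""
    return warranty_tb * 1000 * write_amplification / capacity_gb

for capacity_gb in (128, 256, 512):
    print(f"{capacity_gb}GB: {endurance_tb(capacity_gb):.1f}TB of host writes, "
          f"warranty limit uses about {implied_cycles(capacity_gb):.0f} cycles")
```

For the 512GB drive the implied cycle count is a bit over 700, close to the roughly 720 mentioned in the comment.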