Understanding Apple's Fusion Drive

by Anand Lal Shimpi on October 24, 2012 1:36 AM EST

During its iPad mini launch event today, Apple updated many members of its Mac lineup: the 13-inch MacBook Pro, iMac and Mac mini all got refreshes. For the iMac and Mac mini, Apple introduced a new feature that I honestly expected it to debut much earlier: Fusion Drive.

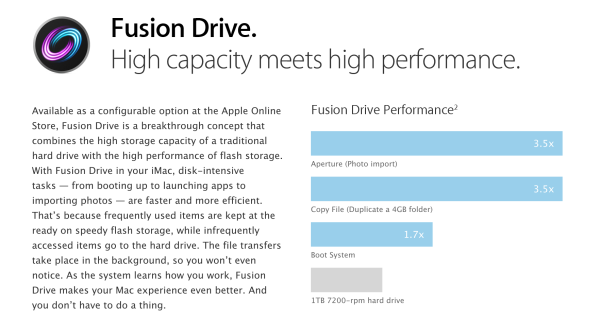

The idea is simple. Apple offers either solid state or mechanical HDD storage in its iMac and Mac mini, so end users have to choose between performance and capacity/cost-per-GB. With Fusion Drive, Apple is attempting to offer the best of both worlds.

The new iMac and Mac mini can be outfitted with a Fusion Drive option that couples 128GB of NAND flash with either a 1TB or 3TB hard drive. The Fusion part comes courtesy of Apple's software, which takes the two independent drives and presents them to the user as a single volume. Originally I thought this might be SSD caching, but after poking around the new iMacs and talking to Apple I have a better understanding of what's going on.

For starters, the 128GB of NAND is simply an SSD on a custom form factor PCB with the same connector that's used in the new MacBook Air and rMBP models. I would expect this SSD to use the same Toshiba or Samsung controllers we've seen in other Macs. The iMac I played with had a Samsung-based SSD inside.

Total volume size is the sum of both parts. In the case of the 128GB + 1TB option, the total available storage is ~1.1TB. The same is true for the 128GB + 3TB option (~3.1TB total storage).
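To make the capacity arithmetic concrete, here is a minimal sketch of two devices presented as one address space whose size is the sum of its members. It assumes a simple linear concatenation (SSD bytes first, then HDD bytes) and decimal units; Apple hasn't documented Fusion Drive's internal layout, and since the OS moves data between the drives the real mapping is certainly not fixed like this. The `Device` class and the `total_size` and `locate` functions are hypothetical.

```python
from dataclasses import dataclass

GB = 10**9  # decimal gigabytes, the way drive capacities are quoted


@dataclass
class Device:
    name: str
    size: int  # capacity in bytes


def total_size(devices):
    """The combined volume's capacity is simply the sum of its members."""
    return sum(d.size for d in devices)


def locate(devices, offset):
    """Map a byte offset on the combined volume to (device name, local offset).

    Assumed linear layout: the first device's bytes come first, then the next.
    """
    if offset < 0:
        raise ValueError("offset must be non-negative")
    for d in devices:
        if offset < d.size:
            return d.name, offset
        offset -= d.size
    raise ValueError("offset beyond end of volume")


volume = [Device("ssd", 128 * GB), Device("hdd", 1000 * GB)]
print(total_size(volume) / 10**12)  # 1.128, i.e. the ~1.1TB quoted above
print(locate(volume, 200 * GB))     # ('hdd', 72000000000)
```

Swapping the 1TB member for a 3TB one gives 3.128TB, matching the ~3.1TB figure.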

By default the OS and all preloaded applications are physically stored on the 128GB of NAND flash. But what happens when you go to write to the array?

With Fusion Drive enabled, Apple creates a 4GB write buffer on the NAND itself. Any writes that come in to the array hit this 4GB buffer first, so it acts as a sort of write cache. Once the buffer is full, additional writes spill over to the hard disk. The hope is that 4GB will be enough to absorb the small-file random writes that could otherwise significantly bog down performance. Buffering those writes in NAND helps deliver SSD-like performance for light workloads.
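The write path described above can be modeled in a few lines. This is a minimal sketch, assuming byte-granularity accounting and that a single request larger than the remaining space is split between NAND and HDD; Apple hasn't said how it tracks or drains the buffer, so the `WriteBuffer` class and its `drain` method are hypothetical.

```python
GB = 10**9


class WriteBuffer:
    """Hypothetical model of a fixed-size NAND write buffer that spills to the HDD."""

    def __init__(self, capacity=4 * GB):
        self.capacity = capacity
        self.used = 0      # bytes currently held in the NAND buffer
        self.to_nand = 0   # running total of bytes absorbed by the buffer
        self.to_hdd = 0    # running total of bytes that spilled to the hard disk

    def write(self, nbytes):
        """Place one write; return (bytes landing on NAND, bytes landing on HDD)."""
        free = self.capacity - self.used
        on_nand = min(nbytes, free)
        on_hdd = nbytes - on_nand  # whatever doesn't fit spills over
        self.used += on_nand
        self.to_nand += on_nand
        self.to_hdd += on_hdd
        return on_nand, on_hdd

    def drain(self, nbytes):
        """Free buffer space, e.g. once data has been relocated (assumed mechanism)."""
        self.used -= min(nbytes, self.used)


buf = WriteBuffer()
print(buf.write(1 * GB))  # (1000000000, 0): fits entirely in the buffer
print(buf.write(5 * GB))  # (3000000000, 2000000000): the remainder spills to the HDD
```

The point of the model is that small random writes almost always fit, so they complete at NAND speed; only large sequential transfers, which hard disks handle comparatively well, overflow.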

That 4GB write buffer is the only cache-like component of Apple's Fusion Drive. Everything else works as an OS-directed pinning algorithm rather than an SSD cache. In other words, Mountain Lion will physically move frequently used files, data and entire applications to the 128GB of NAND flash storage and move less frequently used items to the hard disk. The moves aren't committed until the copy is complete (meaning if you pull the plug on your machine while Fusion Drive is moving files around, you shouldn't lose any data). After the copy is complete, the original is deleted and the free space recovered.
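The copy-then-commit-then-delete ordering is a general crash-safety pattern. Here is a minimal file-level sketch of it, assuming two ordinary directories stand in for the SSD and HDD tiers; Fusion Drive operates below the file system, so `migrate` is a hypothetical helper illustrating the ordering, not Apple's implementation.

```python
import os
import shutil
import tempfile


def migrate(src_path, dest_dir):
    """Move a file to another tier so that a complete copy always exists.

    1. Copy to a temporary name in the destination directory.
    2. fsync the copy, then atomically rename it into place (the "commit").
    3. Only then delete the original, freeing its space.
    """
    name = os.path.basename(src_path)
    final_path = os.path.join(dest_dir, name)
    fd, tmp_path = tempfile.mkstemp(dir=dest_dir, prefix="." + name + ".")
    try:
        with os.fdopen(fd, "wb") as dst, open(src_path, "rb") as src:
            shutil.copyfileobj(src, dst)
            dst.flush()
            os.fsync(dst.fileno())  # make sure the copy's data reached the disk
        os.replace(tmp_path, final_path)  # atomic within one file system
    except BaseException:
        os.unlink(tmp_path)  # an interrupted copy leaves the original untouched
        raise
    os.remove(src_path)  # the original goes away only after the commit
    return final_path
```

If power is lost before the rename, the original is still in place and only a stray temporary file remains; after the rename, both copies may briefly exist, but never neither. A more careful version would also fsync the destination directory so the rename itself is durable.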

After a few accesses, Fusion Drive should be able to figure out whether it needs to pull something new into NAND. The 128GB size is near ideal for most light client workloads, although I suspect heavier users might be better served by something closer to 200GB.
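Apple hasn't described how Fusion Drive decides what counts as frequently used. One simple policy consistent with the description is to count accesses per item and keep the most-accessed items on NAND up to its capacity. The sketch below does exactly that; the `TierManager` name and the three-access threshold are invented for illustration.

```python
from collections import Counter

GB = 10**9


class TierManager:
    """Hypothetical frequency-based placement: the hottest items live on the SSD."""

    def __init__(self, ssd_capacity=128 * GB, min_hits=3):
        self.ssd_capacity = ssd_capacity
        self.min_hits = min_hits  # accesses required before an item is promoted
        self.hits = Counter()
        self.sizes = {}

    def access(self, item, size):
        """Record one access to an item of the given size in bytes."""
        self.hits[item] += 1
        self.sizes[item] = size

    def plan(self):
        """Return the set of items that should sit on the SSD, most-accessed first."""
        on_ssd, used = set(), 0
        for item, count in self.hits.most_common():
            if count < self.min_hits:
                break  # most_common() is sorted, so no later item qualifies
            if used + self.sizes[item] <= self.ssd_capacity:
                on_ssd.add(item)
                used += self.sizes[item]
        return on_ssd
```

A real implementation would also age out old counts so that something heavily used last month doesn't crowd out what you're using today.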

There is no user interface for Fusion Drive management within OS X. Once the volume is created, it cannot be broken apart with a standard OS X tool (although clever users should be able to find a way around that). I'm not sure what a Fusion Drive will look like under Boot Camp; it's entirely possible that Apple will put a Boot Camp partition on the HDD alone. OS X doesn't hide the fact that there are two physical drives in your system: a System Report generated on a Fusion Drive-enabled Mac will show both drives connected via SATA.

The concept is interesting, at least for mainstream users. Power users will still get better performance (and reliability) by going purely with solid state storage. Fusion Drive is aimed at users who don't want to deal with managing data and applications across two different volumes (in other words, the ultra-mainstream customer).

With a 128GB NAND component, Fusion Drive could work reasonably well. We'll have to wait and see what happens when we get our hands on an iMac next month.

87 Comments


dagamer34 - Wednesday, October 24, 2012

Of course, the idea with algorithms like this is to break them so they get better, right? :D

spacebarbarian - Wednesday, October 24, 2012

I don't really see how they would improve upon this; it's really just solving the inconvenience of keeping the system and frequently used apps on the SSD and data on the HDD. I'm sure Apple already has a great algorithm for deciding what gets to be on the SSD based on your system usage, though I hope there is an option to put new apps directly on the SSD on install (as I would want when installing games etc. on my Windows PC). I hope Windows 8 packs similar functionality, because for the non-power user it's not as trivial as it may seem to me.

stevedemena - Thursday, October 25, 2012

If the drive is presented as a single volume, I don't see how you could request that a new app be installed on the SSD, and why should you? Shouldn't the automatic tiering move it to the SSD if warranted (i.e. if it's used a lot)?

lyeoh - Thursday, October 25, 2012

What I wonder is what happens when one of the drives fails. Say the SSD fails. If it were a cache and they did things right, it wouldn't matter if the SSD failed (as long as the system realized the SSD had failed).

In Apple's case, though, it's not a cache. From the description, it seems to me that you will lose data if either of the drives fails.

From what I see SSD failure rates aren't low enough to say that 99% of the time it'll be the spinning disk that fails.

Zink - Friday, October 26, 2012

It's the same as having an SSD boot drive and a data HDD and managing files yourself. More disks are going to increase the likelihood of failure.

Site7000 - Monday, October 29, 2012

It's a primary drive; there's no backup component to it. You back it up like you would any drive. To put it another way, the SSD is for speed, not safety.

Bownce - Tuesday, October 30, 2012

Any spanned volume works the same way: double the capacity, halve the reliability. Mirroring, by contrast, halves the usable capacity while doubling the reliability.

Guspaz - Wednesday, October 24, 2012

I would have expected a larger write buffer than 4GB... In fact, I would have expected writes to work much like reads, with all writes going entirely to the SSD and files being moved to the slower HDD later if they weren't frequently accessed.

Paulman - Wednesday, October 24, 2012

Well, once it's been written to the write buffer, I assume the OS will decide whether to keep it there or move it to the rotational HD later. And I don't see why more than 4GB would be necessary. It's truly intended to be just a scratchpad/clipboard of sorts. As long as it can easily handle typical workloads, the user shouldn't see any improvement from a larger buffer.

Freakie - Wednesday, October 24, 2012

I would think that media editors would benefit from a larger write cache. So would anyone transferring a folder larger than 4GB, which in my experience happens not-too-infrequently xP. I think a 12GB write cache would have been pretty decent and would cover a lot of file-move scenarios.