Gigabyte GA-7PESH1 Review: A Dual Processor Motherboard through a Scientist’s Eyes

by Ian Cutress on January 5, 2013 10:00 AM EST

Posted in: Motherboards, Gigabyte, C602

Visual Inspection

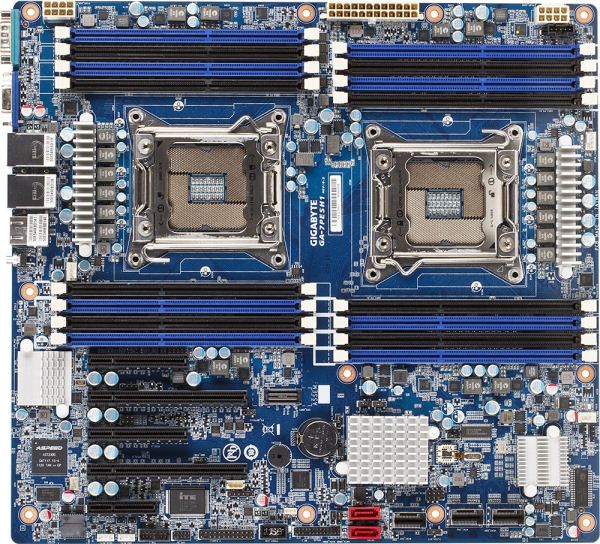

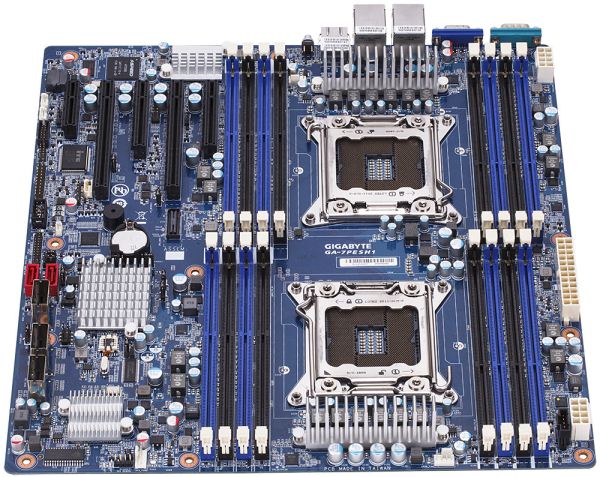

I knew server boards were large, but coming from the ATX and E-ATX standards, this thing is huge. It measures 330mm x 305mm (13” x 12”), which corresponds to the SSI EEB specification for server motherboards. This is the exact size of an official E-ATX motherboard (despite a small amount of loose definition), but to put the icing on the cake, the mounting holes for the motherboard are different from the normal ATX standards. A large case such as the Rosewill Blackhawk-Ultra supports ATX, SSI CEB, XL-ATX, E-ATX and HPTX, up to 13.6” x 15”, but not SSI EEB. Thus drilling extra holes for standoffs may be required.

Unlike the SR-X or Z9PE-D8 WS, the GA-7PESH1 supports two memory modules per channel for all channels on board. In terms of specifications this means support for up to 128 GB UDIMM (i.e. regular DDR3), 128 GB UDIMM ECC, and 512 GB RDIMM ECC. Due to the nature of the design, only 1066-1600 MHz is supported, but the GA-7PESH1 supports 1600 MHz when all slots are populated. For our testing, Kingston has kindly supplied us with 8x4GB of their 1600 C11 ECC memory.

As with the majority of server boards, stability and longevity are top priorities. This means no overclocking, and Gigabyte can safely place a six phase power delivery on each CPU. It also helps that all SB-E Xeons are multiplier locked and there is no word of unlocked CPUs being released any time soon. As we look at the board, standards dictate that the CPU on the right is designated as the first CPU. Each CPU has access to a single fan header, and specifications for coolers are fairly loose in both the x and the y directions, limited only by memory population and the max z-height of the case or chassis the board is being placed into. As with all dual CPU motherboards, each CPU needs its own power connector, and we find them at the top of the board behind the memory slots and at opposite ends. These connectors sit further from the sockets than on a normal motherboard; the priority seems to have been placing them at the edge of the board. In between the two CPU power connectors is a standard 24-pin ATX power connector.

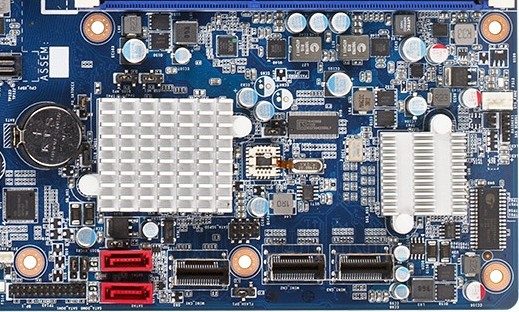

One of the main differences I note coming from a consumer motherboard orientation is the sheer number of available connectors and headers on such a server motherboard. For example, the SATA ports have to be enabled by moving the jumpers on the other side of the chipset. The chipset heatsink is small and basic – there is no need for a large heatsink as the general placement for such a board would be in a server environment where noise is not particularly an issue if there are plenty of Delta fans to help airflow.

On the bottom right of the board we get a pair of SATA ports and three mini-SAS connections. These are all perpendicular to the board, but are actually in the way of a second GPU being installed in a ‘normal’ motherboard way. Users wishing to use the second PCIe x8 slot on board may look into PCIe risers to avoid this situation. The heatsink on the right of this image covers up an LSI RAID chip, allowing the mSAS drives to be hardware RAIDed.

As per normal operation on a C602 DP board, the PCIe slots are taken from the PEG of one CPU. On some other boards, it is possible to interweave all the PCIe lanes from both CPUs, but it becomes difficult when organizing communication between the GPUs on different CPUs. From top to bottom we get an x8 (@x4), x16, x8 (@x4), x16 (@x8), x4(@x1). It seems odd to offer these longer slots at lower speed ratings, but all of the slots are Gen 3.0 capable except the x4(@x1). The lanes may have been held back to maintain data coherency.
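To make the slot layout easier to reason about, the following is a small sketch that tallies the lanes listed above. The struct and function names (`PcieSlot`, `electrical_lanes`, `slots_below_full_width`) are made up for illustration; only the slot widths and generations come from the description of the board.

```cpp
// Slot list transcribed from the description above, top to bottom.
// All names here are illustrative, not from any vendor tool.
struct PcieSlot {
    int mechanical;  // physical slot length, in lanes
    int electrical;  // lanes actually wired to the slot
    int generation;  // PCIe generation the slot runs at
};

const PcieSlot kBoardSlots[] = {
    {8, 4, 3},    // x8 (@x4)
    {16, 16, 3},  // x16
    {8, 4, 3},    // x8 (@x4)
    {16, 8, 3},   // x16 (@x8)
    {4, 1, 2},    // x4 (@x1), the only slot that is not Gen 3.0
};

// Sum the wired lanes across all slots of one PCIe generation.
int electrical_lanes(int generation) {
    int total = 0;
    for (const PcieSlot& slot : kBoardSlots) {
        if (slot.generation == generation) total += slot.electrical;
    }
    return total;
}

// Count the slots whose wiring is narrower than their physical length.
int slots_below_full_width() {
    int count = 0;
    for (const PcieSlot& slot : kBoardSlots) {
        if (slot.electrical < slot.mechanical) ++count;
    }
    return count;
}
```

With these numbers, `electrical_lanes(3)` gives 32 Gen 3.0 lanes across the four longer slots, and four of the five slots run below their mechanical width.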

To those unfamiliar with server boards, of note is the connector just to the right of center of the picture above. This is the equivalent of the front panel connection on an ATX motherboard. At almost double the width it has a lot more options, and where to put your cables is not printed on the PCB – like in the old days we get the manual out to see what is what.

On the far left we have an ASPEED AST2300 chip, which has multiple functions. On one hand it is an onboard 2D graphics chip which powers the VGA port via its ARM926EJ (ARM9) core at 400 MHz. On the other, it is an advanced PCIe graphics and remote management processor, supporting dual NICs, two COM ports, monitoring functions and embedded memory. Further round this section we get a removable BIOS chip, a COM header, diagnostic headers for internal functions, and a USB 2.0 header.

The rear IO is very bare compared to what we are normally used to. From left to right is a serial port, the VGA port, two gigabit Ethernet NICs (Intel I350), four USB 2.0 ports, the KVM server management port, and an ID Switch button for unit identification. There is no audio here, no power/reset buttons, and no two-digit debug LED. It made for some rather entertaining/hair removing scenarios when things did not go smoothly during testing.

Board Features

| Gigabyte GA-7PESH1 | |
|---|---|
| Price | Contact: 17358 Railroad St. City of Industry CA 91748 +1-626-854-9338 |
| Size | SSI EEB |
| CPU Interface | LGA 2011 |
| Chipset | Intel C602 |
| Memory Slots | Sixteen DDR3 DIMM slots supporting: 128GB (UDIMM) @ 1.5V; 512GB (RDIMM) @ 1.5V; 128GB DDR3L @ 1.35V; Quad Channel Architecture; ECC RDIMM for 800-1600 MHz; Non-ECC UDIMM for 800-1600 MHz |
| Video Outputs | VGA via ASPEED 2300 |
| Onboard LAN | 2 x Intel I350 supporting up to 1000 Mbps |
| Onboard Audio | None |
| Expansion Slots | 1 x PCIe 3.0 x16; 1 x PCIe 3.0 x16 (@ x8); 2 x PCIe 3.0 x8 (@ x4); 1 x PCIe 2.0 x4 (@ x1) |
| Onboard SATA/RAID | 2 x SATA 6 Gbps, Supporting RAID 0,1; 2 x mini-SAS 6 Gbps, Supporting RAID 0,1; 1 x mini-SAS 3 Gbps, Supporting RAID 0,1 |
| USB | 6 x USB 2.0 (Chipset) [4 back panel, 2 onboard] |
| Onboard | 2 x SATA 6 Gbps; 2 x mSAS 6 Gbps; 1 x mSAS 3 Gbps; 1 x USB 2.0 Header; 4 x Fan Headers; 1 x PSMI header; 1 x TPM header; 1 x SKU KEY header |
| Power Connectors | 1 x 24-pin ATX Power Connector; 2 x 8-pin CPU Power Connector |
| Fan Headers | 2 x CPU (4-pin); 2 x SYS (4-pin, 3-pin) |
| IO Panel | 1 x Serial Port; 1 x VGA; 2 x Intel I350 NIC; 4 x USB 2.0; 1 x KVM NIC; 1 x ID Switch |
| Warranty Period | Refer to Sales |
| Product Page | Link |

Without having a direct competitor to this board on hand, there is little we can compare such a motherboard to. At this level, having server grade Intel NICs should be standard, and this board can take 8GB non-ECC memory sticks or 32GB ECC memory sticks, for a maximum of 512 GB. If your matrix solvers are yearning for memory, then this motherboard can support it.
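The capacity figures follow directly from the slot count. As a quick sanity check, here is a sketch of the arithmetic, assuming two sockets with four memory channels each and two DIMMs per channel (which gives the sixteen slots in the table). The constant and function names are illustrative only.

```cpp
// Memory topology as described in the review; names are illustrative.
constexpr int kSockets = 2;            // dual processor board
constexpr int kChannelsPerSocket = 4;  // quad channel architecture
constexpr int kDimmsPerChannel = 2;    // two modules per channel
constexpr int kDimmSlots = kSockets * kChannelsPerSocket * kDimmsPerChannel;

// Maximum capacity in GB when every slot holds a module of the given size.
constexpr int max_capacity_gb(int module_gb) {
    return kDimmSlots * module_gb;
}

static_assert(kDimmSlots == 16, "sixteen DIMM slots on the board");
static_assert(max_capacity_gb(8) == 128, "8GB UDIMMs give 128 GB");
static_assert(max_capacity_gb(32) == 512, "32GB RDIMMs give 512 GB");
```

The checks run at compile time, so the file only builds if the 128 GB and 512 GB figures are consistent with sixteen populated slots.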

64 Comments


toyotabedzrock - Saturday, January 5, 2013 - link

There is a large number of very smart people on Google+. You really should come join us.

JlHADJOE - Tuesday, January 8, 2013 - link

Of course there are lots of smart people on G+! You're all google employees right? =P

Activate: AMD - Saturday, January 5, 2013 - link

As a fellow chemist, I must say that you have to be some kind of nut to want to do computational/physical chemistry. If you need me, I'll be at the bench!

Good article too!

engrpiman - Saturday, January 5, 2013 - link

I didn't read the article in full but what I did read was top notch. I found your simulations and mathematics very interesting. I took a Physics class which was focused on writing code to run mathematical simulations. Using the given Java lib, I wrote my own code to calculate PI. When I returned from the gym the program had calculated 3.1. I then re-wrote the program from scratch, ditched the built-in libs, and reran. I had 20 decimals in 30 sec; it was an epic improvement.

All in all I think your article could be very useful to me.

Thanks for writing.

SodaAnt - Saturday, January 5, 2013 - link

THIS is why I read Anandtech. I'll admit that I wasn't quite in the mood to read all the equations (I'll have to do that later), but really, these kind of reviews make my day.

Cardio - Saturday, January 5, 2013 - link

Wonderful review...as always. Thanks

Hakon - Saturday, January 5, 2013 - link

Hi Ian. Thanks for the nice article. I have one suggestion regarding the explicit finite difference code:

You could try to reorder the loops such that the memory access is more cache friendly. Right now 'pos' is incremented by NX (or even NX*NY in 3D) which will generate a lot of cache misses for large grids. If you switch the x and the y loop (in the 2D case) this can be avoided.

IanCutress - Saturday, January 5, 2013 - link

Either way I order the loops, each point has to read one up, one down, one left and one right. My current code tries to keep three as consecutive reads and jump once, keeping the old jump in local memory. If I adjusted the loops, I could keep the one dimension in local memory, but I'd have to jump outside twice (both likely cache misses) to get the other data. I couldn't cache those two values as I never use them again in the loop iteration.

When I did this code on the GPU, one method was to load an XY block into memory and iterate in the Z-dimension, meaning that each thread per loop iteration only loaded one element, with a few of them loading another for the halo, but all cache aligned.

I hope that makes sense :)

Ian

Hakon - Saturday, January 5, 2013 - link

Yes, but when you access the array 'cA' at 'pos' the CPU will fetch the entire cache line (64 bytes in case of your machine, i.e. 16 floats) of the corresponding memory address into the CPU cache. That means that subsequent accesses to say 'pos + 1', 'pos + 2' and so on will be served by the cache. Accessing an array in such a sequential manner is therefore fast.

However, when you access an array in a nasty way, e.g. 'NX + x' -> '2*NX + x', -> '3*NX + x', then each such access implies a trip to main memory if NX happens to be large.

That you need to move up / down and sideways in memory does not matter. When you write down the accesses of the code with the reordered loops you will notice that they just access three "lines" in memory in a cache friendly way.

Not reusing the old values of the last iteration should not affect performance in a measurable way. Even if the compiler fails to see this optimization, the accesses will be served by the L1 cache.
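As a rough sketch of the reordered loops (not the article's actual code), assume a five-point explicit diffusion stencil on an NX by NY grid stored row-major in a 1D array. The names `step_2d`, `cOld`, `cNew` and `lambda` are made up for illustration. The inner loop runs over x, so 'pos' advances by one float at a time and the three rows being read stream through the cache.

```cpp
#include <cstddef>
#include <vector>

// One explicit finite-difference step on a row-major NX x NY grid.
// Hypothetical sketch: the stencil and all names are assumptions.
void step_2d(const std::vector<float>& cOld, std::vector<float>& cNew,
             std::size_t NX, std::size_t NY, float lambda) {
    // Outer loop over rows: each iteration moves 'pos' by a stride of NX.
    for (std::size_t y = 1; y + 1 < NY; ++y) {
        // Inner loop over columns: unit stride, so consecutive accesses
        // fall in the same or the next 64-byte cache line (16 floats).
        for (std::size_t x = 1; x + 1 < NX; ++x) {
            const std::size_t pos = y * NX + x;
            cNew[pos] = cOld[pos]
                      + lambda * (cOld[pos - 1] + cOld[pos + 1]      // left, right
                                + cOld[pos - NX] + cOld[pos + NX]    // up, down
                                - 4.0f * cOld[pos]);
        }
    }
}
```

Boundary points are left untouched here. Swapping the two loops would compute the same result, but 'pos' would then jump by NX on every inner iteration, which is the access pattern that causes trips to main memory on large grids.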

Btw, did you allocate the array having NUMA in mind, i.e. did you initialize your memory in an OpenMP loop with the same access pattern as used in the algorithms? I am a bit surprised by the bad performance of your dual Xeon system.
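To illustrate what I mean by NUMA-aware initialization, here is a minimal sketch. It assumes an operating system with a first-touch page placement policy (the default on Linux), where a page is placed on the memory node of the thread that first writes to it. The function name `allocate_first_touch` is hypothetical.

```cpp
#include <cstddef>
#include <memory>

// Allocate a row-major nx x ny grid and initialize it in parallel.
// Hypothetical sketch; the benefit depends on the OS placing pages on first touch.
std::unique_ptr<float[]> allocate_first_touch(std::size_t nx, std::size_t ny,
                                              float value) {
    // 'new float[n]' without '()' leaves the elements uninitialized,
    // so no thread has written to the pages yet.
    std::unique_ptr<float[]> grid(new float[nx * ny]);

    const std::ptrdiff_t rows = static_cast<std::ptrdiff_t>(ny);
    // Static scheduling gives each thread a fixed, contiguous block of rows.
    // If OpenMP is not enabled, the pragma is ignored and the loop runs serially.
    #pragma omp parallel for schedule(static)
    for (std::ptrdiff_t y = 0; y < rows; ++y) {
        for (std::size_t x = 0; x < nx; ++x) {
            grid[static_cast<std::size_t>(y) * nx + x] = value;
        }
    }
    return grid;
}
```

If the compute loops then use the same `schedule(static)` split over rows, each thread mostly works on rows it initialized itself, which should keep more of the traffic on the local memory controller of each socket.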

IanCutress - Saturday, January 5, 2013 - link

Memory was allocated via the new command as it is 1D. When using a 2D array the program was much slower. I was unaware you could allocate memory in an OpenMP way, which thinking about it could make the 2D array quicker. I also tried writing the code using the PPL and lambdas, but that was also slower than a simple OpenMP loop.

I'm coming at these algorithms from the point of view of a non-CompSci interested in hardware, and the others in the research group were chemists content to write single threaded code on multi-core machines. Transferring the OpenMP variations of that code from a 1P to a 2P, as the results show, gives variable results depending on the algorithm.

There are always ways to improve the efficiency of the code (and many ways to make it unreadable), but for a large part moving to the 2P system all depends on how your code performs. Please understand that my examples are within the limits of my knowledge and representative of the research I did :) I know that SSE2/SSE4/AVX would probably help, but I have never looked into those. More often than not, these environments are all about research throughput, so rather than spend a few weeks to improve efficiency by 10% (or less), they'd rather spend that money getting a faster system which theoretically increases the same code throughput 100%.

I'll have a look at switching the loops if I write an article similar to this in the future :)

Ian