The ARM vs x86 Wars Have Begun: In-Depth Power Analysis of Atom, Krait & Cortex A15

by Anand Lal Shimpi on January 4, 2013 7:32 AM EST. Posted in: Tablets, Intel, Samsung, Arm, Cortex A15, Smartphones, Mobile, SoCs

Modifying a Krait Platform: More Complicated

Modifying the Dell XPS 10 is a little more difficult than modifying Acer's W510 or Surface RT. In both of those products there was only a single inductor in the path from the battery to the CPU block of the SoC. The XPS 10, however, uses a dual-core Qualcomm solution. Ever since Qualcomm started doing multi-core designs it has opted to use independent frequency and voltage planes for each core. While all of the A9s in Tegra 3 and both of the Atom cores used in the Z2760 run at the same frequency/voltage, each Krait core in the APQ8060A can run at its own voltage and frequency. As a result, two power delivery circuits are needed to feed the CPU cores. I've highlighted in orange the two inductors Intel lifted:

Each inductor was lifted and wired with a 20 mΩ resistor in series. The voltage drop across the 20 mΩ resistor was measured and used to calculate CPU core power consumption in real time. Unless otherwise stated, the graphs here represent the total power drawn by both CPU cores.
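To make the arithmetic concrete, here is a minimal sketch of how a shunt reading turns into a power figure. Only the 20 mΩ value comes from the setup described above. The function names, the sample voltages, and the assumption that the rail voltage is sampled alongside the drop are all hypothetical; the article doesn't say how the rail voltage itself was captured.

```python
SHUNT_OHMS = 0.020  # 20 mΩ sense resistor, the value quoted in the article


def rail_power_watts(v_drop, v_rail, r_shunt=SHUNT_OHMS):
    """Power delivered through one instrumented rail.

    v_drop: voltage measured across the sense resistor (volts)
    v_rail: voltage of the rail feeding the core (volts); assumed to be
            sampled at the same instant, which the article does not detail
    """
    current_amps = v_drop / r_shunt  # Ohm's law: I = V / R
    return current_amps * v_rail     # P = I * V


def cpu_power_watts(rail_samples):
    """Sum the per-core rails, as the article's combined CPU graphs do."""
    return sum(rail_power_watts(v_drop, v_rail) for v_drop, v_rail in rail_samples)


# Hypothetical readings, not measured data: (drop across shunt, rail voltage)
core0 = (0.010, 1.10)  # 10 mV drop -> 0.5 A at 1.10 V
core1 = (0.004, 0.90)  # 4 mV drop  -> 0.2 A at 0.90 V

total = cpu_power_watts([core0, core1])
print(f"core0: {rail_power_watts(*core0):.2f} W")
print(f"core1: {rail_power_watts(*core1):.2f} W")
print(f"total: {total:.2f} W")
```

A small resistor value keeps the measurement from disturbing the rail much: at 0.5 A the 20 mΩ shunt drops only 10 mV.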

Unfortunately, that's not all that's necessary to accurately measure Qualcomm CPU power. If you remember back to our original Krait architecture article you'll know that Qualcomm puts its L2 cache on a separate voltage and frequency plane. While the CPU cores in this case can run at up to 1.5GHz, the L2 cache tops out at 1.3GHz. I remembered this little fact late in the testing process, and we haven't yet found the power delivery circuit responsible for Krait's L2 cache. As a result, the CPU-specific numbers for Qualcomm exclude any power consumed by the L2 cache. The total platform power numbers do include it, however, as they are measured at the battery.

The larger inductor in yellow feeds the GPU and it's instrumented using another 20 mΩ resistor.

Visualizing Krait's Multiple Power/Frequency Domains

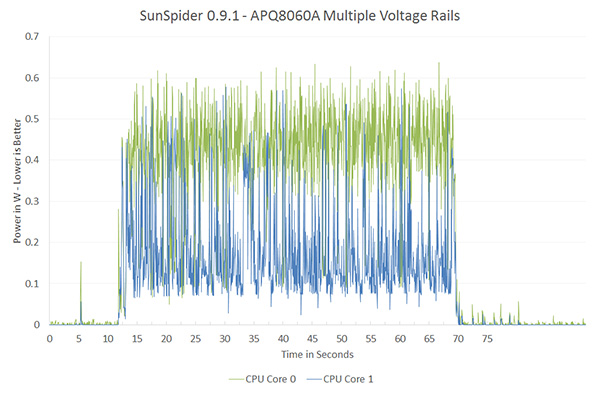

Qualcomm remains adamant about its asynchronous clocking with multiple voltage planes. The graph below shows power draw broken down by each core while running SunSpider:

SunSpider is a great benchmark to showcase exactly why Qualcomm has each core running on its own power/frequency plane. For a mixed workload like this, the second core isn't totally idle/power gated but it isn't exactly super active either. If both cores were tied to the same voltage/frequency, the second core would have higher leakage current than in this case. The counter argument would be that if you ran the second core at its max frequency as well it would be able to complete its task quicker and go to sleep, drawing little to no power. The second approach would require a very fast microcontroller to switch between v/f modes and it's unclear which of the two would offer better power savings. It's just nice to be able to visualize exactly why Qualcomm does what it does here.
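The trade-off described above can be sketched with a toy energy model. Everything here is an assumption made for illustration: the capacitance and leakage constants, the two operating points, the workload size, and the simplification that leakage power scales with V². None of it is measured Krait data, and the ranking it produces depends entirely on those made-up constants, so it does not settle which approach saves more power.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperatingPoint:
    freq_hz: float
    volts: float


# Hypothetical constants for a toy model, not Krait characteristics.
C_EFF = 1.0e-9  # effective switched capacitance (farads)
LEAK_S = 0.05   # leakage modeled as a conductance: P_leak = LEAK_S * V^2


def core_energy_j(cycles, op, window_s, gated_when_idle):
    """Energy one core spends finishing `cycles` of work within `window_s`."""
    busy_s = cycles / op.freq_hz
    if busy_s > window_s:
        raise ValueError("work does not fit in the window at this frequency")
    # Dynamic power is C * V^2 * f, so energy over busy_s is C * V^2 * cycles.
    dynamic_j = C_EFF * op.volts ** 2 * cycles
    leak_w = LEAK_S * op.volts ** 2
    # A power-gated core stops leaking once its work is done.
    powered_s = busy_s if gated_when_idle else window_s
    return dynamic_j + leak_w * powered_s


HIGH = OperatingPoint(freq_hz=1.5e9, volts=1.2)  # point the busy core needs
LOW = OperatingPoint(freq_hz=0.5e9, volts=0.9)   # enough for the light core
LIGHT_WORK = 0.3e9  # cycles the second core must retire in the window
WINDOW_S = 1.0

# Second core on its own plane at LOW, never gated.
independent = core_energy_j(LIGHT_WORK, LOW, WINDOW_S, gated_when_idle=False)
# Second core forced to the shared HIGH point, never gated.
shared_idle = core_energy_j(LIGHT_WORK, HIGH, WINDOW_S, gated_when_idle=False)
# Second core at HIGH, finishes early and is power gated (race to sleep).
race_to_sleep = core_energy_j(LIGHT_WORK, HIGH, WINDOW_S, gated_when_idle=True)

for name, joules in [("independent plane", independent),
                     ("shared plane, idle", shared_idle),
                     ("shared plane, race to sleep", race_to_sleep)]:
    print(f"{name}: {joules:.4f} J")
```

The model ignores the cost and latency of switching v/f states and of entering and leaving power gating, which is exactly the part the paragraph above flags as uncertain.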

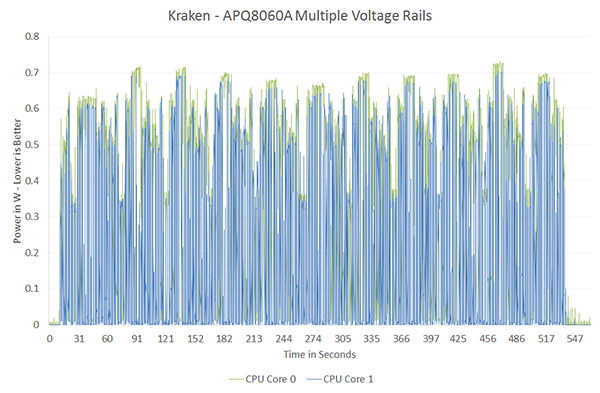

On the other end of the spectrum however is a benchmark like Kraken, where both cores are fairly active and the workload is balanced across both cores:

Here there's no real benefit to having two independent voltage/frequency planes; both cores would be served fine by running at the same voltage and frequency. Qualcomm would argue that the Kraken case is rare (single threaded performance still dominates most user experience), and the power savings in situations like SunSpider are what make asynchronous clocking worth it. This is a much bigger philosophical debate that would require far more than a couple of graphs to support and it's not one that I want to get into here. I suspect that given its current power management architecture, Qualcomm likely picked the best solution available for minimizing power consumption. It's more effort to manage multiple power/frequency domains, effort that I doubt Qualcomm would put in without seeing some benefit over the alternative. That being said, what works best for a Qualcomm SoC isn't necessarily what's best for a different architecture.

140 Comments


StrangerGuy - Sunday, March 24, 2013

You really think having a slightly better chip would make Samsung risk everything to get locked into a chip with an ISA owned, designed and manufactured by one single sole supplier? And when that supplier in question historically has shown all sorts of monopolistic abuses? And when a quad A7 could already scroll desktop sites in Android capped at 60 fps, additional performance provides very little real world advantage for most users. I'll even say most users would be annoyed by I/O bottlenecks like LTE speeds long before saying 2012+ class ARM CPUs are too slow.

duploxxx - Monday, January 7, 2013

Now it is clear that when Intel provides that much material and resources, they know they are at least OK in the comparison against ARM on CPU and power... else they wouldn't make such a fuss... but what about GPU? Is any decent benchmark or testing available on GPU performance?

I played in December with the HP Envy x2 and after some questioning they installed a few light games which were "ok", but I wonder how good the GPU in the Atom really is. Power consumption looks OK, but I prefer a performing GPU at a bit higher power over a non-performing one.

memosk - Tuesday, January 8, 2013

It looks like the old PowerPC vs. PC problem. PowerPC had a faster RISC processor and the PC had a slower x86-like processor.

The end result was that the classical PC won this battle, because of tradition and, more importantly, because you need knowledge of the platform across users, producers, programmers ...

And you should think about economic things like mortgages and the whole environment known as logistics.

The same problem was Tesla vs. Edison. Tesla had better ideas and Edison was a businessman. Who won? :)

memosk - Tuesday, January 8, 2013

Nokia seriously tried to sell Windows 8 phones without SD cards, and they said it was because of Microsoft. How can you then compete against Android with SD cards? But if you copy Apple, you think it has logic.

Generally, you need a complete, logical, OWN, CONSISTENT and ORIGINAL strategy.

If you copy something, there is a danger that your strategy will be inconsistent, leaky, "two way", vague, and also full of tragic errors like incompatibility, or like the legendary Siemens phones: 1 crash per day. :D

apozean - Friday, January 11, 2013

I studied the setup and it appears and Intel just wants to take on Nvidia's Tegra 3. Here are a couple of differences that I think are not highlighted appropriately:

1. They used an Android tablet for Atom, Android tablet for Krait, but a Win RT (Surface) for Tegra 3. It must have been very difficult to fund a Google Nexus 7. Keeping the same OS across the devices would have controlled for a lot of other variables. Wouldn't it?

2. Tegra 3 is the only quad core chip among chips being compared. Atom and Krait are dual-core. If all four cores are running, wouldn't it make a different to the idle power?

3. Tegra 3 is built on 40nm and is one of the first A9 SoCs. In contrast, Atom is 32nm and Krait is 28nm.

How does Tegra 3 fits in this setup?

apozean - Friday, January 11, 2013

Fixing typos...

I studied the setup and it appears that Intel just wants to take on Nvidia's Tegra 3. Here are a couple of differences that I think are not highlighted appropriately:

1. They used an Android tablet for Atom, Android tablet for Krait, but a Win RT (Surface) for Tegra 3. It must have been very difficult to fund a Google Nexus 7. Keeping the same OS across the devices would have controlled for a lot of other system variables. Wouldn't it?

2. Tegra 3 is the only quad core chip among chips being compared. Atom and Krait are dual-core. If all four cores are running, wouldn't it make a difference to the idle power?

3. Tegra 3 is built on 40nm and is one of the first A9 SoCs. In contrast, Atom is 32nm and Krait is 28nm.

How does Tegra 3 fit in this setup?

some_guy - Wednesday, January 16, 2013

I'm thinking this may be the beginning of Intel being commoditized and the end of the juicy margins for most of their sales.

I was just reading an article about how hedge funds love Intel. I don't see it, but that doesn't mean that the hedge funds would make money. Perhaps they know the earnings report that is coming out soon, maybe tomorrow, will be good. http://www.insidermonkey.com/blog/top-semiconducto...

some_guy - Wednesday, January 16, 2013

I meant to say "but that doesn't mean that the hedge funds won't make money."

raptorious - Wednesday, February 20, 2013

but Anand has no clue what the rails might actually be powering. How do we know that the "GPU Rail" is in fact just powering the GPU and not the entire uncore of the SoC? This article is completely biased towards Intel and lacks true engineering rigor.

EtTch - Tuesday, April 2, 2013

My take on all of this is that ARM and x86 are not comparable at this point when it comes to comparing the different instruction set architectures, due to the different lithography sizes and the new 3D transistors. Only when ARM-based SoCs finally have all the physical features of x86 will they be truly comparable. Right now x86 is most likely to have lower power consumption than ARM-based processors built on a larger lithography size. (I really don't know what it's called but I'll go out on a limb and call it lithography size even though I know that I am most likely wrong)