Understanding Camera Optics & Smartphone Camera Trends, A Presentation by Brian Klug

by Brian Klug on February 22, 2013 5:04 PM EST

Posted in: Smartphones, camera, Android, Mobile

Recently I was asked to give a presentation about smartphone imaging and optics at a small industry event, and given my background I was more than willing to oblige. I didn't craft this presentation around any particular product or announcement, but I thought it worth sharing beyond the event itself, especially in the recent context of the HTC One. The idea was to provide a high-level primer on both camera optics and general smartphone imaging trends, and to catalyze some discussion.

For readers here, I think this is a great primer on the state of smartphone cameras if you’re not paying super close attention to them, and on the imaging chain of a mobile device at a high level.

Some figures are from the incredibly useful (never leaves my side in book form or PDF form) Field Guide to Geometrical Optics by John Greivenkamp; a few others are my own or from OmniVision or Wikipedia. I've put the slides into a gallery and gone through them pretty much individually, but if you want the PDF version, you can find it here.

Smartphone Imaging

The first two slides are just background about myself and the site. I did my undergrad at the University of Arizona and obtained a bachelor's in Optical Sciences and Engineering on the Optoelectronics track. I worked at a few relevant places as an undergrad intern for a few years, and made some THz gradient index lenses at the end. I think it’s a reasonable expectation that everyone who is a reader is already familiar with AnandTech.

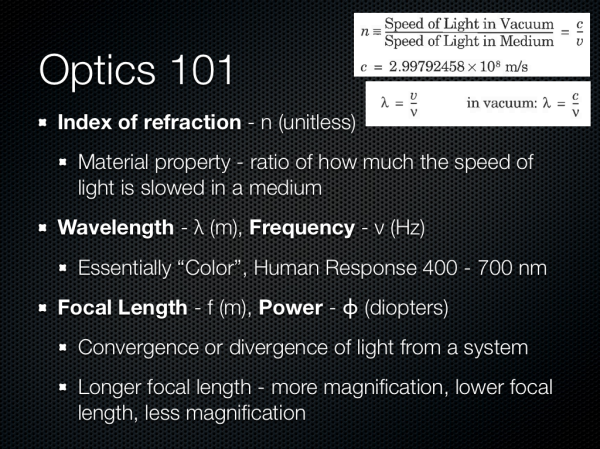

Next up are some definitions of optical terms. I think any discussion about cameras is impossible to have without at least introducing the index of refraction, wavelength, and optical power. I’m sticking very high level here. The index of refraction refers of course to how much the speed of light is slowed down in a medium compared to vacuum; this is important for understanding refraction. Wavelength is of course easiest to explain by mentioning color, and optical power refers to how quickly a system converges or diverges an incoming ray of light. I’m also playing fast and loose when talking about magnification here, but again in the camera context it’s easier to explain this way.
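As a rough illustration of two of these definitions, here is a minimal Python sketch. It assumes the textbook relations v = c / n for the speed of light in a medium and power = 1 / f (in diopters, with f in meters) for a thin lens in air. The function names and the example values (an index of 1.5 as a typical glass, a 4 mm focal length) are illustrative assumptions, not figures from the slides.

```python
# Speed of light in vacuum, in meters per second (exact by definition).
C_VACUUM_M_PER_S = 299_792_458.0


def speed_in_medium(index_of_refraction):
    """Speed of light in a medium with the given index: v = c / n."""
    return C_VACUUM_M_PER_S / index_of_refraction


def optical_power_diopters(focal_length_m):
    """Optical power of a thin lens in air: 1 / f, with f in meters."""
    return 1.0 / focal_length_m


if __name__ == "__main__":
    # Assumed example: n = 1.5, a typical value for optical glass.
    print(f"Speed of light in n=1.5 glass: {speed_in_medium(1.5):.3e} m/s")
    # Assumed example: a 4 mm focal length, in the range of phone camera lenses.
    print(f"Power of a 4 mm lens: {optical_power_diopters(0.004):.0f} diopters")
```

A shorter focal length means a higher optical power, which is one way to read "how quickly a system converges or diverges" light.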

Other good terms are F-number, the so-called F-word of optics. Most of the time in the context of cameras we’re talking about working F-number, and the simplest explanation here is that this refers to the light collection ability of an optical system. F-number is defined as the ratio of the focal length to the diameter of the entrance pupil. In addition, the normal progression for people who think about cameras is in steps of the square root of two (full stops), each of which changes the light collection by a factor of two. Finally we have optical format or image sensor format, which is generally given in the notation 1/x" in units of inches. This is the standard format for giving a sensor size, but it doesn’t have anything to do with the actual size of the image circle, and rather traces its roots back to the diameter of a vidicon glass tube. This should be thought of as analogous to the size class of a TV or monitor: exact dimensions change from manufacturer to manufacturer, but sensors of the same class are roughly the same size. Also, 1/2" would be a bigger sensor than 1/7".
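The F-number definition and the full-stop progression can be sketched in a few lines of Python. This is a minimal illustration, not anything from the slides: the function names are hypothetical, and it assumes the usual approximation that collected light scales with the inverse square of the F-number.

```python
import math


def f_number(focal_length_mm, entrance_pupil_diameter_mm):
    """F-number: focal length divided by entrance pupil diameter."""
    return focal_length_mm / entrance_pupil_diameter_mm


def relative_light(n_reference, n):
    """Light collected at F-number n relative to n_reference.

    Assumes light collection scales as 1 / N^2.
    """
    return (n_reference / n) ** 2


def full_stops(start=1.0, count=7):
    """F-numbers spaced one full stop apart (a factor of sqrt(2) each)."""
    return [start * math.sqrt(2) ** i for i in range(count)]


if __name__ == "__main__":
    # Assumed example: a 4 mm focal length with a 2 mm entrance pupil.
    print(f_number(4.0, 2.0))
    # One full stop slower than F/2 collects about half the light.
    print(relative_light(2.0, 2.0 * math.sqrt(2)))
    # The usual progression, rounded to two decimals.
    print([round(n, 2) for n in full_stops()])
```

Lens markings round these values (2.83 is written as F/2.8, 5.66 as F/5.6), which is why the familiar sequence looks slightly irregular.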

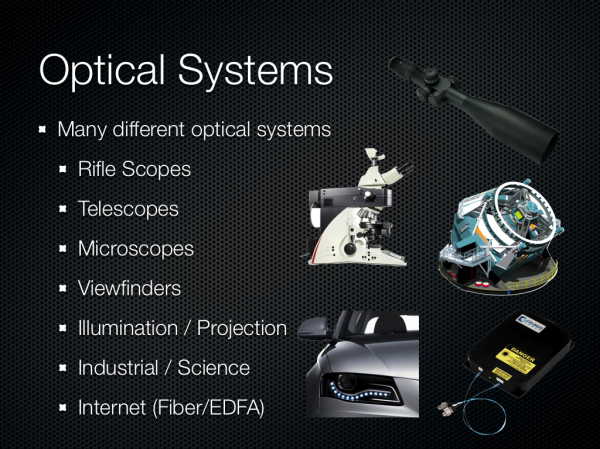

There are many different kinds of optical systems, and since I was originally asked just to talk about optics I wanted to underscore the broad variety of systems. Generally you can fit them into two different groups — those designed to be used with the eye, and those that aren’t. From there you get different categories based on application — projection, imaging, science, and so forth.

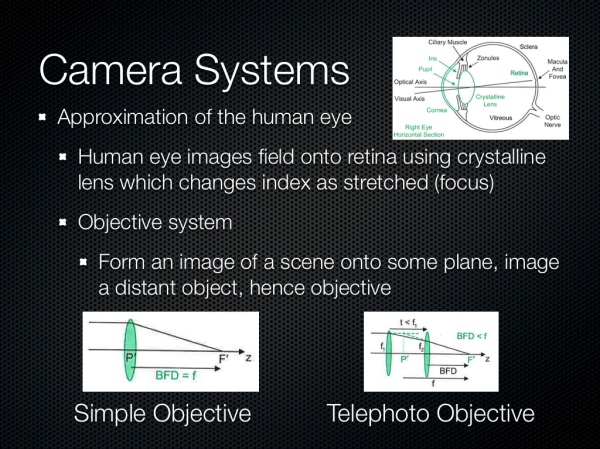

We’re talking about camera systems however, and thus objective systems. This is roughly an approximation of the human eye but instead of the retina the image is formed on a sensor of some kind. Cameras usually implement similar features to the eye as well – a focusing system, iris, then imaging plane.

60 Comments

MrSpadge - Sunday, February 24, 2013 - link

You're right, conceptually one would "only" need to adapt a multi-junction solar cell for spatial resolution, i.e. small pixels. This would introduce shadowing for the bottom layers similar to front side illumination again, though. Which might be countered with vias through the chips, at the cost of making manufacturing more expensive. And the materials and their processing become way more expensive in general, as they will be CMOS incompatible III-V composites.

And worst: one could only gain a 3 times higher light sensitivity at maximum, so currently it's probably not worth the effort.

mdar - Thursday, February 28, 2013 - link

I think you are talking about Foveon sensors, used by Sigma to make some of their DSLR cameras. Since photons of different colors have different energies, they use this principle to detect color. Not sure how they do it (probably by checking at which depth the electron is generated), but there is a lot of information on the web about it.

fuzzymath10 - Saturday, February 23, 2013 - link

one of the non-traditional imaging sensors around is the foveon x3 sensor. each pixel can sense all three primary colours rather than relying on bayer interpolation. it does have many limitations though.

evonitzer - Wednesday, February 27, 2013 - link

Yeah, like only being in Sigma cameras that use Sigma mounts. Who on earth buys those things? The results are stunning to see, but they need some, well, design wins, to use the parlance of cell phones.

They also need to make tiny sensors. AFAIK they only have the APS-C one, and those won't be showing up in phones anytime soon. :)

ShieTar - Tuesday, February 26, 2013 - link

The camera equivalent of a 3 LCD projector does exist, for example in so-called "Multi-Spectral Imager" instruments for space missions. The light entering the camera aperture is split into spectral bands by dichroic mirrors, and then imaged on a number of CCDs.

The problem with this approach is that it takes considerable engineering effort to make sure that all the CCDs are aligned to each other with sub-pixel accuracy. Of course the cost of multiple CCDs and the space demand for the more complex optical system make this option quite irrelevant for mobile devices.

nerd1 - Friday, February 22, 2013 - link

I wonder who actually tested the captured image using proper analysing software (Dxo for example) to see how much they ACTUALLY resolve?

And I don't think we get diffraction limit of 3um - see the chart here

http://egami.blog.so-net.ne.jp/2011-07-11

We have 1.34um at f2.0 and 1.88um at f2.8.

Typical 8MP sensor have 1.4um photosites so 8MP sensors looks like an ideal spot for f2 optics. (Yes, 13MP @ 1.1um is just marketing gimmick I think)

In comparison, 36MP Nikon D800 has 4.9um photosite size, which is diffraction limited between f5.6 and f8.

jjj - Friday, February 22, 2013 - link

"smartphones are or are poised to begin displacing the role of a traditional point and shoot camera"

That started quite a while ago, so a rather disappointing "trends" section. Was waiting for some actual features, ways to get there, and more talk about video since it's becoming a lot more important.

Johnmcl7 - Friday, February 22, 2013 - link

Agreed, the presentation feels a bit out of date for current technology particularly as you say phone cameras have been displacing compact cameras for years - I'd say right back to the N95 which offered a decent 5MP AF camera and was released before the first iPhone.

I'm also surprised to see no mention of Nokia pretty much even though they've very much been pushing the camera limits, their ultra high resolution Pureview camera showed you could have a very high number of pixels and high image quality (which this article seems to claim isn't possible even with lower resolution devices) and the Lumia 920 is an interesting step forward in having a physical image stabilisation system.

Also with regards to shallow depth of field with F2, that's just not going to happen on a camera phone because depth of field is primarily a function of the actual focal length (not the equivalent focal length) so to get a proper shallow depth of field effect (as in not shooting at very close macro distances) a camera phone would need a massive aperture many stops wider than F2 to counter the very short focal length.

John

Tarwin - Saturday, February 23, 2013 - link

Actually it makes sense he doesn't mention all that. He's talking about trends, the Pureview did not fit into the trends, both in quality and sensor size.

Optical image stabilization doesn't fit in either as it only affects image quality in less than ideal situations such as no tripods/shaky hands. But he did mention the need for extra parts in the module configuration should that be part of the setup.

And in his defence of the comment of displacing P&S cameras, he says "smartphones are or are poised to begin displacing the role" so he's not saying that they aren't doing it already, he gives you a choice in perspectives. Also I don't think you can say the N95 displaced P&S at the market level, only in casual use.

Manabu - Friday, February 22, 2013 - link

What about the "large" sensor 41MP Nokia 808 phone? It is sure an interesting outlier.

And point & shoot cameras still have the advantage of optical zoom, better handling, and can have bigger sensors. Just look at S110, LX7 or RX100 cameras. But budget super-compact cameras are indeed in extinction.