The Great Equalizer: Apple, Android & Windows Tablet GPUs Compared using GL/DXBenchmark 2.7

by Anand Lal Shimpi on March 31, 2013 11:58 PM EST

For the past few years we have been lamenting the state of benchmarks for mobile platforms. The constant refrain from those who had been around long enough to remember when all PC benchmarks were terrible was to wait for the release of Windows 8 and RT. The release of those two OSes would bring many of the traditional PC benchmark vendors into the fray. While we're expecting to see new Android, iOS, Windows RT and Windows 8 benchmarks from Futuremark and Rightware, it's our old friends at Kishonti who are first out of the gate with a cross-OS/API/platform benchmark. GLBenchmark has existed on both Android and iOS for a while now, but we're finally able to share information and performance data using DXBenchmark - GLB's analogue for Windows RT/8.

As the name difference implies, DXBenchmark uses Microsoft's DirectX API while GLBenchmark relies on OpenGL ES. The API difference alone makes true cross-platform comparison difficult, especially since we're comparing across APIs, OSes and hardware - but we at least have the option to get a rough idea of how these platforms stack up to one another. The ARM based SoC vendors are expected to deliver a lot of platform optimization improvements with Windows Blue later this year, so I wouldn't read too much into any of the Android vs. Windows RT comparisons of the same hardware (even though some key results end up being very close).

While GLBenchmark 2.7 doesn't yet take advantage of OpenGL ES 3.0 (GLB 3.0 will deliver that), it does significantly update the tests to recalibrate performance given the advances in modern hardware. Version 2.7 ditches classic, keeps Egypt HD and adds a new test, T-Rex HD, featuring a dinosaur in pursuit of a girl on a dirt bike:

Scene complexity goes up tremendously with the T-Rex HD benchmark. GLBenchmark has historically been more computationally bound than limited by memory bandwidth. The transition to T-Rex HD as the new flagship test continues the trend. While we see scaling in average geometry complexity, depth complexity and average memory bandwidth requirements, it's really in the shader instruction count that we see the biggest increase in complexity:

| GL/DXBenchmark 2.7: T-Rex HD Compared to Egypt HD Benchmark | Increase in T-Rex HD |
| --- | --- |
| Average Geometry Complexity | +55% |
| Average Depth Complexity | +41% |
| Average Texture Memory Bandwidth Requirements | +41% |
| Average Shader Instruction Count | +165% |

T-Rex HD should benefit from added memory bandwidth, but increases in raw compute performance will be most visible. Given the comparatively static nature of memory bandwidth improvements, scaling shader instruction count to increase complexity makes sense.
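To make the percentages in the table easier to reason about, each increase can be converted into a scale factor. The short sketch below does only that arithmetic; the dictionary keys and the `to_multiplier` helper are names chosen for illustration, and the final ratio is just one rough way to express how much more the new test leans on shader work than on texture bandwidth.

```python
# Percentage increases of T-Rex HD over Egypt HD, copied from the table above.
INCREASES_PCT = {
    "geometry_complexity": 55,
    "depth_complexity": 41,
    "texture_memory_bandwidth": 41,
    "shader_instruction_count": 165,
}


def to_multiplier(increase_pct):
    """Convert a percentage increase into a scale factor (+55% -> 1.55x)."""
    return 1 + increase_pct / 100


multipliers = {name: to_multiplier(pct) for name, pct in INCREASES_PCT.items()}

# How much faster shader work grew than texture bandwidth demand.
compute_vs_bandwidth = (
    multipliers["shader_instruction_count"]
    / multipliers["texture_memory_bandwidth"]
)

for name, factor in multipliers.items():
    print(f"{name}: {factor:.2f}x")
print(f"shader growth relative to bandwidth growth: {compute_vs_bandwidth:.2f}x")
```

In other words, +165% means T-Rex HD averages about 2.65x the shader instructions of Egypt HD, while bandwidth demand grows by only about 1.41x.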

Just as before, both GL and DXBenchmark 2.7 can run in onscreen (native resolution, v-sync enabled) and offscreen (1080p, v-sync disabled) modes.
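The practical effect of the two modes can be sketched with a very simple model. The function below assumes that v-sync does nothing more than clamp the reported frame rate to the display refresh rate, and that the panel refreshes at 60 Hz; both are assumptions for illustration, not details of how GL/DXBenchmark measures. It also ignores the resolution difference between native and 1080p rendering, which changes the raw frame rate itself.

```python
def reported_fps(raw_fps, vsync, refresh_hz=60.0):
    """Frame rate a run would report under a simple v-sync model.

    Assumption: v-sync only clamps the result to the display refresh rate.
    Real drivers may also quantize frame times or use triple buffering.
    """
    if vsync:
        return min(raw_fps, refresh_hz)
    return raw_fps


# Hypothetical raw frame rates, not measured results.
for raw in (16.0, 45.0, 140.0):
    onscreen = reported_fps(raw, vsync=True)
    offscreen = reported_fps(raw, vsync=False)
    print(f"raw {raw:6.1f} fps -> onscreen {onscreen:6.1f}, offscreen {offscreen:6.1f}")
```

This is why the offscreen numbers are the ones to use when comparing fast GPUs: under this model, any platform that can exceed the refresh rate reports the same onscreen score.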

The Android and iOS versions retain the UI of their predecessors, while DXBenchmark 2.7 introduces a Windows RT/8 flavored take on the UI:

There will be a unified database of scores across both GL and DXBenchmark once the latter gets enough submissions.

The low level tests are comparable between GLBenchmark 2.5 and 2.7; only results from the new T-Rex HD benchmark can't be compared to anything GLBenchmark 2.5 produced (for obvious reasons).

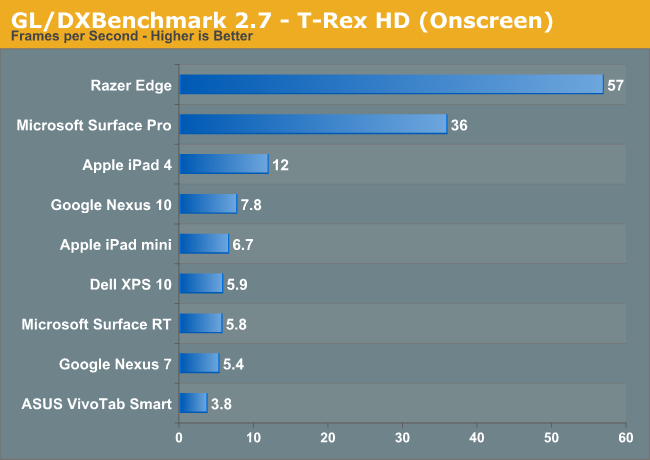

Now time for the exciting part. The usual suspects from the iOS and Android worlds are present; I didn't include anything slower than a Tegra 3 given how low T3 scores in the T-Rex HD test. From the Windows RT camp we've got Microsoft's Surface RT (Tegra 3) and Dell's XPS 10 (APQ8060A/Adreno 225). The sole 32-bit Windows 8 Pro representative is ASUS' VivoTab Smart (Atom Z2560/PowerVR SGX 545). Finally, running Windows 8 Pro (x64) we have Microsoft's Surface Pro (Core i5-3317U/HD 4000) and the Razer Edge (Core i7-3517U/GeForce GT 640M LE).

As always, we'll start with the low level results and move our way over to the scene tests:

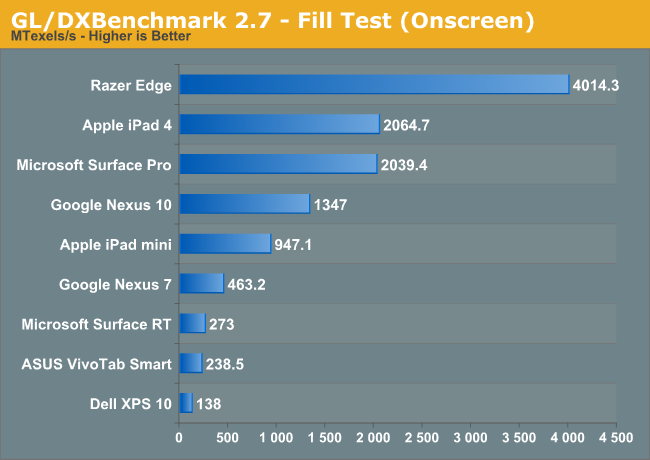

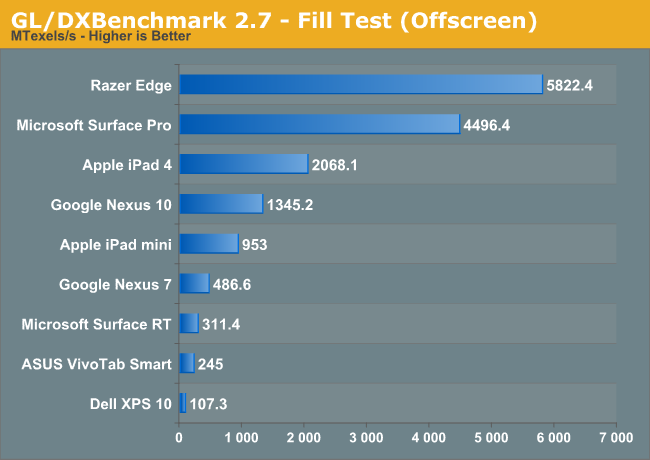

Looking at the fill rate tests, we have the first indication of how Intel's HD 4000 graphics compares to the best in the tablet space. Unconstrained, Surface Pro delivers a fill rate of over 2x that of the 4th generation iPad. NVIDIA's GeForce GT 640M LE delivers nearly 3x the fill rate of the iPad 4.

The Mali-T604 in Google's Nexus 10 finds itself in between the iPad 4 and iPad mini, while Tegra 3 ends up faster than both the Clover Trail and Qualcomm Windows RT platforms. It's interesting to note the big difference in fill rate between the Nexus 7 (Android/Tegra 3) and Surface RT (Windows RT/Tegra 3). You would think that driver maturity would be better on Windows for NVIDIA, but assuming this isn't some big API difference it could very well be that Tegra 3 on Android is more mature.

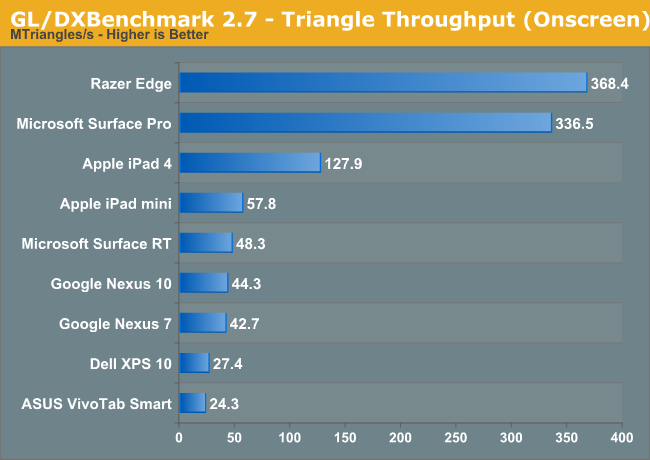

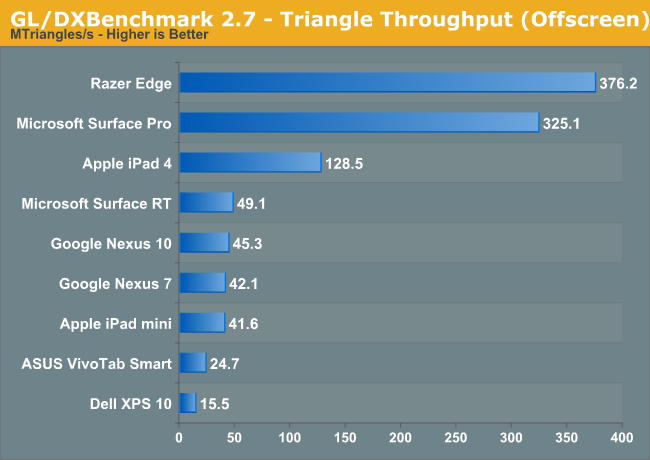

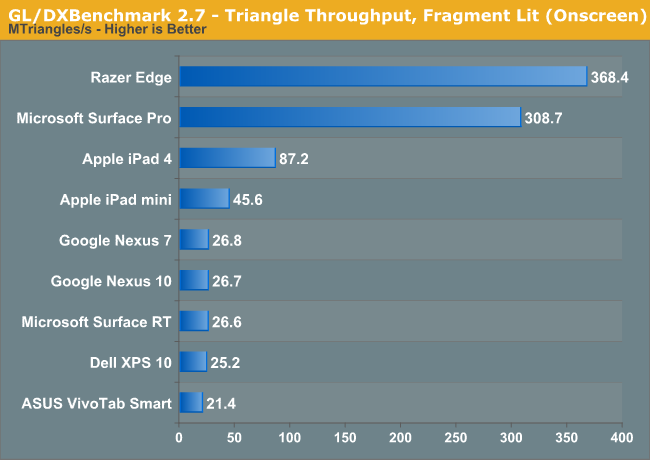

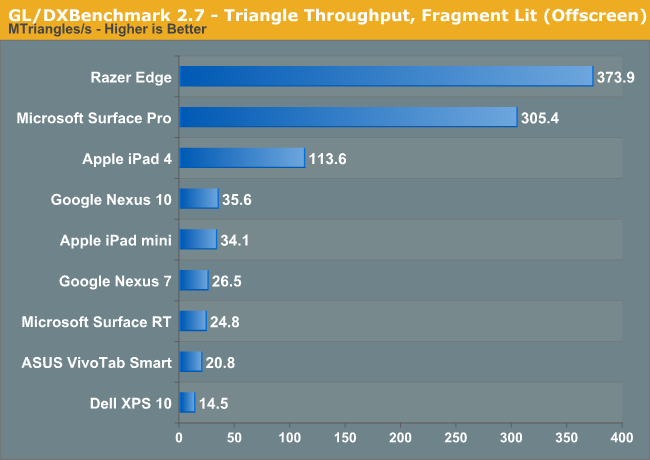

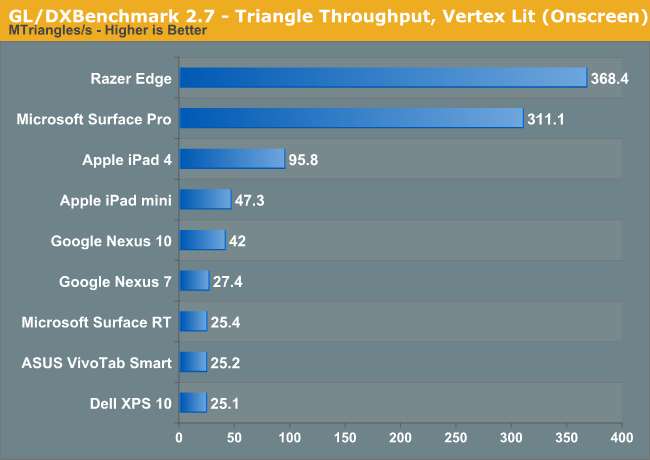

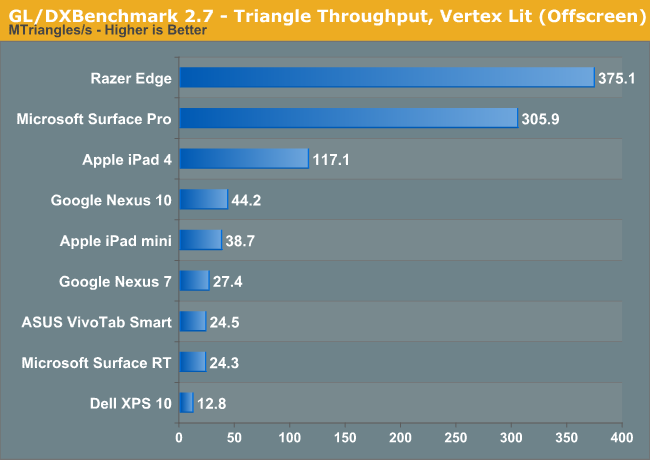

The gap in geometry performance between Intel's HD 4000 and Imagination Tech's PowerVR SGX 554MP4 grows to over 2.5x. Surface RT and the Nexus 7 switch positions, and grow a lot closer than they were in the fill rate test. Qualcomm's Windows RT platform remains at the bottom of the list, and Intel's Clover Trail remains disappointing in the graphics department.

Increase the complexity of the triangle test and things don't change all too much.

Moving on to the scene tests, we have the first look at the current landscape of T-Rex HD performance on tablets. When Egypt HD first came out, the best SoCs were barely able to break 20 fps with the majority of platforms delivering less than 13 fps. In the 8 months since the release of GLBenchmark 2.5, the high end bar has moved up considerably. The best tablet SoCs can now deliver more than 40 fps in Egypt HD, with even the latest smartphone platforms hitting 30 fps. T-Rex HD hits the reset button, with the fastest ARM based SoCs topping out at 16 fps.
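The frame rates quoted above are easier to compare as per-frame render times. The conversion is simple arithmetic; the `frame_time_ms` helper name is just for illustration.

```python
def frame_time_ms(fps):
    """Average time spent on one frame, in milliseconds."""
    return 1000.0 / fps


# Frame rates mentioned in the paragraph above.
for label, fps in [
    ("Egypt HD at launch, best SoCs", 20),
    ("Egypt HD today, best tablet SoCs", 40),
    ("Egypt HD today, latest smartphones", 30),
    ("T-Rex HD, fastest ARM based SoCs", 16),
]:
    print(f"{label}: {fps} fps = {frame_time_ms(fps):.1f} ms per frame")
```

At 16 fps the fastest ARM based SoCs spend 62.5 ms on each T-Rex HD frame, compared with 25 ms per frame at 40 fps in Egypt HD.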

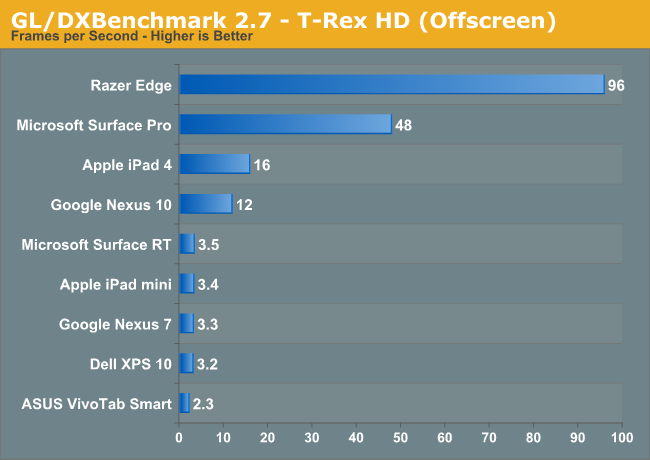

Looking at the offscreen results, we finally get what we came here for. Intel's HD 4000 manages to deliver 3x the performance of the PowerVR SGX 554MP4, obviously at a much higher power consumption level as well. The Ivy Bridge CPU used in Surface Pro carries a 17W TDP, and it's likely that the A6X used in the iPad 4 is somewhere south of 5W. The big question here is how quickly Intel can scale its power down vs. how quickly the ARM guys can scale their performance up. Claiming ARM (and its partners) can't build high performance hardware is just as inaccurate as saying that Intel can't build low power hardware. Both camps simply chose different optimization points on the power/performance curve, and both are presently working towards building what they don't have. The real debate isn't whether or not each side is capable of being faster or lower power, but which side will get there first, reliably and with a good business model.
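A rough way to frame the power/performance tradeoff is to divide the performance ratio by the power ratio. The sketch below uses only the figures from the paragraph above, and it rests on loud assumptions: TDP is treated as if it were actual power draw for the whole chip, and 5W is used as a stand-in upper bound for the A6X, which the text only estimates as "south of 5W". The function name is hypothetical and the result is an illustration, not a measurement.

```python
def relative_perf_per_watt(perf_ratio, power_a_watts, power_b_watts):
    """Perf/W of platform A relative to platform B.

    perf_ratio is A's performance divided by B's performance.
    """
    return perf_ratio / (power_a_watts / power_b_watts)


# Figures from the text: HD 4000 at ~3x the SGX 554MP4, a 17 W TDP for the
# Ivy Bridge part, and an assumed 5 W ceiling for the A6X.
ratio = relative_perf_per_watt(perf_ratio=3.0, power_a_watts=17.0, power_b_watts=5.0)
print(f"Surface Pro perf/W relative to iPad 4: {ratio:.2f}x")
```

Under these assumptions the two land within about 12% of each other on performance per watt. If the A6X actually draws less than 5W, the balance shifts further toward the iPad 4.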

To put these results in perspective, the GPU in the Xbox 360 still has around 3x the compute power of what's in the iPad 4. We're getting closer to having current (soon to be previous) gen console performance in our ultra mobile devices, but it'll take another year or two to get there in the really low power devices. Surface Pro is already there.

The rest of the players here aren't that interesting. Everything from the Tegra 3 to the old A5 in the iPad mini performs fairly similarly when faced with the same display resolution (1080p). Despite standings in some of the lower level benchmarks, Qualcomm's aging APQ8060A platform in the Dell XPS 10 (Windows RT) manages a healthy performance advantage over Intel's Atom Z2560, although neither is a particularly exciting part from a graphics performance standpoint. It's interesting to note just how close Surface RT and the Nexus 7 are here, given that they are running different OSes and using different APIs but are powered by the same Tegra 3 SoC.

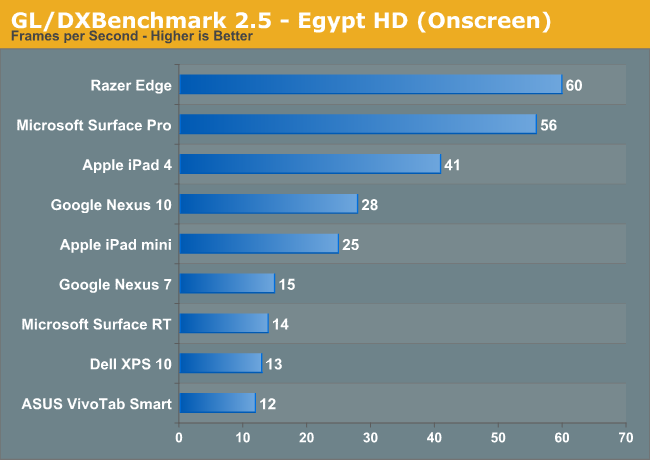

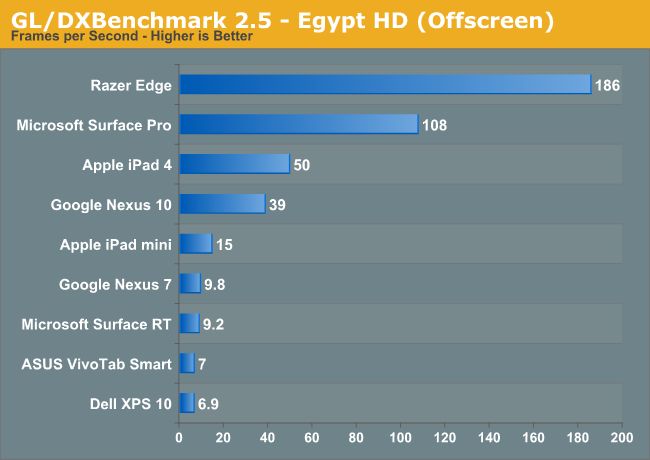

The only other scene test we have is Egypt HD, which is a known quantity these days. The only new bits are the inclusion of the Windows players using DXBenchmark:

Everyone's performance looks a lot better under the Egypt HD test, which is of course the motivation for creating the T-Rex HD test. Also interesting to note is that the Apple/Intel gap shrinks a bit here; now the advantage is only 2x. It's important to put all of this in perspective. If your ultimate goal is to be able to run a shader heavy workload like T-Rex HD, then most of the tablet platforms have a long way to go. If Epic's Citadel demo release on Android is any indication however, there's a lot that can be done even with the mainstream level of performance available on smartphones and tablets today. Identifying and delivering the best performance at whatever that sweet spot may be is really the name of the game here, and it's one that the ARM folks have done a great job of playing.

I'm very curious to see how these graphs change over the next two years. I don't suspect Haswell will shift peak platform power down low enough to really be viewed as an alternative to something like an iPad, but with Broadwell (2014) and Skylake (2015) that may be a possibility. The fact that these charts are even as close as they are, spanning 7-inch tablets all the way up to full blown PC hardware, is an impressive statement on the impact of the mobile revolution.


83 Comments


Th-z - Monday, April 1, 2013 - link

Hmm, by definition since this is a benchmark program not an actual game or program, everything about it is synthetic, including the real time rendering part -- the "benchmark demo" you referred to. I think what you meant are the individual theoretical tests. Imo they are still useful for research and comparison purposes, if they are accurate.

In normal AMD/NVIDIA GPU reviews because we have so many actual games and applications that use the same platform we can exclude synthetic benchmark programs, but we don't have that luxury in mobile space. If a benchmark program is really good that reflects well in the real world, it's still a good data point to consider. In server people use a lot of synthetic benchmark programs.

shompa - Monday, April 1, 2013 - link

It's amazing how fast A6X is with a 3+ year old GPU. Imagine how fast PowerVR Rogue will be. With a bit of luck we will see that in A7. Chance to beat Nvidia 640M.

Also remember that Intel/Nvidia parts are 22/28 nm parts while A6X is 32 nm. Just doing ARM on 28 nm with SOI makes it possible to clock Cortex-A9 at 3 GHz.

I therefore disagree with the article. Intel can't compete on power. 1) The X86 stuff makes the dies always 1/3 bigger. 2) CISC has always been slower than RISC. 3) Intel uses 64 bit extensions. Remember that X64 is slower than X32 by 3%! On RISC, 64 bit has always been much faster than 32 bit. Just look at history. Since 2006 Intel has increased desktop speeds by about 130%. At the same time ARM is 1700% faster.

And people should be happy about it. No more 1000 dollar Intel CPUs. Instead ARM licensed SoCs where ARM gets 6-10 cents per core. Intel can therefore never compete on price. An ARM SoC costs 15 dollars to produce. Intel can't sustain its leading edge in fabs on that revenue.

hobagman - Monday, April 1, 2013 - link

Erm, yea, when an ARM beats Intel on performance, then Intel will have a serious problem. But at this point, performance is so closely tied to transistor improvements that Intel is the one with the advantage. They are 2-4 years ahead in process technology and they are already developing the 10 nm node. And the reason why ARM's performance looks like it's come up faster is just because it was so much slower to begin with. They're hitting the power wall now, just like Intel, and that's why you see all the smartphones with 2-4 cores.

cjb110 - Monday, April 1, 2013 - link

Urm, what? Assuming you're right about the x86 die size, Intel have a 2y lead in FAB tech, and have been at least one process node ahead of pretty much everyone else.

As for the history, well that's just a daft metric, given that in 2006 Intel had just introduced the Core 2, which can equal current ARM for performance...all your percentage shows is that ARM was damn slow in 2006, and is still pretty slow now.

As AnandTech have been saying for a while, in some senses it's a race: ARM to increase performance 4x-6x, and Intel to reduce power 2x-3x (without sacrificing performance).

However one issue is that Intel might not decide to go all the way down to properly compete in the phone space...if they stop at tablet, then the power budget is much greater. Also ARM can't ignore the phone space, so its race for performance can't increase its power either, otherwise it stands to lose its biggest market.

In my mind, given ARM's reliance on third parties, Intel has the upper hand: it has more control over the platform and could hit the power budget by reducing the whole system...i.e. the Intel CPU would consume more power than the ARM, but the total system power could be equal.

frozentundra123456 - Monday, April 1, 2013 - link

The key to me is x86 compatibility. I have an Android tablet, and I will never consider another one. Never, ever. I have never used a more frustrating device in my life.

aTonyAtlaw - Monday, April 1, 2013 - link

Really? Why? I've been considering getting one, but have held off because I'm just not sure it will satisfy me.

What have you found frustrates you so much?

Death666Angel - Monday, April 1, 2013 - link

I have an Android 10.1" tablet, an 11.6" laptop and a powerful desktop. When I'm at home, there is no reason to use the Android tablet for browsing the web or reading emails, as many people seem to do, because I have my desktop PC, a nice 27" monitor and a comfortable chair at my desk. When I'm on the go, I like having the versatility of the laptop (running all my media, having 100+GB space, running full office, Steam and gog.com games) and I am never gone long enough for the battery life advantage of the Android tablet to kick in. Also, I hate having every program full screen in Android. In my Windows devices, I always use the 50/50 split with one window usually being the browser and the other being a video, music player, office document, another browser... Can't do that in Android. I've pretty much only used the Android tablet for some games that took advantage of the larger display compared to my Galaxy Nexus. But those are so few and far between (and many hit Steam these days anyway), that the thing has been gathering dust for months now. ARM tablets are great for people who don't use a PC for anything resembling productivity and have very limited demands in other areas.

aTonyAtlaw - Tuesday, April 2, 2013 - link

That seems fair. I was really really looking forward to the Microsoft Surface Pro release, but sad to see that they basically bungled it. I'm basically waiting for a proper Windows 8 tablet to be truly worth buying.

dcollins - Monday, April 1, 2013 - link

You're off by an order of magnitude: x86 decoding takes up about 3% of the CPU die. For an SoC where the CPUs encompass < 50% of the total die, the cost shrinks to 1.5%. The CISC versus RISC debate is pointless these days: the tiny power cost isn't a significant factor compared to architectural differences.

Look at the drastic jump in power consumption between the A9 and the A15. The new Exynos is downclocked to 1.2Ghz to keep power to an acceptable level. You can expect similar increases in the next generation as ARM approaches the holy grail of "good enough not to be noticed"-Core2Duo-level performance. At the same time, Haswell will be targeting high end tablets, preparing Intel for a concerted push into the ~5W SoC market with its successor.

Intel has plenty of work left to compete directly with ARM, particularly since they will need to forego the high margins they've grown accustomed to, but you would be a fool to write them off entirely at this point in the game.

Death666Angel - Monday, April 1, 2013 - link

"Look at the drastic jump in power consumption between the A9 and the A15. The new Exynos is downclocked to 1.2Ghz to keep power to an acceptable level."

The A15 in the Exynos 5 Octa is clocked at 1.6 GHz. It's the A7 that are at 1.2 GHz.