NVIDIA’s GeForce 700M Family: Full Details and Specs

by Jarred Walton on April 1, 2013 9:00 AM EST

Introducing the NVIDIA GeForce 700M Family

With spring well under way and the launch of Intel’s Haswell chips pending, OEMs want “new” parts across the board, and so once more we’re getting a new series of chips from NVIDIA: the 700M parts. We’ve already seen a few laptops shipping with the 710M and GT 730M; today NVIDIA is filling out the rest of the 700M family. Last year saw NVIDIA’s very successful launch of mobile Kepler; since that time, the balance of laptops shipping with NVIDIA dGPUs rather than AMD dGPUs appears to have shifted even more in NVIDIA’s favor.

Not surprisingly, with TSMC still on 28nm, NVIDIA isn’t launching a new architecture; instead they’ll be tweaking Kepler to keep it going through 2013. Today's launch of the various 700M GPUs is thus very similar to what we saw with the 500M launch: everything in general gets a bit faster than the previous generation. To improve Kepler, NVIDIA is taking the existing architecture and making a few moderate tweaks, improving their drivers (which will also apply to existing GPUs), and, as usual, continuing to proclaim the advantages of Optimus Technology.

Starting on the software side of things, we don’t really have anything new to add on the Optimus front, other than to say that in our experience it continues to work well on Windows platforms; Linux users may feel otherwise, naturally. On the bright side, things like the Bumblebee Project appear to be helping the situation, so it's now at least possible to use both the dGPU and iGPU under Linux. As far as OEMs go, Optimus has matured to the point where I can't immediately come up with any new laptop that has an NVIDIA GPU and doesn't support Optimus; an NVIDIA-equipped laptop now inherently implies Optimus support.

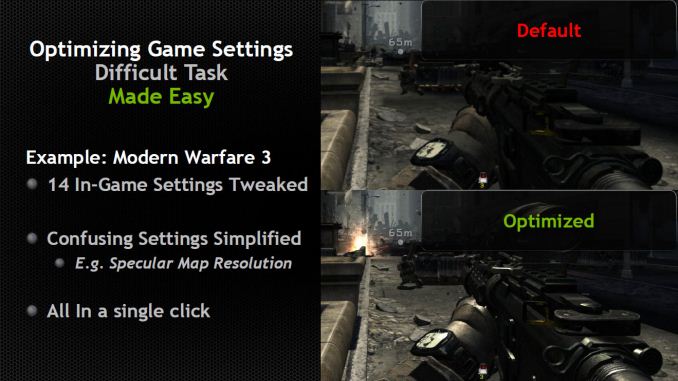

The second software aspect is GeForce Experience, a new tool designed to automatically adjust supported games to the “best quality for your hardware” settings. You can see the full slide deck in the gallery at the end for a few additional details. This may not seem like a big deal for enthusiasts, but for your average Joe who doesn’t know what all the technical names mean (e.g. antialiasing, anisotropic filtering, specular highlighting), it’s a step towards making PCs more gamer-friendly: more like a console experience, only with faster hardware. ;-) GeForce Experience is already in open beta, with over 1.5 million downloads and counting, so it’s definitely something people are using.

Finally, NVIDIA has added GPU Boost 2.0 to the 700M family. This is basically the same as what’s found in the GeForce GTX Titan, though with some tuning specific to mobile platforms as opposed to desktops. We’re told GPU Boost 2.0 uses the same core hardware as GPU Boost 1.0, with software refinements allowing for more fine-grained control of the clocks. Ryan has already covered GPU Boost 2.0 extensively, so we won’t spend much more time on it other than to say that, over a range of titles, NVIDIA is getting a 10-15% performance improvement relative to GPU Boost 1.0.

Moving to the hardware, the changes apply to only one of the chips. GK104 will continue as the highest performing option in the GTX 675MX and GTX 680M (as well as the GTX 680MX in the 27-inch iMac), and GK106 will likewise continue in the GTX 670MX (though it appears some 670MX chips also use GK104). In fact, for now NVIDIA isn’t announcing any new high-end mobile GPUs, so the GTX 600M parts will continue to fill that niche. The changes come for everything in the GT family, with some of the chips apparently continuing to use GK107 while a couple of options will use a new GK208 part.

While NVIDIA won’t confirm which parts use GK208, the latest drivers do refer to that part number, so we know it exists. GK208 looks to be largely the same as GK107, and we’re not sure if there are any real differences other than the fact that GK208 will be available as a 64-bit part. Given the similarity in appearance, it may serve as a 128-bit part as well. Basically, GK107 was never available in a 64-bit configuration, and GK208 remedies that (which actually makes it a lower end chip relative to GK107).
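To put the 64-bit versus 128-bit distinction in perspective, peak theoretical memory bandwidth scales linearly with bus width at a given memory data rate. The sketch below is purely illustrative: the function name and the 4000 MT/s effective data rate are assumptions chosen for the example, not figures NVIDIA has confirmed for any 700M part.

```python
def memory_bandwidth_gb_s(bus_width_bits: int, effective_rate_mt_s: float) -> float:
    """Peak theoretical memory bandwidth in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8          # bus width in bytes
    transfers_per_second = effective_rate_mt_s * 1e6  # MT/s -> transfers/s
    return bytes_per_transfer * transfers_per_second / 1e9


# Assumed effective memory data rate, for illustration only.
ASSUMED_RATE_MT_S = 4000

for width in (128, 64):
    bandwidth = memory_bandwidth_gb_s(width, ASSUMED_RATE_MT_S)
    print(f"{width}-bit @ {ASSUMED_RATE_MT_S} MT/s: {bandwidth:.1f} GB/s")
```

At the same memory data rate, a 64-bit configuration has half the peak bandwidth of a 128-bit one, which is why a 64-bit GK208 would sit below GK107 in the lineup.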

91 Comments


StevoLincolnite - Monday, April 1, 2013

Not really. Overclocking is fine if you know what you're doing.

Years ago I had a Pentium M 1.6GHz notebook with a Mobility Radeon 9700 Pro.

Overclocked that processor to 2.0GHz+ and the graphics card core clock was almost doubled.

Ran fine for years. Eventually the screen on it died due to sheer age, but I'm still using it as a file server hooked up to an old monitor to this day, with about a half dozen external drives hanging off it.

JarredWalton - Monday, April 1, 2013

Hence the "with very few exceptions". You had a top-end configuration and overclocked it, but that was years ago. Today with Turbo Boost the CPUs are already pushing the limits most of the time in laptops (and even in desktops unless you have extreme cooling). GPUs are doing the same now with GPU Boost 2.0 (and AMD has something similar, more or less). But if you have a high-end Clevo, you can probably squeeze an extra 10-20% from overclocking (YMMV).

But if we look at midrange offerings with GT 640M LE...well, does anyone really think an Acer M5 Ultrabook is going to handle the thermal load or power load of a GPU that's running twice as fast as spec over the long haul? Or what about a Sony VAIO S 13.3" and 15.5" -- we're talking about Sony, who is usually so worried about form that they underclock GPUs to keep their laptops from overheating. Hint: any laptop that's really thin isn't going to do well with GPU or CPU overclocking! I know there was a Win7 variant of the Sony VAIO S that people overclocked (typically 950MHz was the maximum anyone got stable), but that was also with the fans set to "Performance".

Considering the number of laptops I've seen where dust buildup creates serious issues after six months, you're taking a real risk. The guys who are pushing 950MHz overclocks on 640M LE are also the same people that go and buy ultra-high-end desktops and do extreme overclocking, and when they kill a chip it's just business as usual. Again, I reiterate that I have seen enough issues with consumer laptops running hot, especially when they're over a year old, that I suggest restraint with laptop overclocking. You can do it, but don't cry to NVIDIA or the laptop makers when your laptop dies!

transphasic - Monday, April 1, 2013

Totally agreed. I had a Clevo/Sager laptop with the 9800M GTX in it, and after only two years it died, due to the Nvidia GPU getting fried to a crisp. The heat build-up from internal dust accumulation was what destroyed my $2,700 laptop.

Ironically, I was thinking about overclocking it prior to it dying on me. In looking back, good thing I didn't do it. Overclocking is risky, and the payoffs are just not worth it, unless you are ready to take the expensive financial risks involved.

Drasca - Tuesday, April 2, 2013

I've got a Clevo x7200 and I just cleaned out a wall of dust after discovering it was thermal throttling hard core. I've got to hand it to the internals and cooling of this thing though, it was still running like a champ.

This thing's massive cooling is really nice.

I can stably overclock the 485M GPU from 575MHz to 700MHz without playing with voltages. No significant difference in temps, especially compared to when it was throttling. Runs at 61C.

I love the cooling solution on this thing.

whyso - Monday, April 1, 2013

It depends really. As long as you don't touch voltage the temperature does not rise much. I have a 660M and it reaches 1085/2500 without any problems (ASIC rating of 69%). Overclocked vs non-overclocked is basically a 2 degree difference (72 vs 74 degrees). Better than a stock 650 desktop.

Also, considering virtually every 660M I have seen boosts up to 950/2500 from 835/2000, I don't think the 750M is going to be any upgrade. Many 650M have a boost of 835 core so there really is no upgrade there either (maybe 5-10%). GK107 is fine with 64 GB/sec bandwidth.

whyso - Monday, April 1, 2013

Whoops, sorry, didn't see the 987 clocks; nice jump there.

JarredWalton - Monday, April 1, 2013

Funny thing is that in reading comments on some of the modded VBIOS stuff for the Sony VAIO S, the modder says, "The Boost clock doesn't appear to be working properly so I just set it to the same value..." Um, think please Mr. Modder. The Boost clock is what the GPU is able to hit when certain temperature and power thresholds are not exceeded; if you overclock, you've likely inherently gone beyond what Boost is designed to do.

Anyway, a 2C difference for a 660M isn't a big deal, but you're also looking at a card with a default 900MHz clock, so you went up in clocks by 20% and had a 3% temperature increase (and no word on fan speed). Going from 500MHz to 950MHz is likely going to be more strenuous on the system and components.

damianrobertjones - Monday, April 1, 2013

"and their primary competition in the iGPU market is going to be HD 4000 running on a ULV chip!"

Wouldn't that be the HD 4600? Also it's a shame that no-one really states the HD 4000 with something like Vengeance RAM which improves performance

HisDivineOrder - Monday, April 1, 2013

So if the "core hardware" is the same from Boost 1 and 2, then nVidia should go on and make Boost 2.0 be something we all can enable in the driver.

Or... are they trying to get me to upgrade to new hardware to activate a feature my card is already fully capable of supporting? Haha, nVidia, you so crazy.

JarredWalton - Monday, April 1, 2013

There may be some minor difference in the core hardware (some extra temperature or power sensors?), but I'd be shocked if NVIDIA offered an upgrade to Boost 1.0 users via drivers -- after all, it looks like half of the performance increase from 700M is going to come from Boost 2.0!