NVIDIA GeForce GTX 780 Review: The New High End

by Ryan Smith on May 23, 2013 9:00 AM EST

Software: GeForce Experience, Out of Beta

Along with the launch of the GTX 780 hardware, NVIDIA is also using this opportunity to announce and roll out new software. Though they are (and always will be) fundamentally a hardware company, NVIDIA has been finding that software is increasingly important to the sales of their products. As a result, the company has taken on several software initiatives over the years, both on the consumer side and the business side. To that end, the products launching today are essentially the spearhead of a larger NVIDIA software ecosystem.

The first item on the list is GeForce Experience, NVIDIA’s game settings advisor. You may remember GeForce Experience from the launch of the GTX 690, which is when it was first announced. The actual rollout of GeForce Experience was slower than NVIDIA projected, having gone from an announcement to a final release in just over a year. Nevertheless, there is a light at the end of the tunnel, and with version 1.5 GeForce Experience is finally out of beta and is being qualified as release quality.

So what is GeForce Experience? In a nutshell, GFE is NVIDIA’s game settings advisor. The concept itself is not new: games have long auto-detected hardware and tried to pick appropriate settings, and even NVIDIA has toyed with the concept before with their Optimal Playable Settings (OPS) service. The difference between those implementations and GFE comes down to who’s doing the work of figuring this out, and how much work is being done.

With OPS, NVIDIA was essentially writing out recommended settings by hand based on human play testing. That process is of course slow, making it hard to cover a wide range of hardware and to get settings out for new games in a timely manner. Meanwhile, with auto-detection built into games the quality of the recommendations is not a particular issue, but most games based their automatic settings around a list of hardware profiles, which means most built-in auto-detection routines were fouled up by newer hardware that wasn’t on the list. Simply put, it doesn’t do NVIDIA any good if a graphical showcase game like Crysis 3 selects the lowest quality settings because it doesn’t know what a GTX 780 is.
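To make the failure mode concrete, here is a minimal sketch of profile-based auto-detection. The table contents, preset names, and the `detect_preset` function are all hypothetical and are not taken from any real game; the point is only that a lookup keyed on known GPU names has nothing sensible to return for a card released after the table was written.

```python
# Hypothetical profile table a game might ship with (illustrative names only).
GPU_PROFILES = {
    "GeForce GTX 580": "high",
    "GeForce GTX 680": "very_high",
}


def detect_preset(gpu_name, profiles=GPU_PROFILES, fallback="lowest"):
    """Return the preset for a known GPU, or the safe fallback for an unknown one."""
    return profiles.get(gpu_name, fallback)


# A card newer than the table falls through to the fallback preset,
# even though it is faster than everything the table knows about.
print(detect_preset("GeForce GTX 680"))
print(detect_preset("GeForce GTX 780"))
```

In this sketch the newer card lands on the fallback preset simply because its name is missing from the table, which is the situation a centrally updated service is meant to avoid.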

NVIDIA’s solution of choice is to take on most of this work themselves, and then move virtually all of it to automation. From a business perspective this makes great sense for NVIDIA as they already have the critical component for such a service, the hardware. NVIDIA already operates large GPU farms in order to test drivers, a process that isn’t all that different from what they would need to do to automate the search for optimal settings. Rather than regression testing and looking for errors, NVIDIA’s GPU farms can iterate through various settings on various GPUs in order to find the best combination of settings that can reach a playable level of performance.

By iterating through the massive matrix of settings most games offer, NVIDIA’s GPU farms can do most of the work required. What’s left for humans is writing test cases for new games, something that is already necessary for driver/regression testing, and then identifying which settings are more desirable from a quality perspective so that those can be weighted and scored in the benchmarking process. This means that it’s not entirely a human-free experience, but having a handful of engineers writing test cases and assigning weights is a much more productive use of time than having humans test everything by hand, as was the case with OPS.
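The search described above can be sketched as a brute-force walk over the settings matrix. This is an illustration under stated assumptions, not NVIDIA’s actual process: the settings, the weights, the 40 fps target, and the `measure_fps` cost model (which stands in for a real benchmark run on a GPU farm) are all hypothetical.

```python
from itertools import product

# Hypothetical settings matrix: each option lists its levels from lowest to highest.
SETTINGS = {
    "texture_quality": ["low", "medium", "high"],
    "shadows": ["off", "low", "high"],
    "anti_aliasing": ["off", "fxaa", "msaa4x"],
}

# Hypothetical human-assigned weights: how much each setting matters visually.
WEIGHTS = {"texture_quality": 3.0, "shadows": 2.0, "anti_aliasing": 1.0}

# Hypothetical per-level frame-time cost in milliseconds.
# A real system would run the game's test case instead of using a table.
COST_MS = {
    "texture_quality": [0.0, 1.0, 2.0],
    "shadows": [0.0, 2.0, 5.0],
    "anti_aliasing": [0.0, 0.5, 6.0],
}


def measure_fps(levels, base_frame_ms):
    """Stand-in for a benchmark run: estimate fps from a simple additive cost model."""
    frame_ms = base_frame_ms + sum(COST_MS[name][lvl] for name, lvl in levels.items())
    return 1000.0 / frame_ms


def quality_score(levels):
    """Weighted sum of level indices; higher means better-looking settings."""
    return sum(WEIGHTS[name] * lvl for name, lvl in levels.items())


def best_playable(base_frame_ms, target_fps=40.0):
    """Try every combination and keep the highest-scoring one that meets the target."""
    names = list(SETTINGS)
    best = None
    for combo in product(*(range(len(SETTINGS[n])) for n in names)):
        levels = dict(zip(names, combo))
        if measure_fps(levels, base_frame_ms) < target_fps:
            continue  # not playable on this (simulated) GPU
        score = quality_score(levels)
        if best is None or score > best[0]:
            best = (score, levels)
    if best is None:
        return None  # no combination reaches the target
    return {n: SETTINGS[n][lvl] for n, lvl in best[1].items()}


# base_frame_ms models the GPU: a smaller value means a faster card.
print(best_playable(10.0))
print(best_playable(20.0))
```

With these made-up numbers the faster simulated GPU can afford every setting at maximum, while the slower one keeps high textures but drops to low shadows and FXAA, since MSAA costs the most frame time for the least weight.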

Moving on, all of this feeds into NVIDIA’s GFE backend service, which in turn feeds the frontend in the form of the GFE client. The GFE client has a number of features (which we’ll get into in a moment), but for the purposes of GFE its primary role is to find games on a user’s computer, pull optimal settings from NVIDIA, and then apply those settings as necessary. All of this is done through a relatively straightforward UI, which lists the detected games, the games’ current settings, and NVIDIA’s suggested settings.

The big question of course is whether GFE’s settings are any good, and in short the answer is yes. NVIDIA’s settings are overall reasonable, and more often than not have closely matched the settings we use for benchmarking. I’ve noticed that they do have a preference for FXAA and other pseudo-AA modes over real AA modes like MSAA, but at this point that’s probably a losing battle on my part given the performance hit of MSAA.

For casual users NVIDIA is expecting this to be a one-stop solution. Casual users will let GFE go with whatever it thinks are the best settings, and as long as NVIDIA has done their profiling right users will get the best mix of quality at an appropriate framerate. For power users on the other hand the expectation isn’t necessarily that those users will stick with GFE’s recommended settings, but rather that GFE will provide a solid baseline to work from. Rather than diving into a new game blindly, power users can start with GFE’s recommended settings and then turn things down if the performance isn’t quite high enough, or trade some settings for others if they favor a different tradeoff in quality. On a personal note this exactly matches how I’ve been using GFE since the earlier betas landed in our hands, so it seems like NVIDIA is on the mark when it comes to power users.

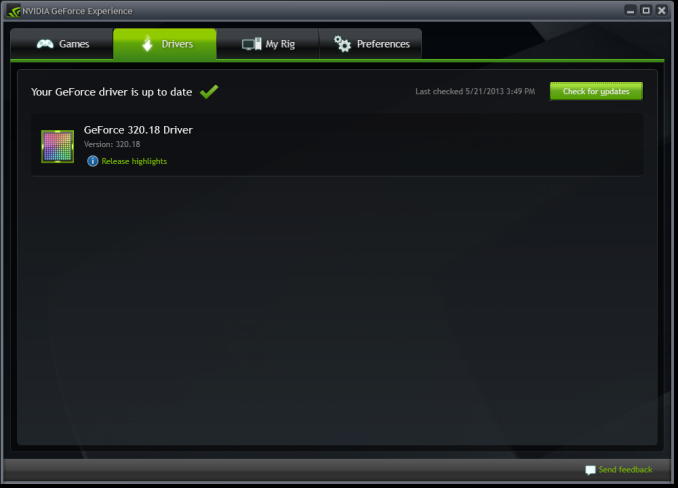

With all of that said, GeForce Experience isn’t going to be a stand-alone game optimization product but rather the start of a larger software suite for consumers. GeForce Experience has already absorbed the NVIDIA Update functionality that previously existed as a small optional install in NVIDIA’s drivers. It’s from here that NVIDIA is going to be building further software products for GeForce users.

The first of these expansions will be for SHIELD, NVIDIA’s handheld game console launching next month. One of SHIELD’s major features is the ability to stream PC games to the console, which in turn requires a utility running on the host PC to provide the SHIELD interface, control mapping, and of course video encoding and streaming. Rather than roll that out as a separate utility, that functionality will be built into future versions of GeForce Experience.

To that end, with the next release of drivers for the GTX 780, GeForce Experience will be bundled with NVIDIA’s drivers, similar to how NVIDIA Update is today. Like NVIDIA Update it will be an optional-but-default item, so users can opt out of it, but if the adoption is anything like NVIDIA Update then the expectation is that most users will end up installing GFE.

It would be remiss of us to not point out the potential for bloat here, but we’ll have to see how this plays out. In terms of file size GeForce Experience is rather tiny at 11MB (versus 169MB for the 320.14 driver package), so after installer overhead is accounted for it should add very little to the size of the GeForce driver package. Similarly it doesn’t seem to have any real appetite for system resources, but this is the wildcard since it’s subject to change as NVIDIA adds more functionality to the client.

155 Comments

lukarak - Friday, May 24, 2013 - link

1/3rd FP32 and 1/24th FP32 is nowhere near 10-15% apart. Gaming is not everything.

chizow - Friday, May 24, 2013 - link

Yes fine cut gaming performance on 780 and Titan down to 1/24th and see how many of these you sell at $650 and $1000.

Hrel - Friday, May 24, 2013 - link

THANK YOU!!!! WHY this kind of thing isn't IN the review is beyond me. As much good work as Nvidia is doing they're pricing schemes, naming schemes and general abuse of customers has turned me off of them forever. Which convenient because AMD is really getting their shit together quickly.

chizow - Saturday, May 25, 2013 - link

Ryan has danced around this topic in the past, he's a pretty straight shooter overall but it goes without saying why he isn't harping on this in his review. He has to protect his (and AT's) relationship with Nvidia to keep the gravy train flowing. They have gotten in trouble with Nvidia in the past (sometime around the "not covering PhysX enough" fiasco, along with HardOCP) and as a result, their review allocation suffered.

In the end, while it may be the truth, no one with a vested interest in these products and their future success contributing to their livelihoods wants to hear about it, I guess. It's just us, the consumers that suffer for it, so I do feel it's important to voice my opinion on the matter.

Ryan Smith - Sunday, May 26, 2013 - link

While you are welcome to your opinion and I doubt I'll be able to change it, I would note that I take a dim view towards such unfounded nonsense.

We have a very clear stance with NVIDIA: we write what we believe. If we like a product we praise it, if we don't like a product we'll say so, and if we see an issue we'll bring it up. We are the press and our role is clear; we are not any company's friend or foe, but a 3rd party who stakes our name and reputation (and livelihood!) on providing unbiased and fair analysis of technologies and products. NVIDIA certainly doesn't get a say in any of this, and the only thing our relationship is built upon is their trusting our methods and conclusions. We certainly don't require NVIDIA's blessing to do what we do, and publishing the truth has and always will come first, vendor relationships be damned. So if I do or do not mention something in an article, it's not about "protecting the gravy train", but about what I, the reviewer, find important and worth mentioning.

On a side note, I would note that in the 4 years I have had this post, we have never had an issue with review allocation (and I've said some pretty harsh things about NVIDIA products at times). So I'm not sure where you're hearing otherwise.

chizow - Monday, May 27, 2013 - link

Hi Ryan I respect your take on it and as I've said already, you generally comment on and understand more about the impact of pricing and economy more than most other reviews, which is a big part of the reason I appreciate AT reviews over others.

That being said, much of this type of commentary about pricing/economics can be viewed as editorializing, so while I'm not in any way saying companies influence your actual review results and conclusions, the choice NOT to speak about topics that may be considered out of bounds for a review does not fall under the scope of your reputation or independence as a reviewer.

If we're being honest here, we're all human and business is conducted between humans with varying degrees of interpersonal relationships. While you may consider yourself truthful and forthcoming always, the tendency to bite your tongue when friendships are at stake is only natural and human. Certainly, a "How's your family?" greeting is much warmer than a "Hey what's with all that crap you wrote about our GTX Titan pricing?" when you meet up at the latest trade show or press event. Similarly, it should be no surprise when Anand refers to various moves/hires at these companies as good/close friends, that he is going to protect those friendships where and when he can.

In any case, the bit I wrote about allocation was about the same time ExtremeTech got in trouble with Nvidia and felt they were blacklisted for not writing enough about PhysX. HardOCP got in similar trouble for blowing off entire portions of Nvidia's press stack and you similarly glossed over a bunch of the stuff Nvidia wanted you to cover. Subsequently, I do recall you did not have product on launch day and maybe later it was clarified there was some shipping mistake. Was a minor release, maybe one of the later Fermi parts. I may be mistaken, but pretty sure that was the case.

Razorbak86 - Monday, May 27, 2013 - link

Sounds like you've got an axe to grind, and a tin-foil hat for armor. ;)

ambientblue - Thursday, August 8, 2013 - link

Well, you failed to note how the GTX 780 is essentially kepler's version of a GTX 570. It's priced twice as high though. The Titan should have been a GTX 680 last year... its only a prosumer card because of the price LOL. that's like saying the GTX 480 is a prosumer card!!!

cityuser - Thursday, May 23, 2013 - link

whatever Nvidia do, it never improve their 2D quality, I mean , look at what nVidia will give you at BluRay playing, the color still dead , dull, not really enjoyable.

It's terrible to use nVidia to HD home cinema, whatever setting you try.

Why nVidia can ignore this? because it's spoiled.

Dribble - Thursday, May 23, 2013 - link

What are you going on about?

Bluray is digital, hdmi is digital - that means the signal is decoded and basically sent straight to the TV - there is no fiddling with colours, or sharpening or anything else required.