Intel Iris Pro 5200 Graphics Review: Core i7-4950HQ Tested

by Anand Lal Shimpi on June 1, 2013 10:01 AM EST

BioShock: Infinite

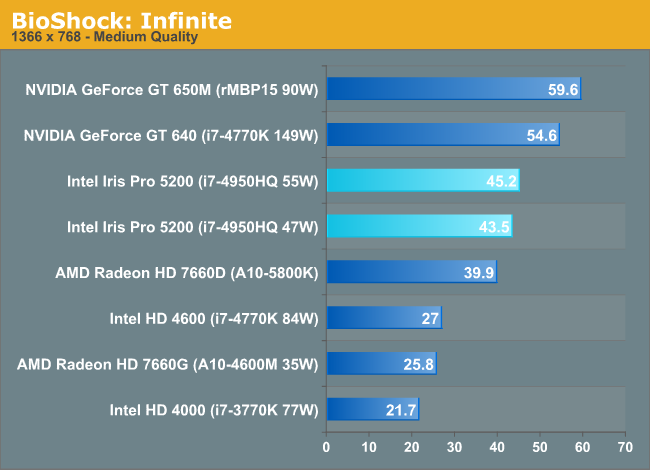

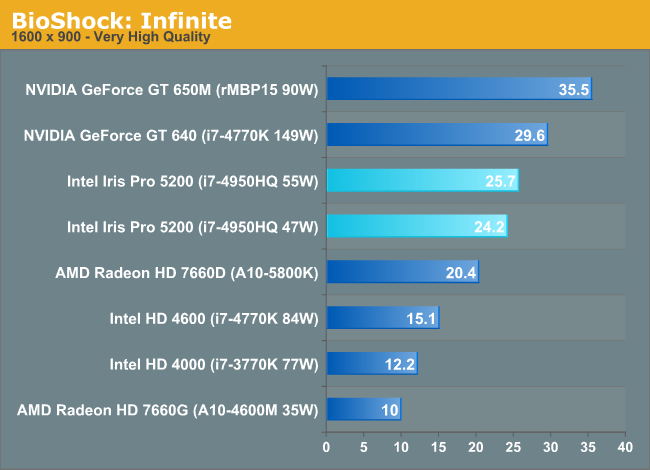

Bioshock Infinite is Irrational Games’ latest entry in the Bioshock franchise. Though it’s based on Unreal Engine 3, making it our obligatory UE3 game, Irrational has added a number of effects that make the game rather GPU-intensive on its highest settings. As an added bonus it includes a built-in benchmark composed of several scenes, a rarity for UE3 games, so we can easily get a good representation of what Bioshock’s performance is like.

Both the 650M and the desktop GT 640 outperform Iris Pro here. Compared to the 55W configuration, the 650M is 32% faster. There isn't a huge difference in performance between the GT 640 and the 650M, indicating that the performance advantage here isn't due to memory bandwidth but to something fundamental to the GPU architecture.

In the grand scheme of things, Iris Pro does extremely well. There isn't an integrated GPU that can touch it. Only the 100W desktop Trinity approaches Iris Pro's performance, but at more than 2x the TDP.

The standings don't really change at the higher resolution/quality settings, but some of Crystalwell's benefits do begin to show. A 9% advantage over the 100W desktop Trinity part grows to 18% as memory bandwidth demands increase. Compared to the desktop HD 4000 we're seeing more than 2x the performance, which means that in mobile the gap will likely grow even further. The mobile Trinity comparison is a shutout as well.
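The percentages above ("32% faster", "a 9% advantage") are ratios of average frame rates, and the TDP comparison is a question of performance per watt. As a minimal sketch, the helpers below show that arithmetic; the function names and the frame rates are hypothetical illustrations, not measurements from this review, and rated TDP is used as a stand-in for actual power draw.

```python
# Hypothetical helpers for interpreting benchmark comparisons.
# None of the frame rates below are measured results from this review.

def relative_advantage(fps_a: float, fps_b: float) -> float:
    """Return how much faster A is than B, in percent (negative if A is slower)."""
    if fps_b <= 0:
        raise ValueError("fps_b must be positive")
    return (fps_a / fps_b - 1.0) * 100.0


def perf_per_watt(fps: float, tdp_watts: float) -> float:
    """Average frames per second delivered per watt of rated TDP."""
    if tdp_watts <= 0:
        raise ValueError("tdp_watts must be positive")
    return fps / tdp_watts


if __name__ == "__main__":
    # Hypothetical frame rates chosen only to illustrate a ~9% lead.
    iris_fps, trinity_fps = 32.7, 30.0
    # 55W Iris Pro configuration vs. 100W desktop Trinity, as discussed above.
    iris_tdp, trinity_tdp = 55.0, 100.0

    lead = relative_advantage(iris_fps, trinity_fps)
    efficiency = perf_per_watt(iris_fps, iris_tdp) / perf_per_watt(trinity_fps, trinity_tdp)
    print(f"Iris Pro lead: {lead:.1f}%")
    print(f"Perf/W ratio vs. Trinity: {efficiency:.2f}x")
```

Because TDP is a rated ceiling rather than measured consumption, the perf/W ratio here is only a rough indicator of efficiency.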

177 Comments


s2z.domain@gmail.com - Friday, February 21, 2014

I wonder where this is going. Yes, the multi-core, the cache on hand and the graphics may be goody, ta. But what about human interaction in actual products?

I weigh in at 46kg but think nothing of running with a Bergen/burden of 20kg, so a big heavy laptop with an integrated 10hr battery and an 18.3" screen would be efficacious.

What is all this current affinity with small screens?

I could barely discern the vignette of the feathers of a waterfowl at no more than 130m yesterday, on my morning run in the Clyde Valley woodlands.

For the "laptop", > 17" screen, desktop 2*27", all discernible pixels, every one of them to be a prisoner. 4 core or 8 core and I bore the poor little devils with my incompetence with DSP and the Julia language. And spice etc.

P.S. Can still average 11mph at 50+ years of age. Some things one does wish to change. And thanks to the Jackdaws yesterday morning whilst I was fertilizing a Douglas Fir; they took the boredom out of an otherwise perilous predicament.

johncaldwell - Wednesday, March 26, 2014

Hello. Look, 99% of all the comments here are out of my league. Could you answer a question for me, please? I use an open source 3D computer animation and modeling program called Blender3d. The users of this program say that the GTX 650 is the best GPU for this program, citing that it works best for calculating CPU-intensive tasks such as rendering with HDR, fluids and other particle effects, and they say that other cards that work great for gaming and video fall short for that program. Could you tell me how this Intel Iris Pro would do in a case such as this? Would the tests done here be relevant to this case?

jadhav333 - Friday, July 11, 2014

Same here, johncaldwell. I would like to know the same. I am a Blender 3D user and work with the Cycles renderer, which also uses the GPU to process its renders. I am planning to invest in a new workstation: either custom-built hardware for a Linux box or the latest MacBook Pro from Apple. In the latter case, how useful will it be, in terms of performance, for GPU rendering in Blender?

Would anyone care to comment on this, please?

HunkoAmazio - Monday, May 26, 2014

Wow, I can't believe I understood this. My computer architecture class paid off... except I got lost when they were talking about n1 n2 nodes. That must have been a post-2005 feature in CPU northbridge/southbridge technology.

systemBuilder - Tuesday, August 5, 2014

I don't think you understand the difference between DRAM circuitry and arithmetic circuitry. A DRAM foundry process is tuned for high capacitance so that the memory lasts longer before refresh. High capacitance is DEATH to high-speed circuitry for arithmetic execution; that circuitry is tuned for very low capacitance, ergo, tuned for speed. By using DRAM instead of SRAM (which could have been built on-chip with low-capacitance foundry processes), Intel enlarged the cache by 4x+, since an SRAM cell is about 4x+ larger than a DRAM cell.

Fingalad - Friday, September 12, 2014

CHEAP SLI! They should make a cheap Iris Pro graphics card and a new board where you can add that card for SLI.

P39Airacobra - Thursday, January 8, 2015

Not a bad GPU at all. On a small laptop screen you can game just fine, but it should be paired with a lower-end CPU, and the i3, i5 and i7 should have Nvidia or AMD solutions.