Strontium Hawk (240GB) Review

by Kristian Vättö on June 25, 2013 8:00 AM EST

Performance Consistency

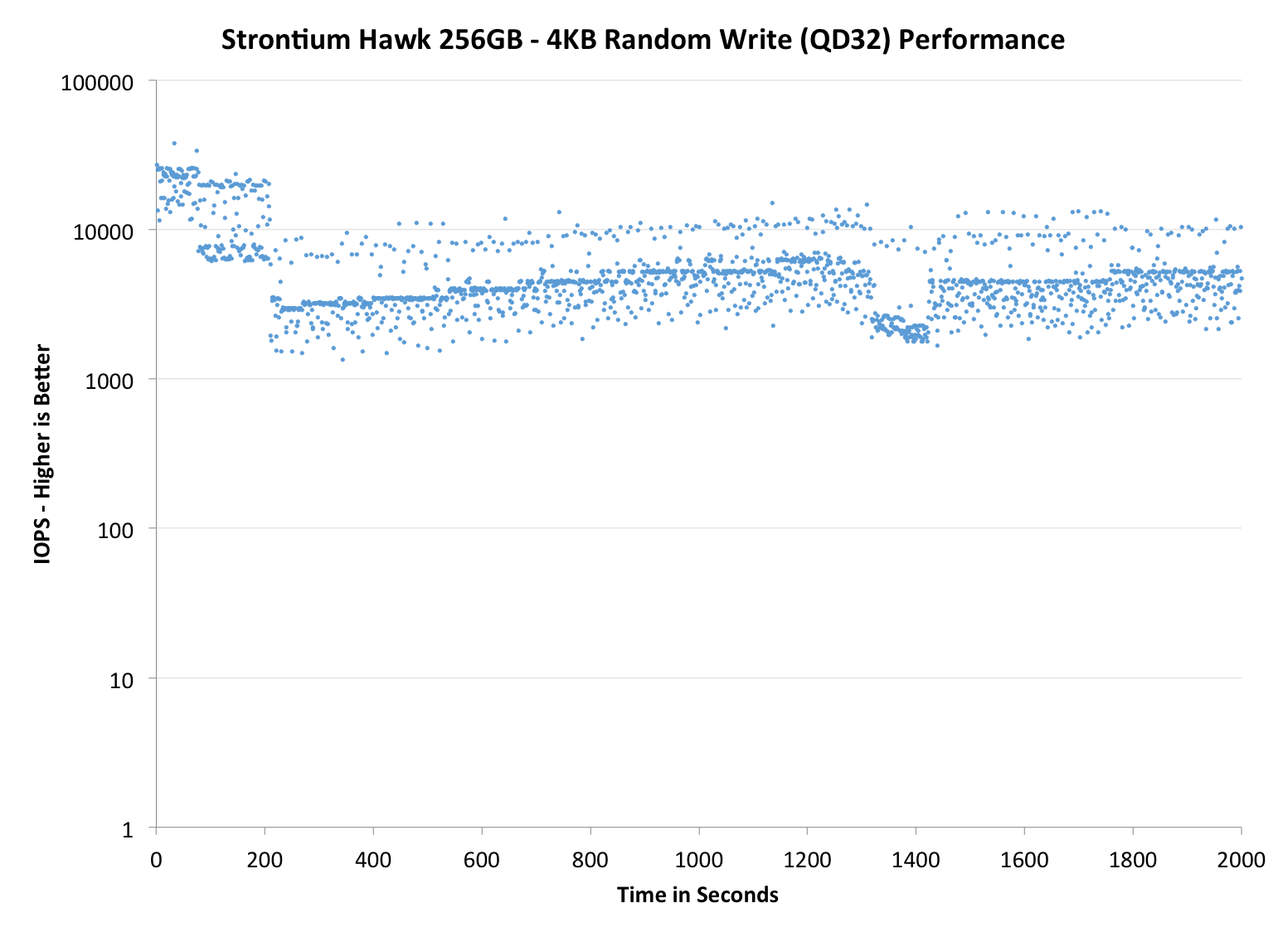

In our Intel SSD DC S3700 review, Anand introduced a new method of characterizing performance: looking at the latency of individual operations over time. The S3700 promised a level of performance consistency that was unmatched in the industry, and as a result it needed some additional testing to show that. The reason we don't see consistent IO latency with SSDs is that all controllers inevitably have to do some amount of defragmentation or garbage collection in order to keep operating at high speeds. When and how an SSD decides to run its defrag and cleanup routines directly impacts the user experience. Frequent (borderline aggressive) cleanup generally results in more stable performance, while delaying it can yield higher peak performance at the expense of much lower worst-case performance. The graphs below tell us a lot about the architecture of these SSDs and how they handle internal defragmentation.

To generate the data below, I took a freshly secure-erased SSD and filled it with sequential data. This ensures that all user-accessible LBAs have data associated with them. Next, I kicked off a 4KB random write workload across all LBAs at a queue depth of 32 using incompressible data. I ran the test for just over half an hour, nowhere near as long as our steady-state tests, but long enough to give me a good look at drive behavior once all spare area had filled up.
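The two phases of this procedure (a sequential fill, then 4KB random writes of incompressible data across every LBA) can be sketched as offset and payload generators. This is a minimal Python sketch under stated assumptions, not the tool used for these tests: the function names and the 128KB sequential transfer size are hypothetical, and the queue depth of 32 is left to whatever I/O engine actually issues the writes.

```python
import os
import random

BLOCK_4K = 4096  # transfer size for the random-write phase


def sequential_fill_offsets(span_bytes, block_bytes=128 * 1024):
    """Preconditioning pass: touch every LBA in the span once, in order.

    The 128KB transfer size is an assumption; assumes span_bytes is a
    multiple of block_bytes so the last write does not run past the end.
    """
    return list(range(0, span_bytes, block_bytes))


def random_4k_offsets(span_bytes, count, seed=None):
    """4KB-aligned random offsets spread uniformly across the whole span."""
    rng = random.Random(seed)
    slots = span_bytes // BLOCK_4K  # number of 4KB-aligned positions
    return [rng.randrange(slots) * BLOCK_4K for _ in range(count)]


def incompressible_payload(size=BLOCK_4K):
    """Random bytes, so a compressing controller cannot shrink the writes."""
    return os.urandom(size)
```

Using random bytes for every write matters for controllers that compress data internally; compressible payloads would let such a drive write less NAND than the host sends and flatter its results.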

I recorded instantaneous IOPS every second for the duration of the test. I then plotted IOPS vs. time and generated the scatter plots below. Each set of graphs features the same scale. The first two sets use a log scale for easy comparison, while the last set of graphs uses a linear scale that tops out at 40K IOPS for better visualization of differences between drives.
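If a benchmark tool logs a completion timestamp for each I/O rather than a per-second counter, one-second IOPS samples like the ones plotted here can be recovered by bucketing. The helper below is a hypothetical sketch, assuming timestamps are given in seconds from the start of the test; it is not the logging used for these results.

```python
from collections import Counter


def iops_per_second(completion_times, duration_s):
    """Turn per-I/O completion timestamps into one IOPS sample per second.

    completion_times: seconds since the start of the test (floats).
    Returns a list of length duration_s; seconds with no completions are 0.
    """
    counts = Counter(int(t) for t in completion_times if 0 <= t < duration_s)
    return [counts.get(second, 0) for second in range(duration_s)]


# Six completions; the one at t=3.5 falls outside the 3-second window.
print(iops_per_second([0.1, 0.5, 1.2, 2.9, 2.95, 3.5], 3))  # [2, 1, 2]
```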

The high-level testing methodology remains unchanged from our S3700 review. Unlike in previous reviews, however, I did vary the percentage of the drive that I filled/tested depending on the amount of spare area I was trying to simulate. The buttons are labeled with the advertised user capacity had the SSD vendor decided to use that specific amount of spare area. If you want to replicate this on your own, all you need to do is create a partition smaller than the total capacity of the drive and leave the remaining space unused to simulate a larger amount of spare area. The partitioning step isn't absolutely necessary in every case, but it's an easy way to make sure you never exceed your allocated spare area. It's a good idea to do this from the start (e.g. secure erase, partition, then install Windows), but if you are working backwards you can always create the spare area partition, format it to TRIM it, then delete the partition. Finally, this method of creating spare area works on the drives we've tested here, but not all controllers will necessarily behave the same way.
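The partition size for a given amount of spare area is simple arithmetic. The sketch below assumes that the spare-area percentage is measured against the raw NAND capacity (for example, a hypothetical 256GB drive built on 256GiB of NAND); the function name and that definition are assumptions for illustration, and vendors may account for spare area differently.

```python
GIB = 1024 ** 3  # binary gigabyte; raw NAND capacity comes in powers of two


def partition_bytes_for_spare_area(raw_nand_bytes, user_bytes, spare_fraction):
    """Size of the partition that leaves `spare_fraction` of the raw NAND unused.

    Assumes spare area = raw NAND minus the space the host ever writes to.
    """
    if not 0 <= spare_fraction < 1:
        raise ValueError("spare_fraction must be in [0, 1)")
    target = int(raw_nand_bytes * (1 - spare_fraction))
    # The partition cannot be larger than the drive exposes; if the target is
    # bigger, the factory spare area already meets the requested fraction.
    return min(target, user_bytes)


# A 256GB (SI) drive with 256GiB of NAND, set up for 25% spare area:
size = partition_bytes_for_spare_area(256 * GIB, 256 * 10**9, 0.25)
print(size, size / GIB)  # 206158430208 192.0
```

Everything between that partition and the end of the drive stays unpartitioned (and, ideally, TRIMmed), so the controller can treat it as extra spare area.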

The first set of graphs shows the performance data over the entire 2000 second test period. In these charts you'll notice an early period of very high performance followed by a sharp dropoff. What you're seeing in that case is the drive allocating new blocks from its spare area, then eventually using up all free blocks and having to perform a read-modify-write for all subsequent writes (write amplification goes up, performance goes down).
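Why write amplification rises once the free blocks run out can be illustrated with an idealized garbage-collection model. In this simplified sketch (an assumption for illustration, not any controller's actual algorithm), reclaiming a block that still holds a fraction of valid pages means copying those pages elsewhere first, so each block's worth of NAND programs makes room for only the remaining fraction of new host data. If NAND program bandwidth is the bottleneck, host IOPS then falls roughly in proportion. The function names and the 40K IOPS figure are hypothetical.

```python
def gc_write_amplification(valid_fraction):
    """Idealized write amplification when each reclaimed block is
    `valid_fraction` full of still-valid pages that must be copied out.

    Programming one whole block (copies plus new data) frees room for only
    (1 - valid_fraction) of a block of host writes.
    """
    if not 0 <= valid_fraction < 1:
        raise ValueError("valid_fraction must be in [0, 1)")
    return 1 / (1 - valid_fraction)


def estimated_steady_state_iops(fresh_iops, waf):
    """First-order estimate assuming NAND program bandwidth is the only limit."""
    return fresh_iops / waf


# Hypothetical numbers: a fresh drive at 40K IOPS whose victim blocks are 75% valid.
waf = gc_write_amplification(0.75)
print(waf, estimated_steady_state_iops(40_000, waf))  # 4.0 10000.0
```

In this model, more spare area lets the controller pick victim blocks with fewer valid pages, which lowers write amplification and raises steady-state throughput.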

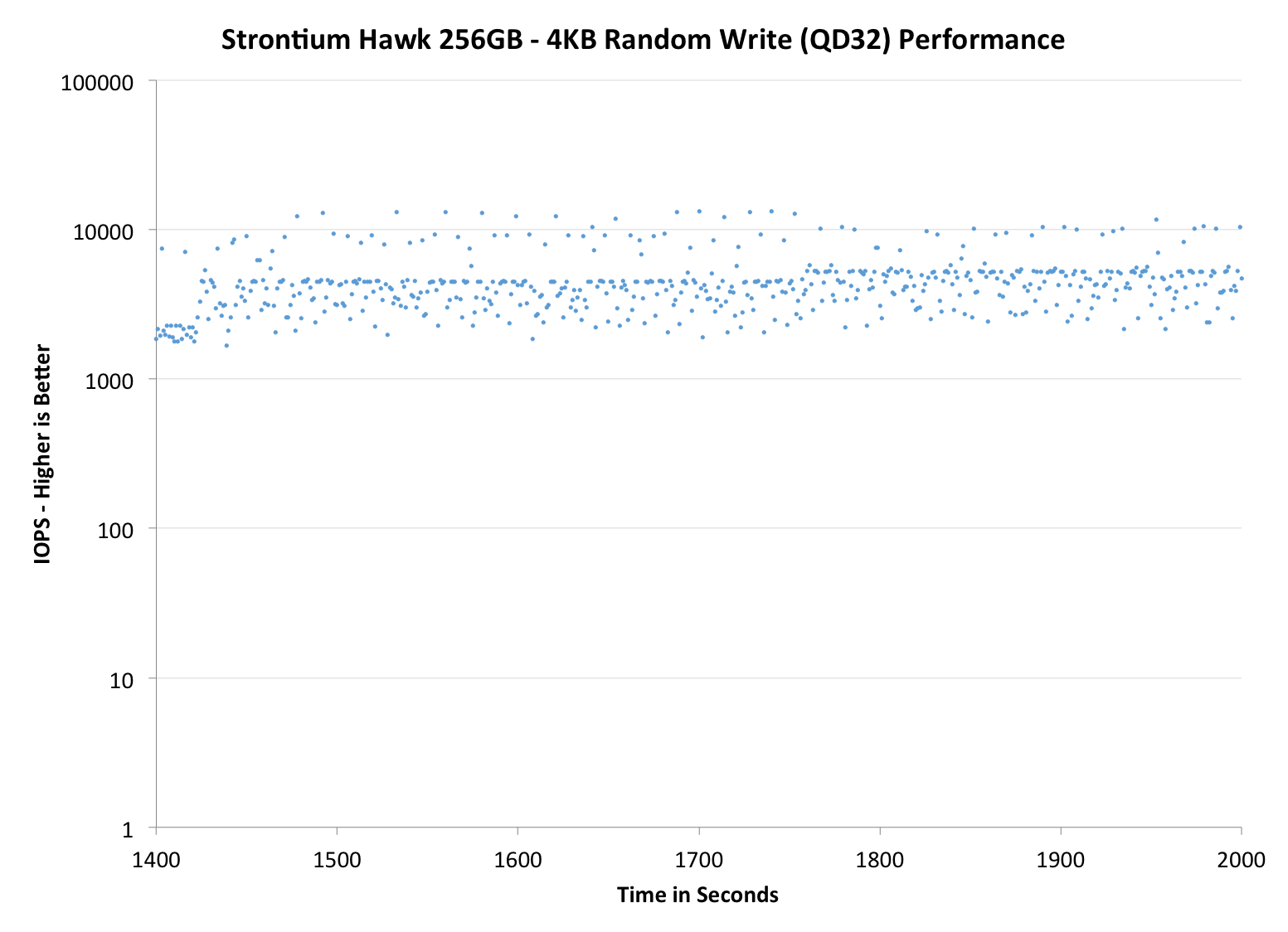

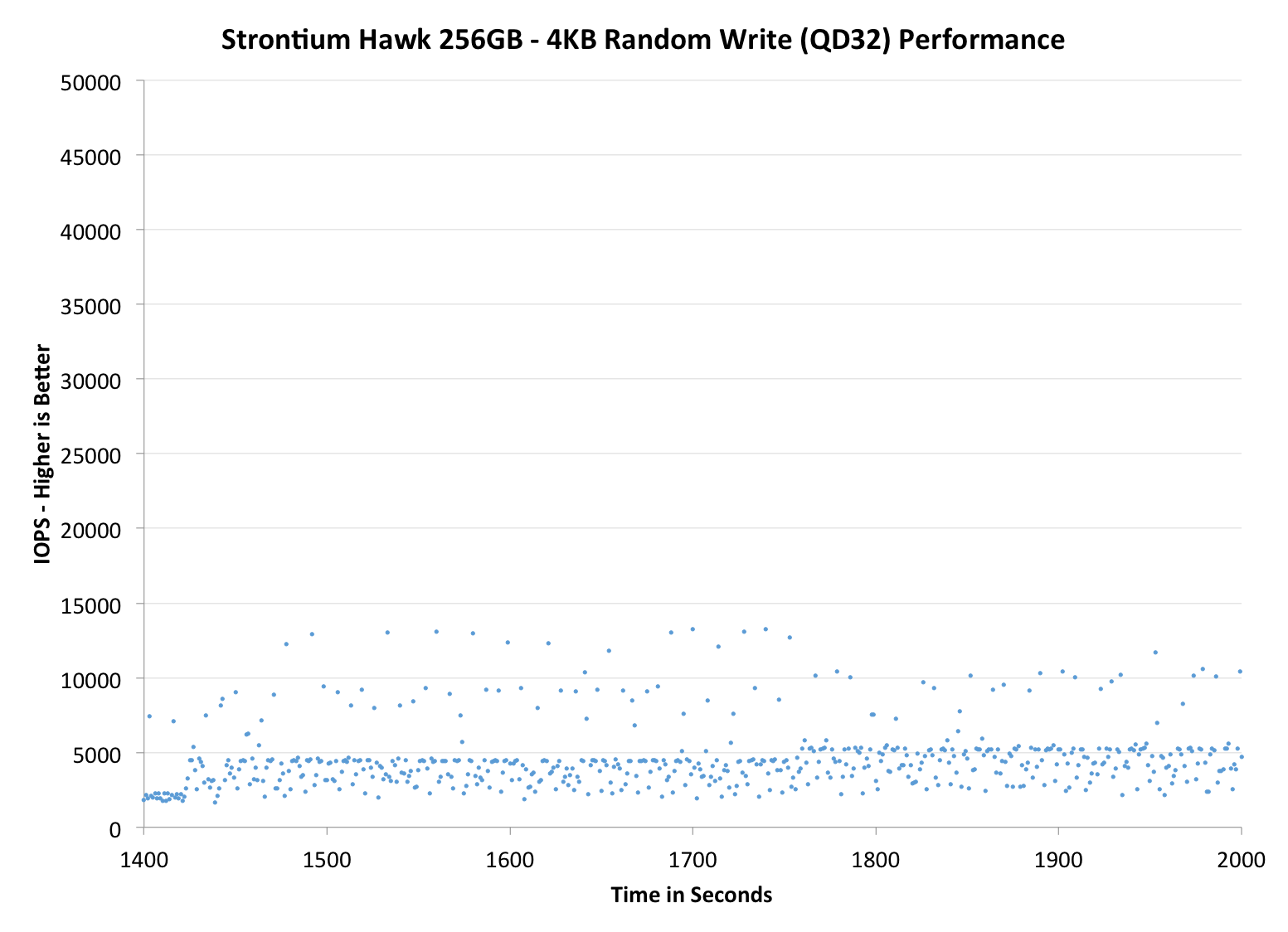

The second set of graphs zooms in on the beginning of steady-state operation for the drive (t=1400s). The third set also looks at the beginning of steady-state operation, but on a linear performance scale.

[Graph: IOPS vs. time. Data sets: Strontium Hawk 256GB, Corsair Neutron 240GB, Crucial M500 960GB, Samsung SSD 840 Pro 256GB, SanDisk Extreme II 480GB; each at Default and 25% Spare Area]

Performance consistency isn't very good. It's not horrible, but compared to the best consumer drives it leaves a lot to be desired. Fortunately, there are no major dips in performance: IOPS stays above 1,000 at every data point, so users shouldn't experience any significant slowdowns. Increasing the over-provisioning definitely helps, but IOPS is still not very consistent, swinging between ~2K and 10K, whereas the best drives produce a nearly flat line with little variation.
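The consistency judged visually in these graphs can also be put into numbers. One possible summary (an illustrative choice, not a metric used in this review) is the minimum and the coefficient of variation of the per-second IOPS samples after steady state begins, assumed here to start at t=1400s. A drive swinging between ~2K and 10K IOPS gets a high coefficient of variation; a flat line gets one near zero. The function name is hypothetical and the data below is synthetic.

```python
from statistics import mean, pstdev


def consistency_summary(iops_samples, steady_state_start_s=1400):
    """Summarize per-second IOPS samples from the start of steady state onward.

    iops_samples[i] is the IOPS recorded during second i of the test.
    """
    window = iops_samples[steady_state_start_s:]
    if not window:
        raise ValueError("no samples after the steady-state start time")
    avg = mean(window)
    if avg == 0:
        raise ValueError("mean IOPS is zero; coefficient of variation undefined")
    return {
        "min": min(window),
        "max": max(window),
        "mean": avg,
        # Coefficient of variation: spread relative to the mean (0 = perfectly flat).
        "cv": pstdev(window) / avg,
    }


# Synthetic data: fast at first, then alternating between 10K and 2K IOPS.
samples = [50_000] * 1400 + [10_000, 2_000] * 300
print(consistency_summary(samples))
```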

[Graphs (two sets): IOPS vs. time. Data sets: Strontium Hawk 256GB, Corsair Neutron 240GB, Crucial M500 960GB, Samsung SSD 840 Pro 256GB, SanDisk Extreme II 480GB; each at Default and 25% Spare Area]

26 Comments


ssj3gohan - Wednesday, June 26, 2013

Wait, what?! HIPM and DIPM have been standard features that are always enabled on every intel desktop system since the intel 5 series at least (and AM2+ on the AMD side, which had other reasons). The only reason not to have HIPM and DIPM is your choice of OS. It's a driver-enabled feature, it doesn't work without explicitly enabling it.

Kristian Vättö - Wednesday, June 26, 2013

That's not true. You can do the registry hack and get the option to enable HIPM+DIPM but that will have zero impact on power consumption on a desktop system. We've tried four different motherboards including Intel-branded ones and also both Windows 7 and 8 without success. That's why it took us so long to start doing HIPM+DIPM tests as Anand had to take an Ultrabook apart just for power testing and obviously I don't have access to that system due to geographical issues.

JDG1980 - Tuesday, June 25, 2013

If you want a Toshiba drive, why not just buy a Toshiba drive instead of one rebranded by a no-name company? I don't see the point of this.

JarredWalton - Tuesday, June 25, 2013

It's cheaper and uses the same hardware and firmware?

Kristian Vättö - Tuesday, June 25, 2013

Toshiba has quite poor retail presence, so Strontium may be targeting the markets where the availability of Toshiba SSDs is either very poor or non-existent.

HisDivineOrder - Tuesday, June 25, 2013

My concern would be what happens when they change products and firmwares are... nonexistent. SSDs are still far too temperamental to trust just some nobody who likes to throw caution to the wind.

OCZ can't even be trusted in this regard.

Samsung, Intel, Micron/Crucial, maaaaybe Sandisk.

bji - Tuesday, June 25, 2013

Can OCZ be trusted in *any* regard?

zanon - Tuesday, June 25, 2013

Kristian no they couldn't, seriously what the hell? We *still* have people arguing wrongly in freaking 2013? "Giga" is an SI prefix, it's base-10. That's what it is, anything else is wrong. This is particularly surprising from a technology enthusiast, as tech depends on precision, not random overloaded definitions. Maybe you'd like to suggest that they come up with their own definitions of "kilogram" and "pound" too with whatever mass they feel like? That sounds like a recipe for success!

If they want to show base-2, then they can use KiB/MiB/GiB/TiB/PiB. I don't think those sound the greatest but whatever, they're precise, correct, and the international standard (IEC 60027, published in *1999*). Storage makers were never wrong to use the SI prefixes and have base-10 numbers of sectors.

If anything the problem is Microsoft, who should long since have switched to displaying GB instead of GiB. Even Apple finally updated ages ago.

Kristian Vättö - Tuesday, June 25, 2013

The problem is that Giga is not only an SI prefix, it's also a JEDEC prefix where Giga is defined as 1024^3. I do agree that Giga should only be used as an SI prefix (i.e. meaning 1000^3) but I guess someone at JEDEC thought that since 1000^3 and 1024^3 are "close enough", let's just call them the same.

I also agree that it's Microsoft who should just change to the SI standard and define GB as 1000^3 bytes like Apple did. My point about Intel was that it's useless for a small OEM like Strontium to differ from the norm and I don't see Intel or anyone else switching away from the SI standard because the advertised capacities would end up being smaller than they are now.

KAlmquist - Wednesday, June 26, 2013

"Storage makers were never wrong to use the SI prefixes"

This is, I believe, inconsistent with your preceding paragraph. When disk drive manufacturers came up with their own definition of megabyte to make their disk drives seem larger, that created the same problems you envision if someone came up with their own definitions for "kilogram" and "pound".

As you note, the IEC has decided to endorse the disk drive manufacturers' definition of "megabyte" and has invented the term "mebibyte" to refer to a megabyte. That's a response to the linguistic mess created by the disk drive manufacturers; it doesn't justify creating the mess in the first place.

Meanwhile, as Kristian notes, JEDEC is sticking with the original meaning of the word. Words can acquire new meanings fairly quickly, but it takes a long time (on the order of 100 years) for a word to lose a well-established meaning.
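The size of the gap being argued about is easy to quantify. The sketch below (helper name hypothetical) converts an advertised SI capacity into binary gibibytes, the base-2 figure that Windows labels "GB".

```python
SI_GB = 1000 ** 3  # gigabyte as an SI prefix (what drive makers advertise)
GIB = 1024 ** 3    # gibibyte (IEC binary prefix; JEDEC's "gigabyte")


def gb_to_gib(advertised_gb):
    """Convert an advertised SI capacity in GB to binary GiB."""
    return advertised_gb * SI_GB / GIB


for gb in (240, 256):
    print(f"{gb} GB = {gb_to_gib(gb):.2f} GiB")
# 240 GB = 223.52 GiB
# 256 GB = 238.42 GiB
```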