NVIDIA Demonstrates Logan SoC: < 1W Kepler, Shipping in 1H 2014, More Energy Efficient than A6X?

by Anand Lal Shimpi on July 24, 2013 9:00 AM EST

Ever since its arrival in the ultra mobile space, NVIDIA hasn't really flexed its GPU muscle. The Tegra GPUs we've seen thus far have been ok at best, and in serious need of improvement at worst. NVIDIA often blamed an immature OEM ecosystem unwilling to pay for the sort of large die SoCs necessary in order to bring a high-performance GPU to market. Thankfully, that's all changing. Earlier this year NVIDIA laid out its mobile SoC roadmap through 2015, including the 2014 release of Project Logan - the first NVIDIA ultra mobile SoC to feature a Kepler GPU. Yesterday in a private event at Siggraph, NVIDIA demonstrated functional Logan silicon for the very first time.

NVIDIA got Logan silicon back from the fabs around 3 weeks ago, making it almost certain that we're dealing with some form of 28nm silicon here and not early 20nm samples.

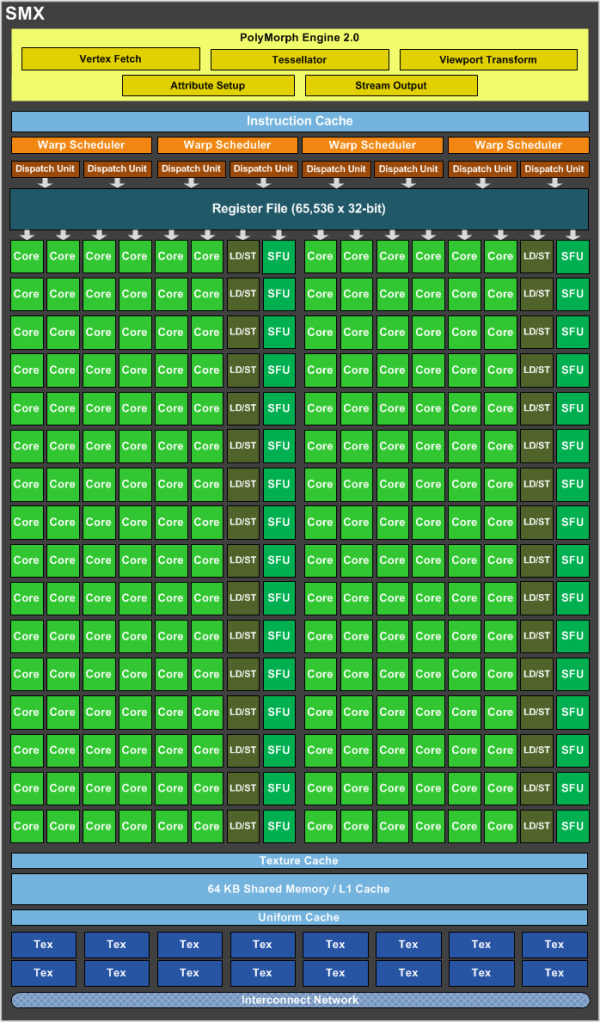

NVIDIA isn't talking about CPU cores, but it's safe to assume that Logan will be another 4+1 arrangement of cores - likely still based on ARM's Cortex A15 IP (but perhaps a newer revision of the core). On the GPU front, NVIDIA confirmed our earlier speculation that Logan includes a single Kepler SMX:

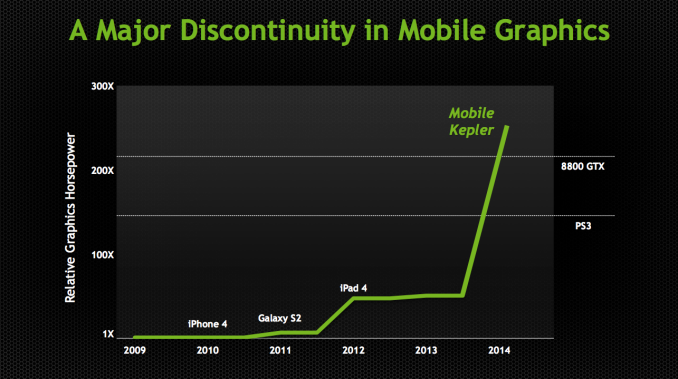

One Kepler SMX features 192 CUDA cores. NVIDIA isn't talking about shipping GPU frequencies either, but it did provide this chart to put Logan's GPU capabilities into perspective:

Don't get too excited as we're looking at a comparison of GFLOPS and not game performance, but the peak theoretical ALU bound performance of mobile Kepler should exceed that of a PlayStation 3 or GeForce 8800 GTX (memory bandwidth is another story however). If we look closely at NVIDIA's chart and compare mobile Kepler to the iPad 4, we get a better idea of what sort of clock speeds NVIDIA would need to attain this level of performance. Doing some quick Photoshop estimation it looks like NVIDIA is claiming mobile Kepler has somewhere around 5.2x the FP power of the PowerVR SGX 554MP4 in the iPad 4 (76.8 GFLOPS). That works out to be right around 400 GFLOPS. With a 192 core implementation of Kepler, you get 2 FLOPS per core per cycle, or 384 FLOPS per cycle. To hit 400 GFLOPS you'd need to clock the mobile Kepler GPU at roughly 1GHz (400 / 384 is about 1.04GHz). That's certainly doable from an architectural standpoint (although we've never seen it done on any low power 28nm process), but it's probably a bit too high for something like a smartphone.
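For anyone who wants to redo the back-of-the-envelope math, here is a minimal Python sketch of the estimate above. The 5.2x ratio is only an eyeballed reading of NVIDIA's chart, and the function and variable names are made up for illustration; none of this reflects confirmed Logan clock speeds.

```python
# Rough clock estimate for mobile Kepler, using only figures quoted in the article.
# The 5.2x ratio is an eyeballed estimate from NVIDIA's chart, not an official number.

IPAD4_GFLOPS = 76.8            # PowerVR SGX 554MP4 in the iPad 4
KEPLER_VS_IPAD4 = 5.2          # estimated FP advantage read off the chart
CUDA_CORES = 192               # one Kepler SMX
FLOPS_PER_CORE_PER_CYCLE = 2   # the article's 2 FLOPS per core, per cycle


def estimate_clock_ghz(target_gflops, cores, flops_per_core_per_cycle):
    """Return the clock (in GHz) needed to reach target_gflops of peak throughput."""
    flops_per_cycle = cores * flops_per_core_per_cycle  # 384 for one SMX
    # GFLOPS divided by FLOPS-per-cycle gives billions of cycles per second (GHz).
    return target_gflops / flops_per_cycle


target_gflops = IPAD4_GFLOPS * KEPLER_VS_IPAD4
clock_ghz = estimate_clock_ghz(target_gflops, CUDA_CORES, FLOPS_PER_CORE_PER_CYCLE)

print(f"Estimated peak throughput: {target_gflops:.1f} GFLOPS")
print(f"Clock needed to reach it:  {clock_ghz:.2f} GHz")
```

Changing `KEPLER_VS_IPAD4` shows how sensitive the clock estimate is to the chart reading: a small error in the ratio shifts the implied frequency by the same proportion.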

NVIDIA didn't want to talk frequencies but they did tell me that we might see something this fast in some sort of a tablet. I suspect that most implementations will be clocked significantly lower. Even at half the frequency though, we're still talking about roughly PlayStation 3 levels of FP power out of a mobile SoC. We know nothing of Logan's memory subsystem, which obviously plays a major role in real world gaming performance, but there's no getting around the fact that Logan's Kepler implementation means serious business. For years we've lamented NVIDIA's mobile GPUs; Logan looks like it's finally going to change that.

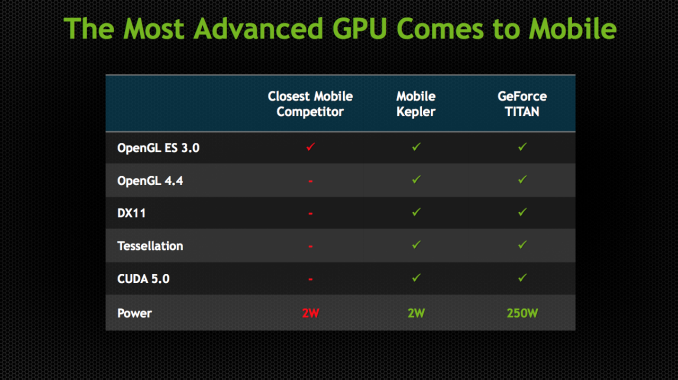

API Support and Live Demos

Unlike previous Tegra GPUs, Kepler is a fully unified architecture and OpenGL ES 3.0, OpenGL 4.4 and DirectX 11 compliant. The API compliance alone is a huge step forward for NVIDIA. It's also a big one for game developers looking to move more seriously into mobile. Epic's Tim Sweeney even did a blog post for NVIDIA talking about Logan's implementation of Kepler and how it brings feature parity between PCs, next-gen consoles and mobile platforms. NVIDIA responded in kind by running some Unreal Engine 4 demos on Android on a Logan test platform. That's really the big story behind all of this. With Logan, NVIDIA will bring its mobile GPUs up to feature parity with what it's shipping in the PC market. Game developers looking to port games between console, PC, tablet and smartphone should have an easier job of doing that if all platforms support the same APIs. Logan will take NVIDIA from being very behind in API support (with no OpenGL ES 3.0 support) to the head of the class.

NVIDIA took its Ira demo, originally run on a Titan at GTC 2013, and got it up and running on a Logan development board. Ira did need some work to make the transition to mobile. The skin shaders were simplified, smaller textures were used and the rendering resolution was dropped to 1080p. NVIDIA claims this demo was done in a 2 - 3W power envelope.

The next demo is called Island and was originally shown on a Fermi desktop part. Running on Logan/mobile Kepler, this demo shows OpenGL 4.3 and hardware tessellation working.

The development board does feature a large heatspreader, but that's not too unusual for early silicon just out of bring up. Logan's package size should be comparable to Tegra 4, although the die size will clearly be larger. The dev board is running Android and is connected to a 10.1-inch 1920 x 1200 touchscreen.

141 Comments

yhselp - Thursday, July 25, 2013

At some point in the near future, if Intel doesn't decide to do it themselves, would it be possible for an OEM to licence NVIDIA IP and integrate it into an Intel ultra mobile design?

JlHADJOE - Friday, July 26, 2013

Now that would be interesting.

Maybe Nokia would do it for an upcoming W8 phone? Silvermont Atom + Logan. Bringing Windows, Intel and Nvidia from your desktop to your phone.

yhselp - Friday, July 26, 2013

Sounds good, but there might be a few problems. Microsoft wants homogeneity between Windows phones and so they set requirements for the SoC (among other things). Back in the days of WP7 these rules were quite strict, which meant an OEM didn't have complete freedom in choosing the SoC. Nowadays, as far as I understand, there's only a set of minimum system requirements that an OEM has to meet. An Intel/NVIDIA SoC would obviously be more than powerful enough, but I wonder whether Microsoft would have anything to say about such an implementation. Furthermore, there's the question of the benefits of all this; while the NT kernel is there, the mobile OS would need some work to make proper use of all that power. Not to mention, having the same architecture and API doesn't immediately translate to running the exact same software from Windows 'PC' on Windows 'mobile'.

A Silvermont/Logan implementation, while great, is not that exciting. Next-gen Silvermont (hopefully wider) + Maxwell on smaller fab would be quite interesting.

HighTech4US - Thursday, July 25, 2013

Anand: NVIDIA got Logan silicon back from the fabs around 3 weeks ago, making it almost certain that we're dealing with some form of 28nm silicon here and not early 20nm samples.

I believe this is a wrong assumption and that the Logan sample is on 20nm.

As of April 14, 2013 TSMC has had 20nm risk production available. More than enough time for Logan to be produced on 20nm.

http://www.cadence.com/Community/blogs/ii/archive/...

Quote: While TSMC has four "flavors" of its 28nm process, there is one 20nm process, 20SoC. "20nm planar HKMG [high-k metal gate] technology has already passed risk production with a very high yield and we are preparing for a very steep ramp in two GIGAFABs," Sun said.

Quote: Sun noted that 20SoC uses "second generation," gate-last HKMG technology and uses 64nm interconnect. Compared to the 28HPM process, it can offer a 20% speed improvement and 30% power reduction, in addition to a 1.9X density increase. Nearly 1,000 TSMC engineers are preparing for a "steep ramp" of this technology.

Refuge - Thursday, July 25, 2013

I think you have high hopes, good sir, but I disagree with you on this one.

I would be struck silly if this came on a 20nm process when released.

watersb - Friday, July 26, 2013

Crikey.

wizfactor - Friday, July 26, 2013

Those are some fantastic numbers! While I'm all for seeing Logan in future SOCs, I'm not a huge fan of seeing them in Tegra. If developers need to rebuild their mobile games (see Riptide GP for Android) just to optimize it for your chip, you're doing something wrong. I have yet to hear anecdotes about how pleasant an experience it is to port an Android game to a proprietary chip such as Tegra.

With that said, I'd love to see this GPU on other chipsets such as the Exynos, or even Apple's A-series chips. I can't help but think that Nvidia is teasing Apple into a license agreement here. I mean, the very fact that Apple could get more than double the graphics performance on their iPad 4 with a Kepler GPU under the exact same power constraint must ring music to their ears. They could either dramatically increase iPad performance and eliminate any performance woes involved with driving that Retina Display, or they could get a massive boost in battery life while keeping performance levels similar.

Of course, it's Apple's call if they want to swap out Imagination for Nvidia. Let's hope Cupertino isn't too attached to its investment in the former.

michael2k - Friday, July 26, 2013

Why would they want to swap out with NVIDIA? The PowerVR Series 6, of comparable performance, was available for license last January, and expected to be out in production this year. What you miss is that with the PowerVR S6 they can get more than four times the graphics performance of an iPad 4 one year earlier than with a Kepler GPU; why would they wait a year then?

jipe4153 - Tuesday, July 30, 2013

There isn't a single high end PowerVR 6 such as the G6630 planned for production yet. It's just been paper launched.

- No engineering samples, nothing!

- Just numbers on a paper!

Furthermore, their peak paper product, the G6630, is slated for a peak performance of ~230 GFLOPS, which is almost half the performance that Logan is sporting!

Logan has the winning recipe:

- Most powerful hardware

- Good efficiency

- Best software and driver stack on the market!

This is going to be a major upset on the mobile market.

phoenix_rizzen - Friday, December 20, 2013

And yet, Apple shipped with a series 6 GPU and not an nVidia one. Where's the major upset?