Some Thoughts about the iPhone 5S Camera Improvements

by Brian Klug on September 13, 2013 1:19 AM EST

Posted in: Smartphones, Apple, Mobile, iPhone 5S

One of the big improvements that comes with the iPhone 5S is a new camera system, with a faster aperture for more light throughput, bigger 8 MP sensor with correspondingly bigger pixels, dual LED color temperature matching flash, and improved ISP. I thought it worth going over some of the changes before we look at it in the review since there’s already honestly quite a lot that I’ve gathered about the 5S system just from examining EXIF on the images from Apple’s uploaded sample images gallery, what I saw in the demo room on a 5S, and what has been said publicly.

The camera has become one of the major axes of differentiation in the smartphone space. It doesn’t take much inspection to see that it has become an emphasis everywhere. Nokia has long had halo devices that establish its leadership in smartphone cameras, HTC recently pushed its imaging emphasis very far with the One’s camera system (and arguably the system before it for the One X and S), and even the recent Moto X made an attempt to do something fundamentally different with a color filter array including a clear pixel. Obviously the iPhone sits somewhere in that fray, if nothing else because Apple really started demonstrating above-normal camera competency around the 3GS and 4 by shipping a system of its own design and specification. Since then, Apple has continued to push parts of its imaging chain further, with a custom ISP starting with the 4S and a custom Sony CMOS starting with the 5. Obviously the statistic that Apple will cite is that the iPhone cameras take the top 3 spots on Flickr for camera popularity, making them arguably some of the most important cameras out there. Given my optical engineering background, watching smartphone cameras evolve and move in different directions is of course particularly interesting, since it’s a place in the camera world with unusual constraints. As a photographer I’m also interested in finding which device ultimately has the best tradeoff between usability and camera quality, and Apple has historically made sound choices with its system.

iPhone 4, 4S, 5, 5S Cameras

| Property | iPhone 4 | iPhone 4S | iPhone 5 | iPhone 5S |
| --- | --- | --- | --- | --- |
| CMOS Sensor | OV5650 | IMX145 | IMX145-Derivative | ? |
| Sensor Format | 1/3.2" (4.54x3.42 mm) | 1/3.2" (4.54x3.42 mm) | 1/3.2" | ~1/3.0" (4.89x3.67 mm) |
| Optical Elements | 4 Plastic | 5 Plastic | 5 Plastic | 5 Plastic |
| Pixel Size | 1.75 µm | 1.4 µm | 1.4 µm | 1.5 µm |
| Focal Length | 3.85 mm | 4.28 mm | 4.10 mm | 4.12 mm |
| Aperture | F/2.8 | F/2.4 | F/2.4 | F/2.2 |
| Image Capture Size | 2592 x 1936 (5 MP) | 3264 x 2448 (8 MP) | 3264 x 2448 (8 MP) | 3264 x 2448 (8 MP) |
| Average File Size | ~2.03 MB (AVG) | ~2.77 MB (AVG) | ~2.3 MB (AVG) | 2.5 MB (AVG) |

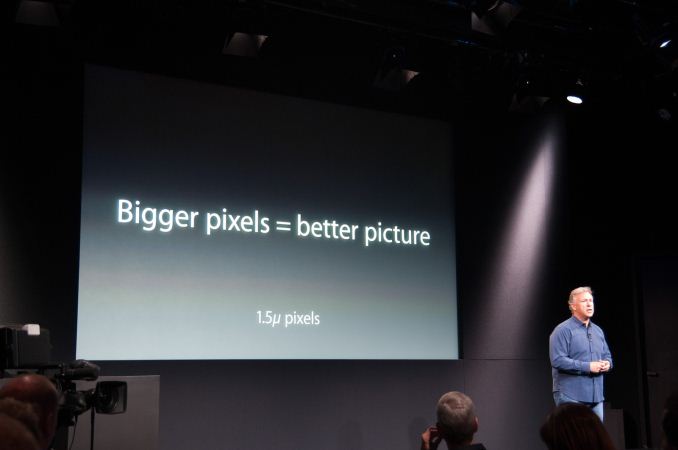

With the iPhone 5S camera, Apple makes a number of interesting changes that I already touched on. Rather than march down the pixel size roadmap to 1.1µm (and beyond that the upcoming 0.9µm step) to either increase pixel count with an equivalent sized sensor, or shrink the sensor and optical stack and maintain the same number of pixels, Apple has chosen a strategy like HTC’s and gone the other way. Apple has increased pixel size to 1.5µm (from 1.4µm), while keeping pixel count the same, thus creating a larger sensor. I remember telling Anand and a number of other people that if Apple even just stayed at 1.4µm for the 5S, it would mean validation of everything I ever said about the 1.1µm size and beyond in my prior smartphone imaging and optics presentation.

Obviously seeing Apple put up a "bigger pixels = better picture" slide during the keynote and move the opposite direction by bucking the trend just like HTC did makes me a lot more comfortable about the future of Apple’s smartphone imaging roadmap. Increasing pixel size is arguably the better but simultaneously harder direction for the industry to increase image quality, low light sensitivity, and SNR. Keep in mind that I’m talking about pixel pitch here, so Apple’s increase from 1.4µm to 1.5µm pixels really means a 14.8 percent increase in pixel area and thus integration area. If you do the math out, Apple moves from the relatively standard 1/3.2" CMOS sensor size to around 1/3" in size, similar to the HTC One. Increasing sensor size and pixel size really will make a measurable difference in low light sensitivity, noise, and dynamic range. I don’t know any specifics but given Apple’s ability to get Sony to make a one-off custom CMOS for the iPhone 5, I wouldn’t be surprised to see Sony supply a sensor of Apple’s specification this time for the 5S, one of the nice things about having their kind of volume.
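The "do the math out" step can be sketched in a few lines. This is only an illustration of the arithmetic, assuming square pixels and that the active area is exactly the 3264 x 2448 capture size times the pixel pitch; the function names are made up for this example.

```python
import math

def sensor_size_mm(width_px, height_px, pitch_um):
    """Active-area width, height and diagonal in mm, assuming square pixels."""
    width_mm = width_px * pitch_um / 1000.0
    height_mm = height_px * pitch_um / 1000.0
    return width_mm, height_mm, math.hypot(width_mm, height_mm)

def pixel_area_gain(old_pitch_um, new_pitch_um):
    """Fractional increase in pixel area when the pitch grows."""
    return (new_pitch_um / old_pitch_um) ** 2 - 1.0

# 8 MP capture size from the table, at the 5S pitch of 1.5 um
w, h, d = sensor_size_mm(3264, 2448, 1.5)
gain = pixel_area_gain(1.4, 1.5)

print(f"active area: {w:.3f} x {h:.3f} mm, diagonal {d:.2f} mm")
print(f"pixel area gain: {gain:.1%}")
```

The width and height land on the roughly 4.89 x 3.67 mm listed in the table, and the area gain works out to about 14.8 percent because area scales with the square of the pitch.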

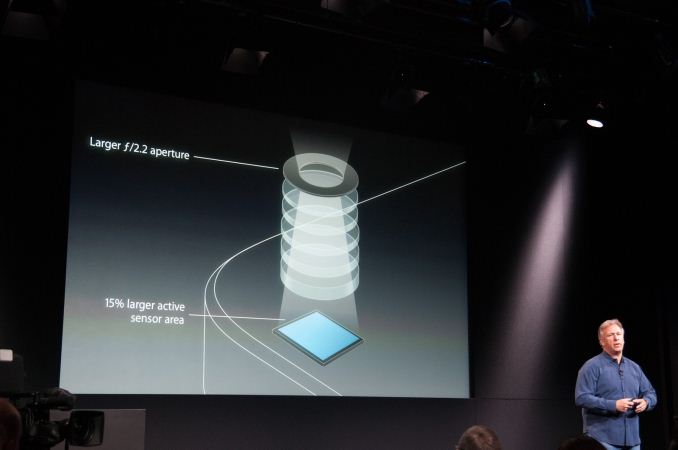

At the same time, the optical design has improved to increase the amount of light passed through the system. Apple’s 4S and 5 systems were F/2.4; the iPhone 5S system moves down a quarter stop to F/2.2. I was hoping for F/2.0 like we see a number of other OEMs shipping (HTC, Nokia), but F/2.2 might be a logical tradeoff to keep aberrations down and not run into some of the stray light issues that I’ve seen crop up in those other systems. The 5 had stray light issues that famously resulted in some purple fringing already at F/2.4, which people falsely attributed to the sapphire cover glass (which is actually colorless). It remains to be seen whether the lower F/# on the 5S increases them, though I wager the attention given to this issue probably resulted in some correspondingly better anti-reflection coatings and stray light management.
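The quarter stop figure follows from the fact that light throughput scales with the inverse square of the F/#. A small sketch of that relationship (function names are illustrative, and lens transmission losses are ignored):

```python
import math

def light_gain(f_old, f_new):
    """Ratio of light passed at f_new relative to f_old (ignores transmission losses)."""
    return (f_old / f_new) ** 2

def stops_gained(f_old, f_new):
    """Difference in stops; one stop is a doubling of light."""
    return math.log2(light_gain(f_old, f_new))

to_f22 = stops_gained(2.4, 2.2)   # the actual 5 -> 5S change
to_f20 = stops_gained(2.4, 2.0)   # the F/2.0 some other OEMs ship

print(f"F/2.4 -> F/2.2: {to_f22:.2f} stops, {light_gain(2.4, 2.2):.2f}x light")
print(f"F/2.4 -> F/2.0: {to_f20:.2f} stops, {light_gain(2.4, 2.0):.2f}x light")
```

F/2.4 to F/2.2 comes out at about a quarter of a stop, or roughly 19 percent more light, while F/2.0 would have been about half a stop.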

Focal length changes slightly, from 4.10 mm in the 5 to 4.12 mm in the 5S. The difference in sensor size and thus crop factor gives a 35mm equivalent focal length of around 31mm in the 5 and 29.7mm in the 5S. Shorter focal length has generally been a tradeoff for a while to decrease the z-height of the module further, and you see a lot of smartphone vendors hovering around 28mm or slightly lower, which is relatively wide. Marketing will then turn around and market the wider angle like it’s some positive thing, which is hilarious. Apple has thus far been steadfast at staying around 30mm, but there’s no denying that the 5S will indeed be a bit wider angle than the 5 in practice. Ideally I’d love to have a smartphone module with around a 35mm focal length in 35mm equivalent numbers.
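For reference, here is one common way to compute a 35mm equivalent focal length: scale by the ratio of sensor diagonals. This sketch assumes the sensor dimensions from the table above (using the 1/3.2" figures for the iPhone 5) and a 36 x 24 mm reference frame, so the outputs are only as good as those estimates.

```python
import math

# Diagonal of a 36 x 24 mm full frame, the usual 35mm reference
FULL_FRAME_DIAGONAL_MM = math.hypot(36.0, 24.0)

def equivalent_focal_length(focal_mm, sensor_w_mm, sensor_h_mm):
    """35mm equivalent focal length using the diagonal crop factor."""
    crop_factor = FULL_FRAME_DIAGONAL_MM / math.hypot(sensor_w_mm, sensor_h_mm)
    return focal_mm * crop_factor

# Sensor dimensions are the estimates from the comparison table
iphone5_equiv = equivalent_focal_length(4.10, 4.54, 3.42)
iphone5s_equiv = equivalent_focal_length(4.12, 4.89, 3.67)

print(f"iPhone 5:  {iphone5_equiv:.1f} mm equivalent")
print(f"iPhone 5S: {iphone5s_equiv:.1f} mm equivalent")
```

With these inputs the 5 lands a little above 31mm and the 5S a little above 29mm, slightly lower than the 29.7mm quoted above. The result is sensitive to the assumed sensor dimensions, and the EXIF simply rounds to 30mm.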

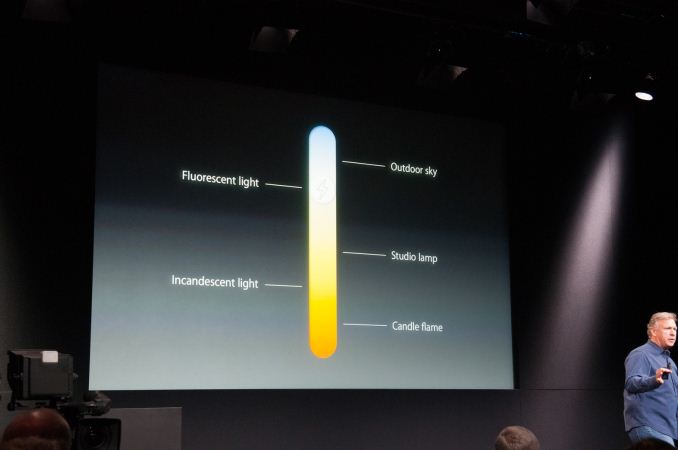

The most visible and talked about change for the 5S imaging system thus far is the dual LED “true tone” flash, which really is a system with two LEDs of different color temperature. The spectral output of LEDs tends to be a bunch of narrow bandwidth spikes, which results in weird color rendering (analogous to the sometimes poor color rendering index of LED lightbulbs or CCFLs). In addition, white LED flashes just end up having a blue tint compared to Xenon. The solution is to add another color temperature alongside, then mix the two LEDs to get the desired color temperature that matches the scene. I actually saw NVIDIA doing this dual color temperature LED flash mixing on their Tegra 4 reference tablet back before MWC, and this is something other OEMs have been talking about. Apple just has the first device to market with it.

I played around with the flash in the demo area, and the 5S system pre-flashes, computes the correct flash amplitude to match scene color temperature, and then fires a flash during capture with the right color temperature.
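To make the mixing idea concrete, here is a deliberately simplified sketch. It is not Apple's algorithm: it assumes two hypothetical LED color temperatures (2700 K and 5500 K, chosen only for illustration) and approximates the blend by interpolating linearly in mired (reciprocal color temperature), which is a rough stand-in for proper chromaticity mixing.

```python
def mired(kelvin):
    """Convert color temperature in kelvin to mired (micro reciprocal degrees)."""
    return 1e6 / kelvin

def warm_led_fraction(target_k, warm_k, cool_k):
    """Share of flash output to drive from the warm LED.

    Linear interpolation in mired space, clamped to [0, 1] because the
    mix cannot go warmer or cooler than the LEDs themselves.
    """
    fraction = (mired(target_k) - mired(cool_k)) / (mired(warm_k) - mired(cool_k))
    return min(1.0, max(0.0, fraction))

# Hypothetical LED color temperatures, not published values
WARM_K, COOL_K = 2700.0, 5500.0

for scene_k in (2700.0, 3500.0, 5500.0, 6500.0):
    warm = warm_led_fraction(scene_k, WARM_K, COOL_K)
    print(f"scene {scene_k:.0f} K -> warm {warm:.0%}, cool {1 - warm:.0%}")
```

A pre-flash measurement of scene color temperature would feed `target_k`, and the two fractions would then set the relative drive of each LED during capture.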

The result is a photo that does look better in terms of color rendering and temperature. Personally I still have a deep seated dislike of on-camera or direct flash, and only use it when natural light is absolutely insufficient to get a photo. I don’t think this will really change my feelings about on-camera flash, but if you do have to use flash to light a dark scene, this makes it a lot less terrible.

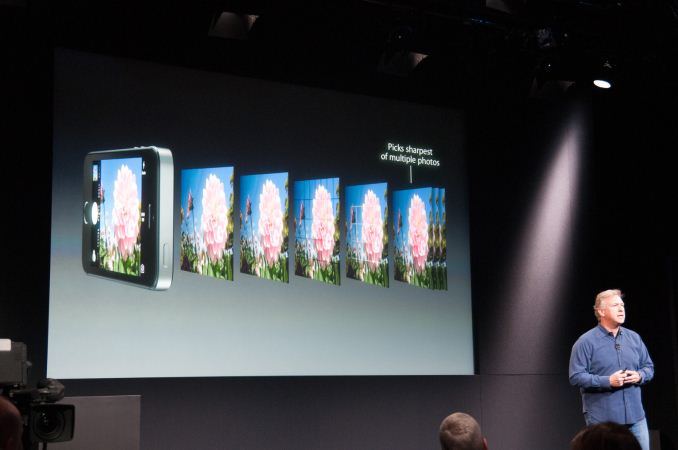

Finally there are improvements to ISP and video encode. The 5S includes a further improved ISP, though I have no way of knowing what’s new inside beyond what Apple stated during the keynote, and in general SoC ISP remains a closely guarded secret for all of the silicon players. Apple purports their new ISP has improved AWB, AE, new local tone mapping, new autofocus matrix metering with 15 focus zones, and automatic selection of the sharpest image in the capture buffer when you finally do press capture. Of course there’s also a new burst capture mode which activates when holding down the capture button and captures at 10 FPS. I held this down in the demo area and took around 500 images without the speed slowing down. Apple is probably buffering these in DRAM and writing them to NAND at the same time.
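Apple hasn't said how the sharpest image is chosen. One widely used generic focus measure is the variance of the Laplacian, and the sketch below uses it purely as an illustration of what "pick the sharpest frame in the buffer" could look like. Frames here are tiny grayscale grids stored as nested lists; every name is made up for the example.

```python
def laplacian_variance(image):
    """Variance of the 4-neighbor Laplacian over interior pixels.

    Higher values mean stronger edges, a common proxy for sharpness.
    `image` is a 2D list of grayscale values, at least 3 x 3.
    """
    height, width = len(image), len(image[0])
    responses = []
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            responses.append(
                image[y - 1][x] + image[y + 1][x]
                + image[y][x - 1] + image[y][x + 1]
                - 4 * image[y][x]
            )
    mean = sum(responses) / len(responses)
    return sum((r - mean) ** 2 for r in responses) / len(responses)

def sharpest_index(frames):
    """Index of the frame with the highest focus measure."""
    return max(range(len(frames)), key=lambda i: laplacian_variance(frames[i]))

# Toy buffer: a smeared edge (smooth ramp) and a crisp edge (hard step)
blurred = [[0, 85, 170, 255] for _ in range(4)]
sharp = [[0, 0, 255, 255] for _ in range(4)]

best = sharpest_index([blurred, sharp])
print("sharpest frame index:", best)
```

On real frames the same measure would normally be computed on a downscaled luminance image to keep the cost manageable at 10 FPS.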

On the video side the 5S now includes a 120FPS capture mode. The camera UI has a “slow-mo” option beyond the video mode, which captures 720p120 video. The framerate in this mode in the camera preview is visibly faster, capture works like normal, but inside the playback UI are two scrubbers which let you play back that 120FPS video at 30 FPS, making it look 4x slower. I’m unclear whether the 5S makes the raw 720p120 video available, but I really hope that’s the case. I do wish that there was 1080p60 capture, but that might be exposed through the video capture APIs somewhere, again it’s still unclear.

What’s missing from the 5S is OIS. I didn’t ever think it was in the cards for the 5S, so its absence isn’t a surprise, but it’s a substantial improvement for video and longer exposures. The reality is that an increasing number of players are including it – Nokia, HTC, and LG, and that list will only continue getting larger. Its absence isn’t the end of the world, but the stronger OIS implementations make a substantial difference for both videos and still images. Apple’s going the electronic and computational route with further improvements to its EIS auto image stabilization, which combines the sharp parts of multiple images together to get a single sharp picture. Almost every smartphone camera system now has a back buffer of images coming from the sensor. Apple purports that it is able to do some computational analysis, grab sections of the last few images and combine them to produce a sharp result. This will help in good lighting where the system can grab a lot of images quickly with good exposure, but obviously doesn’t fundamentally solve the low light problem where the exposures themselves are still longer. Grabbing photographs without blur remains a challenging problem for everyone, and obviously OIS doesn’t help with scenes where the subject is moving either.

I’ll add that I’m still unclear whether the 2x2 pixel binning remains in place with the 5S camera. I would be surprised if it wasn’t in place however, especially given the 720p120 recording mode which would require it to get the higher sensitivity required for higher framerate. Higher framerate video capture means less integration time per frame to gather light.
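The tradeoff between framerate and light gathering can be put into rough numbers. The sketch below assumes the longest possible exposure is one full frame period and that 2x2 binning simply sums four 1.5µm pixels; it ignores readout time and noise, so treat it as back-of-the-envelope only.

```python
def max_exposure_s(fps):
    """Upper bound on per-frame integration time: one frame period."""
    return 1.0 / fps

def binned_area_um2(pitch_um, bin_factor):
    """Effective collecting area when bin_factor x bin_factor pixels are combined."""
    return (pitch_um * bin_factor) ** 2

exposure_penalty = max_exposure_s(30) / max_exposure_s(120)
binning_gain = binned_area_um2(1.5, 2) / binned_area_um2(1.5, 1)
playback_slowdown = 120 / 30

print(f"120 FPS allows {exposure_penalty:.0f}x less integration time than 30 FPS")
print(f"2x2 binning gives {binning_gain:.0f}x the collecting area per output pixel")
print(f"120 FPS footage played at 30 FPS looks {playback_slowdown:.0f}x slower")
```

Under these assumptions the 4x area from 2x2 binning roughly offsets the 4x shorter integration window at 120 FPS, which is why binning in that mode would make sense.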

Selected EXIF from Apple's iPhone 5S Sample Photos:

- Make : Apple
- Camera Model Name : iPhone 5s
- Exposure Time : 1/1866
- F Number : 2.2
- Exposure Program : Program AE
- ISO : 32
- Metering Mode : Spot
- Flash : Off, Did not fire
- Focal Length : 4.1 mm
- Focal Length In 35mm Format : 30 mm
- GPS Altitude : 15.8 m Above Sea Level
- GPS Latitude : 38 deg 1' 22.22" N
- GPS Longitude : 122 deg 31' 22.23" W
- GPS Position : 38 deg 1' 22.22" N, 122 deg 31' 22.23" W
- Image Size : 3264x2448

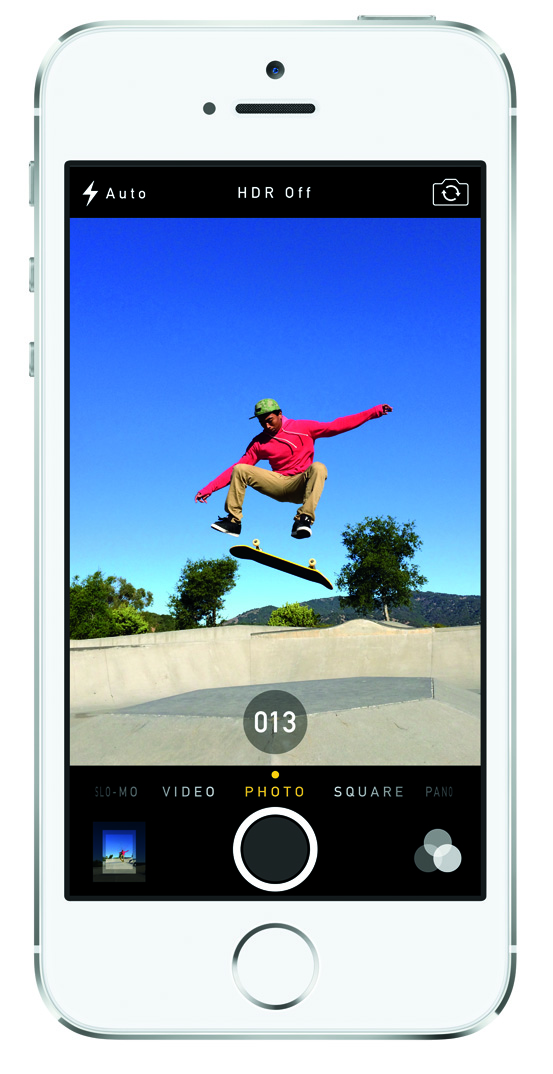

A few iPhones ago, Apple started posting sample images from their new iPhone camera in full resolution taken straight from the device on their own site right after the announcement. That has turned into common practice now, and the 5S is no exception. I’ve gone over the images each time and looked at EXIF for whatever info is available (and the GPS tags that generally were left on), and did the same for the 5S. Interestingly enough Apple only left location tagging on for one of the 6 images (the skateboard pic) this time around.

The photo samples look very good. None of the photos go over ISO 100, in fact most of them are at a very low ISO 32 which keeps noise down. Exposure times are also very fast. Only image 3 is somewhat low light looking. I was hoping Apple would include a few samples showing low light performance indoors with and without flash, but I guess I’m not surprised Apple would pick scenarios that show off the best quality rather than situations that might strain it. For that we’ll have to wait.
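One way to see how bright these scenes are is to turn the EXIF values into an exposure value normalized to ISO 100. The sketch below uses the standard relation EV = log2(N²/t), shifted by log2(ISO/100), with the settings from the selected EXIF above (F/2.2, 1/1866 s, ISO 32).

```python
import math

def ev100(f_number, exposure_s, iso):
    """Exposure value normalized to ISO 100 from camera settings."""
    return math.log2(f_number ** 2 / exposure_s) - math.log2(iso / 100.0)

# Settings from the selected EXIF of Apple's 5S sample photo
sample_ev = ev100(2.2, 1.0 / 1866.0, 32)
print(f"EV100 of the sample: {sample_ev:.1f}")
```

That comes out just under EV 15, which is the classic "sunny 16" daylight level, so this sample at least says very little about low light performance.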

- Image number one, a macro shot of a flower, has a nice looking blurry background effect without distracting or artificial looking bokeh. For a smartphone camera this looks very good.

- Image number two is a top down shot of some chilis on a wood table, relatively planar. What’s awesome here is it lets me see immediately that there’s good sharpness across the entire field of view – the edges don’t get soft like a number of other smartphones do. Maintaining good MTF across the entire field of view is difficult, especially at extreme field angles like the corners. While there’s definitely some falloff, it’s very controlled on this 5S sample photo. In addition there’s no weird distortion; the horizontal grains in the wood remain horizontal, for example.

- Image number three is of a jellyfish, and is the only somewhat lower light photo, though I’m not convinced this is what I’d consider low light. It looks good but moreover illustrates that the auto exposure algorithm in the 5S isn’t just blindly taking exposure over the whole scene (the black aquarium) – spot metering mode is noted in the EXIF, indicating the user tapped the jellyfish.

- Image four is an impressive sunset, although here the bokeh is a bit more distracting and weird looking in the background. Sharpness is retained however in the grass close to the sunset bloom, without washing out. I also don’t see any fringing.

- Image five is of some kids in a pool; skin tones look great here against the water, and sharpness is also great.

- Image six has action nicely frozen and was probably captured using burst mode. If there’s any opportunistic image recombination here for the scene, I can’t detect it in the output result.

The camera UI in iOS 7 also gets a substantial overhaul. Gone is the still image capture preview which is fit to the long axis of the iPhone 5/5C/5S display, which crops off the top and bottom of the image. Thankfully, Apple has come to its senses and changed the UI so the full field of view of the image is visible, and what you see is now what you get. If only Google would now follow suit and do the same to the AOSP/stock Android UI.

The camera UI has completely different iconography and styling from the old one. Gone is the video toggle, and in its place is a mode ring which switches between slow-mo, videos, photo, square, and panorama. This eliminates some of the feature cruft that was piling up in the “options” button from the old UI. There’s also the filters option which shows a live preview grid of some filters on the image – think photo booth for iOS. My only complaint is that whereas the previous iOS camera UI had more visual cues that made it easy to confirm the camera detected proper portrait or landscape orientation, the iOS 7 camera really doesn’t. Only the thumbnail and flash/HDR/front camera icons rotate. Further, the text ring switcher doesn’t rotate, which adds some mental processing when you’re shooting in landscape (which you should, especially for video).

I explored the iOS 7 camera UI and slow motion features on the iPhone 5S a lot in our hands on video which I’d encourage you to check out.

Overall the improvements to the 5S camera system are very positive and I’m very happy to see Apple going the direction of bigger pixels rather than marching down the pitch size roadmap and trading off sensitivity. Larger pixels and bucking that trend is absolutely positively the right direction to go. If Apple went the other way I’d start getting concerned about the camera team over there. Obviously the choices made in the 5S do a lot to put me at ease and reassure me that there’s still some sanity in the smartphone imaging space. Likewise the dual color temperature LED flash system is another low light improvement, even if I still dislike on-camera flash in general and will probably never use it – every iPhone I’ve ever owned has had flash set to off rather than auto. Faster F/# is a big improvement along that same axis as well, letting in more light and possibly giving more shallow depth of field at some focus positions. High framerate video coming to the 5S was a no-brainer given the new APIs and features added to iOS 7. I just assumed it’d be 1080p60 rather than 720p120, although there’s still a chance that 1080p60 is an option through the capture API rather than the camera UI. Finally I’m very happy that Apple has sorted out the aspect ratio cropping issues in the camera UI that really frustrated me with the iPhone 5’s display ratio change. The end takeaway is better low light performance compared to the 5 at the same resolution, substantially better LED flash when natural light doesn’t get you far enough, and of course those extra added features like burst mode and 120FPS video record.

Most of my time getting hands on with the iPhone 5S in the town hall demo room was spent playing with the camera UI, but there’s only so much you can really tell given a few minutes. Either way the iPhone 5S looks to be another big improvement to Apple’s very popular camera system.

98 Comments


DERSS - Friday, September 13, 2013

Not only do smaller pixels capture less light, the sensor's blind area due to interpixel structures is also relatively much bigger. Even with backside illumination such blind areas can become very significant.

WaltFrench - Friday, September 13, 2013

There would be a slight degradation because each pixel has a fixed overhead of connection circuitry, so two half-sized pixels each aren't quite 50% of the original's area. We'd need somebody who understands the current BSI technology to comment as this may no longer be a big issue. But I think it is.

Otherwise, yes, a smart algorithm should be able to make a good tradeoff, possibly better, between better detail that you might want in a well-lit crop, versus averaging in a low-light setting.

“Should” being the operative word. It seems there's a huge variation in approaches here; some approaches may fail badly in some circumstances even if they're better in most. Besides the hardware, there's a software arms race here.

ajcarroll - Friday, September 13, 2013

There is a difference if the smaller pixels have less than half the information. Remember, different colors are at different frequencies. Red has a lower frequency than green or blue. Lower frequencies mean longer wavelengths, and a very red red has a wavelength around 700nm... so if your pixel is less than approx 1400nm, it's less than 2x the wavelength of the deepest color you're trying to detect... 1100nm is simply not going to reproduce color with the same fidelity.

joelypolly - Saturday, September 14, 2013

You forget that there are still electronics between the pixels and it's not just a gapless design. Microlenses and diffraction are all things that need to be compared.

Midwayman - Tuesday, September 17, 2013

You're talking about 4 pixels downsampled to 1 normally, but you lose area to wiring, etc. every time you subdivide an area. 4 pixels aren't the same as one larger one with 4x the area.

chaosbloodterfly - Friday, September 13, 2013

I think he means relative to other smartphone cameras, not the 4S.

Yazz_ - Monday, September 16, 2013

I had 4MP cameras nearly a decade ago that took far better pictures than a lot of the more recent 12-20+MP ones. Resolution means almost nothing for picture quality; it's mostly how good a sensor and lenses you have. Still have that old Kodak digital camera around someplace, first camera I ever saw with Bluetooth. P.S. - if you need a visual image of what I mean by quality, think of the "snow" image effect you can see in solid colors when you look up real close. Even multi-thousand dollar DSLRs have difficulty reducing those artifacts in low lighting conditions.

Sorry to turn this into a rant, but the current resolution is pretty high, and we're constantly syncing backups to the cloud. Unless you notice a tiny insect by chance after you've taken a photo and you want to zoom in and see if there's a visible smile on its face, you don't really have much to gain from increasing the resolution beyond what the 4S already has.

Krysto - Friday, September 13, 2013

So far I've noticed some quite large differences between 8MP and 13MP cameras in terms of detail. I think between 8MP and 20MP (Xperia Z1), 13MP is probably the sweet spot, with a lot more detail than 8MP and without being much slower (20MP+).

Also Apple's take on picking the best picture from 10 others is very stupid. It will drain battery faster than it should if it takes 10 pictures all the time. Using OIS to take the steadier, better picture would've been much better, along with the other low-light advantages that OIS has.

akdj - Friday, September 13, 2013

You don't have to shoot in burst mode. You still have a single shot option. It's definitely not 'stupid' (the burst mode; it's actually extremely intelligent). In fact... it's extremely ignorant for you to consider this type of engineering 'stupid' in any sort of discussion. It's by far one of the hardest and most challenging types of engineering considering the spatial limitations inside of a 'phone'.

Impulses - Friday, September 13, 2013

Shooting a quick burst and picking the best one is pretty logical, other phones have been doing it for quite a while (HTC's been doing it since last gen) and even pros with real cameras will fire quick bursts during a shoot to then pick the best one... How's it a battery drain if you can turn it off and the stream is probably going through the processor at all times anyway? Now, automatically combining them in a pseudo always-on HDR mode would be a different story, and that would probably take a bit of a battery penalty as it's processed.