The Google Nexus 9 Review

by Joshua Ho & Ryan Smith on February 4, 2015 8:00 AM EST

Posted in: Tablets, HTC, Project Denver, Android, Mobile, NVIDIA, Nexus 9, Lollipop, Android 5.0

The Secret of Denver: Binary Translation & Code Optimization

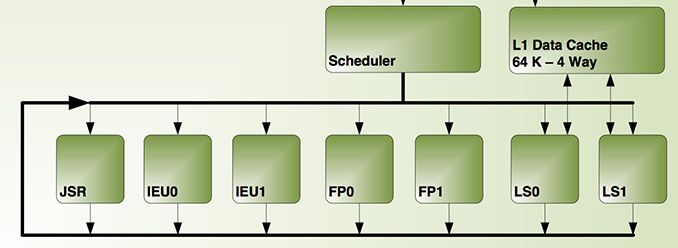

As we alluded to earlier, NVIDIA’s decision to forgo a traditional out-of-order design for Denver means that much of Denver’s potential is contained in its software rather than its hardware. The underlying chip itself, though by no means simple, is at its core a very large in-order processor. So it falls to the software stack to make Denver sing.

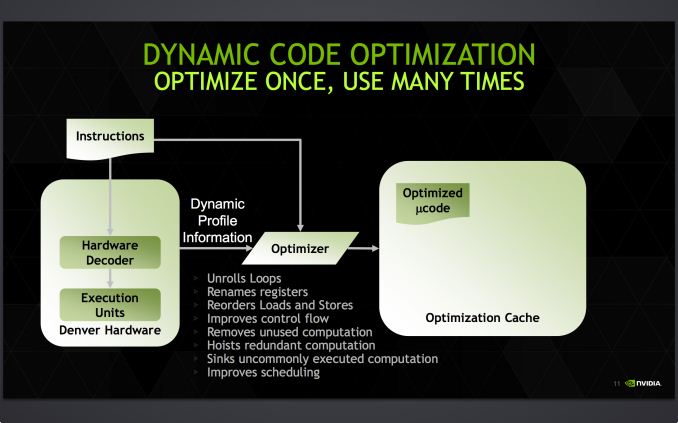

Accomplishing this task is NVIDIA’s dynamic code optimizer (DCO). The purpose of the DCO is to accomplish two tasks: to translate ARM code to Denver’s native format, and to optimize this code to make it run better on Denver. With no out-of-order hardware on Denver, it is the DCO’s task to find instruction level parallelism within a thread to fill Denver’s many execution units, and to reorder instructions around potential stalls, something that is no simple task.

Starting first with the binary translation aspects of DCO, the binary translator is not used for all code. All code goes through the ARM decoder units at least once, and only after Denver realizes it has run the same code segments enough times does that code get kicked to the translator. Running code translation and optimization is itself a software task, and as a result this task requires a certain amount of real time, CPU time, and power. This means that it only makes sense to send code out for translation and optimization if it’s recurring, even if taking the ARM decoder path fails to exploit much in the way of Denver’s capabilities.

This sets up some very clear best and worst case scenarios for Denver. In the best case scenario Denver is entirely running code that has already been through the DCO, meaning it’s being fed the best code possible and isn’t having to run suboptimal code from the ARM decoder or spending resources invoking the optimizer. On the other hand then, the worst case scenario for Denver is whenever code doesn’t recur. Non-recurring code means that the optimizer is never getting used because that code is never seen again, and invoking the DCO would be pointless as the benefits of optimizing the code are outweighed by the costs of that optimization.
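To make the recurrence threshold concrete, here is a minimal Python sketch of the idea: count how often a code segment runs and switch it to the optimized path only once it has proven hot. This is a toy model and not NVIDIA's implementation; the class name, the `HOT_THRESHOLD` value, and the segment identifiers are all hypothetical.

```python
from collections import Counter

HOT_THRESHOLD = 3  # hypothetical value; the real trigger point is not given in the article


class ToyDispatcher:
    """Toy model: run code via the 'decoder' path until it proves to be hot."""

    def __init__(self, threshold=HOT_THRESHOLD):
        self.threshold = threshold
        self.exec_counts = Counter()  # how often each code segment has run
        self.optimized = set()        # segments already translated and optimized

    def run(self, segment_id):
        """Return which path the segment takes on this execution."""
        if segment_id in self.optimized:
            return "optimized"
        self.exec_counts[segment_id] += 1
        if self.exec_counts[segment_id] >= self.threshold:
            # The segment has recurred often enough to justify the cost of
            # translation, so later runs use the optimized version.
            self.optimized.add(segment_id)
        return "decoder"


dispatcher = ToyDispatcher()
paths = [dispatcher.run("loop_body") for _ in range(5)]
print(paths)
```

In this model a loop body that keeps recurring ends up on the optimized path, while a segment that runs only once never leaves the decoder path and never pays the cost of optimization, which mirrors the best and worst cases described above.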

Assuming that a code segment recurs enough to justify translation, it is then kicked over to the DCO to receive translation and optimization. Because this itself is a software process, the DCO is a critical component due to both the code it generates and the code it itself is built from. The DCO needs to be highly tuned so that Denver isn’t spending more resources than it needs to in order to run the DCO, and it needs to produce highly optimal code for Denver to ensure the chip achieves maximum performance. This becomes a very interesting balancing act for NVIDIA, as a longer examination of code segments could potentially produce even better code, but it would increase the costs of running the DCO.

In the optimization step NVIDIA undertakes a number of actions to improve code performance. This includes out-of-order optimizations such as instruction and load/store reordering, along with register renaming. However the DCO also behaves as a traditional compiler would, undertaking actions such as unrolling loops and eliminating redundant/dead code that never gets executed. For NVIDIA this optimization step is the most critical aspect of Denver, as its performance will live and die by the DCO.
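As an illustration of the compiler-style side of this work, the following Python sketch performs a simple form of dead code elimination on a toy instruction list: it removes instructions whose results are never read. It is only a sketch under stated assumptions. The tuple format and register names are invented for the example, instructions are assumed to have no side effects beyond writing their destination register, and nothing here reflects how the DCO is actually written.

```python
def eliminate_dead_code(instructions, live_out):
    """Drop instructions whose result is never used.

    instructions: list of (dest, op, sources) tuples in program order.
    live_out: register names that must still hold valid values afterwards.
    Assumes instructions have no side effects other than writing dest.
    """
    live = set(live_out)
    kept = []
    # Walk backwards so each instruction sees which registers are needed later.
    for dest, op, sources in reversed(instructions):
        if dest in live:
            live.discard(dest)    # this write satisfies the later use
            live.update(sources)  # its inputs are now needed
            kept.append((dest, op, sources))
        # Otherwise nothing later reads dest, so the instruction is dead.
    kept.reverse()
    return kept


program = [
    ("r1", "add", ("r0", "r0")),
    ("r2", "mul", ("r1", "r1")),  # r2 is never read afterwards: dead
    ("r3", "add", ("r1", "r0")),
]
optimized = eliminate_dead_code(program, live_out={"r3"})
print(optimized)
```

A real optimizer would also have to account for memory stores, exceptions, and branches, which is part of why tuning the cost of the optimization pass against the quality of its output is such a balancing act.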

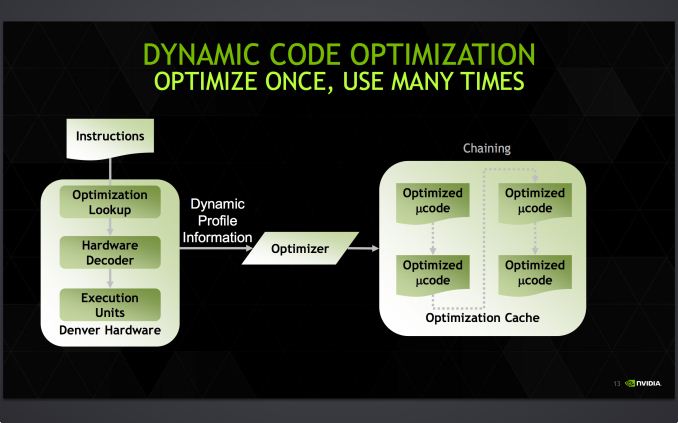

Denver's optimization cache: optimized code can call other optimized code for even better performance

Once code leaves the DCO, it is then stored for future use in an area NVIDIA calls the optimization cache. The cache is a 128MB segment of main memory reserved to hold these translated and optimized code segments for future reuse, with Denver banking on its ability to reuse code to achieve its peak performance. The presence of the optimization cache does mean that Denver suffers a slight memory capacity penalty compared to other SoCs, which in the case of the N9 means that 1/16th (6%) of the N9’s memory is reserved for the cache. Meanwhile, also resident here is the DCO code itself, which is shipped and stored as already-optimized code so that it can achieve its full performance right off the bat.
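The capacity penalty is easy to check with a few lines of Python. The 128MB cache size comes from the text above; the 2GB total is an assumption implied by the 1/16th figure.

```python
CACHE_MB = 128            # optimization cache size stated in the article
TOTAL_RAM_MB = 2 * 1024   # assumed: 2GB total, implied by the 1/16th figure

fraction = CACHE_MB / TOTAL_RAM_MB
remaining_mb = TOTAL_RAM_MB - CACHE_MB
print(f"{fraction:.4f} of memory ({fraction:.2%}), leaving {remaining_mb}MB")
```

Under that assumption the cache takes exactly 6.25% of memory, which the article rounds to 6%.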

Overall the DCO ends up being interesting for a number of reasons, not the least of which are the tradeoffs made by its inclusion. The DCO instruction window is larger than any comparable OoOE engine, meaning NVIDIA can look at larger code blocks than hardware OoOE reorder engines and potentially extract even better ILP and other optimizations from the code. On the other hand the DCO can only work on code in advance, denying it the ability to see and work on code in real-time as it’s executing like a hardware out-of-order implementation. In such cases, even with a smaller window to work with, a hardware OoOE implementation could produce better results, particularly in avoiding memory stalls.

As Denver lives and dies by its optimizer, it puts NVIDIA in an interesting position once again owing to their GPU heritage. Much of the above is true for GPUs as well as it is for Denver, and while it’s by no means a perfect overlap it does mean that NVIDIA comes into this with a great deal of experience in optimizing code for an in-order processor. NVIDIA faces a major uphill battle here – hardware OoOE has proven itself reliable time and time again, especially compared to projects banking on superior compilers – so having that compiler background is incredibly important for NVIDIA.

In the meantime, because NVIDIA relies on a software optimizer, Denver’s code optimization routine itself has one last advantage over hardware: upgradability. NVIDIA retains the ability to upgrade the DCO itself, potentially deploying new versions of the DCO farther down the line if improvements are made. In principle a DCO upgrade is not a feature you want to find yourself needing to use – ideally Denver’s optimizer would be perfect from the start – but it’s nonetheless a good feature to have for the imperfect real world.

Case in point, we have encountered a floating point bug in Denver that has been traced back to the DCO, which under exceptional workloads causes Denver to overflow an internal register and trigger an SoC reset. Though this bug doesn’t lead to reliability problems in real world usage, it’s exactly the kind of issue that makes DCO updates valuable for NVIDIA as it gives them an opportunity to fix the bug. However at the same time NVIDIA has yet to take advantage of this opportunity, and as of the latest version of Android for the Nexus 9 it seems that this issue still occurs. So it remains to be seen if BSP updates will include DCO updates to improve performance and remove such bugs.

169 Comments


rpmrush - Wednesday, February 4, 2015 - link

I find the 4:3 aspect ratio a turn off. Why change now? There are zero apps natively designed for this in the Android ecosystem. Why would a developer make a change for one device? It just seems like more fragmentation for no reason. I'm picking up a Shield Tab soon.

kenansadhu - Wednesday, February 4, 2015 - link

One example to drive my point: I bought Kingdom Rush and found out that on my widescreen tablet, the game won't fit the screen properly. If anything, this will fit apps previously designed for iPads well. Hate to admit it, but Apple has such a huge lead in the tablet market it's just reasonable for developers to focus on them first.

melgross - Wednesday, February 4, 2015 - link

Well, there are almost no tablet apps at all for Android. One reason is because of the aspect ratio being the same for phones and tablets. Why bother writing a tablet app when the phone app can stretch to fit the screen exactly? Yes, they're a waste of time, but hey, it doesn't cost developers anything either.

Maybe going to the much more useful 4:3 ratio for tablets will force new, real tablet apps.

It's one reason why there are so many real iPad apps out there.

retrospooty - Wednesday, February 4, 2015 - link

You sound like you are stuck in 2012. Update your arguments ...

UtilityMax - Sunday, February 8, 2015 - link

There will be more tablets coming with 4:3 screen. Samsung's next flagship tablet will be 4:3. As much as I like watching movies on a wide screen, I think it's not the killer tablet application for most users, and most people will benefit from having a more balanced 4:3 screen. It works better for web browsing, ebooks, and productivity apps.

Impulses - Wednesday, February 4, 2015 - link

Most simpler apps just scale fine one way or the other... I think 4:3 makes a ton of sense for larger tablets, it remains almost exactly as tall in landscape mode (which a lot of people seem to favor, and I find bizarre) and more manageable in portrait since it's shorter.

7-8" & 16:9 is still my personal preference, since I mostly use it for reading in portrait. Try to think outside of your personal bubble tho... I bought the Nexus 9 for my mother who prefers a larger tablet, never watches movies on it, yet almost always uses it in landscape.

It'll be perfect for her, shoot, it even matches the aspect ratio of her mirrorless camera so photos can be viewed full screen, bonus.

UtilityMax - Sunday, February 8, 2015 - link

I personally think about the reverse. Big tablets with 9-11 inch screens are often bought for media consumption. Because of that, it makes sense for them to come with a wide screen. For me, having wide screen for watching movies on the flights and in the gym was one of the prime reasons to buy a Samsung Galaxy Tab S 10.5, even though its benchmarks look only so so.

However, a 9 to 11 inch tablet is too bulky to hold in one hand and type with another. It almost begs for a stand. So for casual use, like casual web browsing or ebook reading, a smaller tablet with a 4:3 screen works better. And so I went ahead and got a tablet with 4:3 screen for that purpose.

Impulses - Monday, February 9, 2015 - link

Valid points, obviously usage cases can differ a lot, that's the nice thing about Android tho... It doesn't have to conform to any one aspect ratio that won't fit everyone's taste.

LordConrad - Wednesday, February 4, 2015 - link

I love the 4:3 aspect ratio. I primarily use tablets in Portrait Mode, and have always disliked the "tall and thin" Portrait Mode of traditional Android tablets. This is the main area where Google has always fallen behind Apple, IMHO. This is the main reason I gave my Nexus 7 (2013) to my nephew and bought a Nexus 9, and I have no regrets.

R. Hunt - Thursday, February 5, 2015 - link

Agreed. I understand that YMMV and all that, but to me, large widescreen tablets are simply unusable in portrait. I'd love to have the choice of a 3:2 Android tablet though.