The AMD Radeon R9 Fury Review, Feat. Sapphire & ASUS

by Ryan Smith on July 10, 2015 9:00 AM EST

Compute

Shifting gears, we have our look at compute performance. As compute performance will be more significantly impacted by the reduction in CUs than most other tests, we’re expecting the performance hit for the R9 Fury relative to the R9 Fury X to be more significant here than under our gaming tests.

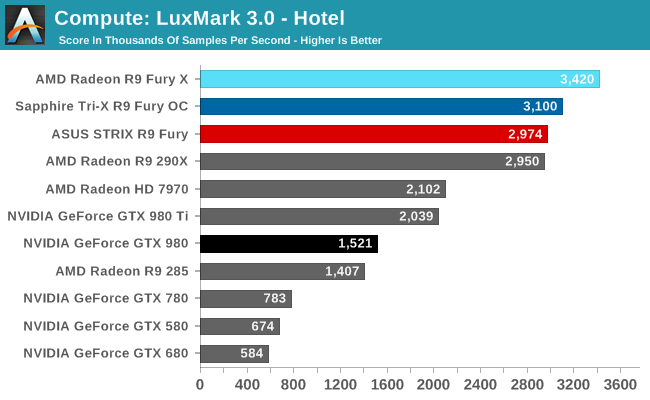

Starting us off for our look at compute is LuxMark 3.0, the latest version of the official benchmark of LuxRender 2.0. LuxRender’s GPU-accelerated rendering mode is an OpenCL-based ray tracer that forms a part of the larger LuxRender suite. Ray tracing has become a stronghold for GPUs in recent years as ray tracing maps well to GPU pipelines, allowing artists to render scenes much more quickly than with CPUs alone.

For LuxMark, with the R9 Fury X already holding the top spot, the R9 Fury cards easily take the next two spots. One interesting artifact of this is that the R9 Fury’s advantage over the GTX 980 is actually greater than the R9 Fury X’s advantage over the GTX 980 Ti, both on an absolute and relative basis. This is despite the fact that the R9 Fury is some 13% slower than its fully enabled sibling.
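To make the absolute versus relative distinction concrete, here is a minimal sketch of how the two leads are computed. The `lead` helper and the scores fed into it are hypothetical placeholders chosen only so that the second card is 13% slower than the first; they are not the measured LuxMark results from the chart above.

```python
def lead(card_score, rival_score):
    """Return (absolute, relative) lead of a card over its rival.

    Assumes a higher-is-better score, as with LuxMark.
    """
    absolute = card_score - rival_score
    relative = absolute / rival_score
    return absolute, relative


# Hypothetical placeholder scores, NOT measured results.
full_card, full_rival = 100.0, 80.0   # stand-ins for a top card and its rival
cut_card, cut_rival = 87.0, 55.0      # cut-down card is 13% slower than full_card

full_abs, full_rel = lead(full_card, full_rival)
cut_abs, cut_rel = lead(cut_card, cut_rival)

print(f"Full card lead:     {full_abs:.0f} points ({full_rel:.0%})")
print(f"Cut-down card lead: {cut_abs:.0f} points ({cut_rel:.0%})")
```

With these placeholder inputs the slower card still posts the larger lead on both bases, simply because its rival sits further behind. That is the shape of the result described above, not a reproduction of it.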

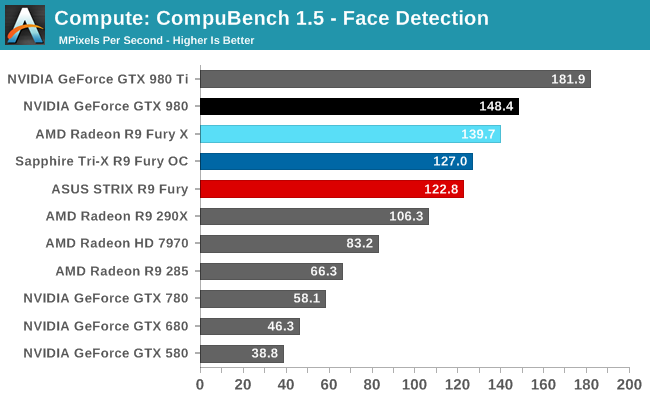

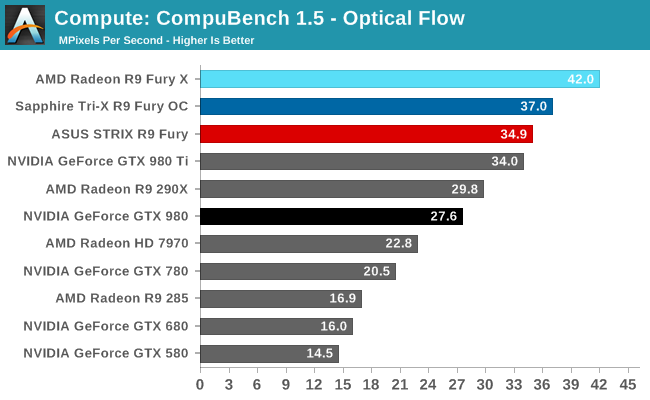

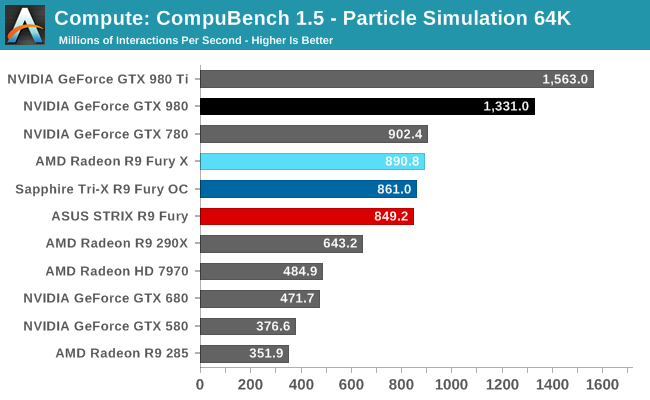

For our second set of compute benchmarks we have CompuBench 1.5, the successor to CLBenchmark. CompuBench offers a wide array of different practical compute workloads, and we’ve decided to focus on face detection, optical flow modeling, and particle simulations.

Not unlike LuxMark, tests where the R9 Fury X did well have the R9 Fury doing well too, particularly the optical flow sub-benchmark. The drop-off in that benchmark and face detection is about what we’d expect for losing 1/8th of Fiji’s CUs. On the other hand the particle simulation benchmark is hardly fazed beyond the clockspeed drop, indicating that the bottleneck lies elsewhere.
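As a rough sanity check on those expectations, the theoretical gap between the two cards can be worked out from their shader configurations. This is only a sketch: it assumes the reference specifications (64 CUs at 1050MHz for the R9 Fury X, 56 CUs at 1000MHz for the R9 Fury), which are not restated in this section, and it assumes throughput scales linearly with CU count times clockspeed, which real workloads rarely do perfectly.

```python
# Assumed reference specifications (not stated in this section).
FURY_X = {"cus": 64, "clock_mhz": 1050}
FURY = {"cus": 56, "clock_mhz": 1000}


def relative_throughput(card, reference):
    """Theoretical shader throughput of `card` relative to `reference`.

    Assumes throughput scales linearly with CU count times clockspeed.
    """
    return (card["cus"] * card["clock_mhz"]) / (
        reference["cus"] * reference["clock_mhz"]
    )


cu_only = FURY["cus"] / FURY_X["cus"]                   # effect of losing 1/8th of the CUs
clock_only = FURY["clock_mhz"] / FURY_X["clock_mhz"]    # effect of the clockspeed drop
combined = relative_throughput(FURY, FURY_X)            # both effects together

print(f"CU reduction alone: {1 - cu_only:.1%} slower")
print(f"Clockspeed alone:   {1 - clock_only:.1%} slower")
print(f"Combined:           {1 - combined:.1%} slower")
```

Under these assumptions a fully shader-bound test would lose roughly a sixth of its performance, while a test that only tracks clockspeed would lose around 5%, which is the pattern the particle simulation result points to.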

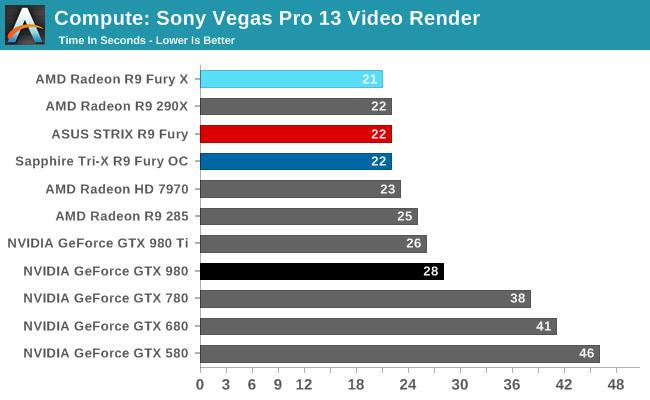

Our 3rd compute benchmark is Sony Vegas Pro 13, an OpenGL and OpenCL video editing and authoring package. Vegas can use GPUs in a few different ways, the primary uses being to accelerate the video effects and compositing process itself, and in the video encoding step. With video encoding being increasingly offloaded to dedicated DSPs these days we’re focusing on the editing and compositing process, rendering to a low CPU overhead format (XDCAM EX). This specific test comes from Sony, and measures how long it takes to render a video.

At this point Vegas is becoming increasingly CPU-bound and will be due for replacement. The R9 Fury comes in one second behind the chart-topping R9 Fury X, at 22 seconds.

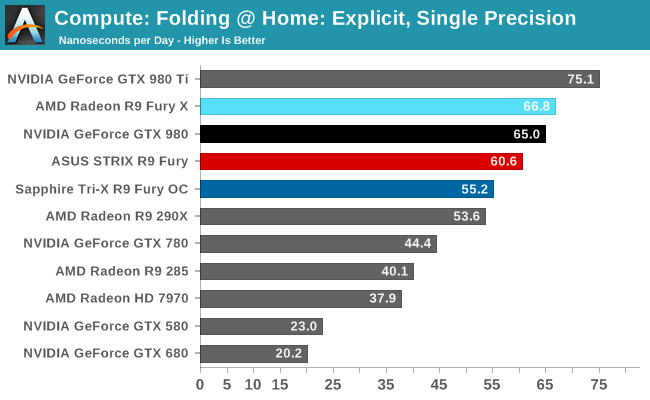

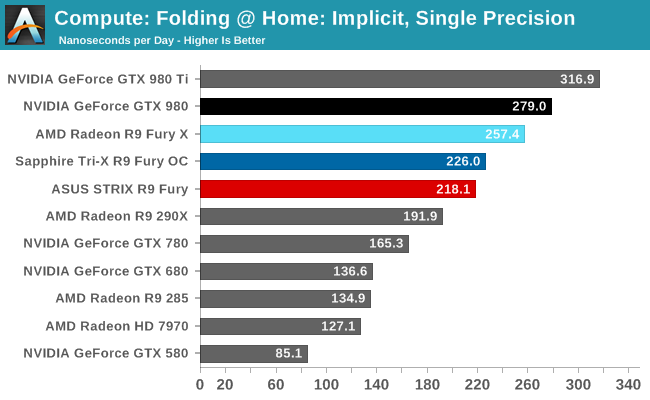

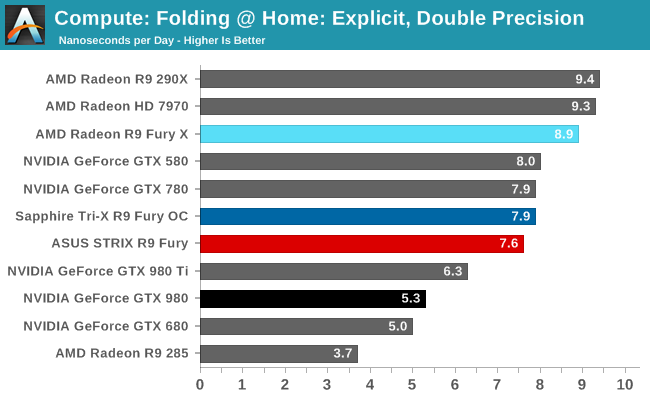

Moving on, our 4th compute benchmark is FAHBench, the official Folding @ Home benchmark. Folding @ Home is the popular Stanford-backed research and distributed computing initiative that has work distributed to millions of volunteer computers over the internet, each of which is responsible for a tiny slice of a protein folding simulation. FAHBench can test both single precision and double precision floating point performance, with single precision being the most useful metric for most consumer cards due to their low double precision performance. Each precision has two modes, explicit and implicit, the difference being whether water atoms are included in the simulation, which adds quite a bit of work and overhead. This is another OpenCL test, utilizing the OpenCL path for FAHCore 17.

Overall, while the R9 Fury doesn’t have to aim quite as high given its weaker GTX 980 competition, FAHBench still stresses the Radeon cards. Under the single precision tests the GTX 980 pulls ahead; the R9 Fury only surpasses it under double precision, thanks to NVIDIA’s weaker FP64 performance.

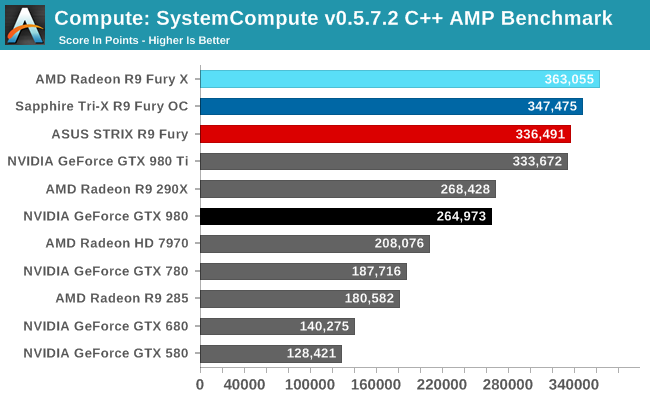

Wrapping things up, our final compute benchmark is an in-house project developed by our very own Dr. Ian Cutress. SystemCompute is our first C++ AMP benchmark, utilizing Microsoft’s simple C++ extensions to allow the easy use of GPU computing in C++ programs. SystemCompute in turn is a collection of benchmarks for several different fundamental compute algorithms, with the final score represented in points. DirectCompute is the compute backend for C++ AMP on Windows, so this forms our other DirectCompute test.

As with our other tests the R9 Fury loses some performance on our C++ AMP benchmark relative to the R9 Fury X, but only around 8%. As a result it’s competitive with the GTX 980 Ti here, blowing well past the GTX 980.

288 Comments


Midwayman - Friday, July 10, 2015 - link

I'd love to see these two go at it again once dx12 games start showing up.

Mugur - Saturday, July 11, 2015 - link

Bingo... :-). I bet the whole Fury lineup will gain a lot with DX12, especially the X2 part (4 + 4 GB won't equal 4 as in current CF). They are clearly CPU limited at this point.

squngy - Saturday, July 11, 2015 - link

I don't know...Getting dx12 performance at the cost of dx11 performance sounds like a stupid idea this soon before dx12 games even come out.

By the time a good amount of dx12 games come out there will probably be new graphics cards available.

thomascheng - Saturday, July 11, 2015 - link

They will probably circle around and optimize things for 1080p and dx11, once dx12 and 4k is at a good place.

akamateau - Tuesday, July 14, 2015 - link

DX12 games are out now. DX12 does not degrade DX11 performance. In fact Radeon 290x is 33% faster than 980 Ti in DX12. Fury X just CRUSHES ALL nVIDIA silicon with DX12 and there is a reason for it.

Dx11 can ONLY feed data to the GPU serially and sequentially. Dx12 can feed data Asynchronously, the CPU sends the data down the shader pipeline WHEN it is processed. Only AMD has this IP.

@DoUL - Sunday, July 19, 2015 - link

Kindly provide link to a single DX12 game that is "out now".

In every single review of the GTX 980 Ti there is this slide of DX12 feature set that the GTX 980 Ti supports and in that slide in all the reviews "Async Compute" is right there setting in the open, so I'm not really sure what do you mean by "Only AMD has this IP"!

I'd strongly recommend that you hold your horses till DX12 games starts to roll out, and even then, don't forget the rocky start of DX11 titles!

Regarding the comparison you're referring to, that guy is known for his obsession with mathematical calculations and synthetic benchmarking, given the differences between real-world applications and numbers based on mathematical calculations, you shouldn't be using/taking his numbers as a factual baseline for what to come.

@DoUL - Sunday, July 19, 2015 - link

My Comment was intended as a reply to @akanateau

OldSchoolKiller1977 - Sunday, July 26, 2015 - link

You are an idiotic person, wishful think and dreams don't make you correct. As stated please provide a link to these so called DX12 games and your wonderful "Fury X just CRUCHES ALL NVidia" statement.

Michael Bay - Sunday, July 12, 2015 - link

As long as there is separate RAM in PCs, memory argument is moot, as contents are still copied and executed on in two places.

akamateau - Tuesday, July 14, 2015 - link

Negative. Once Graphic data is processed and sent to the shaders it next goes to VRAM or video ram. System ram is what the CPU uses to process object draws. Once the objects are in the GPU pipes system ram is irrelevant.

In fact that is one of AMD's stacked memory patents. AMD will be putting HBM on APU's to not only act as CPU cache but HBM video ram as well. They have patents for programmable HBM using FPGA's and reconfigurable cache memory HBM as well.

Stacked memory HBM can also be on the cpu package as a replacement for system ram. Can you imagine how your system would fly with 8-16gb of HBM instead of system ram?