Intel's Pentium 4 E: Prescott Arrives with Luggage

by Anand Lal Shimpi & Derek Wilson on February 1, 2004 3:06 PM EST. Posted in: CPUs

31 Stages: What's this, Baskin Robbins?

Flip back a couple of years and remember the introduction of the Pentium 4 at 1.4 and 1.5GHz. Intel went from a 10-stage pipeline of the Pentium III to a 20-stage pipeline, an increase of 100%. Initially the Pentium 4 at 1.5GHz had a hard time even outperforming the Pentium III at 1GHz, and in some cases was significantly slower.

Fast forward to today and you wouldn't think twice about picking a Pentium 4 2.4C over a Pentium III 1GHz, but back then the decision was not so clear. Does this sound a lot like our CPU design example from before?

The 0.13-micron Northwood Pentium 4 core looked to have a frequency ceiling of around 3.6 - 3.8GHz without going beyond comfortable yield levels. A 90nm shrink, which is what we thought Prescott was originally going to be, would reduce power consumption and allow for even higher clock speeds - but apparently not high enough for Intel's desires.

Intel took the task of a 90nm shrink and complicated it tremendously by making significant microarchitectural changes to Prescott - extending the basic integer pipeline to 31 stages. The full pipeline (for an integer instruction; FP instructions go through even more stages) is longer still, as that number does not include all of the initial decoding stages. Intel informed us that we should not assume that the initial decoding stages of Prescott (before the first of the 31 stages) are identical to Northwood's; the changes to the pipeline have been extensive.

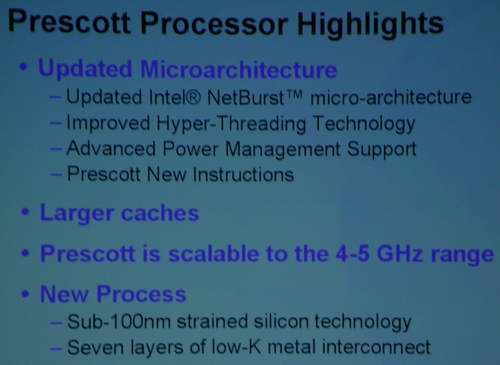

The purpose of significantly lengthening the pipeline: to increase clock speed. A year ago at IDF Intel announced that Prescott would be scalable to the 4 - 5GHz range; apparently this massive lengthening of the pipeline was necessary to meet those targets.

Lengthening the pipeline does bring about significant challenges for Intel, because if all they did was lengthen the pipeline then Prescott would be significantly slower than Northwood on a clock for clock basis. Remember that it wasn't until Intel ramped the clock speed of the Pentium 4 up beyond 2.4GHz that it was finally a viable competitor to the shorter pipelined Athlon XP. This time around, Intel doesn't have the luxury of introducing a CPU that is outperformed by its predecessor - the Pentium 4 name would be tarnished once more if a 3.4GHz Prescott couldn't even outperform a 2.4GHz Northwood.
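To put rough numbers on that clock-for-clock penalty, here is a minimal sketch of a toy model in Python. It assumes performance is simply clock speed divided by cycles per instruction, and that every mispredicted branch costs a full pipeline refill. The workload figures (`base_cpi`, `branch_fraction`, `mispredict_rate`) are made-up illustrative values, not measurements of Northwood or Prescott, and the model ignores caches, Hyper-Threading and everything else Intel changed.

```python
# Toy model only: every workload number here is an illustrative assumption.

def relative_performance(clock_ghz, base_cpi, branch_fraction,
                         mispredict_rate, pipeline_stages):
    """Instructions per nanosecond for a very simple pipeline model.

    A mispredicted branch is assumed to cost one full pipeline refill,
    i.e. `pipeline_stages` cycles.
    """
    penalty_cpi = branch_fraction * mispredict_rate * pipeline_stages
    return clock_ghz / (base_cpi + penalty_cpi)


def breakeven_clock(clock_ghz, short_stages, long_stages, **workload):
    """Clock the longer pipeline needs in order to match the shorter one."""
    target = relative_performance(clock_ghz, pipeline_stages=short_stages,
                                  **workload)
    per_ghz = relative_performance(1.0, pipeline_stages=long_stages,
                                   **workload)
    return target / per_ghz


# Hypothetical workload: 20% branches, 5% of them mispredicted.
workload = dict(base_cpi=1.0, branch_fraction=0.2, mispredict_rate=0.05)

perf_20 = relative_performance(3.2, pipeline_stages=20, **workload)
perf_31 = relative_performance(3.2, pipeline_stages=31, **workload)
needed = breakeven_clock(3.2, 20, 31, **workload)

print(f"20 stages at 3.2GHz: {perf_20:.2f} instructions/ns")
print(f"31 stages at 3.2GHz: {perf_31:.2f} instructions/ns")
print(f"31 stages break even at about {needed:.2f}GHz")
```

With these assumed inputs the 31-stage design needs a clock roughly 9% higher just to break even, which is the kind of gap architectural enhancements have to close.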

The next several pages will go through some of the architectural enhancements that Intel had to make in order to bring Prescott's performance up to par with Northwood at its introductory clock speed of 3.2GHz. Without these enhancements that we're about to talk about, Prescott would have spelled the end of the Pentium 4 for good.

One quick note about Intel's decision to extend the Pentium 4 pipeline - it isn't an easy thing to do. We're not saying it's the best decision, but obviously Intel's engineers felt it was. Unlike GPUs, which are generally designed in Hardware Description Languages (HDLs) from pre-designed logic gates and cells, CPUs like the Pentium 4 and Athlon 64 are largely designed by hand. This sort of hand-tuned design is why a Pentium 4, with far fewer pipeline stages, can run at multiple GHz while a Radeon 9800 Pro is limited to a few hundred MHz. It would be impossible to put the amount of design effort that making a CPU takes into a GPU and still meet 6 month cycles.

What is the point of all of this? Despite the conspiracy theorist view on the topic, a 31-stage Prescott pipeline was a calculated move by Intel and not a last-minute resort. Whatever their underlying motives for the move, Prescott's design would have had to have been decided on at least 1 - 2 years ago in order to launch today (realistically around 3 years if you're talking about not rushing the design/testing/manufacturing process). The idea of "adding a few more stages" to the Pentium 4 pipeline at the last minute is not possible, simply because it isn't the number of stages that will allow you to reach a higher clock speed - but the fine hand tuning that must go into making sure that your slowest stage is as fast as possible. It's a long and drawn out process and both AMD and Intel are quite good at it, but it still takes a significant amount of time. Designing a CPU is much, much different than designing a GPU. This isn't to say that Intel made the right decision back then, it's just to say that Prescott wasn't a panicked move - it was a calculated one.
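The point about the slowest stage can be sketched in a few lines of Python. This is a simplified model we are assuming for illustration: the cycle time is the delay of the slowest stage plus a fixed latch overhead, and all of the picosecond figures are invented, not Intel's.

```python
def max_clock_ghz(stage_delays_ps, latch_overhead_ps=50):
    """Highest clock a pipeline can run at, limited by its slowest stage."""
    cycle_ps = max(stage_delays_ps) + latch_overhead_ps
    return 1000.0 / cycle_ps  # a 1000 ps cycle corresponds to 1 GHz


# The same 1200 ps of logic arranged three ways (hypothetical delays).
original = [300, 300, 300, 300]            # 4 balanced stages
naive_split = [150, 150, 300, 300, 300]    # one stage cut in two, rest untouched
hand_tuned = [240, 240, 240, 240, 240]     # 5 stages, logic rebalanced

for name, stages in [("original", original),
                     ("naive split", naive_split),
                     ("hand tuned", hand_tuned)]:
    print(f"{name}: {len(stages)} stages, {max_clock_ghz(stages):.2f}GHz")
```

In this sketch the naive split adds a stage without raising the clock at all, because the slowest stage is unchanged; only rebalancing the logic across all five stages buys any frequency.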

We'll let the benchmarks and future scalability decide whether it was a good move, but for now let's look at the mammoth task Intel brought upon themselves: making an already long pipeline even longer, and keeping it full.

104 Comments


Jeff7181 - Thursday, March 11, 2004 - link

#98 Yes, increasing the drive current means increasing the current that's flowing through the transistors, which does explain the heat increase.

watts = current x voltage

If we do the math, we can figure out how many amps the current Prescott runs on...

The 3.2 and 3.4 Ghz models have a spec of 103 watts, and the voltage is 1.385, so divide 103 by 1.385 and you get about 74.3 amps.

The 3.4 Ghz Northwood has a spec of 89 watts, and the voltage is 1.550, that's 57.4 amps.

That's a 30% increase in current, with only about an 11% reduction in voltage. There's your extra heat. 103 watts vs. 89 watts... about a 16% increase in heat. We can take this a little further and say...

The Prescott at 3.4 Ghz produces 103 watts of heat, max. The Prescott at 3.0 Ghz produces 89 watts of heat, max. That means a 3.4 GHz Prescott runs on 74.3 amps, and the 3.0 GHz Prescott runs on 64.3 amps. So increasing the speed by 400 Mhz requires 10 more amps.

So a 3.6 GHz Prescott would run on 79.3 amps, which would create 109.8 watts...

and a 3.8 GHz Prescott would run on 84.3 amps, which would create 116.8 watts...

and a 4.0 GHz Prescott would run on 89.3 amps, which would create 123.7 watts...

and a 5.0 GHz Prescott would run on 114.3 amps, which would create 158.3 watts.
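The extrapolation can be reproduced with a short Python sketch. It takes the comment's own rounded figures as given (74.3 amps at 3.4 GHz, 10 more amps per 400 MHz, 1.385 volts) and assumes, as the comment does, that current keeps rising linearly with clock speed at a fixed voltage. The function name `projected` is just a label chosen for this example.

```python
VCORE = 1.385             # volts, Prescott figure quoted in the comment
BASE_CLOCK_MHZ = 3400
BASE_AMPS = 74.3          # the comment's rounded 103 W / 1.385 V
AMPS_PER_400_MHZ = 10.0   # the comment's rounded slope (assumed linear)


def projected(clock_mhz):
    """Return (amps, watts) at a given clock under the linear assumption."""
    steps = (clock_mhz - BASE_CLOCK_MHZ) / 400
    amps = BASE_AMPS + steps * AMPS_PER_400_MHZ
    return amps, amps * VCORE  # watts = current x voltage


for clock in (3600, 3800, 4000, 5000):
    amps, watts = projected(clock)
    print(f"{clock / 1000:.1f} GHz: {amps:.1f} A, {watts:.1f} W")
```

Applied strictly, the rule gives 89.3 amps at 4.0 GHz and 114.3 amps (about 158 watts) at 5.0 GHz.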

This is of course assuming they don't make core changes that require less current, and that they don't make core changes that require less voltage. It will be VERY interesting to see how they deal with this increased thermal output... considering it looks like the 2.8 Ghz Prescotts are maxing out at 50 degrees C with the retail heatsinks... and the thermal output of a 5 Ghz Prescott is about twice that, so, with the same heatsink as the 2.8... a 5 Ghz Prescott should run at about 100 degrees C, lol. 5 Ghz is a ways away though, 4 is much closer, but still, that's about a 75% increase in heat over the 2.8... so you're gonna be looking at full load temps around 80 degrees C unless Intel pulls something out of their hat.

On a side note...

Strained Silicon is supposed to reduce current leakage, and it does. But what I think Intel maybe didn't foresee is the 30% increase in current… or maybe they thought they could run on 1.0 – 1.2 volts.

See, voltage is electrical pressure, current is electrical volume. If you increase the volume of electricity moving through, but decrease the pressure, not as much current will leak. Think of it like a water hose. If you need a certain amount of water in a certain amount of time, you can increase the water pressure, and it will move faster so you'll have more water, but you might spring a leak in the hose... or you can just get a bigger hose and use less water pressure, which is basically what Intel did with Strained Silicon.

AMD’s approach with using SOI has been, dare I say, more successful. When you look at the specifications, the 3400+, 2.2 GHz has a maximum of 89 watts at 1.5 volts. When the PowerNow feature is used, it drops down to 2.0 GHz, and 1.4 volts, the wattage drops down to a cool 69 watts. When it drops down again to 1.8 GHz and 1.3 volts, the wattage drops to 50 watts. And finally when it drops down to 1.0 GHz and 1.1 volts, the wattage is a frigid 22 watts. Normally you would think that means for every 200 MHz increase, your wattage increases by 10 watts. However… the FX-53 runs at 2.4 GHz and its maximum wattage is also 89 watts. So it seems as though AMD may be estimating very high with these early processors if a 2.4 GHz chip has the same maximum heat dissipation as a 2.2 GHz chip. The only explanation I can come up with is that as they get more experience at manufacturing these chips, current leakage just gets better and better. We can only hope to see the same from Intel with the Prescott as they refine their Strained Silicon and 90nm process.
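One way to sanity-check those PowerNow figures is the usual rule of thumb that CMOS switching power scales with frequency times voltage squared. That rule is an assumption brought in here, not something the comment states, and it ignores leakage entirely. A minimal Python sketch, scaling from the quoted 89 watts at 2.2 GHz and 1.5 volts:

```python
# Baseline quoted in the comment: Athlon 64 3400+ at 2.2 GHz, 1.5 V, 89 W.
BASE_MHZ, BASE_VOLTS, BASE_WATTS = 2200, 1.5, 89.0

# (clock in MHz, volts, watts quoted in the comment) for each PowerNow state.
quoted_states = [(2000, 1.4, 69), (1800, 1.3, 50), (1000, 1.1, 22)]


def scaled_power(mhz, volts):
    """Estimate power assuming P is proportional to f * V^2 (no leakage term)."""
    return BASE_WATTS * (mhz / BASE_MHZ) * (volts / BASE_VOLTS) ** 2


for mhz, volts, quoted in quoted_states:
    estimate = scaled_power(mhz, volts)
    print(f"{mhz} MHz at {volts} V: estimated {estimate:.1f} W, quoted {quoted} W")
```

The estimates come out at roughly 70, 55 and 22 watts against the quoted 69, 50 and 22, so most of the drop is explained by the voltage reduction rather than by the clock alone.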

slashbinslashbash - Sunday, February 29, 2004 - link

Oh, and I think that the conspicuous silence by AT and everybody else on this subject only confirms that Intel indeed has something up its sleeve. They all say "Prescott has higher energy consumption" and "a larger transistor count" without even speculating as to what could create the wild disparity that we see with the transistor math.

slashbinslashbash - Sunday, February 29, 2004 - link

#96: I'm no CPU designer, but it seems to me that the "add transistors to dissipate heat more evenly" argument doesn't make sense. Why not just have empty silicon if you need to spread things out? Adding actual transistors will also increase the amount of heat output, so the density of heat would stay the same.

Lots of good speculation on Prescott/Yamhill here: http://www.chip-architect.com/

Regs - Tuesday, February 17, 2004 - link

Ah god, I'm sorry. This is supposed to be about the Prescott, and I just completely made a "fan boy" remark.

Regs - Tuesday, February 17, 2004 - link

So it's pricey, runs hot, shows little improvement over the earlier Northwoods, and did I mention pricey? The 3.4C is 415 dollars at Newegg, let alone what a 3.2 or 3.4E would cost.

To us tech-gurus it comes down to common sense, but everybody knows marketing will always get the better of AMD. Intel will shovel "you're paying for the best performer", which is sadly true by a small margin, if that, for a huge price difference. And how people ignore the A64 completely just because 64-bit is not needed as of right now is just frustrating.

AMD made a remarkable achievement for making affordable technology while satisfying the need for higher performance.

TrogdorJW - Wednesday, February 4, 2004 - link

What does it mean to increase the transistor drive current by 10-20%? Does that mean that they need to run, say, 1.1 to 1.2 Amps instead of 1.0 Amps? (I know that's not what the processors use; I'm just using those numbers because they're easy to work with.) If that's correct, then it would certainly account for some of the heat increase.

Initially, I read about strained silicon and thought that the idea was that it would take less power to run the chips at the same speed. The atoms are further apart, electrons flow more easily... doesn't that mean that strained silicon should make things run cooler? (I'll be honest - the electromagnetic physics course I had to take in college was *NOT* my favorite course. Talk about a HARD class....)

PrinceGaz - Wednesday, February 4, 2004 - link

I think Intel's heat problems are in part down to the Strained-Silicon technology they've introduced with the 90nm process as much as anything else. If, as it says, it increases the transistor drive-current by 10-20%, then that's 10-20% more power and therefore heat being generated by each transistor for a given voltage.

AMD however has opted to go for SOI now and that reduces leakage-current (waste) from the transistors, which means less heat is generated by them.

Intel is expected to introduce SOI with their 65nm process in 2005 and that should help reduce their heat problem a bit, and AMD will no doubt adopt Strained-Silicon around about the same time which will raise the amount of heat in their chips making them both about even again.

The difference now is that Intel implemented the heat-increasing performance improvement first, while AMD implemented the heat-decreasing one first.

TrogdorJW - Wednesday, February 4, 2004 - link

Aceshardware has some information on the transistors as well, on the bottom of page one of their review: http://www.aceshardware.com/read.jsp?id=60000315

Of course, they also end up concluding the same things as me: the changes that Intel has really told us about don't seem like they should really be using up the 45 million added transistors. (A Northwood with 1 MB of L2 would be an 80 million transistor CPU.)

Intel did make numerous small changes to the processor, so I guess that it is possible that they could have used up all of the extra transistors. Who knows?
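The 80 million and 45 million figures can be roughly reconstructed in Python. The sketch below leans on two assumptions that are not in the comment: that Northwood's 55 million transistors include a 512 KB L2, and that each cache bit costs six transistors (a standard 6T SRAM cell), with tags and ECC ignored.

```python
TRANSISTORS_PER_SRAM_BIT = 6   # assumption: standard 6T SRAM cell

NORTHWOOD_TRANSISTORS = 55_000_000   # figure quoted in the comments
PRESCOTT_TRANSISTORS = 125_000_000   # figure quoted in the comments


def cache_transistors(kib):
    """Transistors in the data array of a cache of `kib` kibibytes."""
    bits = kib * 1024 * 8
    return bits * TRANSISTORS_PER_SRAM_BIT


# Growing L2 from an assumed 512 KB to 1 MB adds another 512 KB of SRAM.
extra_l2 = cache_transistors(512)
northwood_with_1mb = NORTHWOOD_TRANSISTORS + extra_l2
unaccounted = PRESCOTT_TRANSISTORS - northwood_with_1mb

print(f"Extra 512 KB of L2: {extra_l2 / 1e6:.1f} million transistors")
print(f"Northwood with 1 MB L2: {northwood_with_1mb / 1e6:.1f} million")
print(f"Left over in Prescott: {unaccounted / 1e6:.1f} million")
```

Under those assumptions the extra cache is about 25 million transistors, which lands on the same 80 million and 45 million totals quoted above.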

One other thing that isn't really being talked about anywhere is transistor density. In the past, shrinking the transistor size always ended up making chips run cooler. It appears that this may not be the case with 90nm processes and beyond. If Intel had stuck with a straight Northwood core and simply moved to 90nm, then the CPU die size would be something like half of what it currently is. So instead of 112 mm2, it would be 60 mm2 or something.

With all of the heat being generated in such a small area, maybe they had to add transistors and size just to spread out the heat dissipation? It's a weird argument, but it *could* be true. When AMD releases 90nm chips and we see how hot they get, we'll probably gain more insight into this. If AMD's chips run slightly hotter, then 90nm will have marked a transition to a new set of problems in processor die shrinks.

Pumpkinierre - Wednesday, February 4, 2004 - link

The only other explanation is that Prescott is dual core. Really, if the stages get smaller as the pipeline gets deeper then the transistor count should stay the same. So a dual core with double the cache should be 2 x Northwood = 110 million transistors - still 15 million unaccounted for and available for other things. Other people are saying that the 31 stage pipeline can't be right, as the processor's power would be much weaker than the observed performance cf. equivalent (20 stage pipe) Northwood, despite the tweaks. It seems to perform well on the Hyper-Threading enabled software, and dual CPU may explain the slowness of the cache, like duallies where one CPU has to keep tabs on the other. It also explains the heat, for which a size reduction on a single core should augur less heat, in contrast to Prescott's > 100 Watts.

Pumpkinierre - Tuesday, February 3, 2004 - link

That's it. Prescott is already 64bit enabled. They haven't bothered to switch them off as no Intel mobo BIOS detects the 64bit extensions anyway. That's where the extra heat is coming from. I mean Northwood is ~130mm2 (55 million transistors) and Prescott is close in size at 112mm2 but 125 million transistors - so approximately the same size but far greater transistor density, so more heat. Even with the extra cache it should have been around 80 million and thus heat would have been at Northwood levels. The extra transistors still seem excessive for x86-64. So it might even be IA-64. Sckt 478 might not be pinned enough but 775 should do it. Here's my prediction then: ** 64bit WILL be available when Sckt LBGA 775 Prescott cpus come out in April with the new Grantsdale and Alderwood mobos **. And that's what is going on display in a couple of weeks' time. How to check it: maybe write some assembler using x86-64 or IA-64 commands and see if they work.
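For what it's worth, the density claim is easy to quantify from the figures in this comment alone. A small Python sketch, taking the approximate die sizes and transistor counts above at face value:

```python
# Figures as quoted in the comment; the die sizes are approximate.
chips = {
    "Northwood": {"transistors_m": 55, "die_mm2": 130},
    "Prescott": {"transistors_m": 125, "die_mm2": 112},
}


def density(chip):
    """Millions of transistors per square millimetre."""
    return chip["transistors_m"] / chip["die_mm2"]


for name, chip in chips.items():
    print(f"{name}: {density(chip):.2f} million transistors per mm2")

ratio = density(chips["Prescott"]) / density(chips["Northwood"])
print(f"Prescott packs about {ratio:.1f}x as many transistors per mm2")
```

That only measures how tightly the transistors are packed. It says nothing about how many of them are switching at once, so on its own it cannot confirm where the heat comes from.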