NVIDIA's 1.4 Billion Transistor GPU: GT200 Arrives as the GeForce GTX 280 & 260

by Anand Lal Shimpi & Derek Wilson on June 16, 2008 9:00 AM EST - Posted in GPUs

Derek Gets Technical: 15th Century Loom Technology Makes a Comeback

Because it's multithreaded...

Yes, I know it's horrible, but NVIDIA has gone a bit deeper in explaining their architecture to us, and they thought borrowing terminology from weaving was clever. As much as that might make you want to roll your eyes, the explanation it enables of how things work is worth it.

In cloth weaving, the warp is the vertical group of parallel threads that are held taut, while the weft is the thread passed through them. I suppose it makes sense, then, that NVIDIA decided to call a group of parallel threads to be executed on an SM a warp.

See the group of threads that hang from the top of this loom? That's called a warp.

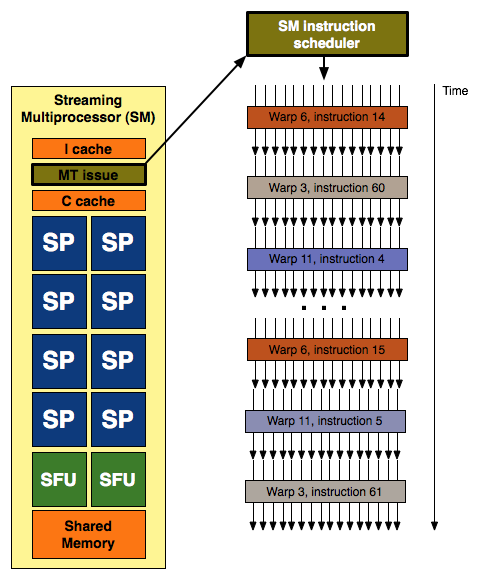

With each SM having 8 SPs and G80 having two SMs per TPC (for a total of 16 SPs) it looked like a natural fit to execute quads across these 16 scalar units. We learned that this was not the case, but seeing the grouping of three SMs per TPC in GT200 still looked a little funny until we really learned more about how things are scheduled on NVIDIA's unified architecture. Each SM's scheduler picks a new warp to work on every clock cycle, and since the scheduler runs at half speed this ends up being every other clock cycle from the perspective of the rest of the SM. Each warp is made up of a group of 32 threads (in pixel shaders this is a group of 8 quads) that share an instruction stream (a shader program or a kernel if you're talking about GPU computing).

32 threads in a warp, issued in two groups of 16 threads - one such group is depicted above

Different warps built from threads executing the same shader program can follow completely independent paths down the code, but every thread within a warp must be executing the same instruction. This means that our branch granularity is 32 threads: every block of 32 threads (every warp) can branch independently of all others, but if one or more threads within a warp branch in a different direction than the rest, then every single thread in that warp must execute both code paths. Each thread only retains the result from the path it was supposed to follow, probably by using the branch result to dynamically predicate the appropriate path per thread. Each SM can have 32 warps (up from 24 in G80) in flight at the same time, for a total of 1024 threads in flight per SM. With 10 TPCs each containing 3 SMs, that's up to 30720 threads in flight on a GTX 280.
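To make the cost of divergence concrete, here is a minimal Python sketch of a warp stepping through an if/else with per-thread predication. It is a toy model, not NVIDIA's hardware or driver behavior: the function `run_warp` and the helpers `is_even`, `double`, and `negate` are hypothetical names invented for illustration, and predication is only our guess at the mechanism, as noted above.

```python
WARP_SIZE = 32  # threads per warp, as stated in the article


def run_warp(inputs, condition, taken_path, other_path):
    """Run an if/else for one warp using per-thread predication (toy model).

    Returns (results, passes), where passes counts how many code paths
    the whole warp had to step through.
    """
    assert len(inputs) == WARP_SIZE
    mask = [condition(x) for x in inputs]  # branch result for each thread
    results = [None] * WARP_SIZE
    passes = 0

    if any(mask):  # at least one thread wants the "if" side
        passes += 1
        for lane, x in enumerate(inputs):
            value = taken_path(x)   # every lane steps through the path...
            if mask[lane]:          # ...but only predicated lanes keep the result
                results[lane] = value

    if not all(mask):  # at least one thread wants the "else" side
        passes += 1
        for lane, x in enumerate(inputs):
            value = other_path(x)
            if not mask[lane]:
                results[lane] = value

    return results, passes


def is_even(x):
    return x % 2 == 0


def double(x):
    return 2 * x


def negate(x):
    return -x


uniform = [2 * i for i in range(WARP_SIZE)]  # all even: the warp never diverges
mixed = list(range(WARP_SIZE))               # even and odd: the warp diverges

uniform_results, uniform_passes = run_warp(uniform, is_even, double, negate)
mixed_results, mixed_passes = run_warp(mixed, is_even, double, negate)
print("passes without divergence:", uniform_passes)
print("passes with divergence:   ", mixed_passes)
```

In this model the uniform warp pays for one path and the mixed warp pays for both, even though each thread keeps only one result. That is the sense in which branch granularity is 32 threads.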

Each SM, with its 8 SPs and 2 SFUs, is capable of processing up to 8 ops per clock in the SPs (MAD, a combined floating point multiply and add, being the most complex) and 8 ops per clock on the SFUs (each made up of 4 floating point MUL units and other logic). Warps are executed on SMs in 2 groups of 16 threads (probably four quads for pixels) over four clock cycles.
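The numbers quoted so far hang together with some simple arithmetic. The short Python sketch below only restates figures from the text; the variable names are ours, and the G80 line uses just the per-SM warp count mentioned above.

```python
# Figures quoted in the article for GT200 / GeForce GTX 280.
THREADS_PER_WARP = 32
WARPS_PER_SM = 32          # up from 24 on G80
WARPS_PER_SM_G80 = 24
SMS_PER_TPC = 3
TPCS = 10
SPS_PER_SM = 8
ISSUE_GROUP = 16           # a warp is issued as two groups of 16 threads
THREADS_PER_QUAD = 4       # a pixel quad is a 2x2 block of pixels

threads_per_sm = THREADS_PER_WARP * WARPS_PER_SM
threads_per_sm_g80 = THREADS_PER_WARP * WARPS_PER_SM_G80
threads_per_chip = threads_per_sm * SMS_PER_TPC * TPCS

quads_per_warp = THREADS_PER_WARP // THREADS_PER_QUAD
clocks_per_group = ISSUE_GROUP // SPS_PER_SM       # 16 threads on 8 SPs
clocks_per_warp = THREADS_PER_WARP // SPS_PER_SM   # 32 threads on 8 SPs

print("threads in flight per SM (GT200):", threads_per_sm)
print("threads in flight per SM (G80):  ", threads_per_sm_g80)
print("threads in flight on GTX 280:    ", threads_per_chip)
print("quads per warp:                  ", quads_per_warp)
print("clocks per 16-thread group:      ", clocks_per_group)
print("clocks per warp on the SPs:      ", clocks_per_warp)
```

The four clocks per warp fall straight out of 32 threads sharing 8 SPs, with each 16-thread group taking two of those clocks.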

This is where it gets interesting.

The SPs and SFUs are scheduled on alternating clock cycles from the perspective of the SM scheduler. They execute independent warps. SPs are scheduled on one clock cycle and SFUs are scheduled the next. Each is able to complete the processing of an entire warp before the next time it is scheduled, which happens to be four clocks (relative to the SPs and SFUs) after it was scheduled with the last warp.
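A small timeline helps here. The Python sketch below is our own simplified model of the description above, not anything NVIDIA has published: it assumes the scheduler issues once every two SP/SFU clocks, strictly alternates between the SPs and the SFUs, and that each unit needs four clocks per warp. The function name `issue_timeline` is hypothetical.

```python
SCHEDULER_DIVIDER = 2  # the scheduler runs at half the SP/SFU clock
CLOCKS_PER_WARP = 4    # 32 threads at 8 ops per clock, for SPs and SFUs alike


def issue_timeline(num_issues):
    """Return (start_clock, unit, busy_until) for each scheduler issue slot.

    Clocks are counted in the fast SP/SFU clock domain.
    """
    timeline = []
    for slot in range(num_issues):
        start = slot * SCHEDULER_DIVIDER
        unit = "SP" if slot % 2 == 0 else "SFU"  # strict alternation (assumed)
        timeline.append((start, unit, start + CLOCKS_PER_WARP))
    return timeline


timeline = issue_timeline(6)
for start, unit, done in timeline:
    print(f"clock {start:2d}: issue a warp to the {unit:3s} (busy until clock {done})")

sp_starts = [start for start, unit, _ in timeline if unit == "SP"]
sfu_starts = [start for start, unit, _ in timeline if unit == "SFU"]
```

Under these assumptions the SPs receive a new warp at clocks 0, 4, 8 and the SFUs at clocks 2, 6, 10, so each unit finishes its warp exactly when its next issue slot comes around.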

But wait ... aren't SMs supposed to be able to support dual-issue MAD+MUL? Well, back when G80 launched, Beyond3D did an excellent job of exploring the reality of the situation. Because the SFU handles the "dual-issued" MUL and shares its time with other responsibilities like transcendental calculation and attribute interpolation, the ability of the hardware to actually accelerate cases that could take advantage of dual-issue was significantly reduced. But this scheduling revelation makes it clear that the problem is much more complex.

In order for code that could benefit from dual-issue hardware to take advantage of G80 or GT200, a warp must be scheduled on an SP for the MAD and then it must be re-scheduled on the SFU before it hits the SPs again. If the SFU is too busy handling attribute interpolation or transcendental math, then our warp will be rescheduled on the SPs to calculate the MUL and we will have lost our potential dual-issue speed up.
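To see why a busy SFU erodes the benefit, consider a deliberately crude Python model of a stream of MAD+MUL pairs. Everything here is an assumption for illustration: the function `sp_clocks_for_pairs` is hypothetical, each warp-wide op is charged four SP clocks, and dependencies, latency, and the real scheduler's policy are ignored.

```python
CLOCKS_PER_WARP_OP = 4  # one warp-wide instruction occupies the SPs for 4 clocks


def sp_clocks_for_pairs(sfu_busy_slots):
    """Count SP clocks for a stream of MAD+MUL pairs (toy model).

    sfu_busy_slots[i] is True when the SFU is tied up with interpolation or
    transcendental work at the moment pair i would like to hand off its MUL.
    Returns (sp_clocks, co_issued_muls).
    """
    sp_clocks = 0
    co_issued = 0
    for sfu_busy in sfu_busy_slots:
        sp_clocks += CLOCKS_PER_WARP_OP      # the MAD always runs on the SPs
        if sfu_busy:
            sp_clocks += CLOCKS_PER_WARP_OP  # the MUL falls back to the SPs
        else:
            co_issued += 1                   # the MUL overlaps on the SFU
    return sp_clocks, co_issued


# Eight MAD+MUL pairs; the SFU is busy with other work for two of them.
sfu_busy_slots = [False, False, True, False, False, False, True, False]

sp_clocks, co_issued = sp_clocks_for_pairs(sfu_busy_slots)
baseline_clocks = 2 * CLOCKS_PER_WARP_OP * len(sfu_busy_slots)  # no SFU help at all
speedup = baseline_clocks / sp_clocks

print("SP clocks with SFU help:", sp_clocks)
print("SP clocks without it:   ", baseline_clocks)
print("MULs moved to the SFU:  ", co_issued, "of", len(sfu_busy_slots))
print("speedup on this stream: ", speedup)
```

In this toy stream, every MUL the SFU cannot take costs the SPs another four clocks, so the speedup slides from the ideal 2x toward 1x as the SFU's other duties crowd out the MULs.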

NVIDIA tells us that they "had some scheduling/issue problems with getting the MUL in the SFU to work consistently per clock. We fixed that in GT200." This makes perfect sense in light of what we now know about scheduling on G80/GT200. What they needed to do to improve the ability of their hardware to speed up cases where a MAD+MUL dual issue would help was to make sure that these cases were properly prioritized to execute on the SFU. We don't see MAD+MUL in every line of code, so giving these cases higher priority on the SFU than its other duties should help to optimize the utilization of the hardware. They do have to be careful not to start just running random MULs on the SFU because those special functions do still need to get done, but pushing a select subset of MULs onto the SFU (where they directly follow MADs that have just been executed on SPs) should definitely help in certain cases.

Really, the main focus for NVIDIA is proper utilization and prioritization. Everything for any given frame needs to get done at some point or other, so simulating "dual-issue" shouldn't take priority over other things that need to get done that might be more important to completing the next frame. But organizing how to handle it is a big deal. Real time compilers are a big part of that, but internal thread management and scheduling in an architecture this wide also cannot be ignored. NVIDIA says they can now get 93-94% efficiency from their "dual-issue" implementation in directed tests and that this is significantly higher than on G80. Real world results will be lower, but the thing to remember is that the goal is simply to make maximally efficient use of the hardware available. Just because the SFU isn't assisting in a MAD+MUL every clock doesn't mean it isn't doing something important.

This whole situation leaves us with mixed feelings. The hardware itself is not capable of "dual-issue" as it is understood in an architectural sense. The obfuscation of graphics hardware technology in a competitive industry has been the norm for the past decade, and we can accept this. We would prefer to know what the hardware is actually doing, but we are more than happy to have an explanation of something as a hardware feature where the hardware merely simulates the effect a specific architectural design has. But in the case where the hardware doesn't perform in nearly the same way as it would if the feature had actually been implemented as a hardware feature, we just can't help but be a little disappointed.

And we are conflicted about this because NVIDIA's design is actually very elegant. Attribute interpolation will always need to be done, and having hardware set aside for complex math is also very useful. But rather than making a dedicated fixed function interpolator or doing Taylor expansion of complex math on SPs, NVIDIA built hardware that could serve both purposes and that had time left over to help offload some well placed MULs within the instruction stream of running programs.

If NVIDIA had been as open about their architecture as Intel is about their CPU designs, we could not have helped but to be impressed by this. The "missing MUL" wouldn't have been seen as a problem with NVIDIA's dual-issue "hardware"; we would have been praising NVIDIA's ability to schedule and multitask the different units within their SMs in order to improve utilization.

108 Comments

elchanan - Monday, June 30, 2008

VERY eye-opening discussion on TMT. Thank you for it.

I've been trying to understand how GPUs can be competitive for scientific applications which require lots of inter-process communication, and "local" memory, and this appears to be an elegant solution for both.

I can identify the weak points of it being hard to program for, as well as requiring many parallel threads to make it practical.

But are there other weak points?

Is there some memory-usage profile, or inter-process data bandwidth, where the trick doesn't work?

Perhaps some other algorithm characteristic which GPUs can't address well?

Think - Friday, June 20, 2008

This card is a junk bond when taking into consideration cost/performance/power consumption.

Reminds me of a 1976 Cadillac with a 7.7 litre V8 with only 210 horsepower/3600 rpm.

It's a PIG.

Margalus - Tuesday, June 24, 2008

this shows how many people don't run a dual monitor setup. I would snatch up one of these 260/280's over the gx2's anyday, gladly!!

The performance may not be quite as good as an sli setup, but it will be much better than a single card which is what a lot of us are stuck with since you CANNOT run a dual monitor setup with sli!!!

iamgud - Wednesday, June 18, 2008

"I can has vertex data"

LOL

These look fine, but need to be moved to 55nm. By the time I save up for one they will.

calyth - Tuesday, June 17, 2008

Well what the heck are they doing with 1.4B transistors, which is becoming the largest die that TSMC has been producing so far?

The larger the core, the more likely that a blemish would take out the core. As far as I know, didn't Phenom (4 cores on die) suffer low-yield problems?

gochichi - Tuesday, June 17, 2008

You know, when you consider the price and you look at the benchmarks, you start looking for features and NVIDIA just doesn't have the features going on at all.

COD4 -- Ran perfect at 1920x1200 with last gen stuff (the HD3870 and 8800GT(S)) so now the benchmarks have to be for outrageous resolutions that a handful of monitors can handle (and those customers already bought SLI or XFIRE, or GTX2 etc.)

Crysis is a pig of a game, but it's not that great (it is a good technical preview though, I admit), and I don't think even these new cards really satisfy this system hog... so maybe this is a win, but I doubt too many people care... if you had an 8800GT or whatever, you've already played this game "well enough" on medium settings and are plenty tired of it. Though we'll surely fire it up in the future once our video cards "happen to be able to run it on high" very few people are going to go out of their way $500+ for this silly title.

In any case, then you look at ATI, and they have the HDMI audio, the DX 10.1 support and all they have to do at this point is A) Get a good price out the door, B) Make a good profit (make them cheap, which these NVIDIA are expensive to make, no doubt) and C) handily beat the 8800GTS and many of us are going to be sold.

These cards are what I would call a next gen preview. Some overheated prototypes of things to come. I doubt AMD will be as fast, and in fact I hope they aren't just as long as they keep the power consumption in check, the price, and the value (HDMI, DX10.1, etc).

Today's release reminded me that NVIDIA is the underdog, they are the company that released the FX series (desperate technology, like these are). ATI has been around well before 3DFX made 3d-accelerators. They were down for a bit, and we all said it was over for ATI but this desperate release from NVIDIA makes me think that ATI is going to be quite tough to beat.

Brazofuerte - Tuesday, June 17, 2008

Can I go somewhere to find the exact settings used for these benchmarks? I appreciate the tech side of the write up but when it comes to determining whether I want one of these for my gaming machine (I ordered mine at midnight), I find HardOCP's numbers much more useful.

woofermazing - Tuesday, June 17, 2008

AMD/ATI isn't going to abandon the high end like your article implies. Their plan is to make a really good mid range chip, and duct-tape two cores together ala the X2's. Nvidia goes from the high-end down, ATI from the mid-end up. From the look of it, ATI might have the right idea, at least this time around. I seriously doubt we'll see a two core version of this monster anytime soon.

DerekWilson - Tuesday, June 17, 2008

they are abandoning the high end single GPU ...

we did state that they are planning on competing in the high end space with multiGPU cards, but that there are drawbacks to that.

we'll certainly have another article coming out sometime soon that looks a little more closely at AMD's strategy.

KeypoX - Tuesday, June 17, 2008

i dont like it, not impressed either :(. Hopefully my 8800gt last for a while, far past this crap atleast