Plextor M5S 256GB Review

by Kristian Vättö on July 18, 2012 3:00 AM EST

Inside the M5S

Since the M5S does not include any software discs or a 2.5" to 3.5" bracket, the packaging is noticeably smaller than the M3's.

There are absolutely no add-ons included. The only contents of the package are the SSD itself and a quick installation guide. It should be noted that not even mounting screws are included, so hopefully your case came with a few.

The chassis at least looks like it's the same as in the M3. The color is a match and both measure in at 9.5mm in height.

The innards have changed quite significantly. The M3 and M3 Pro both had separate thermal pads for each main component (controller, NAND, DRAM), but the M5S has only one thermal pad, which covers the controller. This is without a doubt a cost-cutting measure. Thermal pads are not really necessary, as SSDs don't generate much heat anyway.

The M5S is powered by Marvell's 88SS9174-BLD2 controller, just like the M3 and M3 Pro. I was hoping to see Marvell's 88SS9187, but perhaps Plextor is saving that for an "M5 Pro". According to Plextor, the M5S does come with different firmware than the M3 and M3 Pro, although I'm guessing that the M5S firmware was built upon the M3 (Pro) firmware. It's likely that the M5S firmware just adds Micron NAND support.

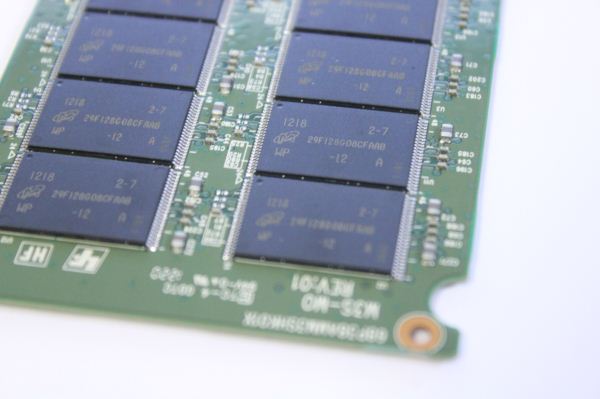

The only change in hardware appears to be in the NAND department. The M3 and M3 Pro used Toshiba's 24nm Toggle-Mode 2.0 MLC NAND, whereas the M5S is using 25nm ONFi 2.x MLC NAND from Micron. The change in NAND supplier has also resulted in changes in the PCB layout. There are now sixteen NAND packages, eight on each side of the PCB. The M3 and M3 Pro both had only eight NAND packages, regardless of the capacity. The 256GB model we have uses 16GB packages, each consisting of two 8GB dies.
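The package count quoted above can be sanity-checked with a little arithmetic. The snippet below is only a sketch using the figures from this page (sixteen packages, two dies per package, 8GB per die); the function and constant names are made up for illustration.

```python
# Figures taken from the paragraph above; names are illustrative only.
PACKAGES = 16          # eight on each side of the PCB
DIES_PER_PACKAGE = 2   # each 16GB package holds two dies
GB_PER_DIE = 8         # Micron 25nm MLC die capacity as quoted in the review


def raw_nand_capacity_gb(packages: int, dies_per_package: int, gb_per_die: int) -> int:
    """Return the total raw NAND capacity in GB for a given package layout."""
    return packages * dies_per_package * gb_per_die


if __name__ == "__main__":
    total = raw_nand_capacity_gb(PACKAGES, DIES_PER_PACKAGE, GB_PER_DIE)
    print(f"Raw NAND on the PCB: {total}GB")
```

Sixteen 16GB packages account for the full 256GB, which is consistent with the layout described for this model.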

Plextor stuck with Nanya as its DRAM supplier. There are two 256MiB DDR3-1333 SDRAM chips, giving the M5S a total of 512MiB of cache.

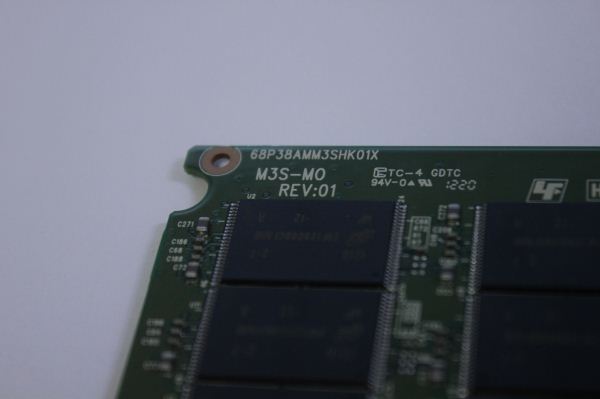

The markings on the PCB actually say "M3S". Our unit is a pre-production sample, so that could be the reason, but essentially the M5S is just an M3 with ONFi NAND.

Test System

| CPU | Intel Core i5-2500K running at 3.3GHz (Turbo and EIST enabled) |
| Motherboard | AsRock Z68 Pro3 |
| Chipset | Intel Z68 |
| Chipset Drivers | Intel 9.1.1.1015 + Intel RST 10.2 |
| Memory | G.Skill RipjawsX DDR3-1600 2 x 4GB (9-9-9-24) |
| Video Card | XFX AMD Radeon HD 6850 XXX (800MHz core clock; 4.2GHz GDDR5 effective) |
| Video Drivers | AMD Catalyst 10.1 |
| Desktop Resolution | 1920 x 1080 |
| OS | Windows 7 x64 |

43 Comments

shodanshok - Wednesday, July 18, 2012

Hi Kristian, thank you for your reply.

I understand that measuring WA is your "special sauce" (anything to do with SMART 0xE6-0xF1 attributes? ;)), but the interesting thing is that the Plextor was able to minimize WA while, at the same time, maximizing idle GC efficiency.

Other drives that heavily use GC (e.g. Toshiba and previously Indilinx controllers) seem to cause a much higher WA.

Thank you for this comprehensive review.

sheh - Thursday, July 19, 2012

Thanks.

I have to say, though, that it's difficult to give credence to data that is the result of undisclosed calculations, and not even by the hardware manufacturers.

Kristian Vättö - Thursday, July 19, 2012

The method we use was disclosed by a big SSD manufacturer a few years ago. It does not rely on SMART or power consumption, and it can be run on any drive.

If we revealed the method we use, we would basically be giving it out to every other site. Tech industry is quite insolent about "stealing" nowadays, getting content from other sites without giving credit seems to be fine by today's standards.

Also, our method is just one way of estimating worst case write amplification.

shodanshok - Thursday, July 19, 2012

Hi Kristian,

I totally understand your point.

Thank you for these great reviews ;)

sheh - Thursday, July 19, 2012

I can't say I understand this logic, but so be it. Thanks for replying. :)

jwilliams4200 - Sunday, July 22, 2012

Does it work for Sandforce SSDs? Because I noticed your WA chart does not have any Sandforce SSDs.

Are you just measuring the fresh out-of-box (or secure erase) write speed with HD Tune, then torturing the drives and then measuring the worst case write speed with HD Tune? Then saying WA = FOB write speed / worst case write speed?

If that is what you are doing, then I don't think it is very accurate. Any SSDs that have aggressive background garbage collection could make the "worst case" write speed fluctuate or stabilize at a value that does not reflect the worst case write amplification.
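For reference, the ratio guessed at in this comment can be written out as below. This is only the commenter's hypothesis, not a method the review confirms, and the function name and the speeds in the example are invented for illustration.

```python
def wa_from_speed_ratio(fob_write_mb_s: float, worst_case_write_mb_s: float) -> float:
    """Estimate write amplification as FOB write speed / worst-case write speed.

    Hypothetical reconstruction of the commenter's guess; it assumes the
    slowdown is caused entirely by extra flash writes, which the comment
    itself argues is not a safe assumption.
    """
    if worst_case_write_mb_s <= 0:
        raise ValueError("worst-case write speed must be positive")
    return fob_write_mb_s / worst_case_write_mb_s


if __name__ == "__main__":
    # Made-up speeds, not measurements from the review.
    print(wa_from_speed_ratio(300.0, 30.0))
```

Anything that changes the measured speed without changing the amount written to flash, such as background garbage collection or compressible test data, feeds straight into this estimate.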

Kristian Vättö - Sunday, July 22, 2012

SandForce drives break the chart, hence I couldn't include any. SandForce drives typically have worst case WA of around 2x, though.

I still cannot say what our testing methods are. Anand has made the decision that he doesn't want to share the method and I have to respect that. You can email him and ask about our method - I can't share our methods without his permission.

In the end it's an estimation, nothing more. How accurate, it's hard to say as it will vary depending on usage.

jwilliams4200 - Monday, July 23, 2012

So it is TERRIBLY inaccurate, because Sandforce SSDs actually have worst case write amplification of well over 10, just like other SSDs.

In that case, I assume I was correct that you are just using ratio of write speeds from HD Tune, but since HD Tune writes highly compressible data, you are getting bogus results for Sandforce SSDs (actually, I should say, even more inaccurate for Sandforce SSDs than for non-Sandforce).

Anand really needs to reconsider some of his policies. This "secret" test method is just absurd.

jwilliams4200 - Wednesday, July 18, 2012

It all hinges on finding a way of measuring "flash writes", the amount erased/written to flash chips, as opposed to "host writes", which is easy to measure (the amount your computer writes to the SSD).

Usually you can find or guess which one of the SMART attributes represents flash writes. You can start by doing large sequential writes to the SSD (for which the WA should be close to, but a little over, 1) and monitoring the SMART attributes to see which one changes like it is monitoring flash writes.

I remember some time ago an AnandTech article mentioned another way of doing it. I'm not sure if they are using this method now or not (I have my doubts about the accuracy of the method). It had to do with measuring the power usage and somehow correlating that to how much writing to flash is occurring. The reason I have doubts about the accuracy of the method is that it would require measuring a sort of "baseline" power consumption when writing to the flash, and to get the baseline you would have to control the conditions of the write (for example, doing it right after a secure erase) in order that you can guess/assume what the WA is, so that you will then be able to compute the WA in more complicated conditions based on the "baseline". But that is rather like pulling yourself up by your own bootstraps, so I would not trust the results.

The first method I described is the way to go, unless the SSD does not have a SMART attribute that measures flash writes.

cserwin - Wednesday, July 18, 2012

I have to say seeing the Plextor brand name resurface kindles a warm, happy feeling.

There was a time when they made the optical drives to have. A Plextor CD-ROM, a 3DFX Voodoo, a 17" Sony Trinitron, IBM Deskstar...

Good luck, Plextor. Nice to see the old school still kickin.